Janavi Gupta

Control Without Control: Defining Implicit Interaction Paradigms for Autonomous Assistive Robots

Mar 30, 2026

Abstract:Assistive robotic systems have shown growing potential to improve the quality of life of those with disabilities. As researchers explore the automation of various caregiving tasks, considerations for how the technology can still preserve the user's sense of control become paramount to ensuring that robotic systems are aligned with fundamental user needs and motivations. In this work, we present two previously developed systems as design cases through which to explore an interaction paradigm that we call implicit control, where the behavior of an autonomous robot is modified based on users' natural behavioral cues, instead of some direct input. Our selected design cases, unlike systems in past work, specifically probe users' perception of the interaction. We find, from a new thematic analysis of qualitative feedback on both cases, that designing for effective implicit control enables both a reduction in perceived workload and the preservation of the users' sense of control through the system's intuitiveness and responsiveness, contextual awareness, and ability to adapt to preferences. We further derive a set of core guidelines for designers in deciding when and how to apply implicit interaction paradigms for their assistive applications.

Towards an LLM-Based Speech Interface for Robot-Assisted Feeding

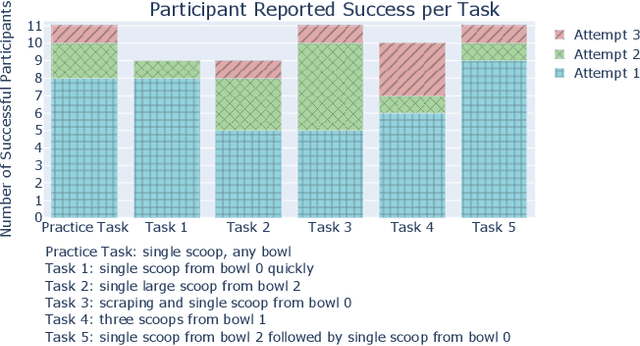

Oct 27, 2024

Abstract:Physically assistive robots present an opportunity to significantly increase the well-being and independence of individuals with motor impairments or other forms of disability who are unable to complete activities of daily living (ADLs). Speech interfaces, especially ones that utilize Large Language Models (LLMs), can enable individuals to effectively and naturally communicate high-level commands and nuanced preferences to robots. In this work, we demonstrate an LLM-based speech interface for a commercially available assistive feeding robot. Our system is based on an iteratively designed framework, from the paper "VoicePilot: Harnessing LLMs as Speech Interfaces for Physically Assistive Robots," that incorporates human-centric elements for integrating LLMs as interfaces for robots. It has been evaluated through a user study with 11 older adults at an independent living facility. Videos are located on our project website: https://sites.google.com/andrew.cmu.edu/voicepilot/.

VoicePilot: Harnessing LLMs as Speech Interfaces for Physically Assistive Robots

Apr 05, 2024

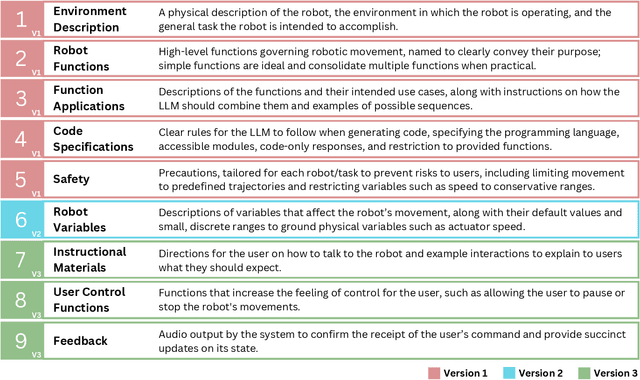

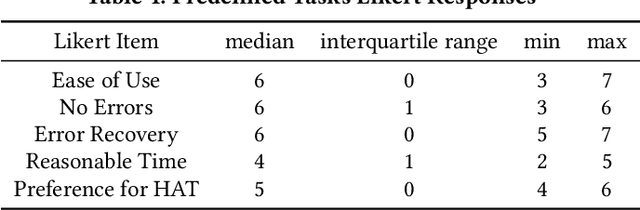

Abstract:Physically assistive robots present an opportunity to significantly increase the well-being and independence of individuals with motor impairments or other forms of disability who are unable to complete activities of daily living. Speech interfaces, especially ones that utilize Large Language Models (LLMs), can enable individuals to effectively and naturally communicate high-level commands and nuanced preferences to robots. Frameworks for integrating LLMs as interfaces to robots for high level task planning and code generation have been proposed, but fail to incorporate human-centric considerations which are essential while developing assistive interfaces. In this work, we present a framework for incorporating LLMs as speech interfaces for physically assistive robots, constructed iteratively with 3 stages of testing involving a feeding robot, culminating in an evaluation with 11 older adults at an independent living facility. We use both quantitative and qualitative data from the final study to validate our framework and additionally provide design guidelines for using LLMs as speech interfaces for assistive robots. Videos and supporting files are located on our project website: https://sites.google.com/andrew.cmu.edu/voicepilot/

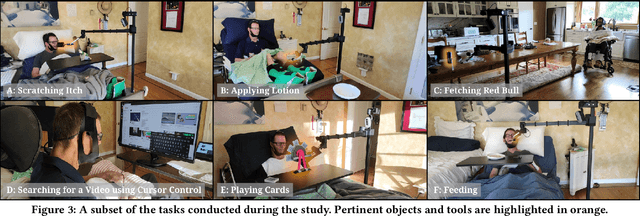

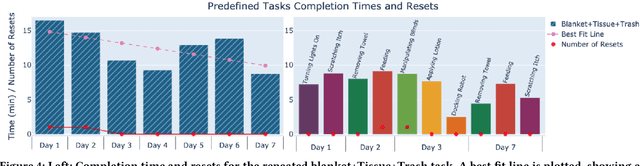

Independence in the Home: A Wearable Interface for a Person with Quadriplegia to Teleoperate a Mobile Manipulator

Jan 02, 2024

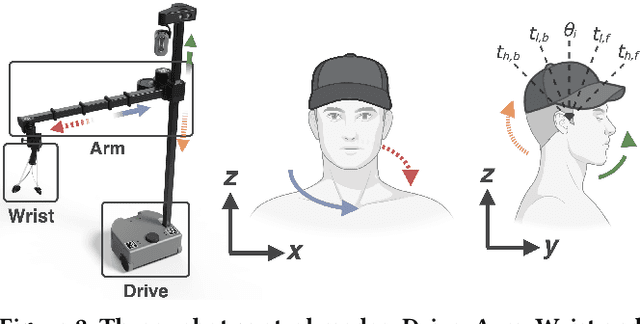

Abstract:Teleoperation of mobile manipulators within a home environment can significantly enhance the independence of individuals with severe motor impairments, allowing them to regain the ability to perform self-care and household tasks. There is a critical need for novel teleoperation interfaces to offer effective alternatives for individuals with impairments who may encounter challenges in using existing interfaces due to physical limitations. In this work, we iterate on one such interface, HAT (Head-Worn Assistive Teleoperation), an inertial-based wearable integrated into any head-worn garment. We evaluate HAT through a 7-day in-home study with Henry Evans, a non-speaking individual with quadriplegia who has participated extensively in assistive robotics studies. We additionally evaluate HAT with a proposed shared control method for mobile manipulators termed Driver Assistance and demonstrate how the interface generalizes to other physical devices and contexts. Our results show that HAT is a strong teleoperation interface across key metrics including efficiency, errors, learning curve, and workload. Code and videos are located on our project website.
