Jacky C. K. Chow

Robust Self-Supervised Learning of Deterministic Errors in Single-Plane (Monoplanar) and Dual-Plane (Biplanar) X-ray Fluoroscopy

Jan 03, 2020

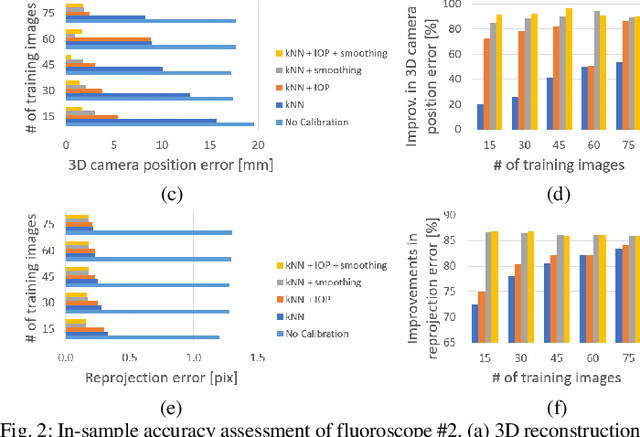

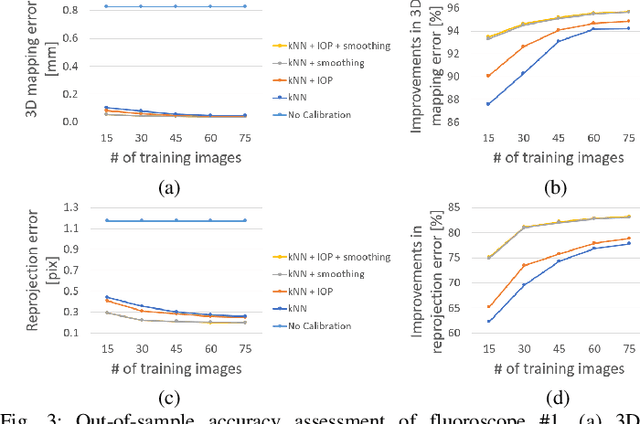

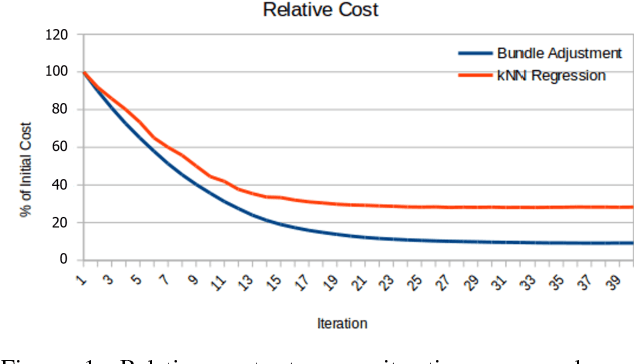

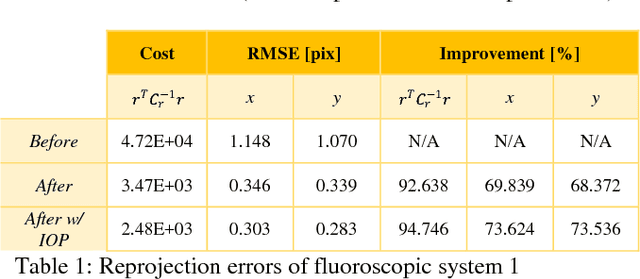

Abstract: Fluoroscopic imaging that captures X-ray images at video framerates is advantageous for guiding catheter insertions by vascular surgeons and interventional radiologists. Visualizing the dynamic movements non-invasively allows complex surgical procedures to be performed with less trauma to the patient. To improve surgical precision, endovascular procedures can benefit from more accurate fluoroscopy data via calibration. This paper presents a robust self-calibration algorithm suitable for single-plane and dual-plane fluoroscopy. A three-dimensional (3D) target field was imaged by the fluoroscope in a strong geometric network configuration. The unknown 3D positions of targets and the fluoroscope pose were estimated simultaneously by maximizing the likelihood of the Student-t probability distribution function. A smoothed k-nearest neighbour (kNN) regression is then used to model the deterministic component of the image reprojection error of the robust bundle adjustment. The Maximum Likelihood Estimation step and the kNN regression step are then repeated iteratively until convergence. Four different error modeling schemes were compared while varying the quantity of training images. It was found that a smoothed kNN regression can automatically model the systematic errors in fluoroscopy with accuracy similar to that of a human expert using a small training dataset. When all training images were used, the 3D mapping error was reduced from 0.61-0.83 mm to 0.04 mm post-calibration (94.2-95.7% improvement), and the 2D reprojection error was reduced from 1.17-1.31 pixels to 0.20-0.21 pixels (83.2-83.8% improvement). When using biplanar fluoroscopy, the 3D measurement accuracy of the system improved from 0.60 mm to 0.32 mm (47.2% improvement).
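The kNN regression step described in the abstract can be illustrated with a minimal sketch. It is not the paper's implementation: the function name `knn_residual_model`, the inverse-distance weighting used as the "smoothing", the value of `k`, and the synthetic radial distortion field are all assumptions chosen for illustration. The idea shown is only that a residual observed at an image location is predicted from the residuals of nearby training observations, so the deterministic part of the reprojection error can be subtracted.

```python
import numpy as np

def knn_residual_model(train_xy, train_res, query_xy, k=8):
    """Predict a 2D residual at each query point as an inverse-distance
    weighted average of the residuals of its k nearest training points.
    (The weighting scheme is an assumption, not taken from the paper.)"""
    train_xy = np.asarray(train_xy, dtype=float)
    train_res = np.asarray(train_res, dtype=float)
    query_xy = np.asarray(query_xy, dtype=float)
    # Pairwise Euclidean distances, shape (n_query, n_train).
    d = np.linalg.norm(query_xy[:, None, :] - train_xy[None, :, :], axis=2)
    idx = np.argsort(d, axis=1)[:, :k]          # indices of the k nearest points
    d_k = np.take_along_axis(d, idx, axis=1)    # distances to those points
    w = 1.0 / (d_k + 1e-9)                      # inverse-distance weights
    w /= w.sum(axis=1, keepdims=True)           # normalise weights per query
    # Weighted average over the k neighbours for each residual component.
    return np.einsum("qk,qkc->qc", w, train_res[idx])

def demo(seed=0):
    """Synthetic check: learn a hypothetical radial distortion from noisy
    residuals and report the RMS error before and after correction."""
    rng = np.random.default_rng(seed)
    xy = rng.uniform(-1.0, 1.0, size=(2000, 2))
    distortion = lambda p: 0.05 * np.sum(p**2, axis=1, keepdims=True) * p
    res = distortion(xy) + rng.normal(0.0, 0.002, size=xy.shape)
    test_xy = rng.uniform(-0.9, 0.9, size=(200, 2))
    truth = distortion(test_xy)
    pred = knn_residual_model(xy, res, test_xy, k=8)
    rms_before = float(np.sqrt(np.mean(truth**2)))
    rms_after = float(np.sqrt(np.mean((truth - pred) ** 2)))
    return rms_before, rms_after

if __name__ == "__main__":
    before, after = demo()
    print(f"RMS systematic error before: {before:.4f}, after: {after:.4f}")
```

In the iterative scheme the abstract describes, a correction of this kind would be applied to the image observations before the next robust bundle adjustment, and the two steps would alternate until convergence.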

Modelling Errors in X-ray Fluoroscopic Imaging Systems Using Photogrammetric Bundle Adjustment With a Data-Driven Self-Calibration Approach

Oct 19, 2018

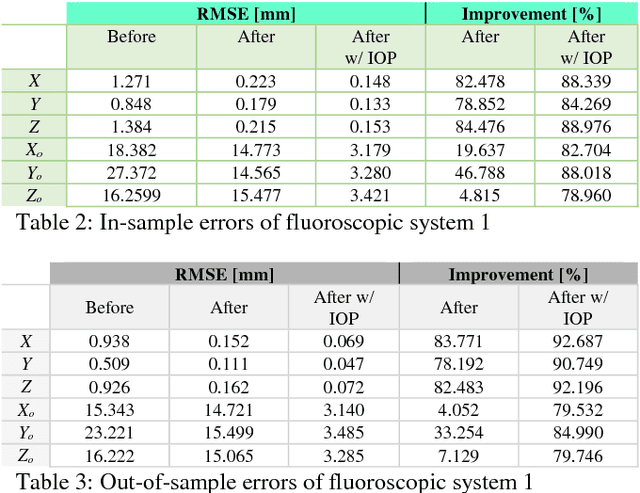

Abstract: X-ray imaging is a fundamental tool of routine clinical diagnosis. Fluoroscopic imaging can further acquire X-ray images at video frame rates, thus enabling non-invasive in-vivo motion studies of joints, the gastrointestinal tract, etc. For both the qualitative and quantitative analysis of static and dynamic X-ray images, the data should be free of systematic biases. Besides precise fabrication of hardware, software-based calibration solutions are commonly used for modelling the distortions. In this primary research study, a robust photogrammetric bundle adjustment was used to model the projective geometry of two fluoroscopic X-ray imaging systems. However, instead of relying on an expert photogrammetrist's knowledge and judgement to decide on a parametric model for describing the systematic errors, a self-tuning data-driven approach was used to model the complex non-linear distortion profile of the sensors. Quality control from the experiment showed that 0.06 mm to 0.09 mm 3D reconstruction accuracy was achievable post-calibration using merely 15 X-ray images. As part of the bundle adjustment, the location of the virtual fluoroscopic system relative to the target field can also be spatially resected with an RMSE between 3.10 mm and 3.31 mm.

* ISPRS TC I Mid-term Symposium "Innovative Sensing - From Sensors to Methods and Applications", 10-12 October 2018. Karlsruhe, Germany

Robot Vision: Calibration of Wide-Angle Lens Cameras Using Collinearity Condition and K-Nearest Neighbour Regression

Sep 29, 2018

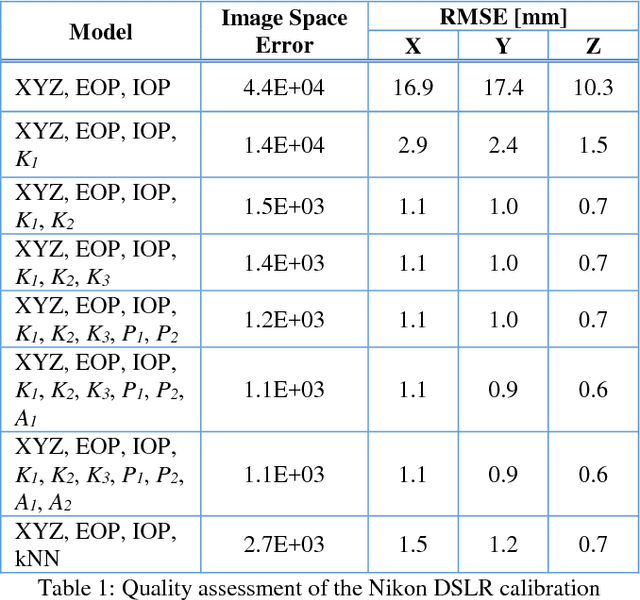

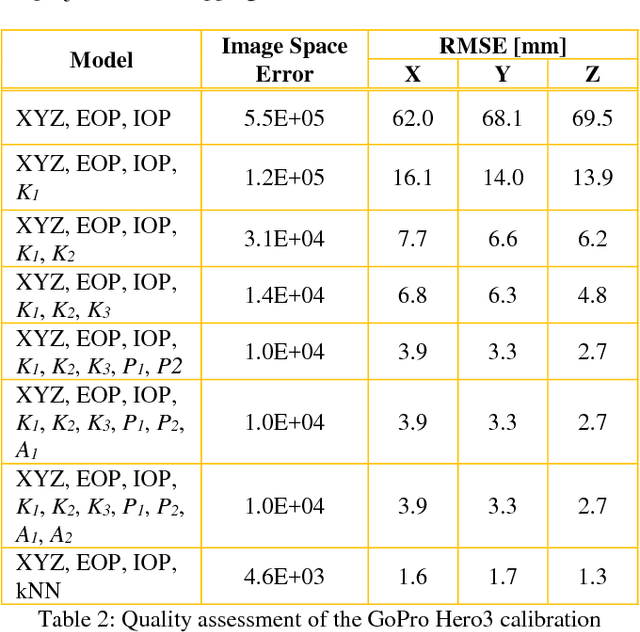

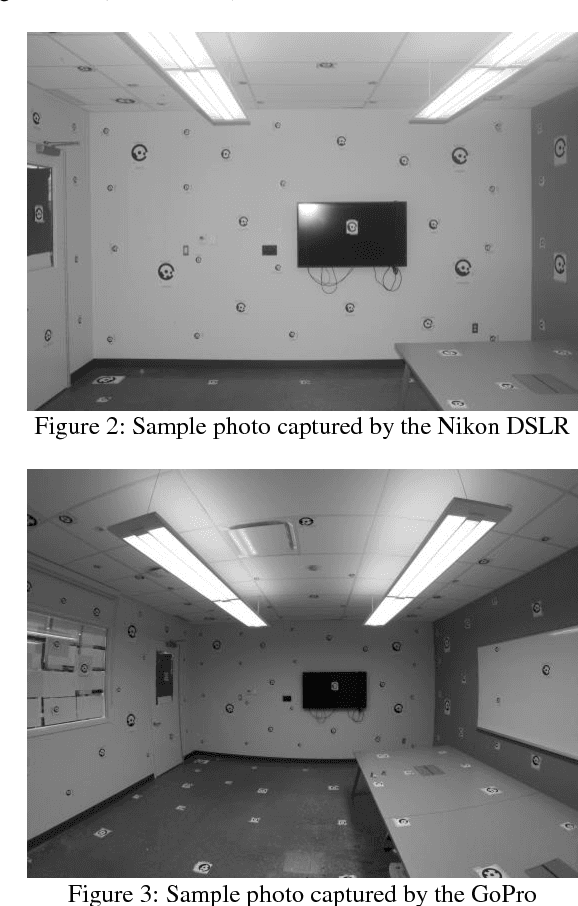

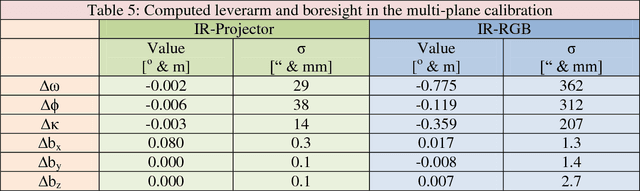

Abstract: Visual perception is regularly used by humans and robots for navigation. By either implicitly or explicitly mapping the environment, ego-motion can be determined and a path of actions can be planned. The processes of mapping and navigation are delicately intertwined; therefore, improving one can often lead to an improvement of the other. Both processes are sensitive to the interior orientation parameters of the camera system, and mathematically modelling these systematic errors can often improve the precision and accuracy of the overall solution. This paper presents an automatic camera calibration method suitable for any lens, without requiring prior knowledge about the sensor. Statistical inference is performed to map the environment and localize the camera simultaneously. K-nearest neighbour regression is used to model the geometric distortions of the images. A normal-angle lens Nikon camera and a wide-angle lens GoPro camera were calibrated using the proposed method, as well as the conventional bundle adjustment with self-calibration method (for comparison). Results showed that the mapping error was reduced from an average of 14.9 mm to 1.2 mm (i.e. a 92% improvement) and from 66.6 mm to 1.5 mm (i.e. a 98% improvement) using the proposed method for the Nikon and GoPro cameras, respectively. In contrast, the conventional approach achieved an average 3D error of 0.9 mm (i.e. a 94% improvement) and 3.3 mm (i.e. a 95% improvement) for the Nikon and GoPro cameras, respectively. Thus, the proposed method performs well irrespective of the lens/sensor used: it yields results that are comparable to the conventional approach for normal-angle lens cameras, and it has the additional benefit of improving calibration results for wide-angle lens cameras.

* ISPRS TC I Mid-term Symposium "Innovative Sensing - From Sensors to Methods and Applications", 10-12 October 2018. Karlsruhe, Germany
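The improvement percentages quoted in the abstract above follow directly from the before and after mapping errors. The short check below reproduces them; the helper name `improvement_percent` is hypothetical, and the error values are those stated in the abstract.

```python
def improvement_percent(before_mm, after_mm):
    """Relative reduction in error, expressed as a percentage."""
    return (before_mm - after_mm) / before_mm * 100.0

# (camera, method): (error before calibration, error after calibration), in mm
cases = {
    ("Nikon", "proposed"): (14.9, 1.2),
    ("GoPro", "proposed"): (66.6, 1.5),
    ("Nikon", "conventional"): (14.9, 0.9),
    ("GoPro", "conventional"): (66.6, 3.3),
}

for (camera, method), (before, after) in cases.items():
    pct = improvement_percent(before, after)
    print(f"{camera:5s} {method:12s}: {before} mm -> {after} mm ({pct:.0f}% improvement)")
```

The rounded results are 92% and 98% for the proposed method and 94% and 95% for the conventional method, matching the figures in the abstract.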

Multi-Sensor Integration for Indoor 3D Reconstruction

Feb 22, 2018

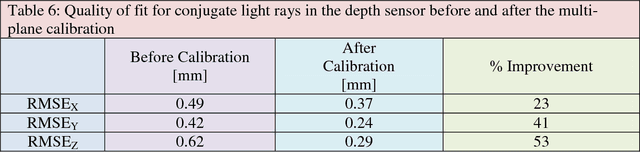

Abstract: Outdoor maps and navigation information delivered by modern services and technologies like Google Maps and Garmin navigators have revolutionized the lifestyle of many people. Because consumers, advertisers, emergency rescuers/responders, etc. desire similar navigation systems for indoor use, many indoor environments such as shopping malls, museums, casinos, airports, transit stations, offices, and schools need to be mapped. Typically, the environment is first reconstructed by capturing many point clouds from various stations and defining their spatial relationships. Currently, there is a lack of an accurate, rigorous, and speedy method for relating point clouds in indoor, urban, satellite-denied environments. This thesis presents a novel and automatic way of fusing calibrated point clouds obtained using a terrestrial laser scanner and the Microsoft Kinect by integrating them with a low-cost inertial measurement unit. The developed system, titled the Scannect, is the first joint static-kinematic indoor 3D mapper.

Drift-Free Indoor Navigation Using Simultaneous Localization and Mapping of the Ambient Heterogeneous Magnetic Field

Feb 17, 2018

Abstract: In the absence of external reference position information (e.g. GNSS), SLAM has proven to be an effective method for indoor navigation. The positioning drift can be reduced with regular loop-closures and global relaxation as the backend, thus achieving a good balance between exploration and exploitation. Although vision-based systems like laser scanners are typically deployed for SLAM, these sensors are heavy, energy inefficient, and expensive, making them unattractive for wearables or smartphone applications. However, the concept of SLAM can be extended to non-optical systems such as magnetometers. Instead of matching features such as walls and furniture using some variation of the ICP algorithm, the local magnetic field can be matched to provide loop-closure and global trajectory updates in a Gaussian Process (GP) SLAM framework. With a MEMS-based inertial measurement unit providing a continuous trajectory, and the matching of locally distinct magnetic field maps, experimental results in this paper show that a drift-free navigation solution in an indoor environment with millimetre-level accuracy can be achieved. The GP-SLAM approach presented can be formulated as a maximum a posteriori estimation problem, and it can naturally perform loop-detection, feature-to-feature distance minimization, global trajectory optimization, and magnetic field map estimation simultaneously. Spatially continuous features (i.e. smooth magnetic field signatures) are used instead of discrete feature correspondences (e.g. point-to-point) as in conventional vision-based SLAM. These position updates from the ambient magnetic field also provide enough information for calibrating the accelerometer and gyroscope biases in use. The only restriction of this method is the need for magnetic disturbances (which is typically not an issue indoors); however, no assumptions are required about the general motion of the sensor.

* ISPRS Workshop Indoor 3D 2017
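To give a feel for how a Gaussian Process can represent a spatially continuous magnetic field signature, as described in the abstract above, here is a minimal one-dimensional sketch. It is not the paper's GP-SLAM formulation: the squared-exponential kernel, the hyperparameters, the helper names `rbf_kernel`, `gp_posterior_mean` and `demo`, and the synthetic field along a corridor are all assumptions made for illustration. It shows only the map estimation idea, predicting the field at unvisited positions from noisy magnetometer samples.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0, variance=1.0):
    """Squared-exponential covariance between 1D position arrays a and b."""
    d2 = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * d2 / length_scale**2)

def gp_posterior_mean(x_train, y_train, x_query, noise_std=0.05, length_scale=1.0):
    """Posterior mean of a zero-mean GP with Gaussian measurement noise."""
    K = rbf_kernel(x_train, x_train, length_scale)
    K += noise_std**2 * np.eye(len(x_train))      # add measurement noise variance
    K_s = rbf_kernel(x_query, x_train, length_scale)
    alpha = np.linalg.solve(K, y_train)           # K^{-1} y without forming an inverse
    return K_s @ alpha

def demo(seed=0):
    """Fit a hypothetical magnetic anomaly profile along a 10 m corridor and
    return the largest prediction error at unvisited interior positions."""
    rng = np.random.default_rng(seed)
    field = lambda x: np.sin(x) + 0.5 * np.cos(2.3 * x)   # synthetic field, arbitrary units
    x_train = np.linspace(0.0, 10.0, 60)
    y_train = field(x_train) + rng.normal(0.0, 0.05, size=x_train.shape)
    x_query = np.linspace(1.0, 9.0, 100)
    pred = gp_posterior_mean(x_train, y_train, x_query)
    return float(np.max(np.abs(pred - field(x_query))))

if __name__ == "__main__":
    print(f"max interior prediction error: {demo():.3f}")
```

Because the field is modelled as a smooth function of position rather than as a set of discrete landmarks, revisiting a location yields a measurement that can be compared against the predicted field, which is the property the abstract relies on for loop-closure.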
