Hyunwoo Song

Automatic Search for Photoacoustic Marker Using Automated Transrectal Ultrasound

Jul 20, 2023

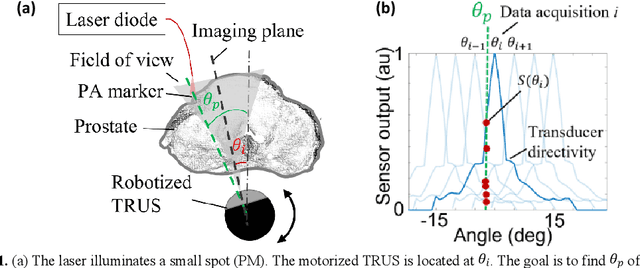

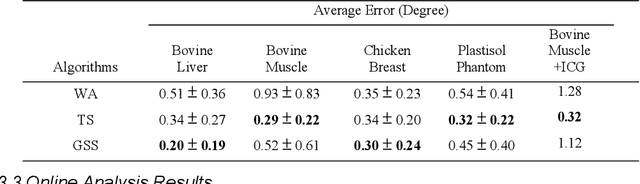

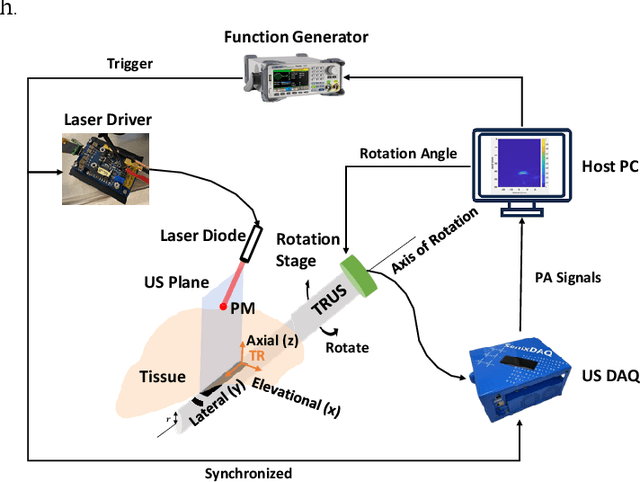

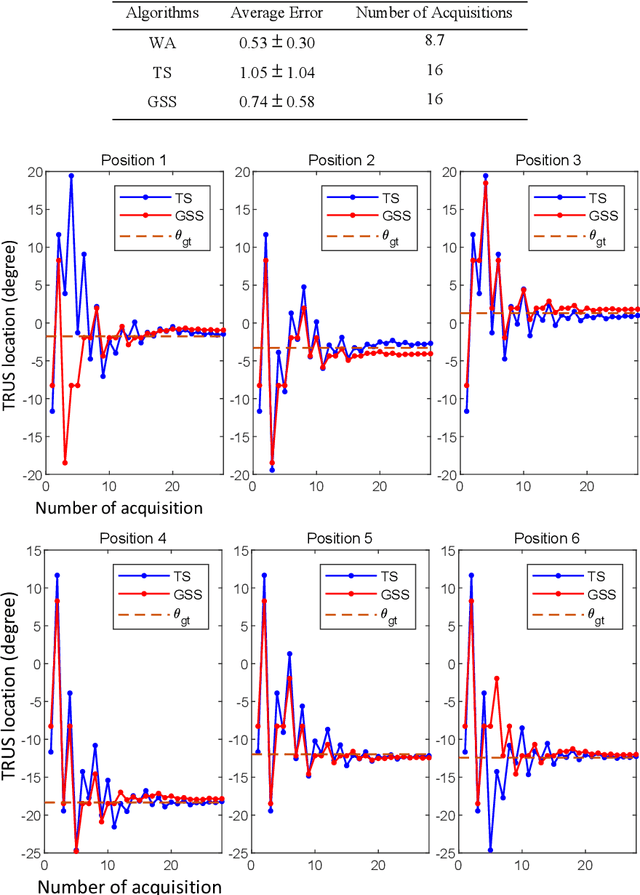

Abstract: Real-time transrectal ultrasound (TRUS) image guidance during robot-assisted laparoscopic radical prostatectomy has the potential to enhance surgical outcomes. Whether conventional or photoacoustic TRUS is used, the robotic system and the TRUS must be registered to each other. Accurate registration can be performed using photoacoustic (PA) markers. However, this requires a manual search by an assistant [19]. This paper introduces the first automatic search for PA markers using a transrectal ultrasound robot, which reduces the challenges associated with da Vinci-TRUS registration. The performance of three search algorithms was investigated in simulation and experiment: Weighted Average (WA), Golden Section Search (GSS), and Ternary Search (TS). For validation, a surgical prostate scenario was mimicked and various ex vivo tissues were tested. The WA algorithm achieved an average error of 0.53 degrees after 9 data acquisitions, while the TS and GSS algorithms achieved average errors of 0.29 degrees and 0.48 degrees, respectively, after 28 data acquisitions.
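The abstract names Ternary Search and Golden Section Search but does not give their details. As a rough illustration only, the sketch below applies the textbook versions of both to a one-dimensional search over probe rotation angle. It assumes the PA signal intensity is unimodal in the angle; the function `pa_intensity`, the marker angle of 12.3 degrees, the search range, and the tolerance are all hypothetical stand-ins, not values from the paper.

```python
import math


def ternary_search_max(f, lo, hi, tol=0.1):
    """Textbook ternary search for the maximizer of a unimodal f on [lo, hi].

    Returns (estimated_argmax, number_of_function_evaluations).
    """
    n_evals = 0
    while hi - lo > tol:
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        n_evals += 2  # two fresh acquisitions per iteration
        if f(m1) < f(m2):
            lo = m1  # the maximum cannot lie in [lo, m1]
        else:
            hi = m2  # the maximum cannot lie in [m2, hi]
    return (lo + hi) / 2.0, n_evals


def golden_section_search_max(f, lo, hi, tol=0.1):
    """Textbook golden section search; reuses one interior point per step."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0  # about 0.618
    x1 = hi - inv_phi * (hi - lo)
    x2 = lo + inv_phi * (hi - lo)
    f1, f2 = f(x1), f(x2)
    n_evals = 2
    while hi - lo > tol:
        if f1 < f2:
            lo = x1
            x1, f1 = x2, f2  # old x2 becomes the new x1
            x2 = lo + inv_phi * (hi - lo)
            f2 = f(x2)
        else:
            hi = x2
            x2, f2 = x1, f1  # old x1 becomes the new x2
            x1 = hi - inv_phi * (hi - lo)
            f1 = f(x1)
        n_evals += 1  # only one fresh acquisition per iteration
    return (lo + hi) / 2.0, n_evals


# Hypothetical stand-in for a measured PA intensity at a given probe angle
# (degrees): a Gaussian bump centered on an assumed marker angle.
TRUE_ANGLE = 12.3


def pa_intensity(angle_deg):
    return math.exp(-((angle_deg - TRUE_ANGLE) ** 2) / (2.0 * 5.0 ** 2))


ts_angle, ts_evals = ternary_search_max(pa_intensity, -40.0, 40.0)
gss_angle, gss_evals = golden_section_search_max(pa_intensity, -40.0, 40.0)
print(f"TS : angle={ts_angle:.2f} deg, evaluations={ts_evals}")
print(f"GSS: angle={gss_angle:.2f} deg, evaluations={gss_evals}")
```

In this idealized, noise-free setting, golden section search needs fewer evaluations than ternary search for the same tolerance because it reuses one interior sample per iteration. The acquisition counts and errors reported in the abstract come from the authors' own implementations and experiments and are not reproduced by this sketch.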

Arc-to-line frame registration method for ultrasound and photoacoustic image-guided intraoperative robot-assisted laparoscopic prostatectomy

Jun 21, 2023

Abstract: Purpose: To achieve effective robot-assisted laparoscopic prostatectomy, it is essential to integrate transrectal ultrasound (TRUS) imaging, the most widely used imaging modality for the prostate. However, manual manipulation of the ultrasound transducer during the procedure significantly interferes with the surgery. Therefore, we propose an image co-registration algorithm based on a photoacoustic marker (PM) method, in which the ultrasound/photoacoustic (US/PA) images are registered to the endoscopic camera images, ultimately enabling the TRUS transducer to automatically track the surgical instrument. Methods: An optimization-based algorithm is proposed to co-register the images from the two imaging modalities. The algorithm assumes the principles of light propagation and an uncertainty in PM detection to improve its stability and accuracy. The algorithm is validated using the previously developed US/PA image-guided system with a da Vinci surgical robot. Results: The target registration error (TRE) is measured to evaluate the proposed algorithm. In both simulation and experimental demonstration, the proposed algorithm achieved sub-centimeter accuracy, which is acceptable for clinical practice. The result is also comparable with our previous approach, and the proposed method can be implemented with a normal white-light stereo camera and does not require highly accurate localization of the PM. Conclusion: The proposed frame registration algorithm enables a simple yet efficient integration of a commercial US/PA imaging system into the laparoscopic surgical setting by leveraging the characteristic properties of acoustic wave propagation and laser excitation, contributing to automated US/PA image-guided surgical intervention applications.
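The abstract reports accuracy as a target registration error but does not spell out how it is computed. Under the common definition, TRE is the distance between target points mapped through the estimated transform and their known positions in the destination frame. The sketch below assumes a rigid transform (rotation `R`, translation `t`) and reports the mean over targets; the function names and the sample points are illustrative and not taken from the paper.

```python
import math


def apply_rigid(R, t, p):
    """Map a 3D point p through the rigid transform x -> R @ x + t."""
    return tuple(
        sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)
    )


def target_registration_error(R, t, targets_src, targets_dst):
    """Mean Euclidean distance between mapped source targets and their
    ground-truth positions in the destination frame."""
    errors = [
        math.dist(apply_rigid(R, t, p), q)
        for p, q in zip(targets_src, targets_dst)
    ]
    return sum(errors) / len(errors)


# Illustrative data (hypothetical, in millimeters): an identity rotation,
# a 1 mm shift along x, and a second target that is off by 2 mm in z.
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t = (1.0, 0.0, 0.0)
targets_src = [(0.0, 0.0, 0.0), (1.0, 2.0, 3.0)]
targets_dst = [(1.0, 0.0, 0.0), (2.0, 2.0, 5.0)]

tre = target_registration_error(R, t, targets_src, targets_dst)
print(f"mean TRE = {tre:.3f} mm")
```

The targets used for TRE should be distinct from the points used to estimate the registration; otherwise the figure measures fit quality rather than accuracy at new locations.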
