Hyekang Joo

AEI: Actors-Environment Interaction with Adaptive Attention for Temporal Action Proposals Generation

Oct 25, 2021

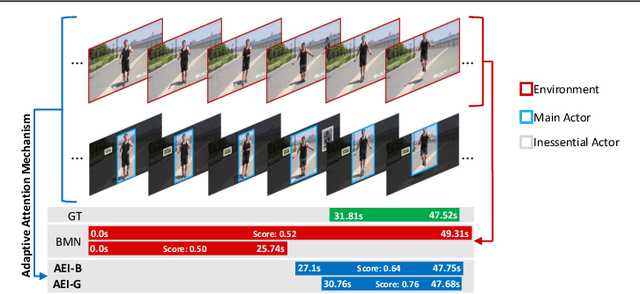

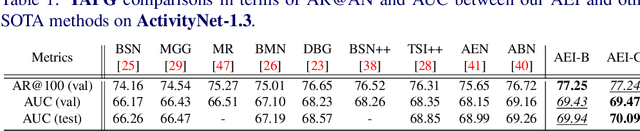

Abstract: Humans typically perceive the establishment of an action in a video through the interaction between an actor and the surrounding environment. An action only starts when the main actor in the video begins to interact with the environment, and it ends when the main actor stops the interaction. Despite the great progress in temporal action proposal generation, most existing works ignore this fact and leave their models to learn to propose actions as a black box. In this paper, we attempt to simulate that human ability by proposing the Actor Environment Interaction (AEI) network to improve the video representation for temporal action proposals generation. AEI contains two modules, i.e., a perception-based visual representation (PVR) and a boundary-matching module (BMM). PVR represents each video snippet by taking human-human relations and human-environment relations into consideration using the proposed adaptive attention mechanism. The video representation is then taken by BMM to generate action proposals. AEI is comprehensively evaluated on the ActivityNet-1.3 and THUMOS-14 datasets, on temporal action proposal and detection tasks, with two boundary-matching architectures (i.e., CNN-based and GCN-based) and two classifiers (i.e., Unet and P-GCN). Our AEI robustly outperforms the state-of-the-art methods with remarkable performance and generalization for both temporal action proposal generation and temporal action detection.

Guided Hyperparameter Tuning Through Visualization and Inference

May 24, 2021

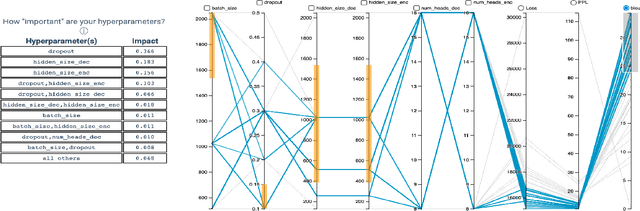

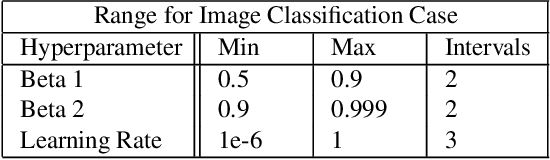

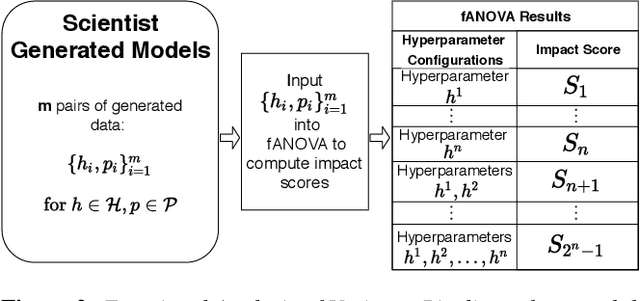

Abstract: For deep learning practitioners, hyperparameter tuning for optimizing model performance can be a computationally expensive task. Though visualization can help practitioners relate hyperparameter settings to overall model performance, significant manual inspection is still required to guide the hyperparameter settings in the next batch of experiments. In response, we present a streamlined visualization system enabling deep learning practitioners to more efficiently explore, tune, and optimize hyperparameters in a batch of experiments. A key idea is to directly suggest better hyperparameter values using a predictive mechanism. We then integrate this mechanism with current visualization practices for deep learning. Moreover, an analysis of the variance in a selected performance metric in the context of the model hyperparameters shows the impact that certain hyperparameters have on the performance metric. We evaluate the tool with a user study of deep learning model builders, finding that our participants have little difficulty adopting the tool and working with it as part of their workflow.
