Hongyu Ke

MamBEV: Enabling State Space Models to Learn Birds-Eye-View Representations

Mar 18, 2025

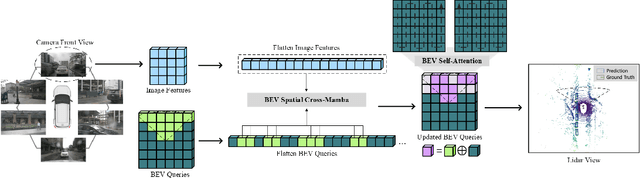

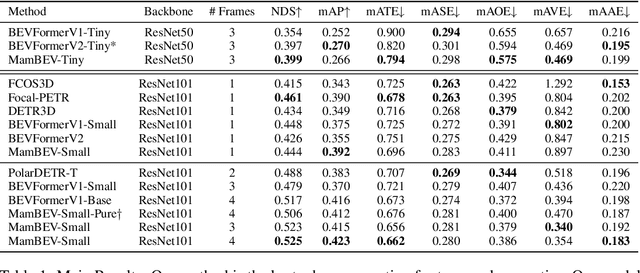

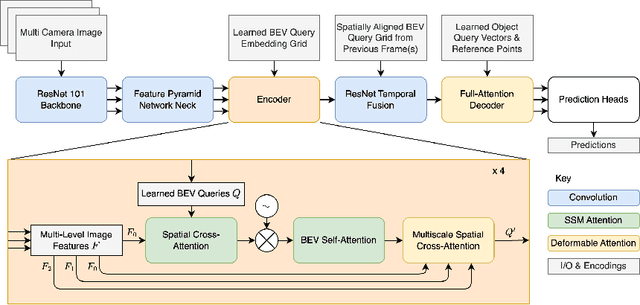

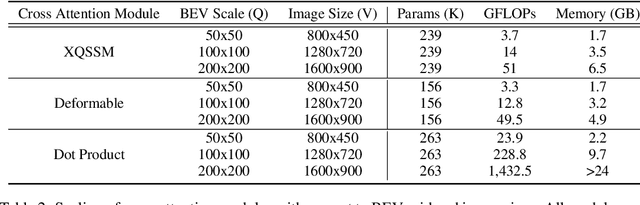

Abstract: 3D visual perception tasks, such as 3D detection from multi-camera images, are essential components of autonomous driving and assistance systems. However, designing computationally efficient methods remains a significant challenge. In this paper, we propose a Mamba-based framework called MamBEV, which learns unified Bird's Eye View (BEV) representations using linear spatio-temporal SSM-based attention. This approach supports multiple 3D perception tasks with significantly improved computational and memory efficiency. Furthermore, we introduce SSM-based cross-attention, analogous to standard cross-attention, where BEV query representations can interact with relevant image features. Extensive experiments demonstrate MamBEV's promising performance across diverse visual perception metrics, highlighting its advantages in input scaling efficiency compared to existing benchmark models.

Poster: Real-Time Object Substitution for Mobile Diminished Reality with Edge Computing

Oct 23, 2023

Abstract: Diminished Reality (DR) is considered the conceptual counterpart to Augmented Reality (AR), and has recently gained increasing attention from both industry and academia. Unlike AR, which adds virtual objects to the real world, DR allows users to remove physical content from the real world. When combined with object replacement technology, it presents a further exciting avenue for exploration within the metaverse. Although a few studies have been conducted on the intersection of object substitution and DR, there is no high-quality architecture for real-time object substitution in mobile diminished reality. In this paper, we propose an end-to-end architecture to facilitate immersive and real-time scene construction for mobile devices with edge computing.

Metamobility: Connecting Future Mobility with Metaverse

Jan 17, 2023

Abstract: A metaverse is a perpetual, immersive, and shared digital universe that is linked to, but extends beyond, physical reality, and this emerging technology is attracting enormous attention from different industries. In this article, we define the first holistic realization of the metaverse in the mobility domain, coined as "metamobility". We present our vision of what metamobility will be and describe its basic architecture. We also propose two use cases, tactile live maps and meta-empowered advanced driver-assistance systems (ADAS), to demonstrate how metamobility will benefit and reshape future mobility systems. Each use case is discussed from the perspectives of technology evolution, future vision, and critical research challenges. Finally, we identify multiple concrete open research issues for the development and deployment of metamobility.
