Haoshen Hong

DialectGram: Detecting Dialectal Variation at Multiple Geographic Resolutions

Oct 16, 2019

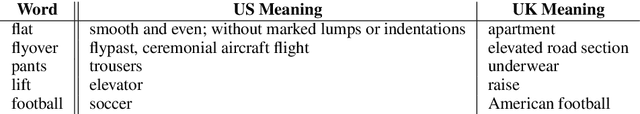

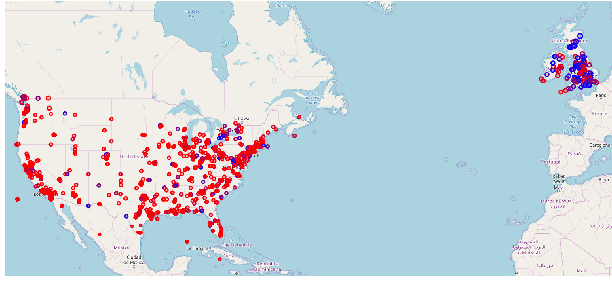

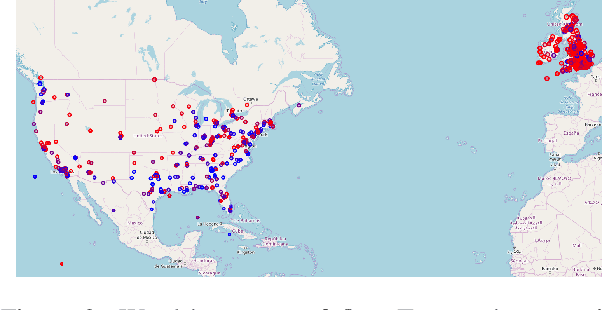

Abstract: Several computational models have been developed in recent years to detect and analyze dialectal variation. Most of these models assume a predefined set of geographic regions over which they detect and analyze that variation. However, dialectal variation occurs at multiple levels of geographic resolution: between cities within a state, between states within a country, and between countries across continents. In this work, we propose a model that detects dialectal variation at multiple levels of geographic resolution, removing the need to define the resolution level a priori. Our method, DialectGram, learns dialect-sensitive word embeddings while remaining agnostic to the geographic resolution. Specifically, it requires only one-time training and supports post-hoc analysis of dialectal variation at a chosen resolution, a significant departure from prior models, which must be retrained whenever the predefined set of regions changes. Furthermore, DialectGram explicitly models word senses, which makes it possible to estimate the proportion of each sense's usage in any given region. Finally, we quantitatively evaluate our model against baselines on a new English evaluation dataset, DialectSim, and show that DialectGram can effectively model linguistic variation.

* Hang Jiang, Haoshen Hong, and Yuxing Chen contributed equally to this work.
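The resolution-agnostic, post-hoc analysis described in the DialectGram abstract can be illustrated with a short sketch. It assumes a trained model has already assigned a sense label to each geotagged occurrence of a word; the record layout, the `RESOLUTIONS` table, and `sense_proportions` are hypothetical names for illustration, not DialectGram's actual code. Because the region key is chosen only at query time, the same labeled occurrences can be aggregated by city, state, or country without retraining.

```python
from collections import Counter, defaultdict
from typing import Callable, Dict, Iterable, Tuple

# Hypothetical record: (word, sense_id, (city, state, country)).
# Sense ids are assumed to come from an already-trained sense-aware model.
Location = Tuple[str, str, str]
Occurrence = Tuple[str, int, Location]

# Region key per resolution, chosen post hoc. Keys are tuples so that
# identically named states or cities in different countries stay distinct.
RESOLUTIONS: Dict[str, Callable[[Location], Tuple[str, ...]]] = {
    "city": lambda loc: loc,          # (city, state, country)
    "state": lambda loc: loc[1:],     # (state, country)
    "country": lambda loc: loc[2:],   # (country,)
}


def sense_proportions(
    occurrences: Iterable[Occurrence], word: str, resolution: str
) -> Dict[Tuple[str, ...], Dict[int, float]]:
    """Return, for each region at the given resolution, the share of each sense of `word`."""
    key_fn = RESOLUTIONS[resolution]
    counts: Dict[Tuple[str, ...], Counter] = defaultdict(Counter)
    for w, sense, loc in occurrences:
        if w == word:
            counts[key_fn(loc)][sense] += 1
    return {
        region: {s: n / sum(c.values()) for s, n in sorted(c.items())}
        for region, c in counts.items()
    }


# Toy, made-up records (not DialectSim data); senses 0 and 1 are arbitrary labels.
toy = [
    ("pants", 0, ("Boston", "MA", "US")),
    ("pants", 0, ("Boston", "MA", "US")),
    ("pants", 1, ("Cambridge", "MA", "US")),
    ("pants", 1, ("London", "England", "UK")),
    ("pants", 1, ("Leeds", "England", "UK")),
]

for res in ("city", "state", "country"):
    print(res, sense_proportions(toy, "pants", res))
```

The aggregation step never touches the embeddings, which is the point of the sketch: only the grouping function changes between resolutions.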

Controllable Top-down Feature Transformer

Nov 04, 2018

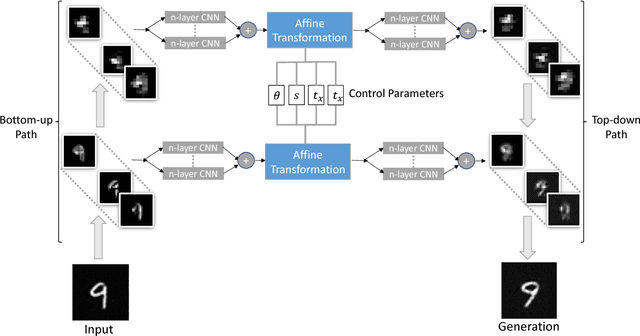

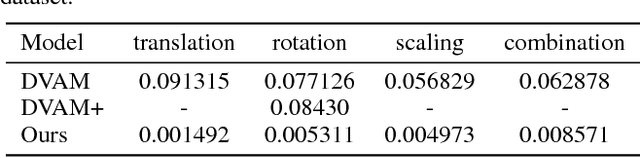

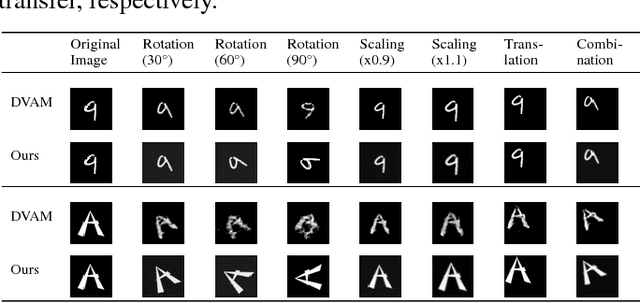

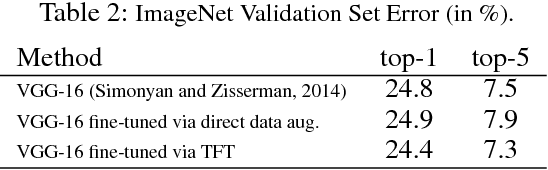

Abstract: We study the intrinsic transformation of feature maps across convolutional network layers under explicit top-down control. To this end, we develop the top-down feature transformer (TFT), whose controllable parameters allow it to account for hidden-layer transformations while maintaining overall consistency across layers. The learned generators capture the underlying feature transformation processes, which are independent of particular training images. The proposed TFT framework offers insight into an important problem: understanding the internal feature representations of CNNs and how they transform under top-down processes. For spatial transformations, we demonstrate a significant advantage of TFT over existing data-driven approaches in building data-independent transformations. We also show that TFT can be applied to other tasks, such as data augmentation and image style transfer.
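For the spatial case, a transformation of a hidden-layer feature map "under controllable parameters" can be made concrete with a hand-written example. The sketch below shifts every channel of a C×H×W feature map by integer offsets `(dx, dy)`, padding vacated cells with a fill value. It is not TFT, which learns such transformations with generators; `translate_feature_map` and its parameters are hypothetical and only illustrate what a parameter-controlled spatial transformation of a feature map looks like.

```python
from typing import List

# A feature map indexed as fmap[channel][row][col].
FeatureMap = List[List[List[float]]]


def translate_feature_map(
    fmap: FeatureMap, dx: int, dy: int, fill: float = 0.0
) -> FeatureMap:
    """Shift every channel right by dx and down by dy; cells with no source get `fill`."""
    out: FeatureMap = []
    for channel in fmap:
        h, w = len(channel), len(channel[0])
        shifted = [[fill] * w for _ in range(h)]
        for r in range(h):
            for c in range(w):
                # Each output cell reads from the cell (dy, dx) positions earlier.
                src_r, src_c = r - dy, c - dx
                if 0 <= src_r < h and 0 <= src_c < w:
                    shifted[r][c] = channel[src_r][src_c]
        out.append(shifted)
    return out


# One channel, 2x2 spatial grid.
fmap = [[[1.0, 2.0], [3.0, 4.0]]]
print(translate_feature_map(fmap, dx=1, dy=0))
print(translate_feature_map(fmap, dx=0, dy=1))
```

Here `(dx, dy)` plays the role of the control parameters; a learned transformer would instead have to produce the feature map of the transformed input, which for deeper layers is generally not a plain shift.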
