Haiyu Yan

Facilitating Multi-Role and Multi-Behavior Collaboration of Large Language Models for Online Job Seeking and Recruiting

May 28, 2024

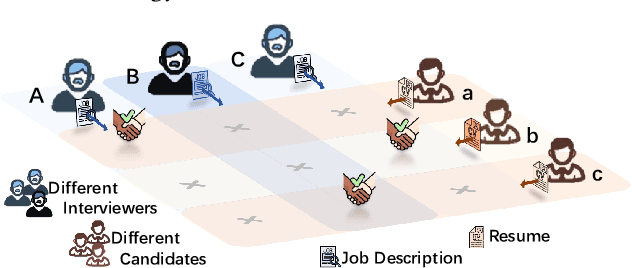

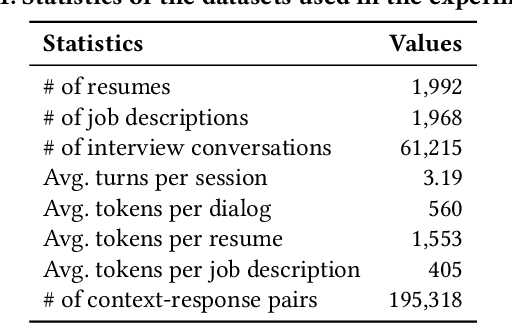

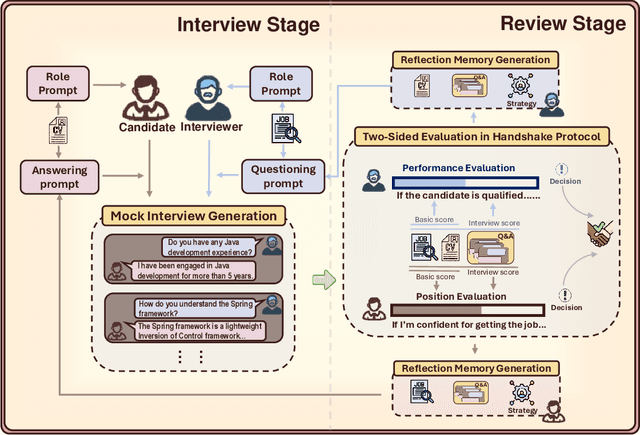

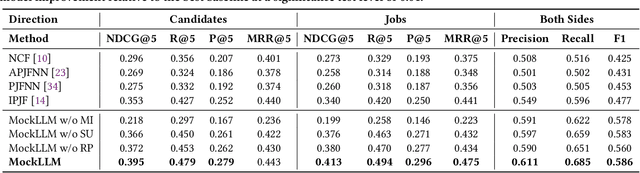

Abstract:The emergence of online recruitment services has revolutionized the traditional landscape of job seeking and recruitment, necessitating the development of high-quality industrial applications to improve person-job fitting. Existing methods generally rely on modeling the latent semantics of resumes and job descriptions and learning a matching function between them. Inspired by the powerful role-playing capabilities of Large Language Models (LLMs), we propose to introduce a mock interview process between LLM-played interviewers and candidates. The mock interview conversations can provide additional evidence for candidate evaluation, thereby augmenting traditional person-job fitting based solely on resumes and job descriptions. However, characterizing these two roles in online recruitment still presents several challenges, such as developing the skills to raise interview questions, formulating appropriate answers, and evaluating two-sided fitness. To this end, we propose MockLLM, a novel applicable framework that divides the person-job matching process into two modules: mock interview generation and two-sided evaluation in handshake protocol, jointly enhancing their performance through collaborative behaviors between interviewers and candidates. We design a role-playing framework as a multi-role and multi-behavior paradigm to enable a single LLM agent to effectively behave with multiple functions for both parties. Moreover, we propose reflection memory generation and dynamic prompt modification techniques to refine the behaviors of both sides, enabling continuous optimization of the augmented additional evidence. Extensive experimental results show that MockLLM can achieve the best performance on person-job matching accompanied by high mock interview quality, envisioning its emerging application in real online recruitment in the future.

Harnessing Multi-Role Capabilities of Large Language Models for Open-Domain Question Answering

Mar 08, 2024

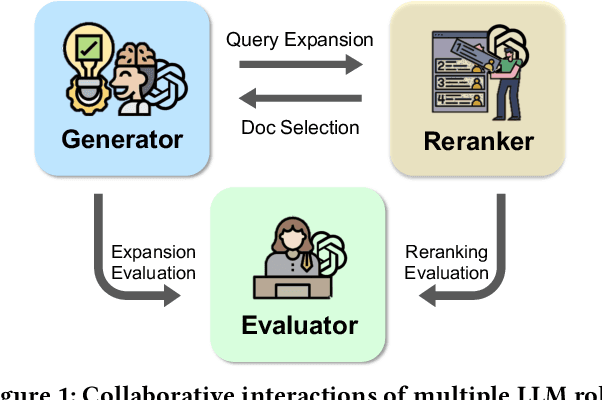

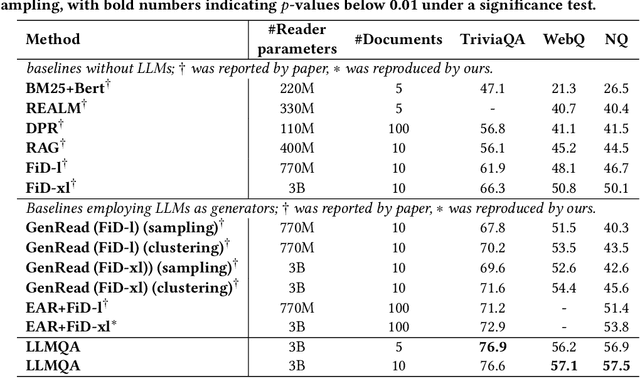

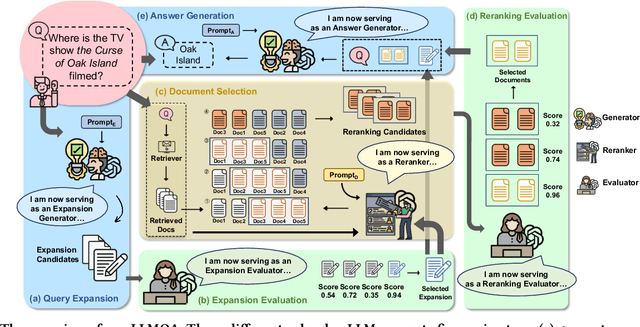

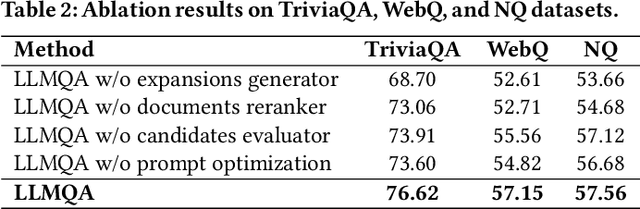

Abstract:Open-domain question answering (ODQA) has emerged as a pivotal research spotlight in information systems. Existing methods follow two main paradigms to collect evidence: (1) The \textit{retrieve-then-read} paradigm retrieves pertinent documents from an external corpus; and (2) the \textit{generate-then-read} paradigm employs large language models (LLMs) to generate relevant documents. However, neither can fully address multifaceted requirements for evidence. To this end, we propose LLMQA, a generalized framework that formulates the ODQA process into three basic steps: query expansion, document selection, and answer generation, combining the superiority of both retrieval-based and generation-based evidence. Since LLMs exhibit their excellent capabilities to accomplish various tasks, we instruct LLMs to play multiple roles as generators, rerankers, and evaluators within our framework, integrating them to collaborate in the ODQA process. Furthermore, we introduce a novel prompt optimization algorithm to refine role-playing prompts and steer LLMs to produce higher-quality evidence and answers. Extensive experimental results on widely used benchmarks (NQ, WebQ, and TriviaQA) demonstrate that LLMQA achieves the best performance in terms of both answer accuracy and evidence quality, showcasing its potential for advancing ODQA research and applications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge