Guizhi Xu

SAG-GAN: Semi-Supervised Attention-Guided GANs for Data Augmentation on Medical Images

Nov 15, 2020

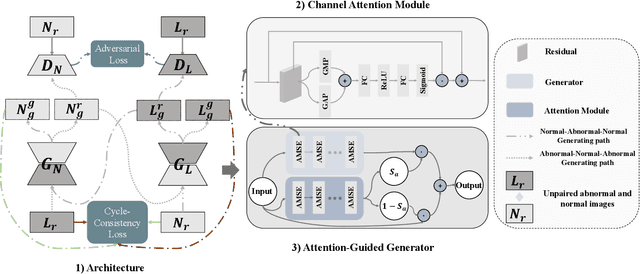

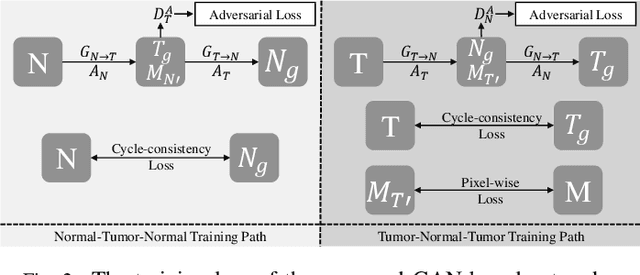

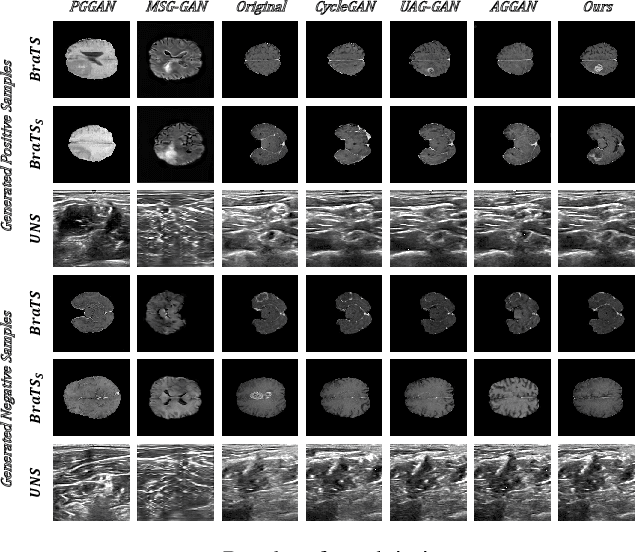

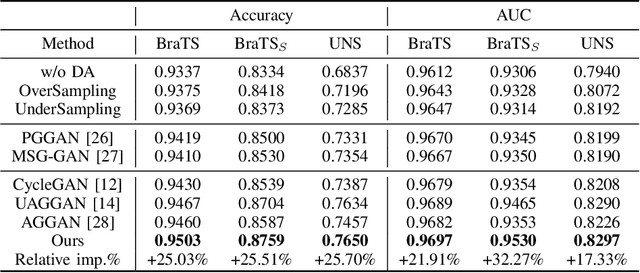

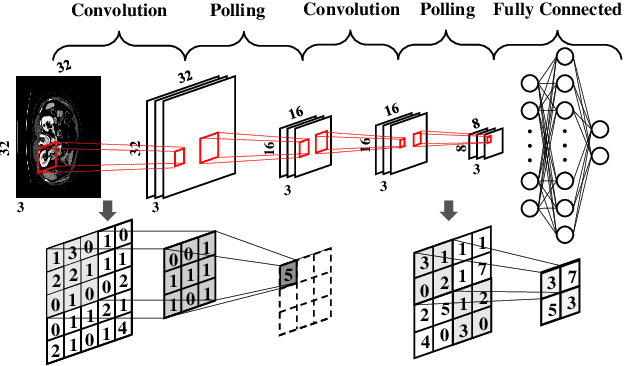

Abstract: Deep learning methods, in particular convolutional neural networks (CNNs), have recently led to major breakthroughs across computer vision. Large-scale annotated datasets are essential to successful training, but such datasets are very hard to obtain in the medical domain. To address this, we present a data augmentation method that generates synthetic medical images using cycle-consistency Generative Adversarial Networks (GANs). We add semi-supervised attention modules to generate images with convincing details. We treat tumor images and normal images as two domains: the proposed GAN-based model can generate a tumor image from a normal image and, conversely, a normal image from a tumor image. Furthermore, we show that the generated medical images can be used to improve the performance of ResNet18 for medical image classification. Our model is applied to three limited datasets of tumor MRI images. We first generate MRI images on the limited datasets, then train three popular classification models to select the best model for tumor classification. Finally, we train the classification model on real images with classic data augmentation methods and on synthetic images. Comparing the classification results of these trained models shows that the proposed SAG-GAN data augmentation method improves accuracy and AUC compared with classic data augmentation methods. We believe the proposed data augmentation method can be applied to other medical image domains and can improve the accuracy of computer-assisted diagnosis.
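The two-way translation between the normal and tumor domains is what a cycle-consistency term constrains. The sketch below is a minimal illustration, assuming the standard L1 form of that loss from the general cycle-consistent GAN literature; the abstract does not state the exact loss used in SAG-GAN. The toy generators `add_lesion` and `remove_lesion` and the flattened three-pixel "images" are hypothetical stand-ins, not the paper's networks or data.

```python
from typing import Callable, List, Sequence

Image = List[float]  # a flattened toy "image"; real inputs would be MRI slices


def l1_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean absolute difference between two equally sized images."""
    if len(a) != len(b):
        raise ValueError("images must have the same size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def cycle_consistency_loss(
    normal: Image,
    tumor: Image,
    normal_to_tumor: Callable[[Image], Image],
    tumor_to_normal: Callable[[Image], Image],
) -> float:
    """Sum of both reconstruction errors (assumed standard L1 form)."""
    # normal -> tumor -> normal should reproduce the normal image
    forward = l1_distance(normal, tumor_to_normal(normal_to_tumor(normal)))
    # tumor -> normal -> tumor should reproduce the tumor image
    backward = l1_distance(tumor, normal_to_tumor(tumor_to_normal(tumor)))
    return forward + backward


# Hypothetical generators: exact inverses of each other, so the loss is zero.
def add_lesion(img: Image) -> Image:
    return [p + 0.5 for p in img]


def remove_lesion(img: Image) -> Image:
    return [p - 0.5 for p in img]


normal_img = [0.0, 0.25, 0.5]
tumor_img = [0.5, 0.75, 1.0]
perfect_loss = cycle_consistency_loss(normal_img, tumor_img, add_lesion, remove_lesion)

# A generator that undoes only half of the change is penalized in both directions.
imperfect_loss = cycle_consistency_loss(
    normal_img, tumor_img, add_lesion, lambda img: [p - 0.25 for p in img]
)
```

In a full model this term would be added to the adversarial losses of both generators; the attention modules described in the abstract are not represented here.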

Efficient Medical Image Segmentation with Intermediate Supervision Mechanism

Nov 15, 2020

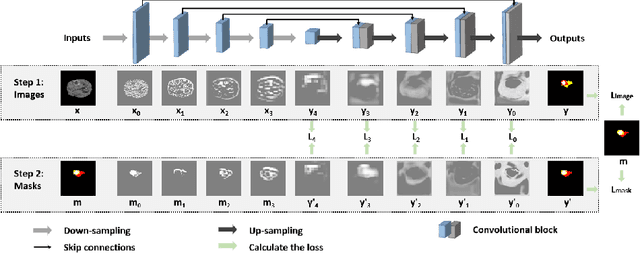

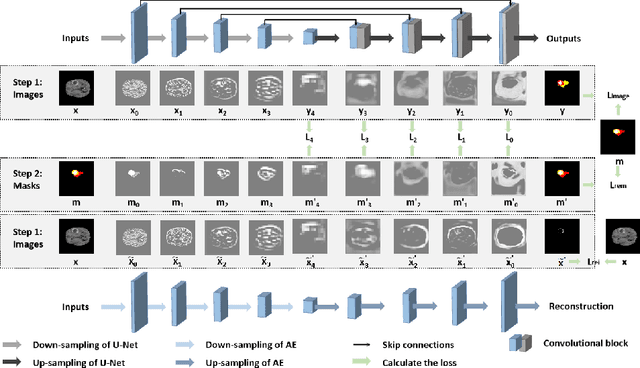

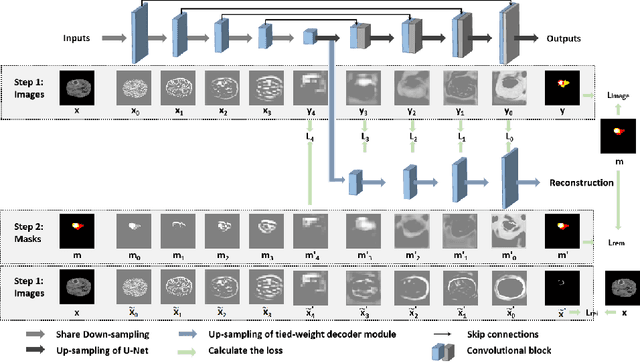

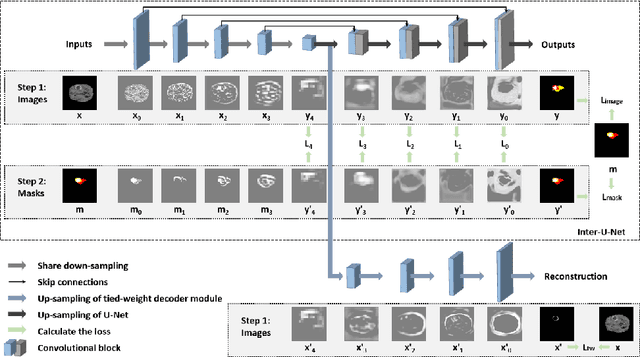

Abstract: Because the expansion path of U-Net may ignore the characteristics of small targets, an intermediate supervision mechanism is proposed, in which the original mask is also fed into the network as a label for the intermediate output. However, U-Net is designed mainly for segmentation: the features it extracts target segmentation location information, and its input and output differ. The label we need requires the input and output to both be the original mask, which is closer to a reconstruction process, so we propose another intermediate supervision mechanism. However, the features extracted by the contraction path of this intermediate supervision mechanism are not necessarily consistent. For example, U-Net's contraction path may extract transverse features while the auto-encoder extracts longitudinal features, which may cause the output of the expansion path to be inconsistent with the label. Therefore, we propose an intermediate supervision mechanism with a shared-weight decoder module. Although intermediate supervision improves segmentation accuracy, training time is too long because of the extra input and multiple loss functions. To address one of these problems, we introduce a tied-weight decoder. To reduce the redundancy of the model, we combine the shared-weight decoder module with the tied-weight decoder module.
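As a rough illustration of weight tying, the sketch below assumes the common auto-encoder meaning of the term: the decoder reuses the transpose of the encoder's weight matrix instead of learning its own. The abstract does not spell out the paper's exact construction, so the class `TiedAutoencoder`, its single linear layer, and the example weights are all hypothetical.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(matrix: Matrix, vector: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def transpose(matrix: Matrix) -> Matrix:
    """Swap rows and columns."""
    return [list(column) for column in zip(*matrix)]


class TiedAutoencoder:
    """One linear layer whose decoder weights are the encoder's transpose."""

    def __init__(self, encoder_weights: Matrix) -> None:
        self.encoder_weights = encoder_weights  # the only stored parameters

    def encode(self, x: Vector) -> Vector:
        return matvec(self.encoder_weights, x)

    def decode(self, code: Vector) -> Vector:
        # Tied weights: no separate decoder matrix is stored or trained.
        return matvec(transpose(self.encoder_weights), code)

    def parameter_count(self) -> int:
        return sum(len(row) for row in self.encoder_weights)


# Hypothetical 3 -> 2 encoder that keeps the first two input components.
model = TiedAutoencoder([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]])
code = model.encode([3.0, 4.0, 5.0])
reconstruction = model.decode(code)
```

An untied version of this layer would store twelve weights (six for the encoder, six for the decoder); tying halves that to six, which is the kind of redundancy reduction the abstract refers to.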

Deep Learning in Computer-Aided Diagnosis and Treatment of Tumors: A Survey

Nov 02, 2020

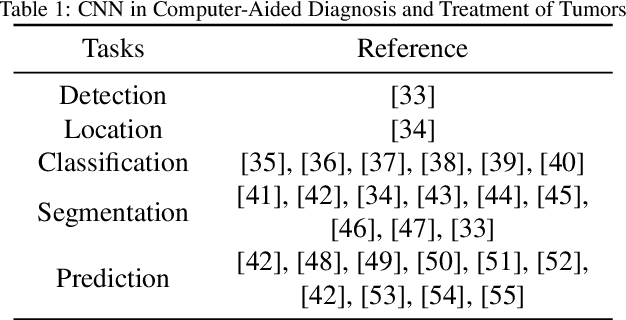

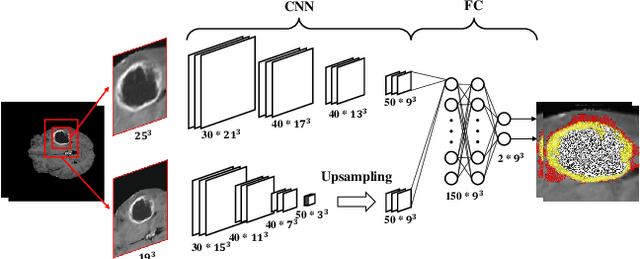

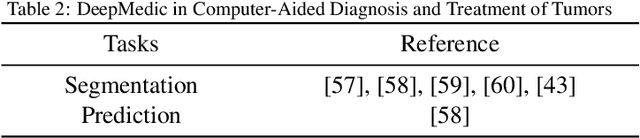

Abstract: Computer-Aided Diagnosis and Treatment of Tumors has been a hot topic in deep learning in recent years. It comprises a series of medical tasks, such as detection of tumor markers, delineation of tumor lesions, determination of tumor subtypes and stages, prediction of therapeutic effect, and drug development. Meanwhile, deep learning models with precise positioning and excellent performance have been produced in mainstream task scenarios. We therefore first introduce deep learning methods from a task-oriented perspective, focusing mainly on improvements for medical tasks. We then summarize recent progress in four stages of tumor diagnosis and treatment: In-Vitro Diagnosis (IVD), Imaging Diagnosis (ID), Pathological Diagnosis (PD), and Treatment Planning (TP). According to the specific data types and medical tasks of each stage, we present the applications of deep learning in the Computer-Aided Diagnosis and Treatment of Tumors and analyze notable works therein. This survey concludes by discussing research issues and suggesting challenges for future improvement.
