Guixin Liang

VideoPipe 2022 Challenge: Real-World Video Understanding for Urban Pipe Inspection

Oct 20, 2022

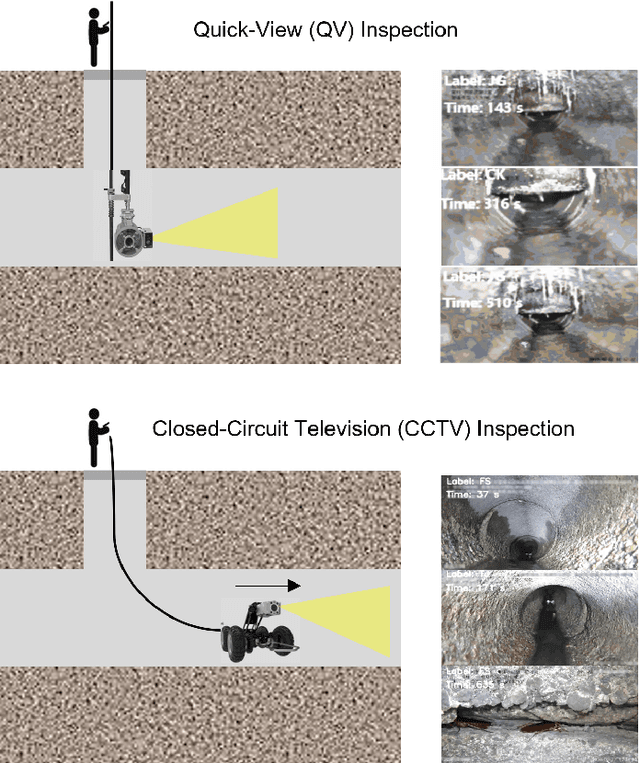

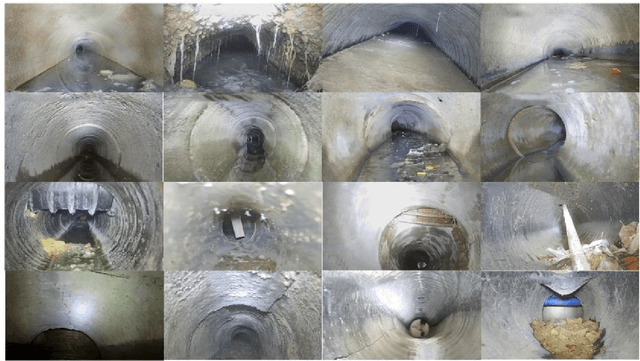

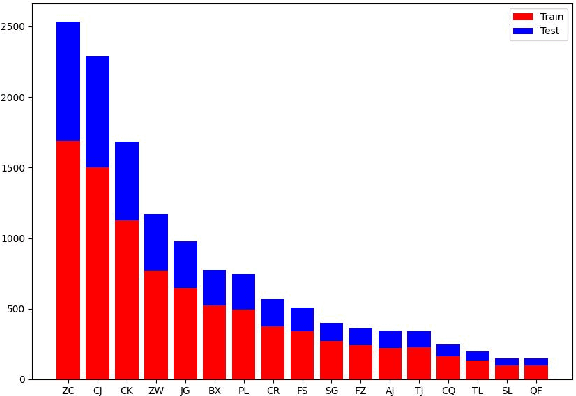

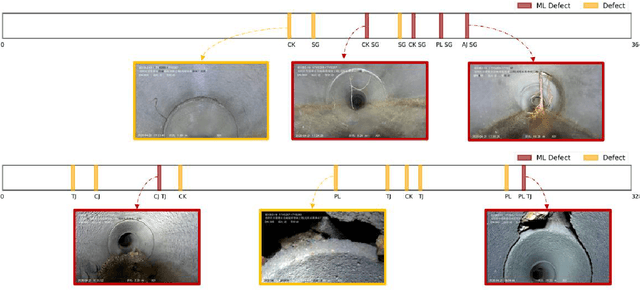

Abstract: Video understanding is an important problem in computer vision. The most thoroughly studied task in this area is human action recognition, where clips are manually trimmed from long videos and each clip is assumed to contain a single class of human action. Industrial applications, however, can present more complicated scenarios. For example, in real-world urban pipe systems, anomalous defects are fine-grained, multi-labeled, and domain-relevant. Recognizing them correctly requires understanding the detailed video content. For this reason, we propose to advance video understanding research by shifting from traditional action recognition to industrial anomaly analysis. In particular, we introduce two high-quality video benchmarks, QV-Pipe and CCTV-Pipe, for anomaly inspection in real-world urban pipe systems. Based on these new datasets, we will host two competitions: (1) Video Defect Classification on QV-Pipe and (2) Temporal Defect Localization on CCTV-Pipe. In this report, we describe the benchmarks, the problem definitions of the competition tracks, the evaluation metric, and a summary of the results. We expect this competition to bring new opportunities and challenges for video understanding in smart cities and beyond. Details of the VideoPipe challenge can be found at https://videopipe.github.io.
