Guanting Liu

LMHLD: A Large-scale Multi-source High-resolution Landslide Dataset for Landslide Detection based on Deep Learning

Feb 27, 2025

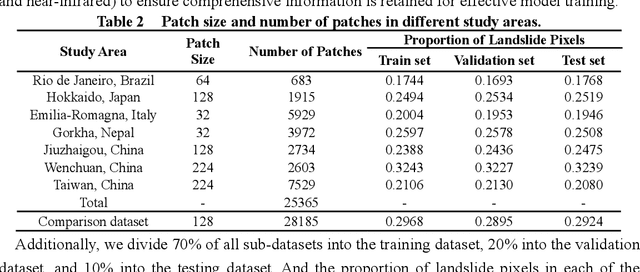

Abstract:Landslides are among the most common natural disasters globally, posing significant threats to human society. Deep learning (DL) has proven to be an effective method for rapidly generating landslide inventories in large-scale disaster areas. However, DL models rely heavily on high-quality labeled landslide data for strong feature extraction capabilities. And landslide detection using DL urgently needs a benchmark dataset to evaluate the generalization ability of the latest models. To solve the above problems, we construct a Large-scale Multi-source High-resolution Landslide Dataset (LMHLD) for Landslide Detection based on DL. LMHLD collects remote sensing images from five different satellite sensors across seven study areas worldwide: Wenchuan, China (2008); Rio de Janeiro, Brazil (2011); Gorkha, Nepal (2015); Jiuzhaigou, China (2015); Taiwan, China (2018); Hokkaido, Japan (2018); Emilia-Romagna, Italy (2023). The dataset includes a total of 25,365 patches, with different patch sizes to accommodate different landslide scales. Additionally, a training module, LMHLDpart, is designed to accommodate landslide detection tasks at varying scales and to alleviate the issue of catastrophic forgetting in multi-task learning. Furthermore, the models trained by LMHLD is applied in other datasets to highlight the robustness of LMHLD. Five dataset quality evaluation experiments designed by using seven DL models from the U-Net family demonstrate that LMHLD has the potential to become a benchmark dataset for landslide detection. LMHLD is open access and can be accessed through the link: https://doi.org/10.5281/zenodo.11424988. This dataset provides a strong foundation for DL models, accelerates the development of DL in landslide detection, and serves as a valuable resource for landslide prevention and mitigation efforts.

Unchain the Search Space with Hierarchical Differentiable Architecture Search

Jan 12, 2021

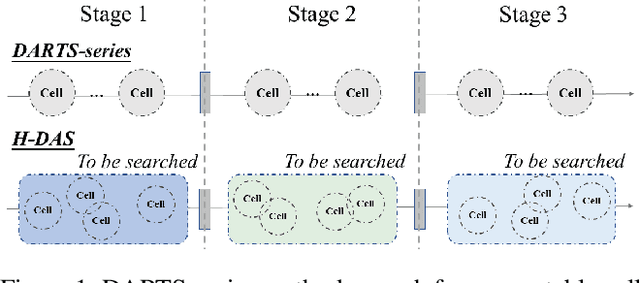

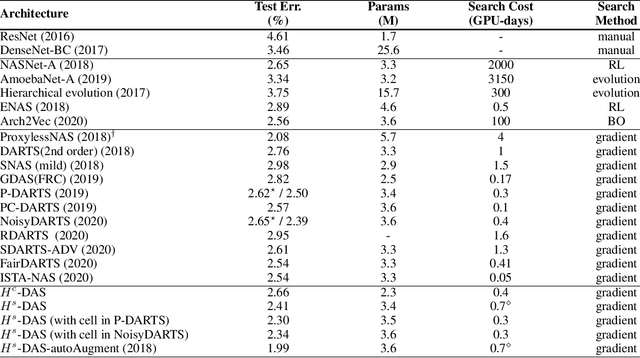

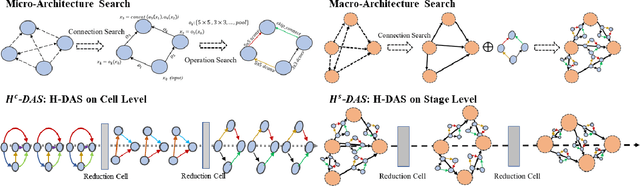

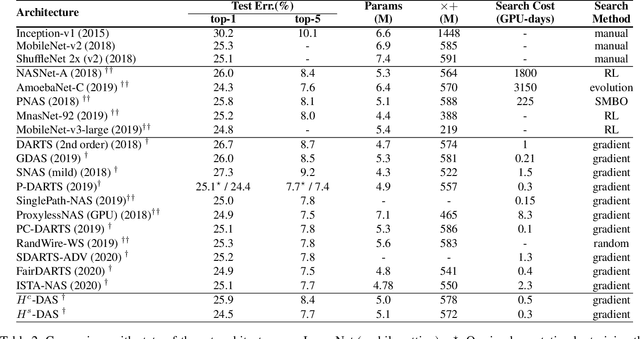

Abstract:Differentiable architecture search (DAS) has made great progress in searching for high-performance architectures with reduced computational cost. However, DAS-based methods mainly focus on searching for a repeatable cell structure, which is then stacked sequentially in multiple stages to form the networks. This configuration significantly reduces the search space, and ignores the importance of connections between the cells. To overcome this limitation, in this paper, we propose a Hierarchical Differentiable Architecture Search (H-DAS) that performs architecture search both at the cell level and at the stage level. Specifically, the cell-level search space is relaxed so that the networks can learn stage-specific cell structures. For the stage-level search, we systematically study the architectures of stages, including the number of cells in each stage and the connections between the cells. Based on insightful observations, we design several search rules and losses, and mange to search for better stage-level architectures. Such hierarchical search space greatly improves the performance of the networks without introducing expensive search cost. Extensive experiments on CIFAR10 and ImageNet demonstrate the effectiveness of the proposed H-DAS. Moreover, the searched stage-level architectures can be combined with the cell structures searched by existing DAS methods to further boost the performance. Code is available at: https://github.com/MalongTech/research-HDAS

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge