Grégoire Passault

LaBRI

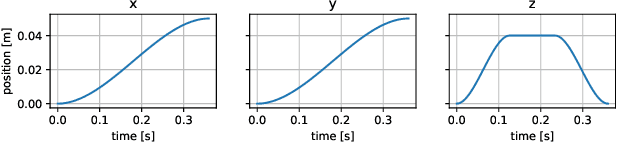

PlaCo: a QP-based robot planning and control framework

Nov 08, 2025

Abstract: This article introduces PlaCo, a software framework designed to simplify the formulation and solution of Quadratic Programming (QP)-based planning and control problems for robotic systems. PlaCo provides a high-level interface that abstracts away the low-level mathematical formulation of QP problems, allowing users to specify tasks and constraints in a modular and intuitive manner. The framework supports both Python bindings for rapid prototyping and a C++ implementation for real-time performance.

FRASA: An End-to-End Reinforcement Learning Agent for Fall Recovery and Stand Up of Humanoid Robots

Oct 11, 2024

Abstract: Humanoid robotics faces significant challenges in achieving stable locomotion and recovering from falls in dynamic environments. Traditional methods, such as Model Predictive Control (MPC) and Key Frame Based (KFB) routines, either require extensive fine-tuning or lack real-time adaptability. This paper introduces FRASA, a Deep Reinforcement Learning (DRL) agent that integrates fall recovery and stand-up strategies into a unified framework. Leveraging the Cross-Q algorithm, FRASA significantly reduces training time and offers a versatile recovery strategy that adapts to unpredictable disturbances. Comparative tests on Sigmaban humanoid robots demonstrate FRASA's superior performance against the KFB method deployed at RoboCup 2023 by the Rhoban Team, world champion of the KidSize League.

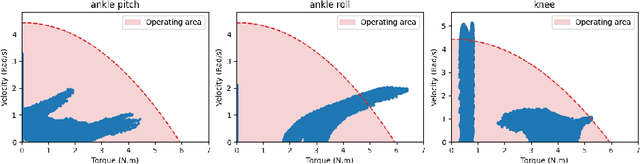

Extended Friction Models for the Physics Simulation of Servo Actuators

Oct 11, 2024

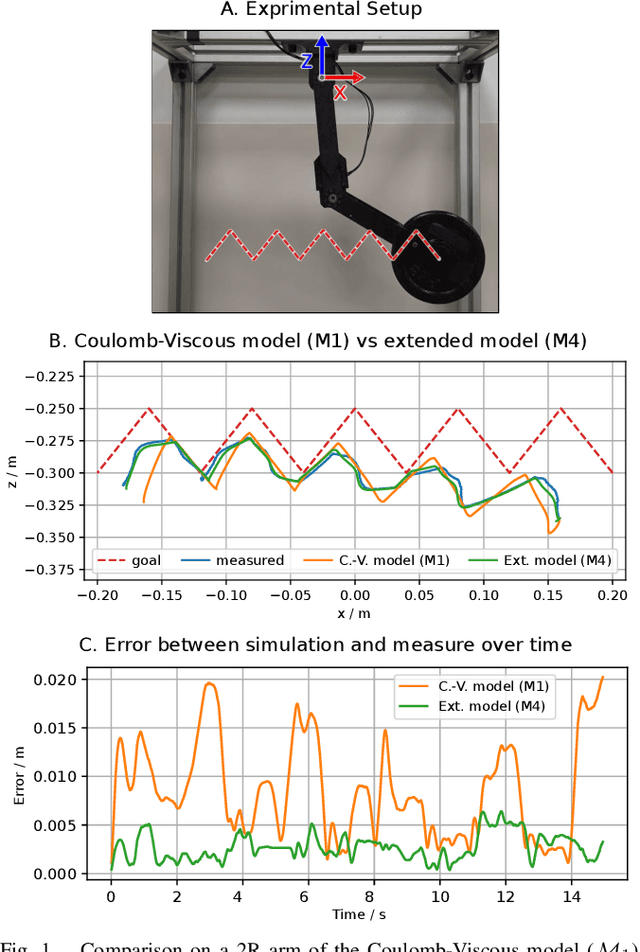

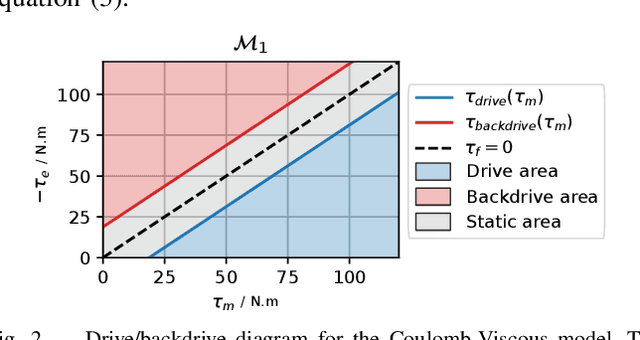

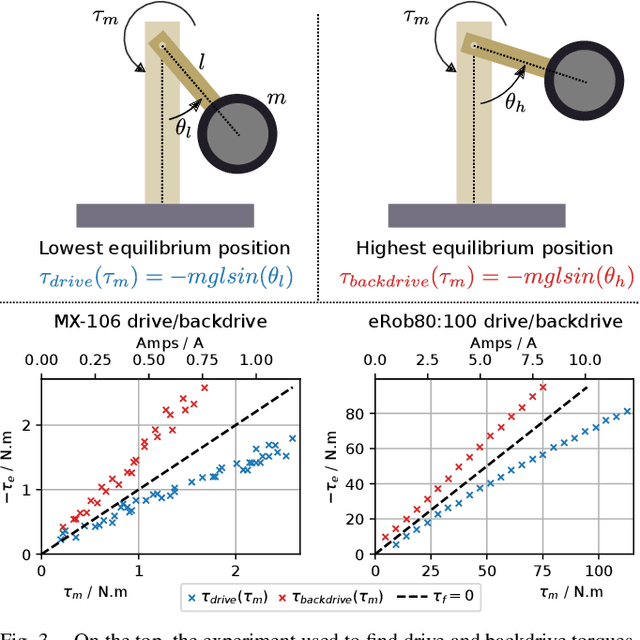

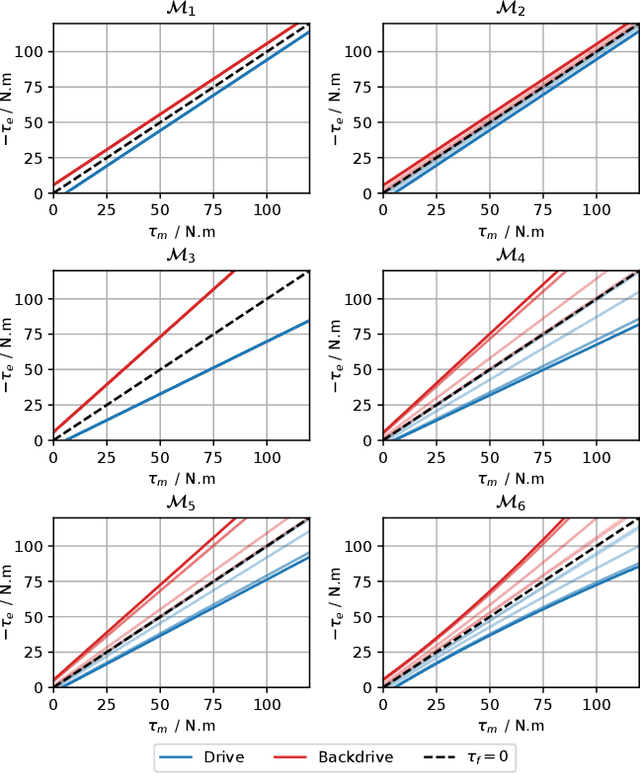

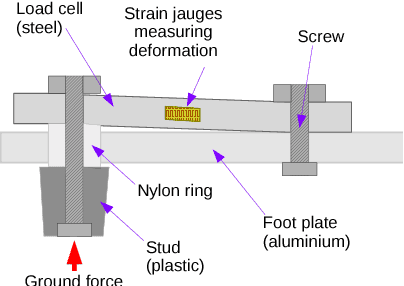

Abstract: Accurate physical simulation is crucial for the development and validation of control algorithms in robotic systems. Recent works in Reinforcement Learning (RL) notably take advantage of extensive simulations to produce efficient robot control. State-of-the-art servo actuator models generally fail to capture the complex friction dynamics of these systems, which limits the transferability of simulated behaviors to real-world applications. In this work, we present extended friction models that simulate servo actuator dynamics more accurately. We provide a comprehensive analysis of various friction models, present a method for identifying model parameters using recorded trajectories from a pendulum test bench, and demonstrate how these models can be integrated into physics engines. The proposed friction models are validated on four distinct servo actuators and tested on 2R manipulators, showing significant improvements in accuracy over the standard Coulomb-Viscous model. Our results highlight the importance of considering advanced friction effects in the simulation of servo actuators to enhance the realism and reliability of robotic simulations.

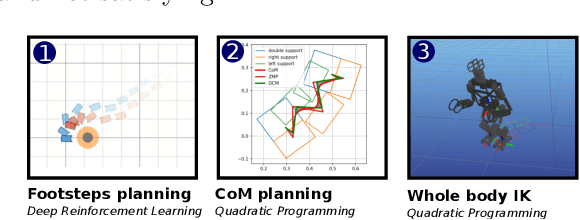

FootstepNet: an Efficient Actor-Critic Method for Fast On-line Bipedal Footstep Planning and Forecasting

Mar 19, 2024

Abstract: Designing a humanoid locomotion controller is challenging and classically split into sub-problems. Footstep planning is one of those, where the sequence of footsteps is defined. Even in simpler environments, finding a minimal sequence, or even a feasible sequence, yields a complex optimization problem. In the literature, this problem is usually addressed by search-based algorithms (e.g. variants of A*). However, such approaches are either computationally expensive or rely on hand-crafted tuning of several parameters. In this work, we first propose an efficient footstep planning method to navigate in local environments with obstacles, based on state-of-the-art Deep Reinforcement Learning (DRL) techniques, with very low computational requirements for on-line inference. Our approach is heuristic-free and relies on a continuous set of actions to generate feasible footsteps. In contrast, other methods require the selection of a relevant discrete set of actions. Second, we propose a forecasting method that quickly estimates the number of footsteps required to reach different candidate local targets. This approach relies on inherent computations made by the actor-critic DRL architecture. We demonstrate the validity of our approach with simulation results and with a deployment on a kid-size humanoid robot during the RoboCup 2023 competition.

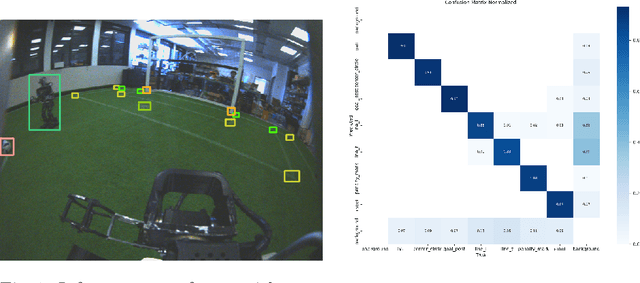

Rhoban Football Club: RoboCup Humanoid Kid-Size 2023 Champion Team Paper

Feb 01, 2024

Abstract: In 2023, Rhoban Football Club took first place in the KidSize soccer competition for the fifth time and received the best humanoid award. This paper presents and reviews important points of the robots' architecture and workflow, with hindsight from the competition.

Rhoban Football Club: RoboCup Humanoid KidSize 2019 Champion Team Paper

Oct 25, 2019

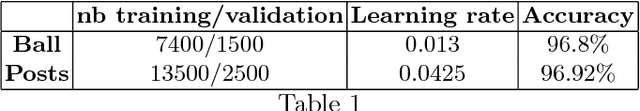

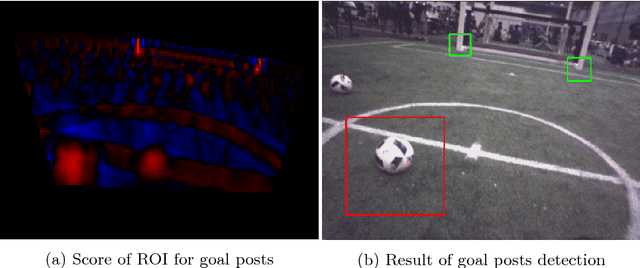

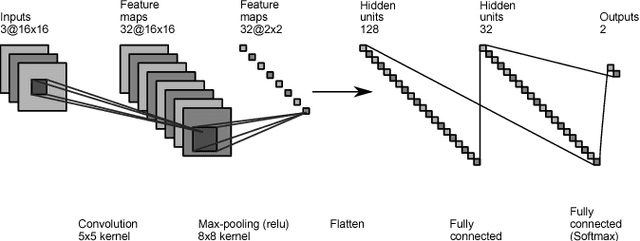

Abstract: In 2019, Rhoban Football Club took first place in the KidSize soccer competition for the fourth time and performed the first in-game throw-in in the history of the Humanoid league. Building on our existing code-base, we improved some specific functionalities, introduced new behaviors and experimented with original methods for labeling videos. This paper presents and reviews our latest changes to both software and hardware, highlighting the lessons learned during RoboCup.
