Gerardo Duran-Martin

Scalable Generalized Bayesian Online Neural Network Training for Sequential Decision Making

Jun 13, 2025

Abstract: We introduce scalable algorithms for online learning and generalized Bayesian inference of neural network parameters, designed for sequential decision making tasks. Our methods combine the strengths of frequentist and Bayesian filtering, which include fast low-rank updates via a block-diagonal approximation of the parameter error covariance, and a well-defined posterior predictive distribution that we use for decision making. More precisely, our main method updates a low-rank error covariance for the hidden-layer parameters, and a full-rank error covariance for the final-layer parameters. Although this characterizes an improper posterior, we show that the resulting posterior predictive distribution is well-defined. Our methods update all network parameters online, with no need for replay buffers or offline retraining. We show, empirically, that our methods achieve a competitive tradeoff between speed and accuracy on (non-stationary) contextual bandit problems and Bayesian optimization problems.

Adaptive, Robust and Scalable Bayesian Filtering for Online Learning

May 12, 2025

Abstract: In this thesis, we introduce Bayesian filtering as a principled framework for tackling diverse sequential machine learning problems, including online (continual) learning, prequential (one-step-ahead) forecasting, and contextual bandits. To this end, this thesis addresses key challenges in applying Bayesian filtering to these problems: adaptivity to non-stationary environments, robustness to model misspecification and outliers, and scalability to the high-dimensional parameter space of deep neural networks. We develop novel tools within the Bayesian filtering framework to address each of these challenges, including: (i) a modular framework that enables the development of adaptive approaches for online learning; (ii) a novel, provably robust filter that employs Generalised Bayes and has a computational cost similar to that of standard filters; and (iii) a set of tools for sequentially updating model parameters using approximate second-order optimisation methods that exploit the overparametrisation of high-dimensional parametric models such as neural networks. Theoretical analysis and empirical results demonstrate the improved performance of our methods in dynamic, high-dimensional, and misspecified models.

BONE: a unifying framework for Bayesian online learning in non-stationary environments

Nov 15, 2024

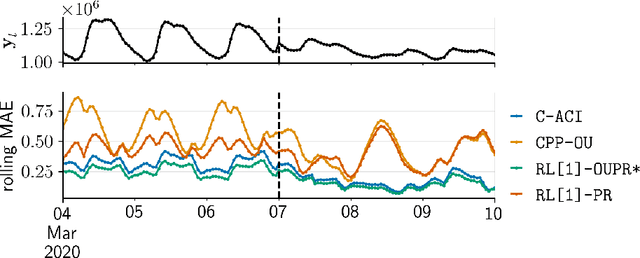

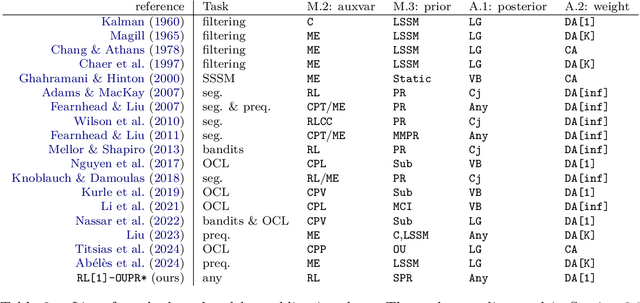

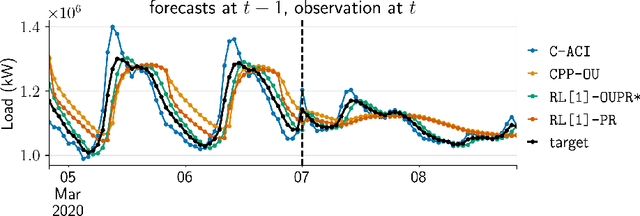

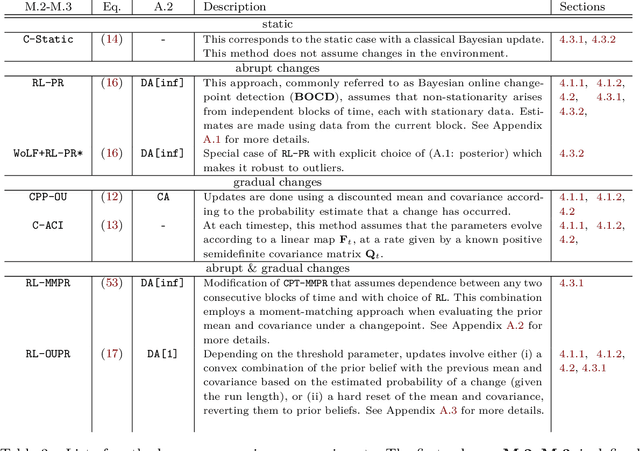

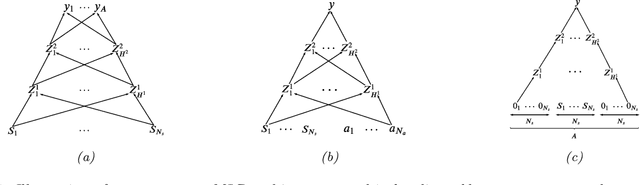

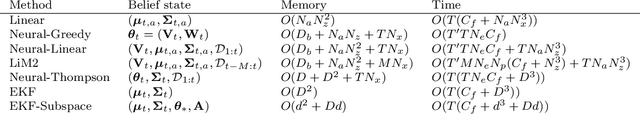

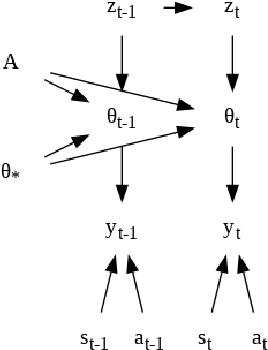

Abstract: We propose a unifying framework for methods that perform Bayesian online learning in non-stationary environments. We call the framework BONE, which stands for (B)ayesian (O)nline learning in (N)on-stationary (E)nvironments. BONE provides a common structure to tackle a variety of problems, including online continual learning, prequential forecasting, and contextual bandits. The framework requires specifying three modelling choices: (i) a model for measurements (e.g., a neural network), (ii) an auxiliary process to model non-stationarity (e.g., the time since the last changepoint), and (iii) a conditional prior over model parameters (e.g., a multivariate Gaussian). The framework also requires two algorithmic choices, which we use to carry out approximate inference under this framework: (i) an algorithm to estimate beliefs (posterior distribution) about the model parameters given the auxiliary variable, and (ii) an algorithm to estimate beliefs about the auxiliary variable. We show how this modularity allows us to write many different existing methods as instances of BONE; we also use this framework to propose a new method. We then experimentally compare existing methods with our proposed new method on several datasets; we provide insights into the situations that make one method more suitable than another for a given task.

Outlier-robust Kalman Filtering through Generalised Bayes

May 09, 2024

Abstract: We derive a novel, provably robust, and closed-form Bayesian update rule for online filtering in state-space models in the presence of outliers and misspecified measurement models. Our method combines generalised Bayesian inference with filtering methods such as the extended and ensemble Kalman filter. We use the former to show robustness and the latter to ensure computational efficiency in the case of nonlinear models. Our method matches or outperforms other robust filtering methods (such as those based on variational Bayes) at a much lower computational cost. We show this empirically on a range of filtering problems with outlier measurements, such as object tracking, state estimation in high-dimensional chaotic systems, and online learning of neural networks.
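
To make the idea of a closed-form, outlier-robust update concrete, here is a minimal sketch of a Kalman measurement update with a residual-dependent likelihood weight, for a linear measurement model. The weight function `imq_weight`, the soft threshold `c`, and all variable names are illustrative assumptions and are not taken from the abstract; the sketch only shows how tempering a Gaussian likelihood by a weight `w**2` is equivalent to inflating the measurement noise covariance to `R / w**2`.

```python
import numpy as np

def imq_weight(resid, R, c=4.0):
    # Assumed weight function (inverse multi-quadratic), with values in (0, 1].
    # It shrinks towards 0 as the residual grows relative to the noise scale.
    m2 = resid @ np.linalg.solve(R, resid)  # squared Mahalanobis residual
    return 1.0 / np.sqrt(1.0 + m2 / c**2)

def weighted_kf_update(mu, Sigma, y, H, R, c=4.0):
    """Kalman update in which the Gaussian likelihood is tempered by w**2."""
    resid = y - H @ mu
    w = imq_weight(resid, R, c)
    R_eff = R / w**2                       # down-weighting inflates the noise
    S = H @ Sigma @ H.T + R_eff            # innovation covariance
    K = np.linalg.solve(S, H @ Sigma).T    # gain: Sigma H^T S^{-1}
    mu_new = mu + K @ resid
    Sigma_new = Sigma - K @ S @ K.T
    return mu_new, Sigma_new, w

# Toy example: identity measurement model in two dimensions.
mu0, Sigma0 = np.zeros(2), np.eye(2)
H, R = np.eye(2), 0.1 * np.eye(2)

mu_in, _, w_in = weighted_kf_update(mu0, Sigma0, np.array([0.1, -0.1]), H, R)
mu_out, _, w_out = weighted_kf_update(mu0, Sigma0, np.array([50.0, 50.0]), H, R)
```

In this toy setting the inlier measurement receives a weight close to one, so the update behaves like a standard Kalman filter, while the gross outlier receives a weight close to zero and barely moves the posterior mean.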

Detecting Toxic Flow

Dec 10, 2023

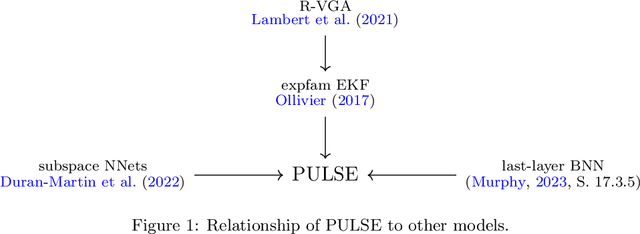

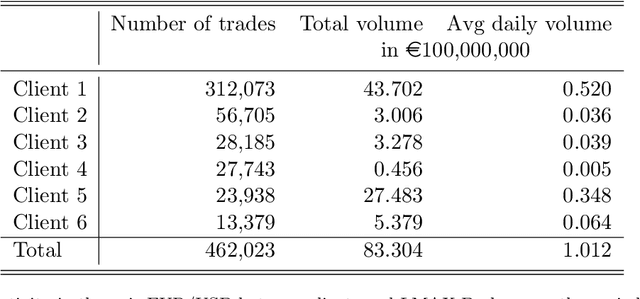

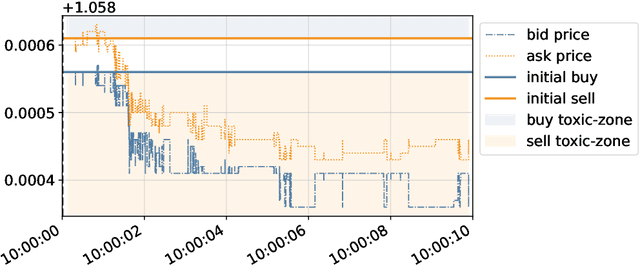

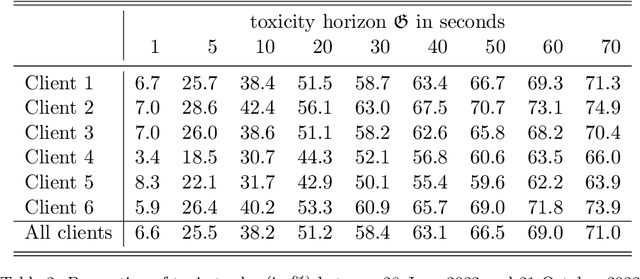

Abstract: This paper develops a framework to predict toxic trades that a broker receives from her clients. Toxic trades are predicted with a novel online Bayesian method which we call the projection-based unification of last-layer and subspace estimation (PULSE). PULSE is a fast and statistically efficient online procedure to train a Bayesian neural network sequentially. We employ a proprietary dataset of foreign exchange transactions to test our methodology. PULSE outperforms standard machine learning and statistical methods when predicting if a trade will be toxic; the benchmark methods are logistic regression, random forests, and a recursively-updated maximum-likelihood estimator. We devise a strategy for the broker who uses toxicity predictions to internalise or to externalise each trade received from her clients. Our methodology can be implemented in real time because it takes less than one millisecond to update parameters and make a prediction. Compared with the benchmarks, PULSE attains the highest PnL and the largest avoided loss for the horizons we consider.

Efficient Online Bayesian Inference for Neural Bandits

Dec 01, 2021

Abstract: In this paper we present a new algorithm for online (sequential) inference in Bayesian neural networks, and show its suitability for tackling contextual bandit problems. The key idea is to combine the extended Kalman filter (which locally linearizes the likelihood function at each time step) with a (learned or random) low-dimensional affine subspace for the parameters; the use of a subspace enables us to scale our algorithm to models with $\sim 1M$ parameters. While most other neural bandit methods need to store the entire past dataset in order to avoid the problem of "catastrophic forgetting", our approach uses constant memory. This is possible because we represent uncertainty about all the parameters in the model, not just the final linear layer. We show good results on the "Deep Bayesian Bandit Showdown" benchmark, as well as MNIST and a recommender system.
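
The key idea above, an extended Kalman filter run on the coordinates of an affine subspace, can be sketched as follows. This is a minimal illustration under stated assumptions: a single tanh unit (`predict`) stands in for the neural network, the projection `A` is random rather than learned, the parameters are treated as static, and the observation is a scalar. All names and settings are hypothetical and do not come from the paper's code.

```python
import numpy as np

def predict(theta, x):
    # Hypothetical stand-in for a neural network: a single tanh unit.
    return np.tanh(theta @ x)

def grad_theta(theta, x):
    # Gradient of predict with respect to the full parameter vector theta.
    return (1.0 - np.tanh(theta @ x) ** 2) * x

def subspace_ekf_step(mu, Sigma, A, b, x, y, obs_var):
    """One EKF update on subspace coordinates z, where theta = A @ z + b."""
    theta = A @ mu + b
    H = grad_theta(theta, x) @ A        # Jacobian with respect to z, shape (d,)
    resid = y - predict(theta, x)       # innovation (scalar)
    S = H @ Sigma @ H + obs_var         # innovation variance (scalar)
    K = Sigma @ H / S                   # Kalman gain, shape (d,)
    mu_new = mu + K * resid
    Sigma_new = Sigma - np.outer(K, H @ Sigma)
    return mu_new, Sigma_new

rng = np.random.default_rng(0)
D, d = 50, 5                                # full and subspace dimensions
A = rng.normal(size=(D, d)) / np.sqrt(D)    # random projection (assumed)
b = np.zeros(D)                             # subspace offset (assumed)
z_true = rng.normal(size=d)

mu, Sigma = np.zeros(d), np.eye(d)          # Gaussian prior on z
for _ in range(200):
    x = rng.normal(size=D)
    y = predict(A @ z_true + b, x) + 0.1 * rng.normal()
    mu, Sigma = subspace_ekf_step(mu, Sigma, A, b, x, y, obs_var=0.01)
```

The filter stores only the `d`-dimensional mean and the `d x d` covariance, so memory stays constant in the number of observations and no past data needs to be kept.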
