Geoffrey JM Parker

DeepBrainPrint: A Novel Contrastive Framework for Brain MRI Re-Identification

Feb 25, 2023

Abstract: Recent advances in MRI have led to the creation of large datasets. As data volume grows, it has become difficult to locate previous scans of the same patient within these datasets (a process known as re-identification). To address this issue, we propose an AI-powered medical image retrieval framework called DeepBrainPrint, which is designed to retrieve brain MRI scans of the same patient. Our framework is a semi-self-supervised contrastive deep learning approach with three main innovations. First, we combine self-supervised and supervised paradigms to create an effective brain fingerprint from MRI scans that can be used for real-time image retrieval. Second, we use a special weighting function to guide training and improve model convergence. Third, we introduce new imaging transformations to improve retrieval robustness in the presence of intensity variations (i.e., different scan contrasts) and to account for age and disease progression in patients. We tested DeepBrainPrint on a large dataset of T1-weighted brain MRIs from the Alzheimer's Disease Neuroimaging Initiative (ADNI) and on a synthetic dataset designed to evaluate retrieval performance across different image modalities. Our results show that DeepBrainPrint outperforms previous methods, including simple similarity metrics and more advanced contrastive deep learning frameworks.
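The abstract describes retrieval as matching a query scan's fingerprint against the fingerprints of previously stored scans. The following is a minimal sketch of that final lookup step only, assuming the trained network has already produced one fixed-length fingerprint vector per scan (the network, its training and its weighting function are not shown) and assuming cosine similarity as the ranking score. The function names and the `(scan_id, patient_id, fingerprint)` record layout are hypothetical, not DeepBrainPrint's actual code.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length fingerprint vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def retrieve(query, gallery, k=1):
    """Hypothetical lookup: rank stored scans by similarity to the query fingerprint.

    `gallery` is assumed to be a list of (scan_id, patient_id, fingerprint) tuples.
    Returns the (scan_id, patient_id) pairs of the k most similar scans.
    """
    ranked = sorted(gallery, key=lambda entry: cosine_similarity(query, entry[2]), reverse=True)
    return [(scan_id, patient_id) for scan_id, patient_id, _ in ranked[:k]]

# Toy fingerprints: two scans of patient P1 and one of patient P2.
gallery = [
    ("s1", "P1", [1.0, 0.0, 0.0]),
    ("s2", "P2", [0.0, 1.0, 0.0]),
    ("s3", "P1", [0.9, 0.1, 0.0]),
]
print(retrieve([1.0, 0.05, 0.0], gallery, k=2))
```

Because the fingerprints are compact vectors, retrieval reduces to a similarity ranking, which is what makes real-time lookup plausible; a large archive would typically swap the linear scan for an approximate nearest-neighbour index.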

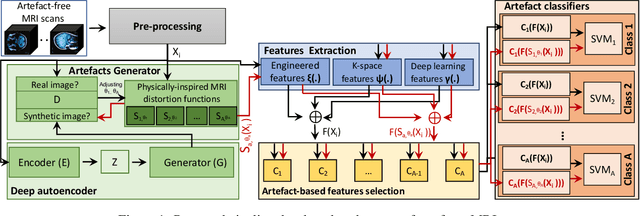

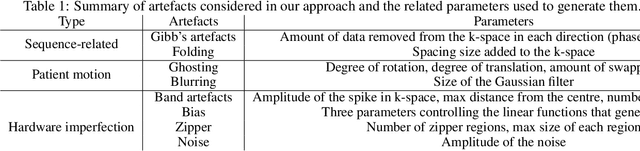

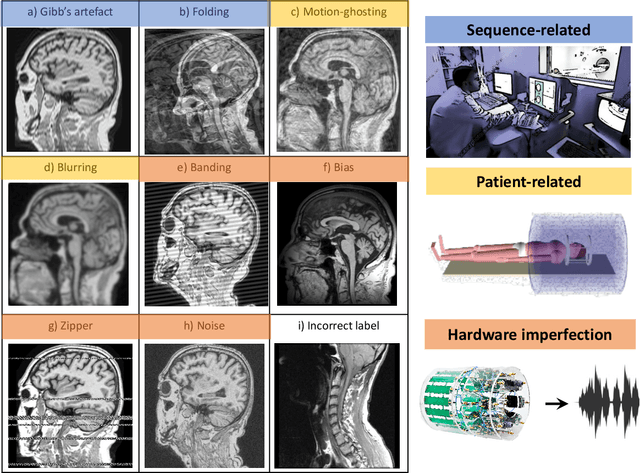

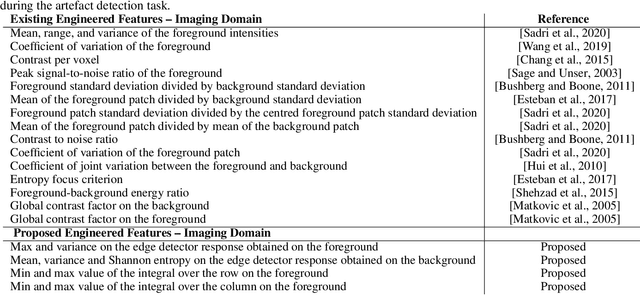

An efficient semi-supervised quality control system trained using physics-based MRI-artefact generators and adversarial training

Jun 07, 2022

Abstract: Large medical imaging data sets are becoming increasingly available. A common challenge in these data sets is ensuring that each sample meets minimum quality requirements and is free of significant artefacts. Although a wide range of automatic methods has been developed to identify imperfections and artefacts in medical images, most of them are data-hungry. In particular, the lack of sufficient scans with artefacts available for training has been a barrier to designing and deploying machine learning in clinical research. To tackle this problem, we propose a novel framework with four main components: (1) a set of artefact generators inspired by magnetic resonance physics to corrupt brain MRI scans and augment a training dataset, (2) a set of abstract and engineered features to represent images compactly, (3) a feature selection process that depends on the class of artefact to improve classification performance, and (4) a set of Support Vector Machine (SVM) classifiers trained to identify artefacts. Our novel contributions are threefold. First, we use the novel physics-based artefact generators to generate synthetic brain MRI scans with controlled artefacts as a data augmentation technique, which avoids the labour-intensive collection and labelling of scans with rare artefacts. Second, we propose a large pool of abstract and engineered image features developed to identify 9 different artefacts in structural MRI. Finally, we use an artefact-based feature selection block that, for each class of artefacts, finds the set of features that provides the best classification performance. We performed validation experiments on a large data set of scans with artificially generated artefacts, and in a multiple sclerosis clinical trial where real artefacts were identified by experts, showing that the proposed pipeline outperforms traditional methods.
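The abstract does not detail its physics-based artefact generators. As an illustration of the general idea only, the sketch below assumes a textbook model of Nyquist (N/2) ghosting: a constant phase error applied to every other phase-encode line in k-space produces a faint copy of the image shifted by half the field of view. The function `add_ghosting` and its parameters are hypothetical and are not the authors' generator.

```python
import numpy as np

def add_ghosting(image, phase_error=0.3, period=2):
    """Hypothetical ghosting generator for a 2D magnitude image.

    Rows of the (centred) k-space array are treated as phase-encode lines;
    every `period`-th line is multiplied by exp(1j * phase_error).
    With period=2 this yields a ghost shifted by half the image height.
    """
    kspace = np.fft.fftshift(np.fft.fft2(image))
    corrupted = kspace.copy()
    corrupted[::period, :] *= np.exp(1j * phase_error)
    # Back to image space; keep the magnitude, as in a typical reconstruction.
    return np.abs(np.fft.ifft2(np.fft.ifftshift(corrupted)))

# Toy "scan": a bright square in the centre of a 64 x 64 image.
img = np.zeros((64, 64))
img[24:40, 24:40] = 1.0
ghosted = add_ghosting(img, phase_error=1.0)
```

With `period=2` the original signal is attenuated to cos(phase_error / 2) and a ghost of amplitude sin(phase_error / 2) appears half a field of view away, so a single parameter controls artefact severity, which is convenient when generating labelled training data with controlled artefacts.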

The challenges of deploying artificial intelligence models in a rapidly evolving pandemic

May 19, 2020

Abstract: The COVID-19 pandemic, caused by severe acute respiratory syndrome coronavirus 2, emerged into a world being rapidly transformed by artificial intelligence (AI) based on big data, computational power and neural networks. The gaze of these networks has in recent years turned increasingly towards applications in healthcare. It was perhaps inevitable that COVID-19, a global disease propagating health and economic devastation, should capture the attention and resources of the world's computer scientists in academia and industry. The potential for AI to support the response to the pandemic has been proposed across a wide range of clinical and societal challenges, including disease forecasting, surveillance and antiviral drug discovery. This is likely to continue as the impact of the pandemic unfolds on the world's people, industries and economy. However, a surprising observation on the current pandemic has been the limited impact AI has had to date on the management of COVID-19. This correspondence explores potential reasons behind the lack of successful adoption, in front-line healthcare services, of AI models developed for COVID-19 diagnosis and prognosis. We highlight the shifting clinical needs that models have had to address at different stages of the epidemic, and explain the importance of translating models to reflect local healthcare environments. We argue that both basic and applied research are essential to realise the potential of AI models, particularly during a rapidly evolving pandemic. This perspective on the response to COVID-19 may provide a glimpse into how the global scientific community should react to combat future disease outbreaks more effectively.