Genggeng Chen

Boosting HDR Image Reconstruction via Semantic Knowledge Transfer

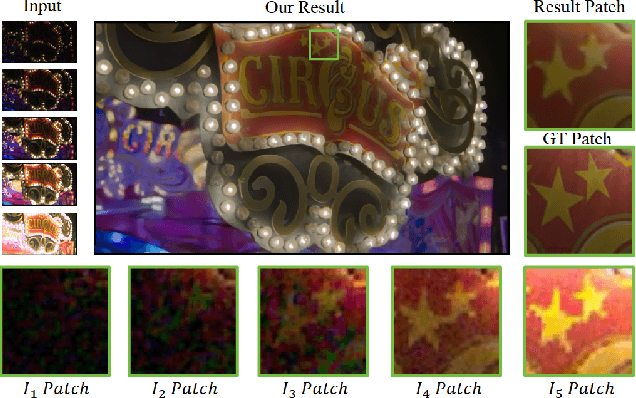

Mar 19, 2025Abstract:Recovering High Dynamic Range (HDR) images from multiple Low Dynamic Range (LDR) images becomes challenging when the LDR images exhibit noticeable degradation and missing content. Leveraging scene-specific semantic priors offers a promising solution for restoring heavily degraded regions. However, these priors are typically extracted from sRGB Standard Dynamic Range (SDR) images, the domain/format gap poses a significant challenge when applying it to HDR imaging. To address this issue, we propose a general framework that transfers semantic knowledge derived from SDR domain via self-distillation to boost existing HDR reconstruction. Specifically, the proposed framework first introduces the Semantic Priors Guided Reconstruction Model (SPGRM), which leverages SDR image semantic knowledge to address ill-posed problems in the initial HDR reconstruction results. Subsequently, we leverage a self-distillation mechanism that constrains the color and content information with semantic knowledge, aligning the external outputs between the baseline and SPGRM. Furthermore, to transfer the semantic knowledge of the internal features, we utilize a semantic knowledge alignment module (SKAM) to fill the missing semantic contents with the complementary masks. Extensive experiments demonstrate that our method can significantly improve the HDR imaging quality of existing methods.

Bracketing Image Restoration and Enhancement with High-Low Frequency Decomposition

Apr 24, 2024

Abstract:In real-world scenarios, due to a series of image degradations, obtaining high-quality, clear content photos is challenging. While significant progress has been made in synthesizing high-quality images, previous methods for image restoration and enhancement often overlooked the characteristics of different degradations. They applied the same structure to address various types of degradation, resulting in less-than-ideal restoration outcomes. Inspired by the notion that high/low frequency information is applicable to different degradations, we introduce HLNet, a Bracketing Image Restoration and Enhancement method based on high-low frequency decomposition. Specifically, we employ two modules for feature extraction: shared weight modules and non-shared weight modules. In the shared weight modules, we use SCConv to extract common features from different degradations. In the non-shared weight modules, we introduce the High-Low Frequency Decomposition Block (HLFDB), which employs different methods to handle high-low frequency information, enabling the model to address different degradations more effectively. Compared to other networks, our method takes into account the characteristics of different degradations, thus achieving higher-quality image restoration.

CRNet: A Detail-Preserving Network for Unified Image Restoration and Enhancement Task

Apr 22, 2024

Abstract:In real-world scenarios, images captured often suffer from blurring, noise, and other forms of image degradation, and due to sensor limitations, people usually can only obtain low dynamic range images. To achieve high-quality images, researchers have attempted various image restoration and enhancement operations on photographs, including denoising, deblurring, and high dynamic range imaging. However, merely performing a single type of image enhancement still cannot yield satisfactory images. In this paper, to deal with the challenge above, we propose the Composite Refinement Network (CRNet) to address this issue using multiple exposure images. By fully integrating information-rich multiple exposure inputs, CRNet can perform unified image restoration and enhancement. To improve the quality of image details, CRNet explicitly separates and strengthens high and low-frequency information through pooling layers, using specially designed Multi-Branch Blocks for effective fusion of these frequencies. To increase the receptive field and fully integrate input features, CRNet employs the High-Frequency Enhancement Module, which includes large kernel convolutions and an inverted bottleneck ConvFFN. Our model secured third place in the first track of the Bracketing Image Restoration and Enhancement Challenge, surpassing previous SOTA models in both testing metrics and visual quality.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge