Gavin Parpart

Event-to-Video Conversion for Overhead Object Detection

Feb 09, 2024

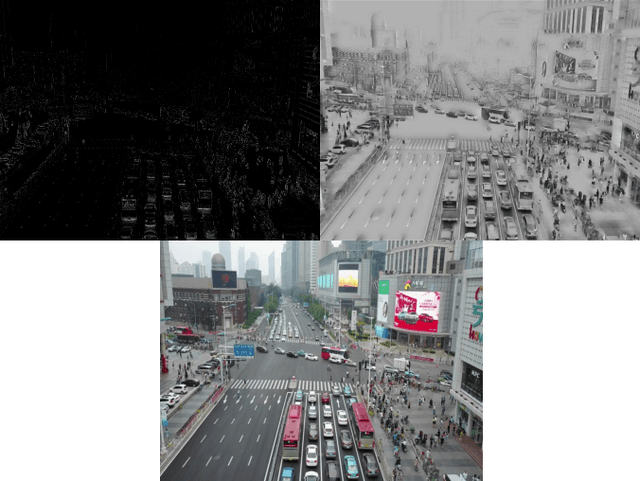

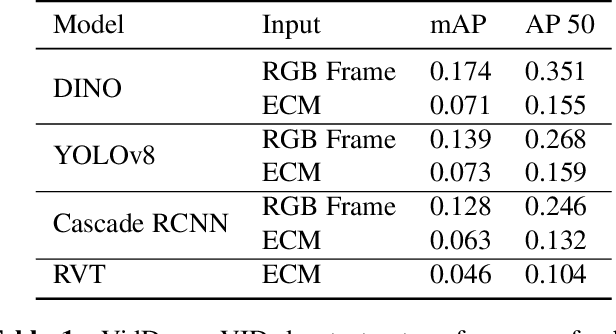

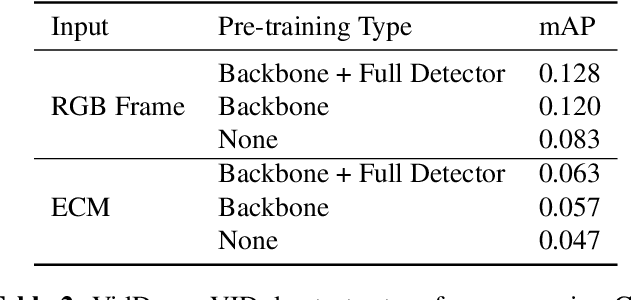

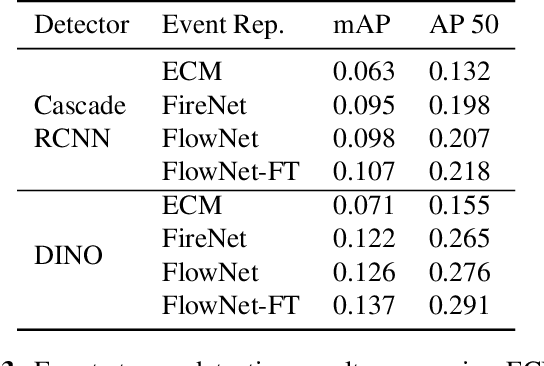

Abstract:Collecting overhead imagery using an event camera is desirable due to the energy efficiency of the image sensor compared to standard cameras. However, event cameras complicate downstream image processing, especially for complex tasks such as object detection. In this paper, we investigate the viability of event streams for overhead object detection. We demonstrate that across a number of standard modeling approaches, there is a significant gap in performance between dense event representations and corresponding RGB frames. We establish that this gap is, in part, due to a lack of overlap between the event representations and the pre-training data used to initialize the weights of the object detectors. Then, we apply event-to-video conversion models that convert event streams into gray-scale video to close this gap. We demonstrate that this approach results in a large performance increase, outperforming even event-specific object detection techniques on our overhead target task. These results suggest that better alignment between event representations and existing large pre-trained models may result in greater short-term performance gains compared to end-to-end event-specific architectural improvements.

Implementing and Benchmarking the Locally Competitive Algorithm on the Loihi 2 Neuromorphic Processor

Jul 25, 2023Abstract:Neuromorphic processors have garnered considerable interest in recent years for their potential in energy-efficient and high-speed computing. The Locally Competitive Algorithm (LCA) has been utilized for power efficient sparse coding on neuromorphic processors, including the first Loihi processor. With the Loihi 2 processor enabling custom neuron models and graded spike communication, more complex implementations of LCA are possible. We present a new implementation of LCA designed for the Loihi 2 processor and perform an initial set of benchmarks comparing it to LCA on CPU and GPU devices. In these experiments LCA on Loihi 2 is orders of magnitude more efficient and faster for large sparsity penalties, while maintaining similar reconstruction quality. We find this performance improvement increases as the LCA parameters are tuned towards greater representation sparsity. Our study highlights the potential of neuromorphic processors, particularly Loihi 2, in enabling intelligent, autonomous, real-time processing on small robots, satellites where there are strict SWaP (small, lightweight, and low power) requirements. By demonstrating the superior performance of LCA on Loihi 2 compared to conventional computing device, our study suggests that Loihi 2 could be a valuable tool in advancing these types of applications. Overall, our study highlights the potential of neuromorphic processors for efficient and accurate data processing on resource-constrained devices.

Dictionary Learning with Accumulator Neurons

May 30, 2022

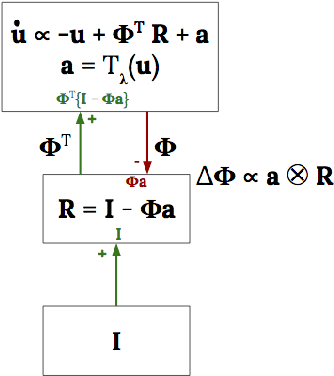

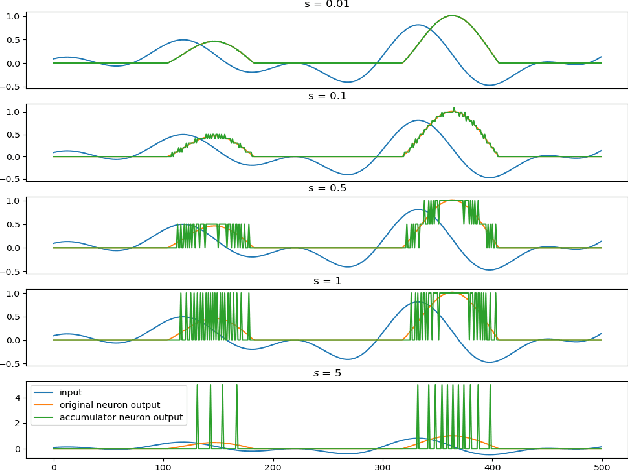

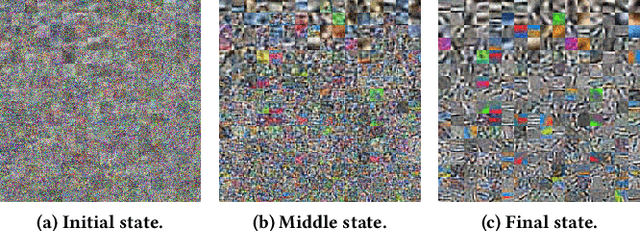

Abstract:The Locally Competitive Algorithm (LCA) uses local competition between non-spiking leaky integrator neurons to infer sparse representations, allowing for potentially real-time execution on massively parallel neuromorphic architectures such as Intel's Loihi processor. Here, we focus on the problem of inferring sparse representations from streaming video using dictionaries of spatiotemporal features optimized in an unsupervised manner for sparse reconstruction. Non-spiking LCA has previously been used to achieve unsupervised learning of spatiotemporal dictionaries composed of convolutional kernels from raw, unlabeled video. We demonstrate how unsupervised dictionary learning with spiking LCA (\hbox{S-LCA}) can be efficiently implemented using accumulator neurons, which combine a conventional leaky-integrate-and-fire (\hbox{LIF}) spike generator with an additional state variable that is used to minimize the difference between the integrated input and the spiking output. We demonstrate dictionary learning across a wide range of dynamical regimes, from graded to intermittent spiking, for inferring sparse representations of both static images drawn from the CIFAR database as well as video frames captured from a DVS camera. On a classification task that requires identification of the suite from a deck of cards being rapidly flipped through as viewed by a DVS camera, we find essentially no degradation in performance as the LCA model used to infer sparse spatiotemporal representations migrates from graded to spiking. We conclude that accumulator neurons are likely to provide a powerful enabling component of future neuromorphic hardware for implementing online unsupervised learning of spatiotemporal dictionaries optimized for sparse reconstruction of streaming video from event based DVS cameras.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge