Firas Shama

Crowd Source Scene Change Detection and Local Map Update

Mar 10, 2022

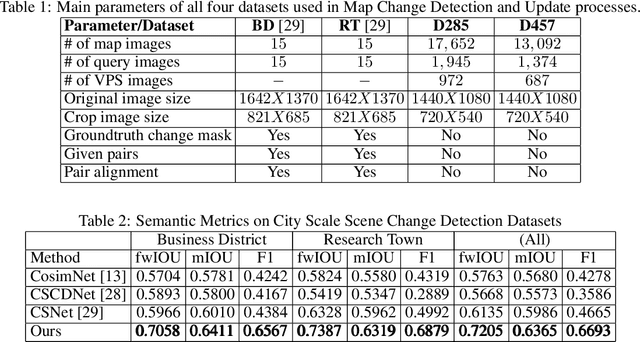

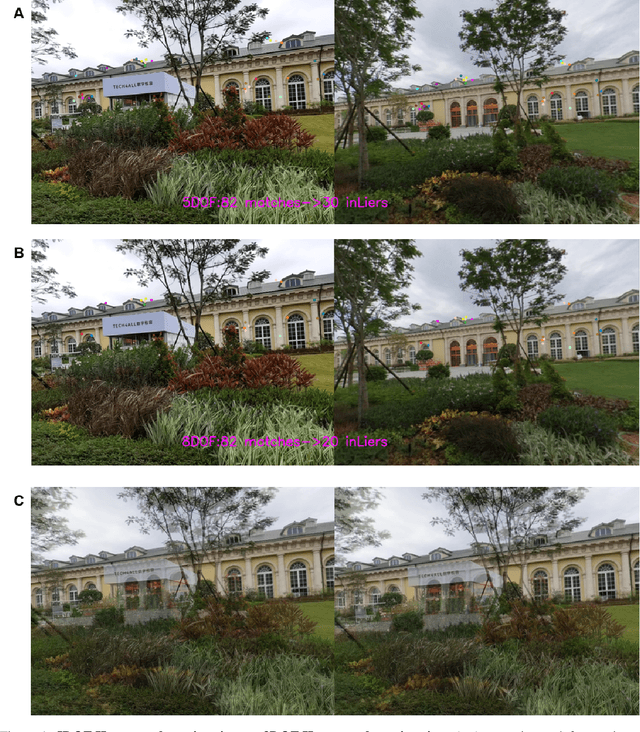

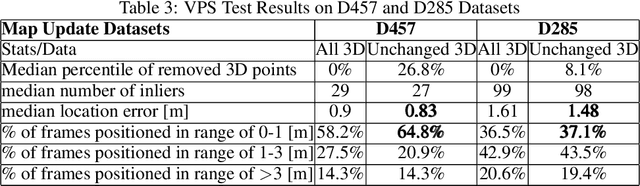

Abstract:As scene changes with time map descriptors become outdated, affecting VPS localization accuracy. In this work, we propose an approach to detect structural and texture scene changes to be followed by map update. In our method - map includes 3D points with descriptors generated either via LiDAR or SFM. Common approaches suffer from shortcomings: 1) Direct comparison of the two point-clouds for change detection is slow due to the need to build new point-cloud every time we want to compare; 2) Image based comparison requires to keep the map images adding substantial storage overhead. To circumvent this problems, we propose an approach based on point-clouds descriptors comparison: 1) Based on VPS poses select close query and map images pairs, 2) Registration of query images to map image descriptors, 3) Use segmentation to filter out dynamic or short term temporal changes, 4) Compare the descriptors between corresponding segments.

Adversarial Feedback Loop

Nov 20, 2018

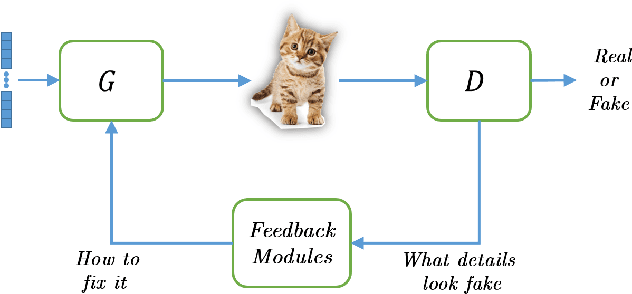

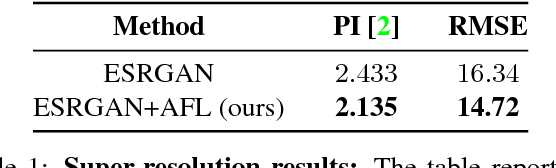

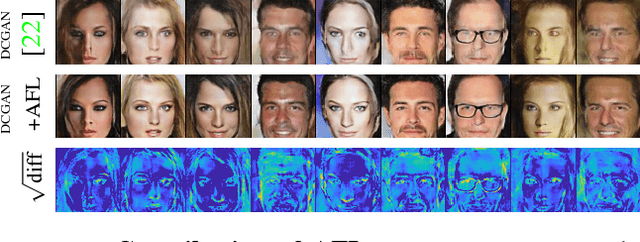

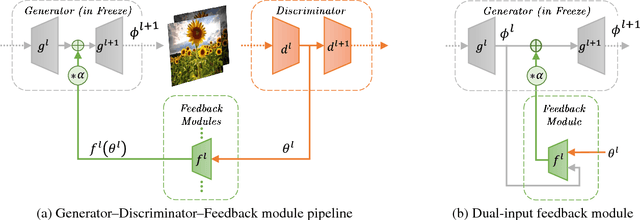

Abstract:Thanks to their remarkable generative capabilities, GANs have gained great popularity, and are used abundantly in state-of-the-art methods and applications. In a GAN based model, a discriminator is trained to learn the real data distribution. To date, it has been used only for training purposes, where it's utilized to train the generator to provide real-looking outputs. In this paper we propose a novel method that makes an explicit use of the discriminator in test-time, in a feedback manner in order to improve the generator results. To the best of our knowledge it is the first time a discriminator is involved in test-time. We claim that the discriminator holds significant information on the real data distribution, that could be useful for test-time as well, a potential that has not been explored before. The approach we propose does not alter the conventional training stage. At test-time, however, it transfers the output from the generator into the discriminator, and uses feedback modules (convolutional blocks) to translate the features of the discriminator layers into corrections to the features of the generator layers, which are used eventually to get a better generator result. Our method can contribute to both conditional and unconditional GANs. As demonstrated by our experiments, it can improve the results of state-of-the-art networks for super-resolution, and image generation.

Maintaining Natural Image Statistics with the Contextual Loss

Jul 18, 2018

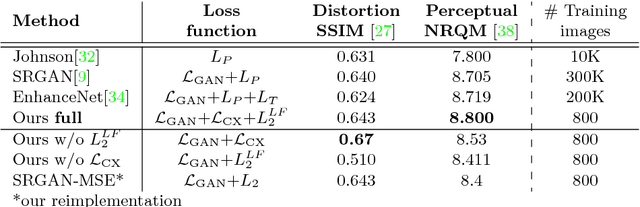

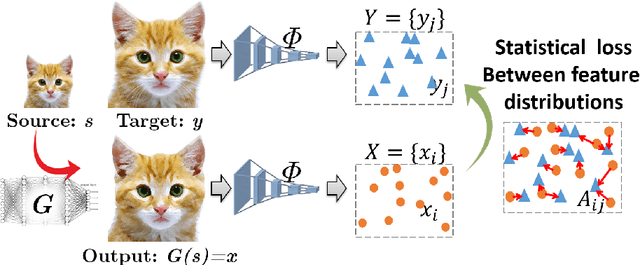

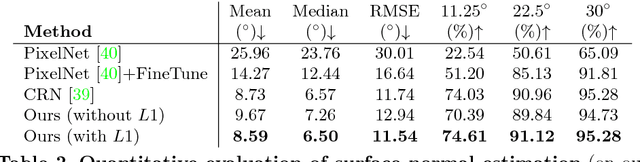

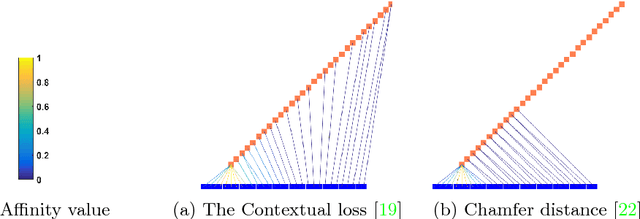

Abstract:Maintaining natural image statistics is a crucial factor in restoration and generation of realistic looking images. When training CNNs, photorealism is usually attempted by adversarial training (GAN), that pushes the output images to lie on the manifold of natural images. GANs are very powerful, but not perfect. They are hard to train and the results still often suffer from artifacts. In this paper we propose a complementary approach, that could be applied with or without GAN, whose goal is to train a feed-forward CNN to maintain natural internal statistics. We look explicitly at the distribution of features in an image and train the network to generate images with natural feature distributions. Our approach reduces by orders of magnitude the number of images required for training and achieves state-of-the-art results on both single-image super-resolution, and high-resolution surface normal estimation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge