Feifan Lv

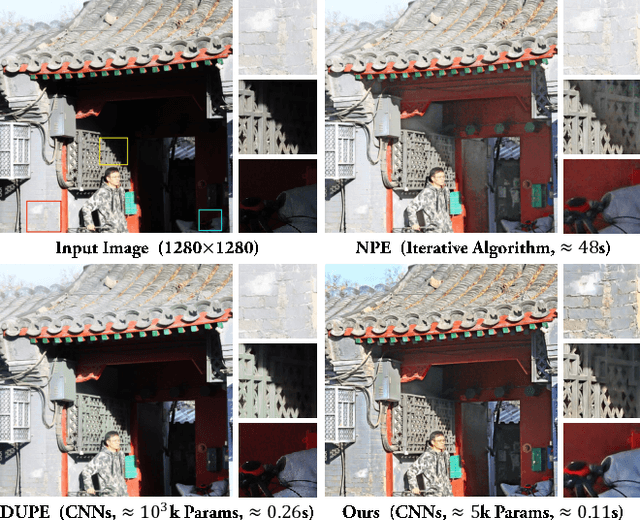

Fast Enhancement for Non-Uniform Illumination Images using Light-weight CNNs

May 31, 2020

Abstract: This paper proposes a new light-weight convolutional neural network (5k parameters) for non-uniform illumination image enhancement that handles color, exposure, contrast, noise, and artifacts simultaneously and effectively. More concretely, the input image is first enhanced using the Retinex model from two different aspects (enhancing under-exposure and suppressing over-exposure). Then, these two enhanced results and the original image are fused to obtain an image with satisfactory brightness, contrast, and details. Finally, the extra noise and compression artifacts are removed to get the final result. To train this network, we propose a semi-supervised retouching solution and construct a new dataset (82k images) that contains various scenes and lighting conditions. Our model can enhance 0.5-megapixel (e.g., 600×800) images in real time (50 fps), which is faster than existing enhancement methods. Extensive experiments show that our solution is fast and effective at handling non-uniform illumination images.
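The enhance-twice-then-fuse idea in this abstract can be illustrated with a classical, hand-written stand-in. The sketch below is not the paper's learned network: it assumes a simple Retinex decomposition (image = reflectance × illumination) with the illumination estimated as the per-pixel channel maximum, suppresses over-exposure by applying the same step to the inverted image, and fuses the three candidates with hand-picked well-exposedness weights. The function names (`retinex_brighten`, `suppress_overexposure`, `fuse`) and the parameters `gamma`, `eps`, and `sigma` are hypothetical, chosen only for illustration.

```python
import numpy as np

def retinex_brighten(img, gamma=0.6, eps=1e-3):
    """Brighten dark regions of a float RGB image in [0, 1].

    Assumes I = R * L, estimates L as the per-pixel channel maximum,
    and returns R * L**gamma, which equals I * L**(gamma - 1).
    """
    illum = np.clip(img.max(axis=2, keepdims=True), eps, 1.0)
    return img * illum ** (gamma - 1.0)

def suppress_overexposure(img, gamma=0.6):
    """Darken bright regions by brightening the inverted image."""
    return 1.0 - retinex_brighten(1.0 - img, gamma)

def fuse(candidates, sigma=0.2):
    """Blend candidates per pixel, favouring intensities near mid-gray.

    This hand-written weighting is an assumption; the paper learns its fusion.
    """
    stack = np.stack(candidates)                    # (k, H, W, 3)
    lum = stack.mean(axis=3, keepdims=True)         # (k, H, W, 1)
    weights = np.exp(-((lum - 0.5) ** 2) / (2 * sigma ** 2))
    weights /= weights.sum(axis=0, keepdims=True)   # normalise over candidates
    return (weights * stack).sum(axis=0)

def enhance(img):
    """Fuse the original with its brightened and darkened versions."""
    return fuse([img, retinex_brighten(img), suppress_overexposure(img)])
```

Calling `enhance(img)` on an `(H, W, 3)` float array in `[0, 1]` returns an array of the same shape and range. The denoising and artifact-removal stage described in the abstract is omitted here.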

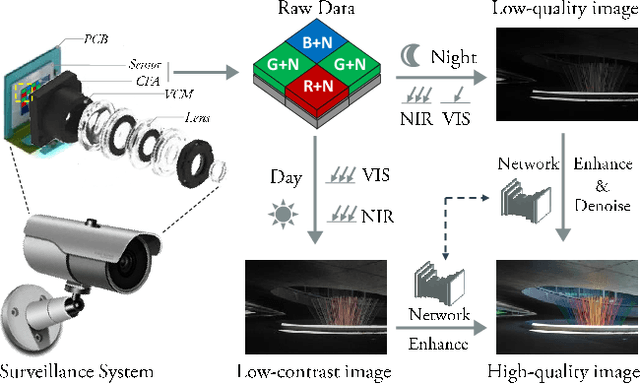

An Integrated Enhancement Solution for 24-hour Colorful Imaging

May 10, 2020

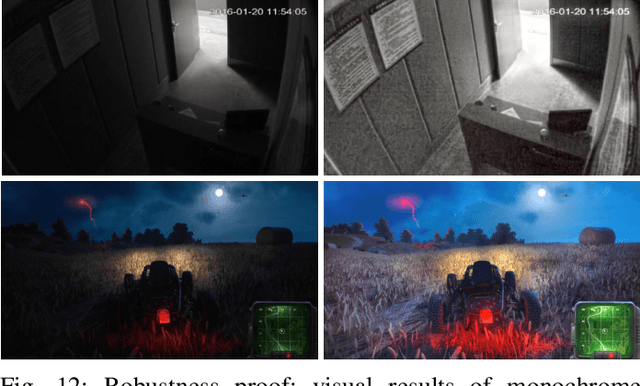

Abstract: The current industry practice for 24-hour outdoor imaging is to use a silicon camera supplemented with near-infrared (NIR) illumination. This results in color images with poor contrast in the daytime and an absence of chrominance at nighttime. To address this dilemma, all existing solutions try to capture RGB and NIR images separately. However, they need additional hardware support and suffer from various drawbacks, including short service life, high price, and restriction to specific usage scenarios. In this paper, we propose a novel, integrated enhancement solution that produces clear color images in both abundant daytime sunlight and extremely low-light nighttime conditions. Our key idea is to separate the VIS and NIR information from mixed signals, and to enhance the VIS signal adaptively with the NIR signal as assistance. To this end, we build an optical system to collect a new VIS-NIR-MIX dataset and present a physically meaningful image processing algorithm based on a CNN. Extensive experiments show outstanding results, which demonstrate the effectiveness of our solution.

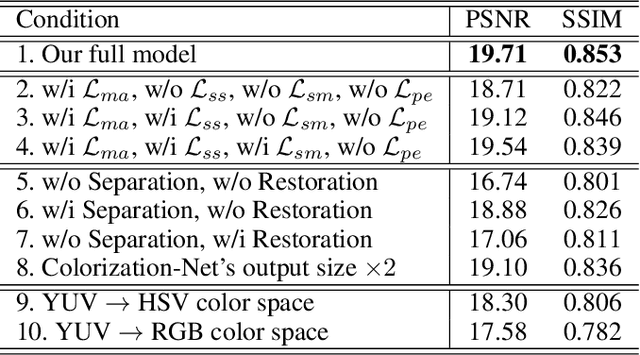

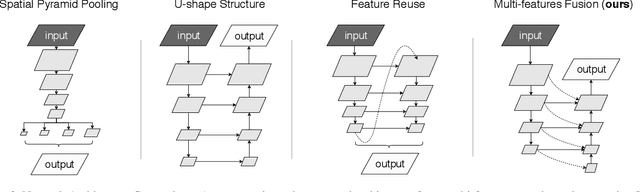

Real-Time Semantic Segmentation via Multiply Spatial Fusion Network

Nov 17, 2019

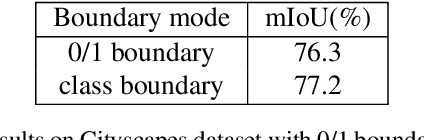

Abstract: Real-time semantic segmentation plays a significant role in industry applications such as autonomous driving and robotics. It is a challenging task because both efficiency and performance need to be considered simultaneously. To address such a complex task, this paper proposes an efficient CNN called Multiply Spatial Fusion Network (MSFNet) to achieve fast and accurate perception. The proposed MSFNet uses Class Boundary Supervision to process the relevant boundary information based on our proposed Multi-features Fusion Module, which can obtain spatial information and enlarge the receptive field. Therefore, the final upsampling of feature maps at 1/8 of the original image size can achieve impressive results while maintaining a high speed. Experiments on the Cityscapes and CamVid datasets show an obvious advantage of the proposed approach compared with existing approaches. Specifically, it achieves 77.1% mean IoU on the Cityscapes test dataset at a speed of 41 FPS for a 1024×2048 input, and 75.4% mean IoU at a speed of 91 FPS on the CamVid test dataset.
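For readers unfamiliar with the mean IoU figures quoted above, the metric averages, over classes, the ratio of correctly labeled pixels to the union of predicted and ground-truth pixels for that class. The following is a minimal sketch of the standard definition, not the benchmark's official evaluation script; the function name `mean_iou` is hypothetical, and ignore labels, which Cityscapes uses, are not handled.

```python
import numpy as np

def mean_iou(pred, target, num_classes):
    """Mean intersection-over-union between two integer label maps.

    Classes absent from both prediction and ground truth are skipped.
    """
    pred = np.asarray(pred).ravel()
    target = np.asarray(target).ravel()
    # Confusion matrix: rows are ground-truth classes, columns are predictions.
    conf = np.bincount(
        num_classes * target + pred, minlength=num_classes ** 2
    ).reshape(num_classes, num_classes)
    intersection = np.diag(conf)
    union = conf.sum(axis=0) + conf.sum(axis=1) - intersection
    valid = union > 0
    return float((intersection[valid] / union[valid]).mean())
```

For a 2×2 label map with ground truth `[[0, 0], [1, 1]]` and prediction `[[0, 1], [1, 1]]`, class 0 has IoU 1/2 and class 1 has IoU 2/3, so the mean IoU is 7/12.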

Attention-guided Low-light Image Enhancement

Aug 02, 2019

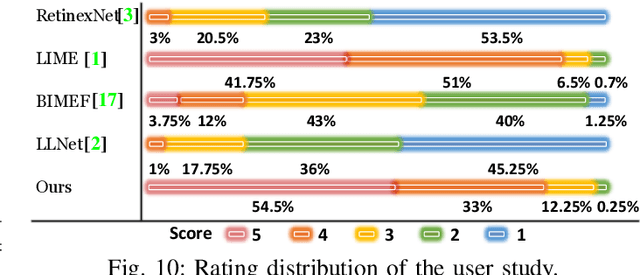

Abstract: Low-light image enhancement is a challenging task since various factors, including brightness, contrast, artifacts, and noise, should be handled simultaneously and effectively. To address such a difficult problem, this paper proposes a novel attention-guided enhancement solution and delivers the corresponding end-to-end multi-branch CNNs. The key to our method is the computation of two attention maps to guide the exposure enhancement and denoising, respectively. In particular, the first attention map distinguishes underexposed regions from normally exposed regions, while the second attention map distinguishes noise from real-world textures. Under their guidance, the proposed multi-branch enhancement network can work in an adaptive way. Other contributions of this paper include the "decomposition/multi-branch-enhancement/fusion" design of the enhancement network, the reinforcement-net for contrast enhancement, and the proposed large-scale low-light enhancement dataset. We evaluate the proposed method through extensive experiments, and the results demonstrate that our solution outperforms state-of-the-art methods by a large margin. We additionally show that our method is flexible and effective for other image processing tasks.

Pathological Evidence Exploration in Deep Retinal Image Diagnosis

Dec 06, 2018

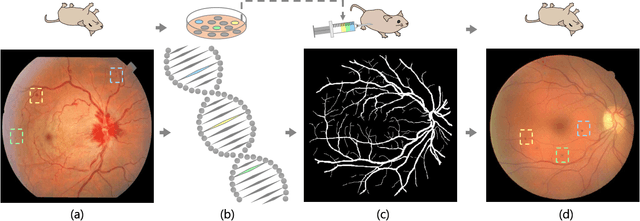

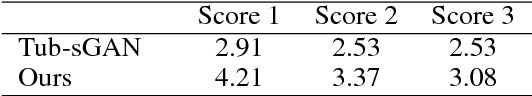

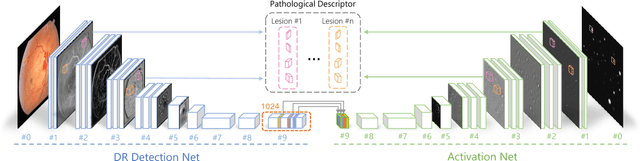

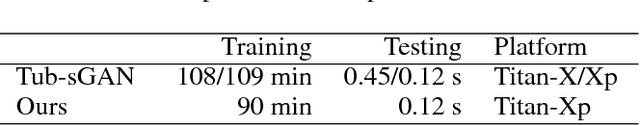

Abstract: Though deep learning has shown successful performance in classifying the label and severity stage of certain diseases, most such models give little evidence of how they arrive at a prediction. Here, we propose to exploit the interpretability of deep learning applications in medical diagnosis. Inspired by Koch's Postulates, a well-known strategy in medical research for identifying the properties of a pathogen, we define a pathological descriptor that can be extracted from the activated neurons of a diabetic retinopathy detector. To visualize the symptoms and features encoded in this descriptor, we propose a GAN-based method to synthesize pathological retinal images given the descriptor and a binary vessel segmentation. Moreover, with this descriptor, we can arbitrarily manipulate the position and quantity of lesions. As verified by a panel of 5 licensed ophthalmologists, our synthesized images carry the symptoms that are directly related to diabetic retinopathy diagnosis. The panel survey also shows that our generated images are both qualitatively and quantitatively superior to those of existing methods.