Fatemeh Rahimian

DiffPAD: Denoising Diffusion-based Adversarial Patch Decontamination

Oct 31, 2024Abstract:In the ever-evolving adversarial machine learning landscape, developing effective defenses against patch attacks has become a critical challenge, necessitating reliable solutions to safeguard real-world AI systems. Although diffusion models have shown remarkable capacity in image synthesis and have been recently utilized to counter $\ell_p$-norm bounded attacks, their potential in mitigating localized patch attacks remains largely underexplored. In this work, we propose DiffPAD, a novel framework that harnesses the power of diffusion models for adversarial patch decontamination. DiffPAD first performs super-resolution restoration on downsampled input images, then adopts binarization, dynamic thresholding scheme and sliding window for effective localization of adversarial patches. Such a design is inspired by the theoretically derived correlation between patch size and diffusion restoration error that is generalized across diverse patch attack scenarios. Finally, DiffPAD applies inpainting techniques to the original input images with the estimated patch region being masked. By integrating closed-form solutions for super-resolution restoration and image inpainting into the conditional reverse sampling process of a pre-trained diffusion model, DiffPAD obviates the need for text guidance or fine-tuning. Through comprehensive experiments, we demonstrate that DiffPAD not only achieves state-of-the-art adversarial robustness against patch attacks but also excels in recovering naturalistic images without patch remnants.

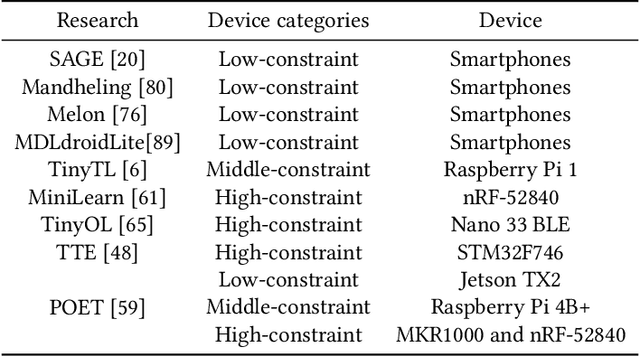

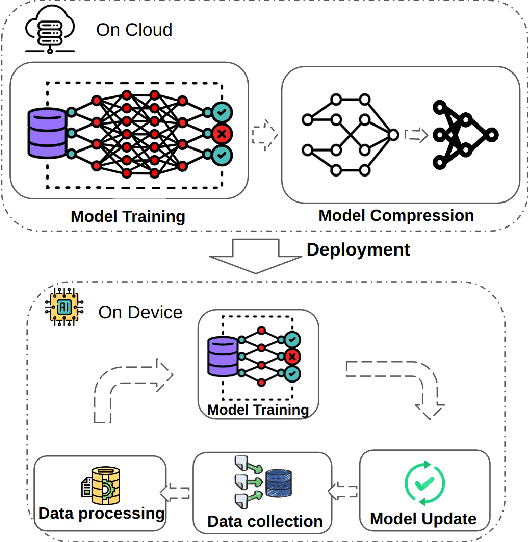

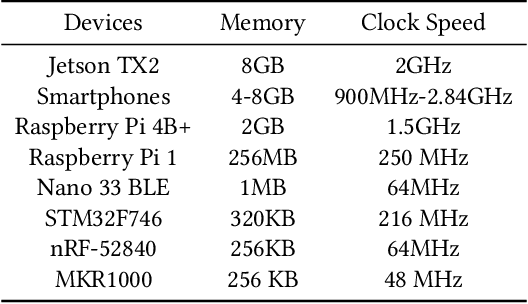

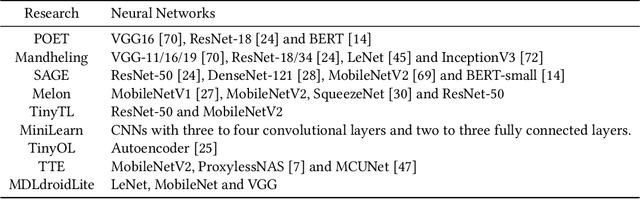

On-device Training: A First Overview on Existing Systems

Dec 01, 2022

Abstract:The recent breakthroughs in machine learning (ML) and deep learning (DL) have enabled many new capabilities across plenty of application domains. While most existing machine learning models require large memory and computing power, efforts have been made to deploy some models on resource-constrained devices as well. There are several systems that perform inference on the device, while direct training on the device still remains a challenge. On-device training, however, is attracting more and more interest because: (1) it enables training models on local data without needing to share data over the cloud, thus enabling privacy preserving computation by design; (2) models can be refined on devices to provide personalized services and cope with model drift in order to adapt to the changes of the real-world environment; and (3) it enables the deployment of models in remote, hardly accessible locations or places without stable internet connectivity. We summarize and analyze the-state-of-art systems research to provide the first survey of on-device training from a systems perspective.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge