Fabrizio Lombardi

Stochastic Streets: A Walk Through Random LLM Address Generation in four European Cities

Sep 16, 2025

Abstract: Large Language Models (LLMs) can solve complex math problems or answer difficult questions on almost any topic, but can they generate random street addresses for European cities?

Can ChatGPT Learn to Count Letters?

Feb 23, 2025

Abstract: Large language models (LLMs) struggle with simple tasks such as counting the number of occurrences of a letter in a word. In this paper, we investigate whether ChatGPT can learn to count letters and propose an efficient solution.

Understanding the Impact of Artificial Intelligence in Academic Writing: Metadata to the Rescue

Feb 23, 2025

Abstract: This column advocates including artificial intelligence (AI)-specific metadata in academic papers written with the help of AI, so that the use of such tools for disseminating research can be analyzed.

Speed and Conversational Large Language Models: Not All Is About Tokens per Second

Feb 23, 2025

Abstract: The speed of open-weights large language models (LLMs) running on GPUs, and its dependence on the task at hand, is studied to present a comparative analysis of the most popular open LLMs.

Adaptive Resolution Inference (ARI): Energy-Efficient Machine Learning for Internet of Things

Aug 26, 2024

Abstract: The implementation of machine learning (ML) in Internet of Things (IoT) devices poses significant operational challenges due to limited energy and computation resources. In recent years, significant efforts have been made to implement simplified ML models that achieve reasonable performance while reducing computation and energy, for example by pruning weights in neural networks or by using reduced precision for the parameters and arithmetic operations. However, this type of approach is limited by the performance of the ML implementation, i.e., by the loss (for example, in accuracy) caused by the model simplification. In this article, we present adaptive resolution inference (ARI), a novel approach that makes it possible to evaluate new trade-offs between energy dissipation and model performance in ML implementations. The main principle of the proposed approach is to run inferences with reduced precision (quantization) and to use the margin over the decision threshold to determine whether the result is reliable or the inference must be run with the full model. The rationale is that quantization introduces only small deviations in the inference scores, so if the scores have a sufficient margin over the decision threshold, it is unlikely that the full model would produce a different result. Therefore, the quantized model is run first, and the full model is run only when the scores do not have a sufficient margin. This enables most inferences to run with the reduced-precision model and only a small fraction to require the full model, thus significantly reducing computation and energy without affecting model performance. The proposed ARI approach is presented, analyzed in detail, and evaluated using different data sets for floating-point and stochastic computing implementations. The results show that ARI can significantly reduce inference energy in different configurations, with savings between 40% and 85%.
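The ARI decision rule described above can be illustrated with a minimal sketch. It assumes a binary classifier whose score in [0, 1] is compared against a threshold. The toy logistic "models", the use of parameter rounding as "quantization", and the `margin` value of 0.1 are all hypothetical stand-ins chosen for illustration, not the paper's models or settings.

```python
import math
from typing import Callable, Tuple

Model = Callable[[float], float]  # maps an input to a score in [0, 1]


def make_logistic(weight: float, bias: float) -> Model:
    """Toy one-feature logistic 'model' (hypothetical stand-in for a real network)."""
    def model(x: float) -> float:
        return 1.0 / (1.0 + math.exp(-(weight * x + bias)))
    return model


def ari_infer(x: float, quantized: Model, full: Model,
              threshold: float = 0.5, margin: float = 0.1) -> Tuple[bool, bool]:
    """Return (decision, used_full_model) following the ARI idea."""
    score = quantized(x)                      # cheap reduced-precision pass
    if abs(score - threshold) >= margin:      # far from the threshold: accept it
        return score > threshold, False
    score = full(x)                           # too close: run the full-precision model
    return score > threshold, True


W, B = 1.2345, -0.4321
full_model = make_logistic(W, B)
# Hypothetical "quantization": round the parameters to one decimal place.
quantized_model = make_logistic(round(W, 1), round(B, 1))

inputs = [-3.0, -1.0, 0.35, 1.0, 3.0]
results = [ari_infer(x, quantized_model, full_model) for x in inputs]
full_runs = sum(used_full for _, used_full in results)
```

In this toy setup, only the input 0.35 falls inside the margin. There, the quantized score (about 0.505) would flip the decision relative to the full model, so that is exactly the case where the fallback is needed. The other four inputs are resolved by the cheap model alone.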

Dependability Evaluation of Stable Diffusion with Soft Errors on the Model Parameters

Mar 30, 2024

Abstract: Stable Diffusion is a popular Transformer-based model for generating images from text; it applies an image information creator to the input text, and visual knowledge is added step by step to create an image that corresponds to the input text. However, this diffusion process can be corrupted by errors from the underlying hardware, which are especially relevant for implementations at the nanoscale. In this paper, the dependability of Stable Diffusion is studied with a focus on soft errors in the memory that stores the model parameters; specifically, errors are injected into some critical layers of the Transformer in different blocks of the image information creator to evaluate their impact on model performance. The simulation results lead to several conclusions: 1) errors in the down blocks of the creator have a larger impact on the quality of the generated images than those in the up blocks, while errors in the middle block have a negligible effect; 2) errors in the self-attention (SA) layers have a larger impact on the results than those in the cross-attention (CA) layers; 3) for CA layers, errors at deeper levels have a larger impact; 4) errors in blocks at the first levels tend to introduce noise into the image, while those in deep layers tend to introduce large colored blocks. These results provide an initial understanding of the impact of errors on Stable Diffusion.
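Injecting soft errors into stored parameters is commonly modeled as flipping single bits in their binary encoding. The following minimal sketch assumes float32 parameters held in a flat Python list. The single-bit-flip fault model, the uniform choice of position and bit, and the helper names `flip_bit` and `inject_errors` are illustrative assumptions, not the paper's injection framework.

```python
import random
import struct
from typing import List


def flip_bit(value: float, bit: int) -> float:
    """Flip one bit of the float32 encoding of `value` (bit 0 = mantissa LSB, bit 31 = sign)."""
    (raw,) = struct.unpack("<I", struct.pack("<f", value))  # float32 -> 32-bit integer
    raw ^= 1 << bit                                         # toggle the chosen bit
    (flipped,) = struct.unpack("<f", struct.pack("<I", raw))
    return flipped


def inject_errors(weights: List[float], n_errors: int, seed: int = 0) -> List[float]:
    """Return a copy of `weights` with `n_errors` random single-bit flips (hypothetical helper)."""
    rng = random.Random(seed)
    corrupted = list(weights)  # leave the original parameters untouched
    for _ in range(n_errors):
        i = rng.randrange(len(corrupted))          # which parameter is hit
        corrupted[i] = flip_bit(corrupted[i], rng.randrange(32))  # which bit is hit
    return corrupted
```

The position of the flipped bit matters a great deal. Flipping bit 31 of 1.0 only changes its sign, flipping bit 0 changes it by about 1e-7, and flipping bit 30 (the most significant exponent bit) turns it into infinity.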

Concurrent Linguistic Error Detection (CLED) for Large Language Models

Mar 25, 2024

Abstract: The wide adoption of large language models (LLMs) makes their dependability a pressing concern. Detecting errors is the first step toward mitigating their impact on a system; thus, efficient error detection for LLMs is an important issue. In many settings, the LLM is treated as a black box with no access to its internal nodes, which prevents the use of many error detection schemes that require such access. An interesting observation is that the output of an LLM in error-free operation should be valid, normal text. Therefore, when the text is not valid or differs significantly from normal text, an error is likely. Based on this observation, we propose Concurrent Linguistic Error Detection (CLED); this scheme extracts some linguistic features of the text generated by the LLM and feeds them to a concurrent classifier that detects errors. Since the proposed error detection mechanism relies only on the outputs of the model, it can be used on LLMs whose internal nodes are not accessible. The proposed CLED scheme has been evaluated on the T5 model used for news summarization and on the OPUS-MT model used for translation. In both cases, the same set of linguistic features has been used for error detection, to illustrate the applicability of the proposed scheme beyond a specific case. The results show that CLED can detect most of the errors with a low overhead. The use of the concurrent classifier also enables a trade-off between error detection effectiveness and its associated overhead, thus giving the designer flexibility.
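The output-only idea behind CLED (extract linguistic features from the generated text, then classify) can be sketched as follows. The three features and the hand-set thresholds in `looks_erroneous` are hypothetical placeholders. The abstract does not list CLED's actual feature set, and the real scheme uses a trained concurrent classifier rather than fixed rules.

```python
from dataclasses import dataclass


@dataclass
class LinguisticFeatures:
    non_alpha_ratio: float  # share of characters that are neither letters nor whitespace
    repeat_ratio: float     # share of tokens identical (case-insensitive) to the previous token
    mean_word_len: float    # average token length in characters


def extract_features(text: str) -> LinguisticFeatures:
    """Compute simple surface features from the LLM output text only (black-box setting)."""
    tokens = text.split()
    if not tokens:
        return LinguisticFeatures(1.0, 0.0, 0.0)  # empty output treated as maximally abnormal
    non_alpha = sum(1 for c in text if not (c.isalpha() or c.isspace()))
    repeats = sum(1 for a, b in zip(tokens, tokens[1:]) if a.lower() == b.lower())
    return LinguisticFeatures(
        non_alpha_ratio=non_alpha / len(text),
        repeat_ratio=repeats / len(tokens),
        mean_word_len=sum(len(t) for t in tokens) / len(tokens),
    )


def looks_erroneous(f: LinguisticFeatures) -> bool:
    """Hypothetical fixed-threshold rule standing in for a trained concurrent classifier."""
    return (f.non_alpha_ratio > 0.2
            or f.repeat_ratio > 0.3
            or not 2.0 <= f.mean_word_len <= 12.0)
```

A normal sentence passes these checks. Degenerate outputs such as a repeated token or a run of symbols are flagged, because such patterns are unlikely to occur in error-free text.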

How many words does ChatGPT know? The answer is ChatWords

Sep 28, 2023

Abstract: The introduction of ChatGPT has put Artificial Intelligence (AI) Natural Language Processing (NLP) in the spotlight. ChatGPT adoption has been exponential, with millions of users experimenting with it in a myriad of tasks and application domains with impressive results. However, ChatGPT has limitations and suffers from hallucinations, for example producing answers that look plausible but are completely wrong. Evaluating the performance of ChatGPT and similar AI tools is a complex issue that is being explored from different perspectives. In this work, we contribute to those efforts with ChatWords, an automated test system that evaluates ChatGPT's knowledge of an arbitrary set of words. ChatWords is designed to be extensible, easy to use, and adaptable to evaluating other NLP AI tools as well. ChatWords is publicly available, and its main goal is to facilitate research on the lexical knowledge of AI tools. The benefits of ChatWords are illustrated with two case studies: evaluating ChatGPT's knowledge of the Spanish lexicon (taken from the official dictionary of the "Real Academia Española") and of the words that appear in the Quixote, the well-known novel by Miguel de Cervantes. The results show that ChatGPT recognizes only approximately 80% of the words in the dictionary and 90% of the words in the Quixote, in some cases with an incorrect meaning. The implications of the lexical knowledge of NLP AI tools and potential applications of ChatWords are also discussed, providing directions for further work on the study of the lexical knowledge of AI tools.

Concurrent Classifier Error Detection (CCED) in Large Scale Machine Learning Systems

Jun 02, 2023

Abstract: The complexity of Machine Learning (ML) systems increases each year, with current implementations of large language models or text-to-image generators having billions of parameters and requiring billions of arithmetic operations. As these systems are widely used, ensuring their reliable operation is becoming a design requirement. Traditional error detection mechanisms introduce circuit or time redundancy that significantly impacts system performance. An alternative is the use of Concurrent Error Detection (CED) schemes that operate in parallel with the system and exploit its properties to detect errors. CED is attractive for large ML systems because it can potentially reduce the cost of error detection. In this paper, we introduce Concurrent Classifier Error Detection (CCED), a scheme to implement CED in ML systems using a concurrent ML classifier to detect errors. CCED identifies a set of check signals in the main ML system and feeds them to the concurrent ML classifier, which is trained to detect errors. The proposed CCED scheme has been implemented and evaluated on two widely used large-scale ML models: Contrastive Language-Image Pre-training (CLIP) used for image classification and Bidirectional Encoder Representations from Transformers (BERT) used for natural language applications. The results show that more than 95 percent of the errors are detected when using a simple Random Forest classifier that is orders of magnitude simpler than CLIP or BERT. These results illustrate the potential of CCED to implement error detection in large-scale ML models.

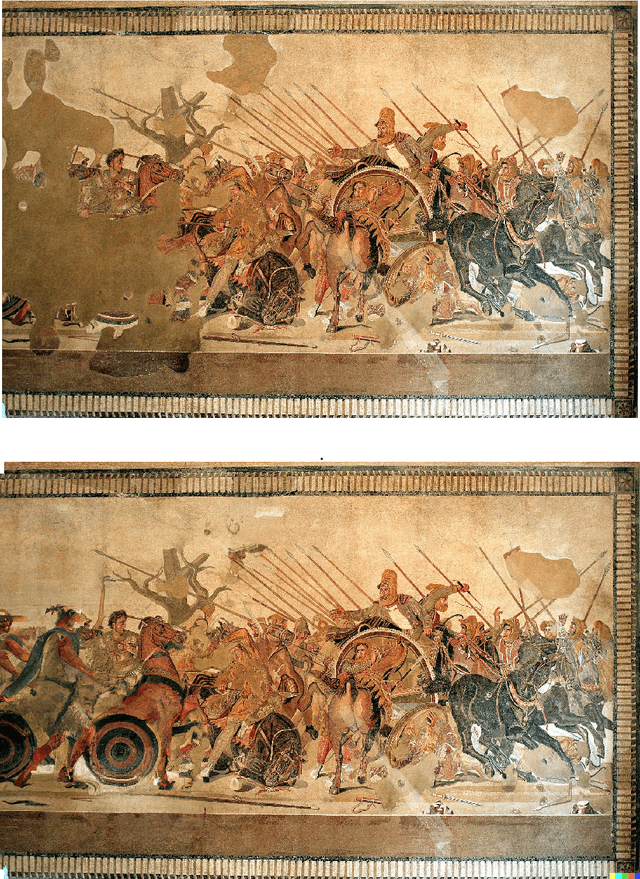

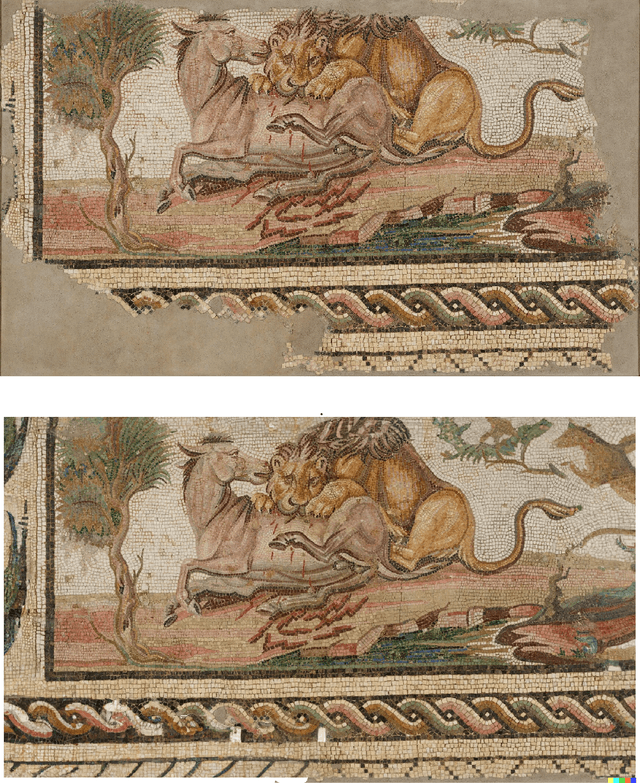

Can Artificial Intelligence Reconstruct Ancient Mosaics?

Oct 07, 2022

Abstract: A large number of ancient mosaics have not survived to the present because they were destroyed by erosion, earthquakes, or looting, or were even reused as materials in newer construction. To make things worse, among the small fraction of mosaics that have been recovered, many are damaged or incomplete. Therefore, restoration and reconstruction of mosaics play a fundamental role in preserving cultural heritage and in understanding the role of mosaics in ancient cultures. This reconstruction has traditionally been done manually, and more recently with computer graphics programs, but always by humans. In recent years, Artificial Intelligence (AI) has made impressive progress in generating images from text descriptions and reference images. State-of-the-art AI tools such as DALL-E2 can generate high-quality images from text prompts and can take a reference image to guide the process. In August 2022, DALL-E2 introduced a new feature called outpainting that takes an incomplete image and a text prompt as input and generates a complete image by filling in the missing parts. In this paper, we explore whether this innovative technology can be used to reconstruct mosaics with missing parts. To this end, a set of ancient mosaics has been reconstructed using DALL-E2; the results are promising, showing that AI is able to interpret the key features of the mosaics and to produce reconstructions that capture the essence of the scene. However, in some cases AI fails to reproduce some details or geometric forms, or introduces elements that are not consistent with the rest of the mosaic. This suggests that, as AI image generation technology matures over the next few years, it could become a valuable tool for mosaic reconstruction.
