Evair Severo

Robust Iris Segmentation Based on Fully Convolutional Networks and Generative Adversarial Networks

Sep 04, 2018

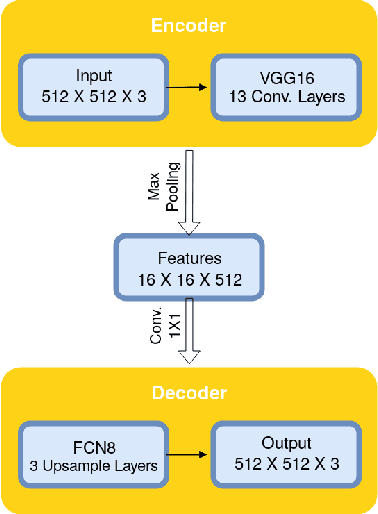

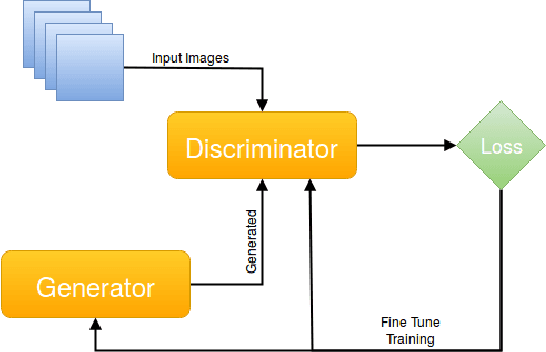

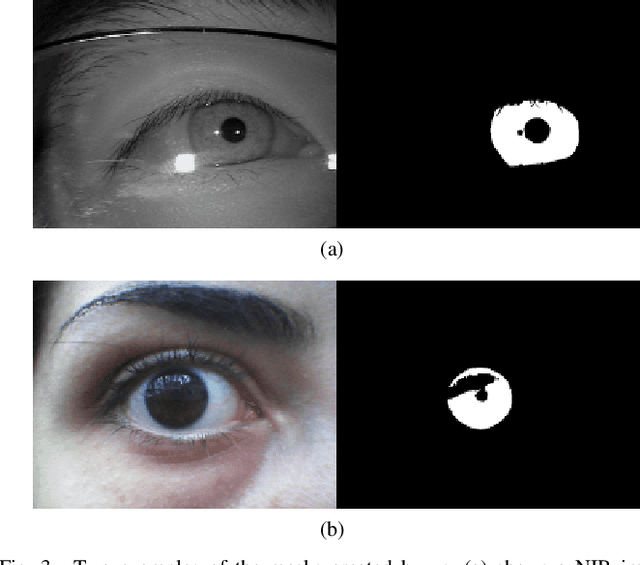

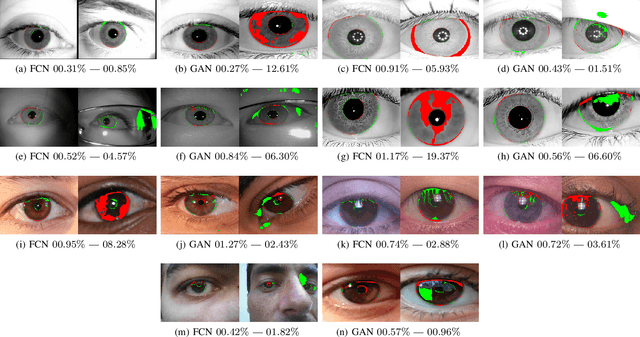

Abstract: The iris can be considered one of the most important biometric traits due to its high degree of uniqueness. Iris-based biometric applications depend mainly on iris segmentation, which is not robust across different environments such as near-infrared (NIR) and visible (VIS) ones. In this paper, two approaches for robust iris segmentation based on Fully Convolutional Networks (FCNs) and Generative Adversarial Networks (GANs) are described. Similar to a common convolutional network, but without the fully connected layers (i.e., the classification layers), an FCN combines at its end the outputs of pooling layers from different convolutional layers. Based on game theory, a GAN is designed as two networks competing with each other to generate the best segmentation. The proposed segmentation networks achieved promising results on all evaluated datasets of NIR images (BioSec, CasiaI3, CasiaT4, and IITD-1) and VIS images (NICE.I, CrEye-Iris, and MICHE-I) in both non-cooperative and cooperative domains, outperforming the baseline techniques, which are the best found so far in the literature, and thus setting a new state of the art for these datasets. Furthermore, we manually labeled 2,431 images from the CasiaT4, CrEye-Iris, and MICHE-I datasets, making the masks available for research purposes.

Fully Convolutional Networks and Generative Adversarial Networks Applied to Sclera Segmentation

Jul 09, 2018

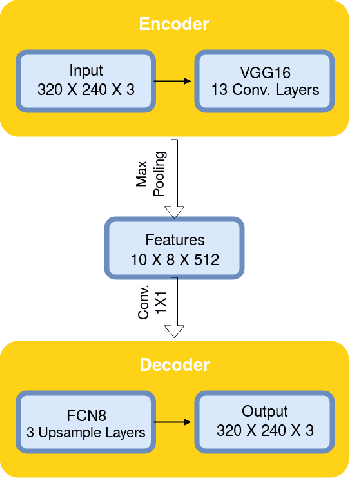

Abstract: Due to the world's demand for security systems, biometrics can be seen as an important topic of research in computer vision. One of the biometric forms that has been gaining attention is recognition based on the sclera. The initial and paramount step for performing this type of recognition is the segmentation of the region of interest, i.e., the sclera. In this context, two approaches for this task, based on the Fully Convolutional Network (FCN) and on the Generative Adversarial Network (GAN), are introduced in this work. An FCN is similar to a common convolutional neural network; however, the fully connected layers (i.e., the classification layers) are removed from the end of the network, and the output is generated by combining the outputs of pooling layers from different convolutional ones. The GAN is based on game theory, where two networks compete with each other to generate the best segmentation. In order to perform a fair comparison with baselines and quantitative and objective evaluations of the proposed approaches, we provide to the scientific community 1,300 new manually segmented images from two databases. The experiments are performed on the UBIRIS.v2 and MICHE databases, and the best performing configurations of our propositions achieved F-scores of 87.48% and 88.32%, respectively.
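As a rough illustration of the F-score reported above, the following sketch computes it for a pair of binary segmentation masks. The function name `f_score`, the list-of-lists mask representation, and the toy masks are assumptions made for this example; the abstract does not describe the authors' evaluation code.

```python
def f_score(pred, truth):
    """F-score (harmonic mean of precision and recall) of two binary masks.

    Both masks are equally sized 2D lists of 0/1 values, where 1 marks a
    pixel labeled as the region of interest (e.g., sclera).
    """
    tp = fp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                tp += 1      # predicted and truly foreground
            elif p and not t:
                fp += 1      # predicted foreground, actually background
            elif t and not p:
                fn += 1      # missed foreground pixel
    if tp == 0:
        return 0.0           # no overlap: precision and recall are both zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical 2x4 masks, used only to exercise the function.
truth = [[0, 1, 1, 0],
         [0, 1, 1, 0]]
pred = [[0, 1, 1, 1],
        [0, 1, 0, 0]]
score = f_score(pred, truth)
print(f"F-score: {score:.2%}")
```

In practice the score would typically be averaged over all test images; whether the paper averages per image or pools pixels is not stated in the abstract.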

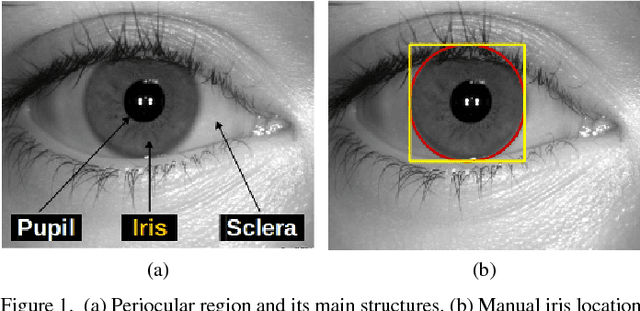

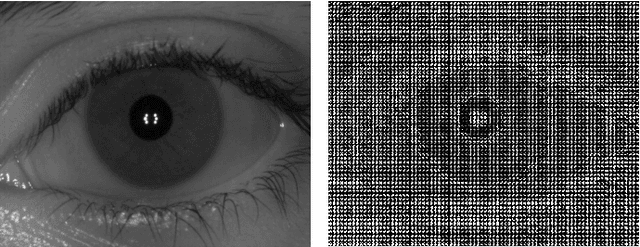

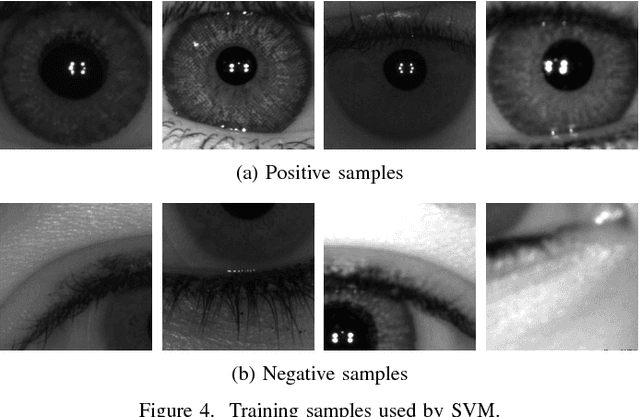

A Benchmark for Iris Location and a Deep Learning Detector Evaluation

Apr 30, 2018

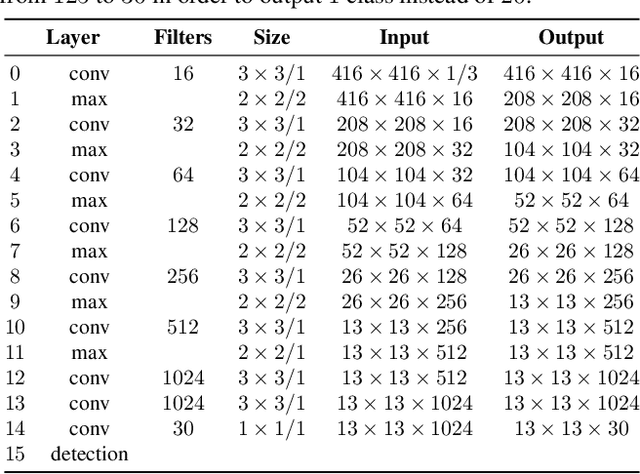

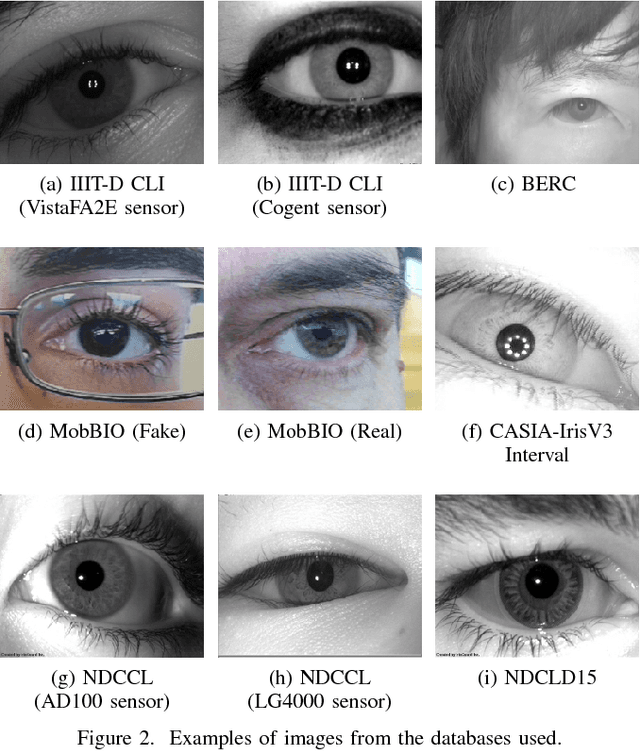

Abstract: The iris is considered the biometric trait with the highest probability of uniqueness. Iris location is an important task for biometric systems, directly affecting the results obtained in specific applications such as iris recognition, spoofing detection, and contact lens detection, among others. This work defines the iris location problem as the delimitation of the smallest square window that encompasses the iris region. In order to build a benchmark for iris location, we annotate four databases from different biometric applications with square iris bounding boxes and make them publicly available to the community. Besides these four annotated databases, we include two others from the literature. We perform experiments on these six databases, five obtained with near-infrared sensors and one with a visible light sensor. We compare the classical and outstanding Daugman iris location approach with two window-based detectors: 1) a sliding window detector based on features from Histogram of Oriented Gradients (HOG) and a linear Support Vector Machine (SVM) classifier; 2) a deep learning based detector fine-tuned from the YOLO object detector. Experimental results showed that the deep learning based detector outperforms the other ones in terms of accuracy and runtime (GPU version) and should be chosen whenever possible.
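The definition of iris location as the smallest square window enclosing the iris can be sketched as follows. This is a minimal illustration that assumes a binary iris mask is available as input; the function name `smallest_square_box`, the `(x, y, side)` return format, and the choice to center the square on the tight bounding box are assumptions of this example, not details taken from the paper.

```python
def smallest_square_box(mask):
    """Return (x, y, side) of the smallest square window covering all 1-pixels.

    mask is a 2D list of 0/1 values. The square is centered on the tight
    bounding box and is not clipped to the image, so x or y may be negative
    when the iris lies close to the border.
    """
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for row in mask for c, value in enumerate(row) if value]
    if not rows:
        return None  # empty mask: nothing to locate

    top, bottom = min(rows), max(rows)
    left, right = min(cols), max(cols)
    height = bottom - top + 1
    width = right - left + 1

    # The side must cover the longer dimension of the tight box.
    side = max(height, width)
    x = left - (side - width) // 2
    y = top - (side - height) // 2
    return x, y, side


# Hypothetical 5x6 mask with a 2x4 foreground region.
mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0],
]
box = smallest_square_box(mask)
print(box)
```

Here the tight box is 4 pixels wide and 2 pixels tall, so the square takes side 4 and is shifted up by one row to stay centered.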

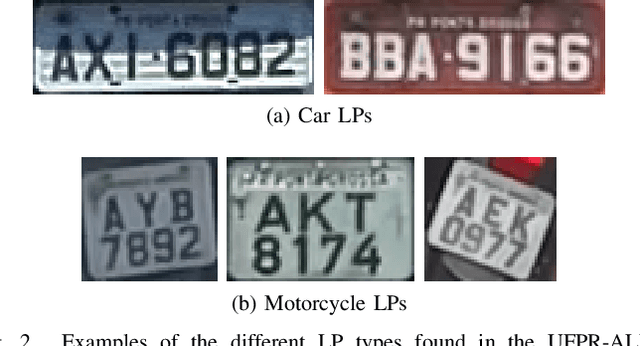

A Robust Real-Time Automatic License Plate Recognition Based on the YOLO Detector

Apr 28, 2018

Abstract: Automatic License Plate Recognition (ALPR) has been a frequent topic of research due to its many practical applications. However, many of the current solutions are still not robust in real-world situations, commonly depending on many constraints. This paper presents a robust and efficient ALPR system based on the state-of-the-art YOLO object detector. Convolutional Neural Networks (CNNs) are trained and fine-tuned for each ALPR stage so that they are robust under different conditions (e.g., variations in camera, lighting, and background). For character segmentation and recognition in particular, we design a two-stage approach employing simple data augmentation tricks such as inverted License Plates (LPs) and flipped characters. The resulting ALPR approach achieved impressive results on two datasets. First, on the SSIG dataset, composed of 2,000 frames from 101 vehicle videos, our system achieved a recognition rate of 93.53% at 47 Frames Per Second (FPS), performing better than both the Sighthound and OpenALPR commercial systems (89.80% and 93.03%, respectively) and considerably outperforming previous results (81.80%). Second, targeting a more realistic scenario, we introduce a larger public dataset, called the UFPR-ALPR dataset, designed for ALPR. This dataset contains 150 videos and 4,500 frames captured when both camera and vehicles are moving, and it also contains different types of vehicles (cars, motorcycles, buses, and trucks). On our proposed dataset, the trial versions of commercial systems achieved recognition rates below 70%. Our system performed better, with a recognition rate of 78.33% at 35 FPS.
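The two augmentation tricks named above might look roughly like the sketch below. It assumes that "inverted" means the photometric negative of a grayscale plate image and that character flips are applied only where the flipped glyph is still a valid sample of the same class; both are interpretations made for this example, and the helper names are hypothetical rather than taken from the paper.

```python
def invert(image):
    """Photometric negative of an 8-bit grayscale image (2D list of 0..255)."""
    return [[255 - pixel for pixel in row] for row in image]


def flip_horizontal(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]


def flip_vertical(image):
    """Mirror the image top to bottom."""
    return [list(row) for row in image[::-1]]


def augment_plate(plate):
    """Original plate plus its negative (assumed meaning of 'inverted LP')."""
    return [plate, invert(plate)]


def augment_character(char_image):
    """Original character patch plus its horizontal and vertical flips.

    Assumption: the caller only passes characters whose flips keep the
    same label (for instance roughly symmetric glyphs such as '0' or '8').
    """
    return [char_image, flip_horizontal(char_image), flip_vertical(char_image)]


# Hypothetical 2x3 grayscale patch, used only to exercise the helpers.
patch = [[0, 100, 255],
         [10, 20, 30]]
plates = augment_plate(patch)
chars = augment_character(patch)
print(len(plates), len(chars))
```

Each helper returns new lists, so the original patch is left unmodified and can be reused across augmentations.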
