Enrico Mariconti

Cerberus: Exploring Federated Prediction of Security Events

Sep 07, 2022

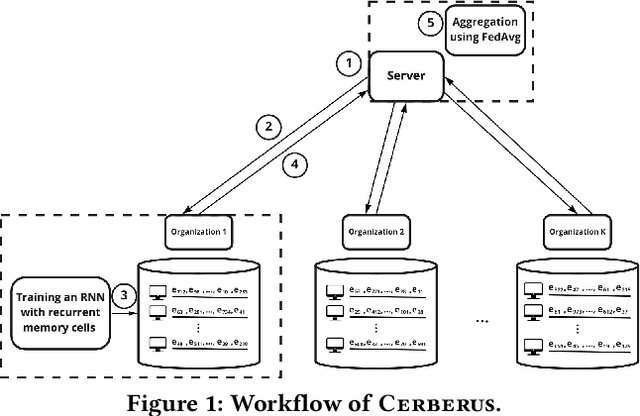

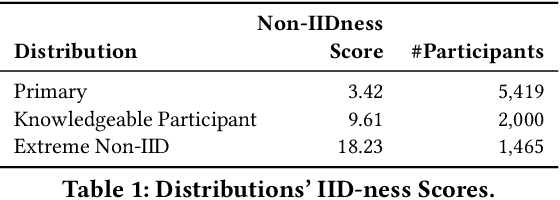

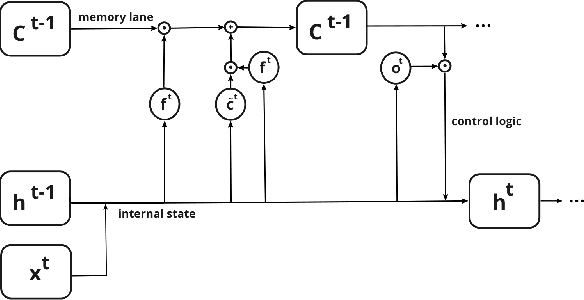

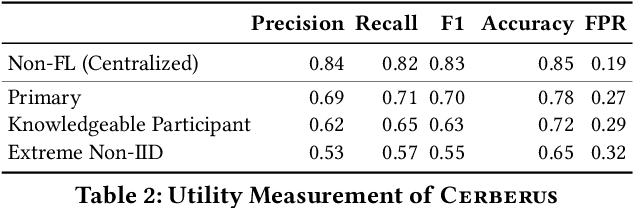

Abstract:Modern defenses against cyberattacks increasingly rely on proactive approaches, e.g., to predict the adversary's next actions based on past events. Building accurate prediction models requires knowledge from many organizations; alas, this entails disclosing sensitive information, such as network structures, security postures, and policies, which might often be undesirable or outright impossible. In this paper, we explore the feasibility of using Federated Learning (FL) to predict future security events. To this end, we introduce Cerberus, a system enabling collaborative training of Recurrent Neural Network (RNN) models for participating organizations. The intuition is that FL could potentially offer a middle-ground between the non-private approach where the training data is pooled at a central server and the low-utility alternative of only training local models. We instantiate Cerberus on a dataset obtained from a major security company's intrusion prevention product and evaluate it vis-a-vis utility, robustness, and privacy, as well as how participants contribute to and benefit from the system. Overall, our work sheds light on both the positive aspects and the challenges of using FL for this task and paves the way for deploying federated approaches to predictive security.

MaMaDroid2.0 -- The Holes of Control Flow Graphs

Feb 28, 2022

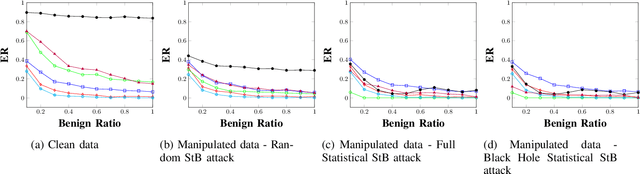

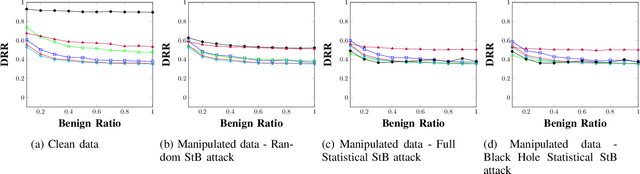

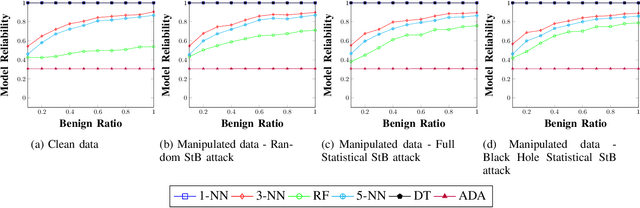

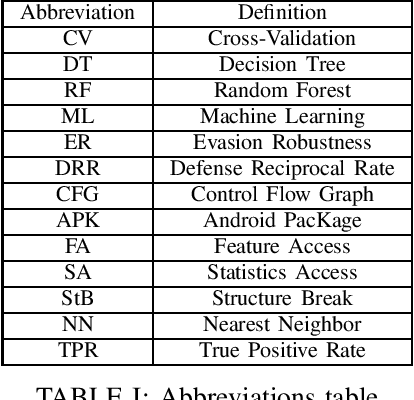

Abstract:Android malware is a continuously expanding threat to billions of mobile users around the globe. Detection systems are updated constantly to address these threats. However, a backlash takes the form of evasion attacks, in which an adversary changes malicious samples such that those samples will be misclassified as benign. This paper fully inspects a well-known Android malware detection system, MaMaDroid, which analyzes the control flow graph of the application. Changes to the portion of benign samples in the train set and models are considered to see their effect on the classifier. The changes in the ratio between benign and malicious samples have a clear effect on each one of the models, resulting in a decrease of more than 40% in their detection rate. Moreover, adopted ML models are implemented as well, including 5-NN, Decision Tree, and Adaboost. Exploration of the six models reveals a typical behavior in different cases, of tree-based models and distance-based models. Moreover, three novel attacks that manipulate the CFG and their detection rates are described for each one of the targeted models. The attacks decrease the detection rate of most of the models to 0%, with regards to different ratios of benign to malicious apps. As a result, a new version of MaMaDroid is engineered. This model fuses the CFG of the app and static analysis of features of the app. This improved model is proved to be robust against evasion attacks targeting both CFG-based models and static analysis models, achieving a detection rate of more than 90% against each one of the attacks.

Tiresias: Predicting Security Events Through Deep Learning

May 24, 2019

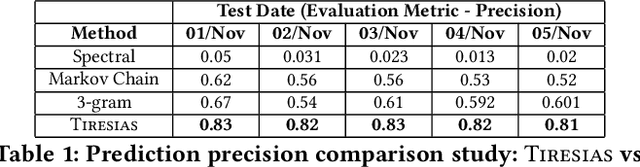

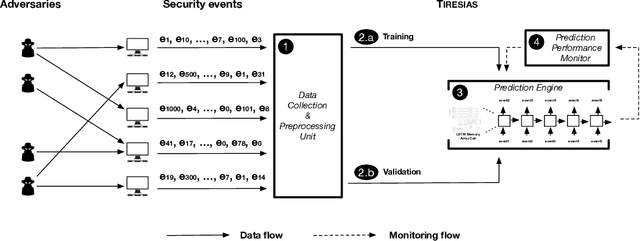

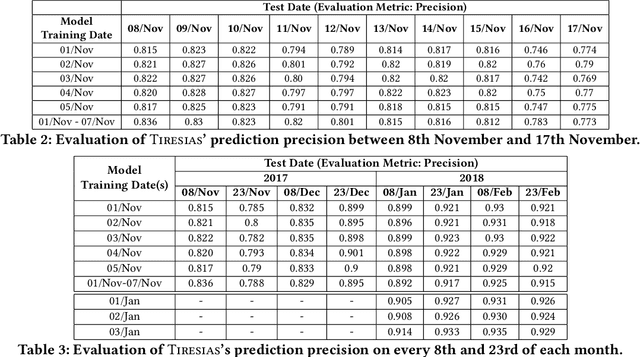

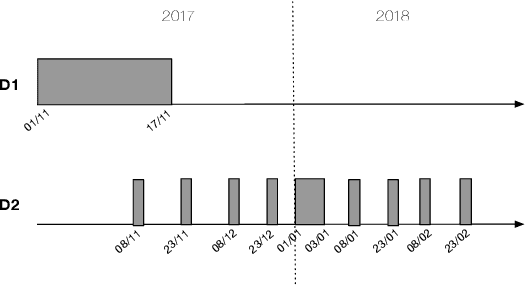

Abstract:With the increased complexity of modern computer attacks, there is a need for defenders not only to detect malicious activity as it happens, but also to predict the specific steps that will be taken by an adversary when performing an attack. However this is still an open research problem, and previous research in predicting malicious events only looked at binary outcomes (e.g., whether an attack would happen or not), but not at the specific steps that an attacker would undertake. To fill this gap we present Tiresias, a system that leverages Recurrent Neural Networks (RNNs) to predict future events on a machine, based on previous observations. We test Tiresias on a dataset of 3.4 billion security events collected from a commercial intrusion prevention system, and show that our approach is effective in predicting the next event that will occur on a machine with a precision of up to 0.93. We also show that the models learned by Tiresias are reasonably stable over time, and provide a mechanism that can identify sudden drops in precision and trigger a retraining of the system. Finally, we show that the long-term memory typical of RNNs is key in performing event prediction, rendering simpler methods not up to the task.

MaMaDroid: Detecting Android Malware by Building Markov Chains of Behavioral Models (Extended Version)

Nov 20, 2017

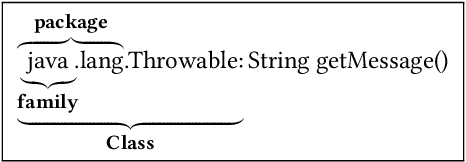

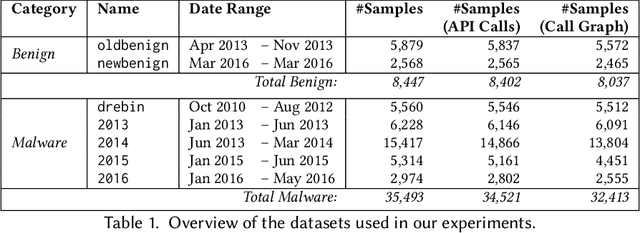

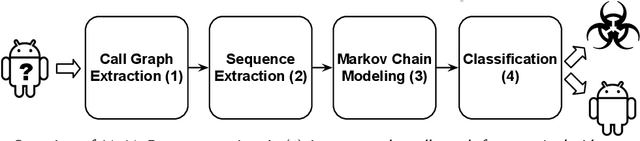

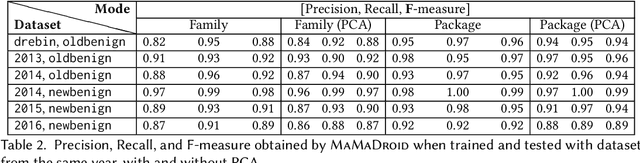

Abstract:As Android becomes increasingly popular, so does malware targeting it, this motivating the research community to propose many different detection techniques. However, the constant evolution of the Android ecosystem, and of malware itself, makes it hard to design robust tools that can operate for long periods of time without the need for modifications or costly re-training. Aiming to address this issue, we set to detect malware from a behavioral point of view, modeled as the sequence of abstracted API calls. We introduce MaMaDroid, a static-analysis based system that abstracts app's API calls to their class, package, or family, and builds a model from their sequences obtained from the call graph of an app as Markov chains. This ensures that the model is more resilient to API changes and the features set is of manageable size. We evaluate MaMaDroid using a dataset of 8.5K benign and 35.5K malicious apps collected over a period of six years, showing that it effectively detects malware (with up to 0.99 F-measure) and keeps its detection capabilities for long periods of time (up to 0.87 F-measure two years after training). We also show that MaMaDroid remarkably improves over DroidAPIMiner, a state-of-the-art detection system that relies on the frequency of (raw) API calls. Aiming to assess whether MaMaDroid's effectiveness mainly stems from the API abstraction or from the sequencing modeling, we also evaluate a variant of it that uses frequency (instead of sequences), of abstracted API calls. We find that it is not as accurate, failing to capture maliciousness when trained on malware samples including API calls that are equally or more frequently used by benign apps.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge