Ekaterina Dmitrieva

HiFi-Stream: Streaming Speech Enhancement with Generative Adversarial Networks

Mar 21, 2025Abstract:Speech Enhancement techniques have become core technologies in mobile devices and voice software simplifying downstream speech tasks. Still, modern Deep Learning (DL) solutions often require high amount of computational resources what makes their usage on low-resource devices challenging. We present HiFi-Stream, an optimized version of recently published HiFi++ model. Our experiments demonstrate that HiFiStream saves most of the qualities of the original model despite its size and computational complexity: the lightest version has only around 490k parameters which is 3.5x reduction in comparison to the original HiFi++ making it one of the smallest and fastest models available. The model is evaluated in streaming setting where it demonstrates its superior performance in comparison to modern baselines.

A Differentiable Language Model Adversarial Attack on Text Classifiers

Jul 23, 2021

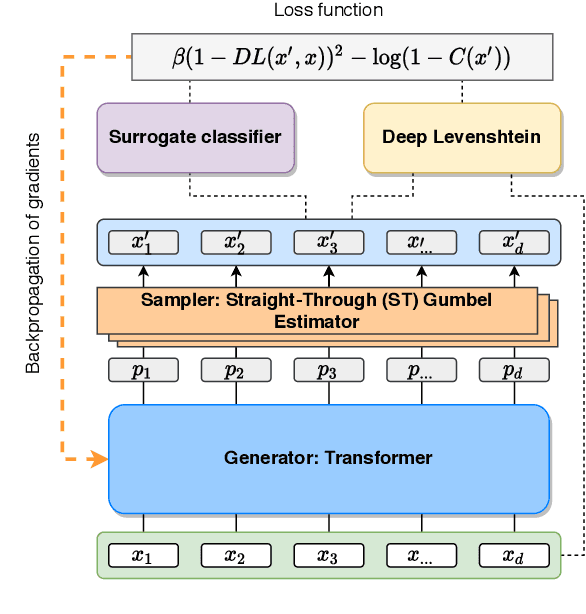

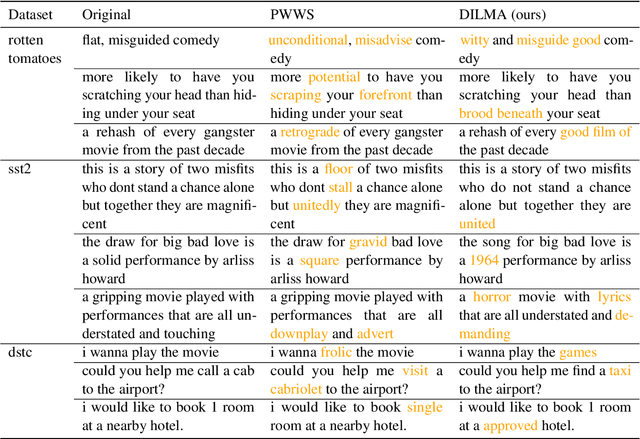

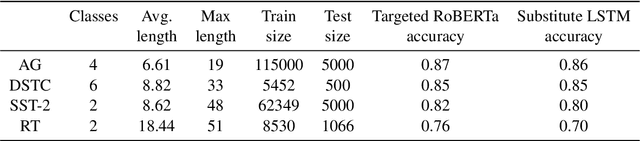

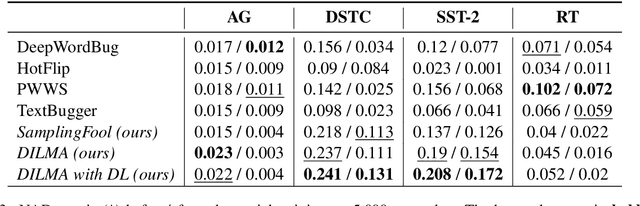

Abstract:Robustness of huge Transformer-based models for natural language processing is an important issue due to their capabilities and wide adoption. One way to understand and improve robustness of these models is an exploration of an adversarial attack scenario: check if a small perturbation of an input can fool a model. Due to the discrete nature of textual data, gradient-based adversarial methods, widely used in computer vision, are not applicable per~se. The standard strategy to overcome this issue is to develop token-level transformations, which do not take the whole sentence into account. In this paper, we propose a new black-box sentence-level attack. Our method fine-tunes a pre-trained language model to generate adversarial examples. A proposed differentiable loss function depends on a substitute classifier score and an approximate edit distance computed via a deep learning model. We show that the proposed attack outperforms competitors on a diverse set of NLP problems for both computed metrics and human evaluation. Moreover, due to the usage of the fine-tuned language model, the generated adversarial examples are hard to detect, thus current models are not robust. Hence, it is difficult to defend from the proposed attack, which is not the case for other attacks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge