Dirk Ruiken

Sampling-Based Grasp and Collision Prediction for Assisted Teleoperation

Apr 25, 2025

Abstract: Shared autonomy allows for combining the global planning capabilities of a human operator with the strengths of a robot, such as repeatability and accurate control. In a real-time teleoperation setting, one possibility for shared autonomy is to let the human operator decide on the rough movement and let the robot make fine adjustments, e.g., when the operator's view is occluded. We present a learning-based concept for shared autonomy that aims at supporting the human operator in a real-time teleoperation setting. At every step, our system tracks the target pose set by the human operator as accurately as possible while satisfying a set of constraints that influence the robot's behavior. An important characteristic is that the constraints can be dynamically activated and deactivated, which allows the system to provide task-specific assistance. Since the system must generate robot commands in real time, solving an optimization problem in every iteration is not feasible. Instead, we sample potential target configurations and use neural networks to predict the constraint costs for each configuration. By evaluating all configurations in parallel, our system can select, with minimal delay, the target configuration that satisfies the constraints and has the minimum distance to the operator's target pose. We evaluate the framework with a pick-and-place task on a bimanual setup with two Franka Emika Panda robot arms with Robotiq grippers.
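
The selection step described in this abstract can be illustrated with a minimal Python sketch. It is written under stated assumptions and is not the authors' implementation. The cost models are hypothetical hand-written stand-ins for the trained neural networks. Candidates are assumed to be Gaussian perturbations of the operator's target. A constraint is assumed to count as satisfied when its predicted cost falls below a threshold. The names `select_target`, `spread` and `threshold` are illustrative only.

```python
import math
import random
from typing import Callable, Dict, List, Optional, Sequence, Tuple

Config = Tuple[float, ...]
# Hypothetical stand-in for a trained network: maps a configuration to a constraint cost.
CostModel = Callable[[Config], float]


def sample_candidates(target: Config, n_samples: int, spread: float, seed: int) -> List[Config]:
    """Candidate 0 is the operator's target itself; the rest are Gaussian perturbations of it."""
    rng = random.Random(seed)
    candidates = [tuple(target)]
    for _ in range(n_samples - 1):
        candidates.append(tuple(q + rng.gauss(0.0, spread) for q in target))
    return candidates


def select_target(
    target: Config,
    cost_models: Dict[str, CostModel],
    active: Sequence[str],
    n_samples: int = 256,
    spread: float = 0.05,
    threshold: float = 0.5,
    seed: int = 0,
) -> Optional[Config]:
    """Return the candidate closest to `target` whose predicted cost is below
    `threshold` for every *active* constraint, or None if no candidate qualifies."""
    best: Optional[Config] = None
    best_dist = math.inf
    for q in sample_candidates(target, n_samples, spread, seed):
        # Deactivated constraints are skipped, so assistance can be switched on or off per task.
        if all(cost_models[name](q) < threshold for name in active):
            dist = math.dist(q, target)
            if dist < best_dist:
                best, best_dist = q, dist
    return best


if __name__ == "__main__":
    # Hypothetical "keep-out wall" constraint: cost 1 whenever the first coordinate is below 0.2.
    models = {"wall": lambda q: 1.0 if q[0] < 0.2 else 0.0}
    print(select_target((0.1, 0.0, 0.0), models, active=[], spread=0.2))        # the operator's target
    print(select_target((0.1, 0.0, 0.0), models, active=["wall"], spread=0.2))  # nearest feasible sample
```

The loop is written sequentially for clarity. Each candidate is scored independently, so the cost evaluation could be batched and run in parallel. The abstract relies on this property to keep delay low. Returning `None` leaves it to the caller to decide what to command when no sample is feasible, e.g. holding the previous target.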

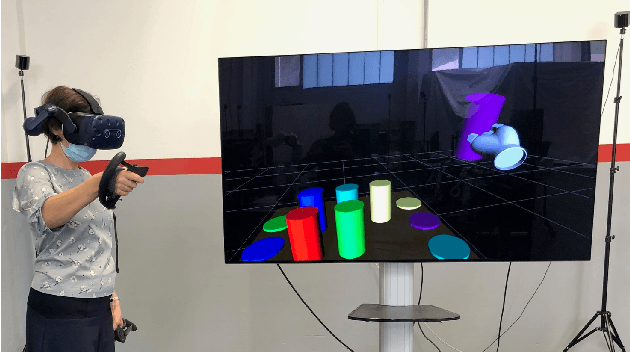

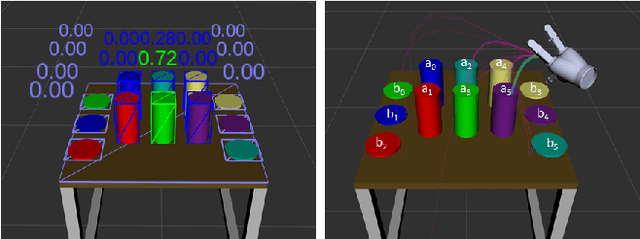

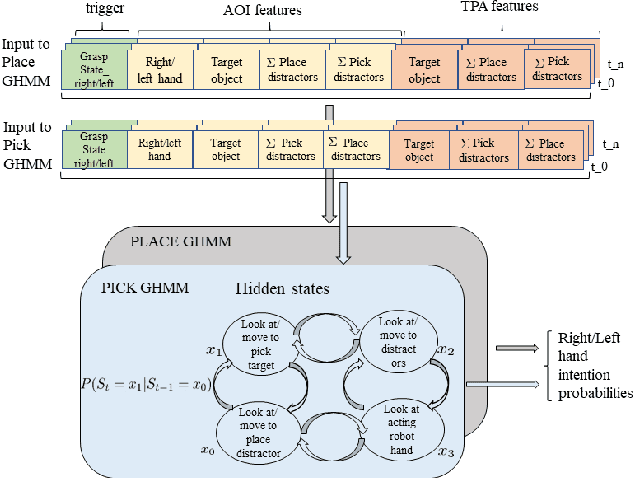

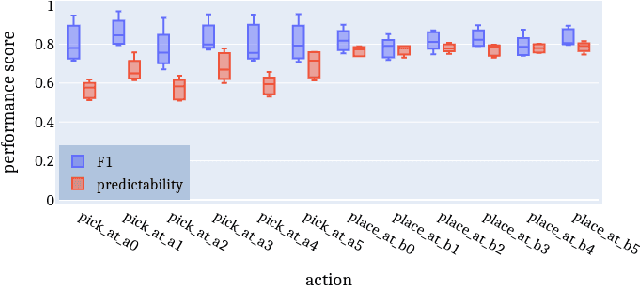

Intention estimation from gaze and motion features for human-robot shared-control object manipulation

Aug 18, 2022

Abstract: Shared control can help in teleoperated object manipulation by assisting with the execution of the user's intention. To this end, robust and prompt intention estimation is needed, which relies on behavioral observations. Here, an intention estimation framework is presented that uses natural gaze and motion features to predict the current action and the target object. The system is trained and tested in a simulated environment with pick-and-place sequences performed in a relatively cluttered scene with both hands, including possible hand-overs from one hand to the other. Validation is conducted across different users and hands, achieving good prediction accuracy and earliness. An analysis of the predictive power of single features shows the predominance of the grasping trigger and the gaze features in the early identification of the current action. In the current framework, the same probabilistic model can be used for the two hands working in parallel and independently, while a rule-based model is proposed to identify the resulting bimanual action. Finally, limitations and perspectives of this approach for more complex, fully bimanual manipulation are discussed.
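
The abstract does not specify the probabilistic model or the rules, so the following is only a hedged Python illustration of the general idea. It maintains a belief over candidate target objects with a recursive Bayes update. The gaze likelihood is assumed here to have a von Mises shape in a planar scene, with an illustrative concentration `kappa`. A small hypothetical rule table then merges the per-hand (action, object) estimates into a bimanual label. The motion and grasping-trigger features mentioned in the abstract are not modeled, and every function name is hypothetical.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float]


def gaze_likelihood(eye: Point, gaze_dir: Point, target: Point, kappa: float = 8.0) -> float:
    """Von Mises-shaped likelihood: high when the gaze ray points at the target (planar case)."""
    angle_to_target = math.atan2(target[1] - eye[1], target[0] - eye[0])
    gaze_angle = math.atan2(gaze_dir[1], gaze_dir[0])
    return math.exp(kappa * math.cos(gaze_angle - angle_to_target))


def update_target_belief(
    prior: Dict[str, float], eye: Point, gaze_dir: Point, objects: Dict[str, Point]
) -> Dict[str, float]:
    """One recursive Bayes step: posterior(target) is proportional to prior(target) * p(gaze | target)."""
    unnormalized = {
        name: prior[name] * gaze_likelihood(eye, gaze_dir, pos) for name, pos in objects.items()
    }
    total = sum(unnormalized.values())
    return {name: w / total for name, w in unnormalized.items()}


def bimanual_action(left: Tuple[str, str], right: Tuple[str, str]) -> str:
    """Hypothetical rules combining per-hand (action, object) estimates into one bimanual label."""
    (l_act, l_obj), (r_act, r_obj) = left, right
    if l_act == "idle" and r_act == "idle":
        return "idle"
    if l_obj == r_obj and {l_act, r_act} == {"grasp", "release"}:
        return "hand-over"
    if l_act == "idle":
        return f"right {r_act}"
    if r_act == "idle":
        return f"left {l_act}"
    return "parallel"


if __name__ == "__main__":
    objects = {"cup": (1.0, 0.0), "box": (0.0, 1.0)}
    belief = {"cup": 0.5, "box": 0.5}
    belief = update_target_belief(belief, (0.0, 0.0), (1.0, 0.05), objects)
    print(belief)  # almost all of the probability mass moves to "cup"
    print(bimanual_action(("release", "cup"), ("grasp", "cup")))  # hand-over
```

The same `update_target_belief` function can be run independently for each hand. This mirrors the abstract's point that one probabilistic model serves both hands, with a separate rule layer producing the bimanual label.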

An Adaptive Framework for Reliable Trajectory Following in Changing-Contact Robot Manipulation Tasks

Nov 15, 2021

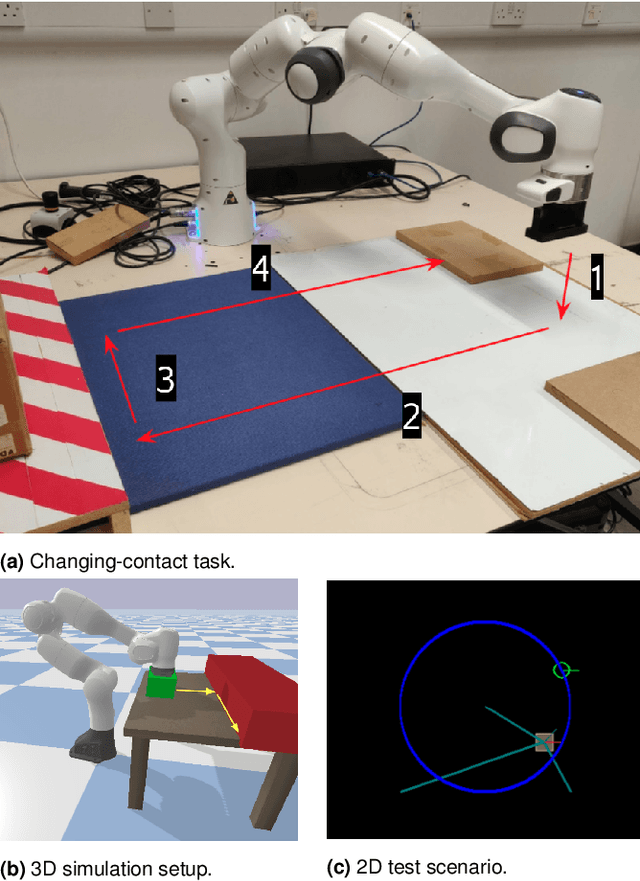

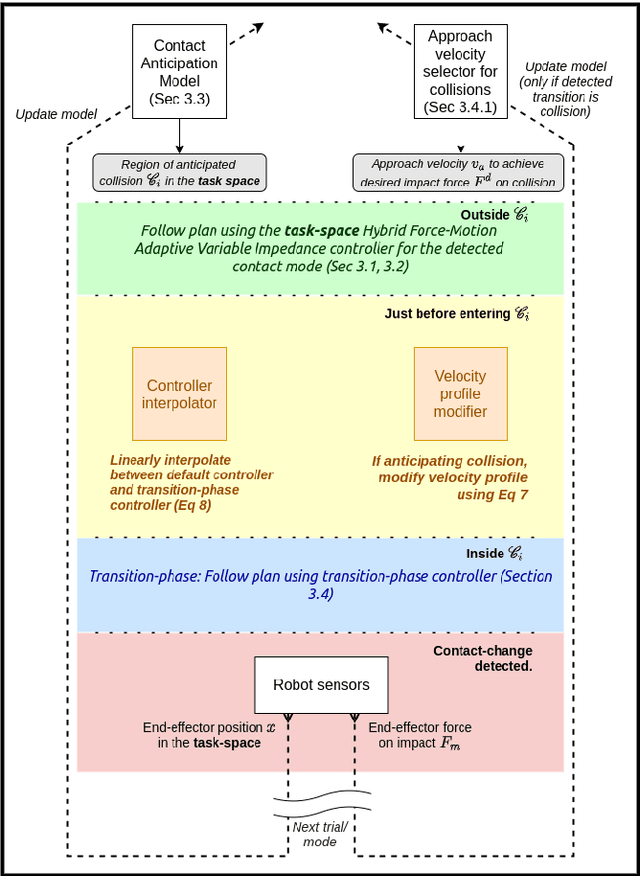

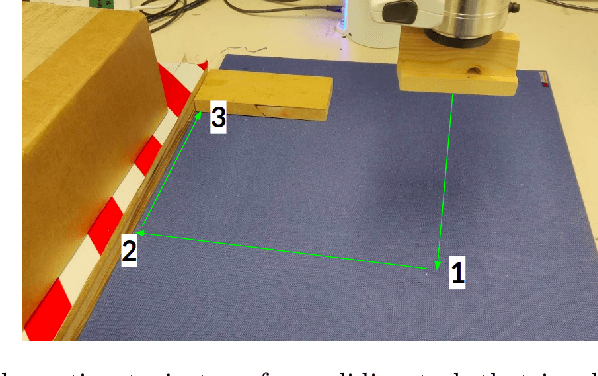

Abstract: We describe a framework for changing-contact robot manipulation tasks that require the robot to make and break contact with objects and surfaces. The discontinuous interaction dynamics of such tasks make it difficult to construct and use a single dynamics model or control strategy, and the highly nonlinear dynamics during contact changes can damage the robot and the objects. We present an adaptive control framework that enables the robot to incrementally learn to predict contact changes in a changing-contact task, learn the interaction dynamics of the piecewise-continuous system, and provide smooth and accurate trajectory tracking using a task-space variable impedance controller. We experimentally compare the performance of our framework against representative control methods to establish that the adaptive control and incremental learning components of our framework are needed to achieve smooth control in the presence of discontinuous dynamics in changing-contact robot manipulation tasks.
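
A task-space variable impedance law of the kind named in this abstract can be sketched along a single axis as $F = K(x_d - x) + D(\dot{x}_d - \dot{x}) + F_{\text{model}}$. The following Python sketch rests on several assumptions of our own and does not reproduce the authors' controller. Stiffness is blended toward a compliant value as the predicted contact probability rises. Damping is kept critical for an assumed effective mass. `f_model` stands in for the feed-forward term a learned interaction-dynamics model could supply. All gain values and function names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class ImpedanceGains:
    stiffness: float  # N/m
    damping: float    # N*s/m


def schedule_gains(contact_prob: float, k_free: float = 800.0, k_contact: float = 200.0,
                   mass: float = 1.0) -> ImpedanceGains:
    """Hypothetical schedule: blend toward a compliant stiffness as contact becomes likely,
    keeping the damping critical (2 * sqrt(k * m)) for the assumed effective mass."""
    k = (1.0 - contact_prob) * k_free + contact_prob * k_contact
    return ImpedanceGains(k, 2.0 * math.sqrt(k * mass))


def impedance_force(x: float, v: float, x_d: float, v_d: float,
                    gains: ImpedanceGains, f_model: float = 0.0) -> float:
    """One-axis task-space impedance law with an optional feed-forward term f_model."""
    return gains.stiffness * (x_d - x) + gains.damping * (v_d - v) + f_model


def simulate(x_d: float, contact_prob: float, mass: float = 1.0,
             dt: float = 1e-3, steps: int = 3000) -> float:
    """Drive a free point mass toward x_d with semi-implicit Euler; return the final position."""
    gains = schedule_gains(contact_prob, mass=mass)
    x, v = 0.0, 0.0
    for _ in range(steps):
        a = impedance_force(x, v, x_d, 0.0, gains) / mass
        v += a * dt
        x += v * dt
    return x


if __name__ == "__main__":
    print(simulate(0.1, contact_prob=0.0))  # stiff setting, settles near 0.1
    print(simulate(0.1, contact_prob=1.0))  # compliant setting, also settles near 0.1, more slowly
```

Lowering stiffness near a predicted contact trades tracking accuracy for gentler interaction forces. This is one common way a variable impedance controller can soften contact transitions.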

Towards a Framework for Changing-Contact Robot Manipulation

Jun 21, 2021

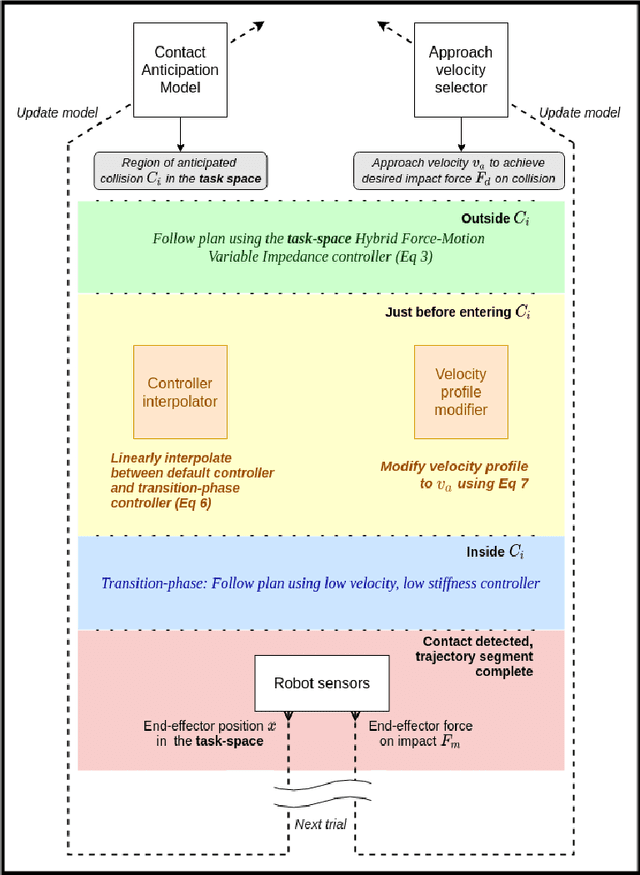

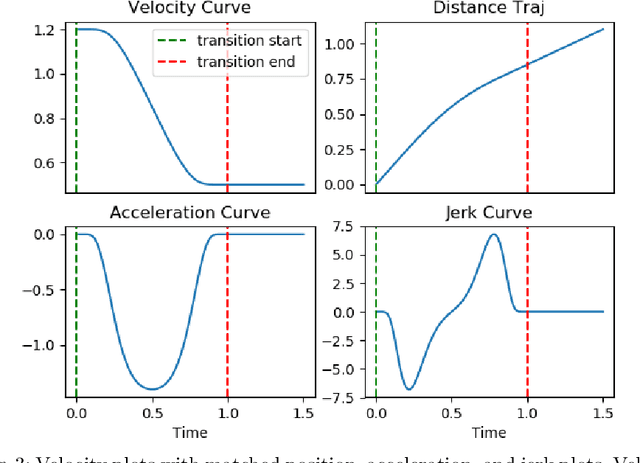

Abstract: Many robot manipulation tasks require the robot to make and break contact with objects and surfaces. The dynamics of such changing-contact robot manipulation tasks are discontinuous when contact is made or broken, and continuous elsewhere. These discontinuities make it difficult to construct and use a single dynamics model or control strategy for any such task. We present a framework for smooth dynamics and control of such changing-contact manipulation tasks. For any given target motion trajectory, the framework incrementally improves its prediction of when contacts will occur. This prediction and a model relating approach velocity to impact force are used to modify the velocity profile of the motion sequence so that it is $C^\infty$ smooth, and to help achieve a desired force on impact. We implement this framework by building on our hybrid force-motion variable impedance controller for continuous-contact tasks. We experimentally evaluate our framework in the illustrative context of sliding tasks involving multiple contact changes with transitions between surfaces of different properties.
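
This abstract combines two ingredients: a $C^\infty$ velocity profile and a model relating approach velocity to impact force. Neither is specified in detail, so the following minimal Python sketch fills both with choices of our own. It uses the standard construction $s(t) = \psi(t) / (\psi(t) + \psi(1 - t))$ with $\psi(t) = e^{-1/t}$ for $t > 0$ and $0$ otherwise. This function is $C^\infty$, equals 0 for $t \le 0$ and equals 1 for $t \ge 1$. The sketch also assumes a linear force model $F = a v + b$, fitted by least squares. The speed is blended from the approach speed to the impact speed needed for a desired force. The blend ends at the predicted contact time `t_contact`. `t_start` and all function names are hypothetical.

```python
import math
from typing import Sequence, Tuple


def _psi(t: float) -> float:
    """exp(-1/t) for t > 0 and 0 otherwise: infinitely differentiable, including at t = 0."""
    return math.exp(-1.0 / t) if t > 0.0 else 0.0


def smooth_step(t: float) -> float:
    """C-infinity step: 0 for t <= 0, 1 for t >= 1 (the denominator is always positive)."""
    return _psi(t) / (_psi(t) + _psi(1.0 - t))


def fit_impact_model(velocities: Sequence[float], forces: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of the assumed linear model: impact_force = a * velocity + b."""
    n = len(velocities)
    mv, mf = sum(velocities) / n, sum(forces) / n
    cov = sum((v - mv) * (f - mf) for v, f in zip(velocities, forces))
    var = sum((v - mv) ** 2 for v in velocities)
    a = cov / var
    return a, mf - a * mv


def impact_velocity(f_desired: float, a: float, b: float) -> float:
    """Invert the linear model to get the approach speed expected to yield f_desired."""
    return (f_desired - b) / a


def velocity_profile(t: float, t_start: float, t_contact: float,
                     v_approach: float, v_impact: float) -> float:
    """Blend smoothly from v_approach to v_impact between t_start and the predicted contact time."""
    s = smooth_step((t - t_start) / (t_contact - t_start))
    return v_approach + (v_impact - v_approach) * s


if __name__ == "__main__":
    a, b = fit_impact_model([0.1, 0.2, 0.3], [2.0, 4.0, 6.0])  # illustrative data, not measurements
    v_hit = impact_velocity(3.0, a, b)
    for t in (0.0, 0.25, 0.5, 0.75, 1.0):
        print(t, velocity_profile(t, 0.0, 1.0, 0.3, v_hit))
```

As the contact-time prediction improves, only `t_contact` needs updating. The profile stays $C^\infty$ for any choice of `t_start < t_contact`.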