Dianpeng Wang

Adaptive Active Learning for Online Reliability Prediction of Satellite Electronics

Mar 10, 2026

Abstract:Accurate on-orbit reliability prediction for satellite electronics is often hindered by limited data availability, varying operational conditions, and considerable unit-to-unit variability. To overcome these obstacles, this paper proposes a novel integrated online reliability prediction framework. The main contributions are twofold. First, a Wiener process-based degradation model is developed, incorporating a generalized Arrhenius link function, individual random effects, and spatial correlations among adjacent units. A customized maximum likelihood estimation method is further devised to facilitate efficient and accurate parameter inference. Second, a two-stage active learning sampling scheme is designed to adaptively enhance prediction accuracy. This strategy initially selects representative units based on spatial configuration, and subsequently determines optimal sampling times using a comprehensive criterion that balances unit-specific information, model uncertainty, and degradation dynamics. Numerical experiments and a practical case study from the Tiangong space station demonstrate that the proposed method markedly improves reliability prediction accuracy while significantly reducing data requirements, offering an efficient solution for the prognostic and health management of complex satellite electronic systems.
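As a rough illustration of the kind of model the abstract describes, the sketch below computes reliability for a plain Wiener degradation process whose drift follows the basic Arrhenius law. It is not the paper's model: the generalized link function, random effects, and spatial correlations are omitted, and the function names and parameters (`arrhenius_drift`, `reliability`, `a`, `ea_ev`, `threshold`) are assumptions chosen for this example. Reliability is taken as the probability that the process has not yet crossed a fixed failure threshold, which for a Wiener process with positive drift follows the inverse Gaussian first-passage distribution.

```python
import math

BOLTZMANN_EV = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_drift(a, ea_ev, temp_k):
    """Degradation drift under the basic Arrhenius law (assumed form)."""
    return a * math.exp(-ea_ev / (BOLTZMANN_EV * temp_k))


def std_normal_cdf(z):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def reliability(t, drift, sigma, threshold):
    """P(no threshold crossing by time t) for X(t) = drift*t + sigma*B(t).

    Uses the inverse Gaussian first-passage result, valid for drift > 0,
    sigma > 0, and threshold > 0.
    """
    if t <= 0:
        return 1.0
    s = sigma * math.sqrt(t)
    term1 = std_normal_cdf((threshold - drift * t) / s)
    term2 = math.exp(2.0 * drift * threshold / sigma**2) * std_normal_cdf(
        -(threshold + drift * t) / s
    )
    return term1 - term2


if __name__ == "__main__":
    # Hypothetical parameter values, chosen only to give readable numbers.
    drift = arrhenius_drift(a=1e7, ea_ev=0.5, temp_k=300.0)
    for t in (1.0, 100.0, 1000.0):
        print(t, reliability(t, drift, sigma=0.5, threshold=10.0))
```

A higher temperature gives a larger drift in this form, so the reliability curve drops sooner; the exponential term can overflow for very large `drift * threshold / sigma**2`, which a production implementation would need to guard against.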

MindDiffuser: Controlled Image Reconstruction from Human Brain Activity with Semantic and Structural Diffusion

Aug 08, 2023

Abstract:Reconstructing visual stimuli from brain recordings is a meaningful and challenging task. In particular, precise and controllable image reconstruction is of great significance for advancing the development and use of brain-computer interfaces. Despite advances in complex image reconstruction techniques, it remains difficult to align both the semantics (concepts and objects) and the structure (position, orientation, and size) of a reconstruction with the image stimuli. To address this issue, we propose a two-stage image reconstruction model called MindDiffuser. In Stage 1, the VQ-VAE latent representations and the CLIP text embeddings decoded from fMRI are fed into Stable Diffusion, which yields a preliminary image that contains semantic information. In Stage 2, we use the CLIP visual features decoded from fMRI as supervisory information, and iteratively adjust the two feature vectors decoded in Stage 1 through backpropagation to align the structural information. Both qualitative and quantitative analyses demonstrate that our model surpasses the current state-of-the-art models on the Natural Scenes Dataset (NSD). Subsequent experiments corroborate the neurobiological plausibility of the model, as evidenced by the interpretability of the multimodal features employed, which align with the corresponding brain responses.

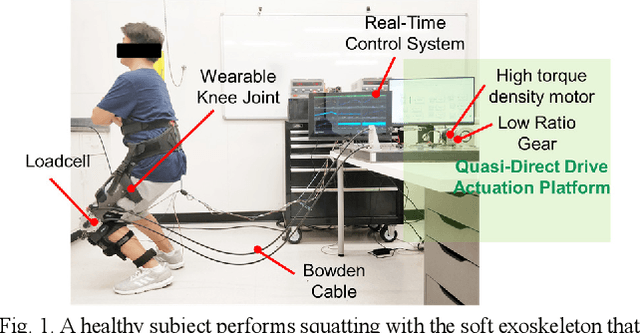

Design and Control of a Quasi-Direct Drive Soft Hybrid Knee Exoskeleton for Injury Prevention during Squatting

Feb 19, 2019

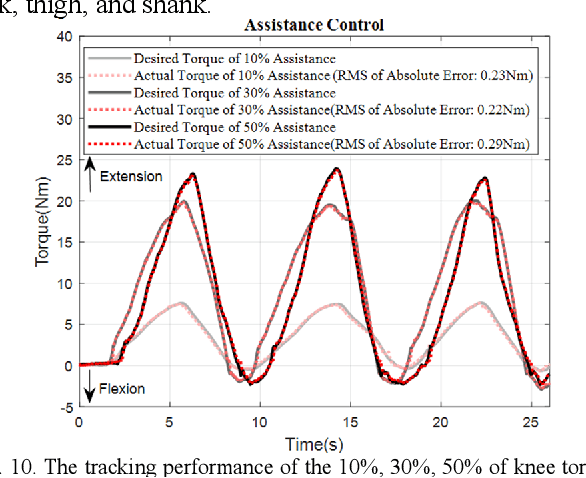

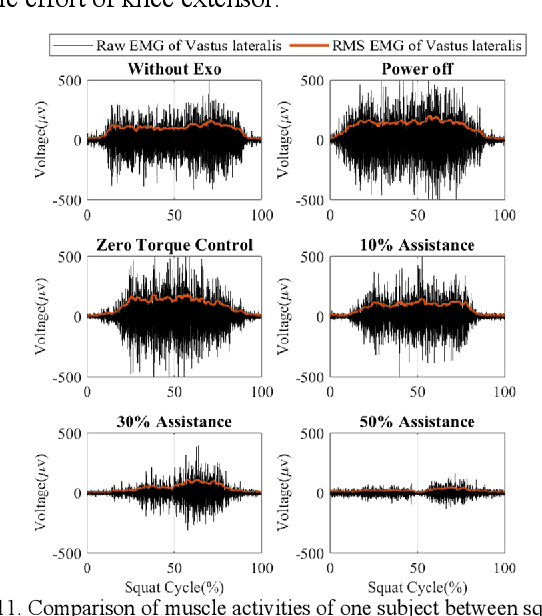

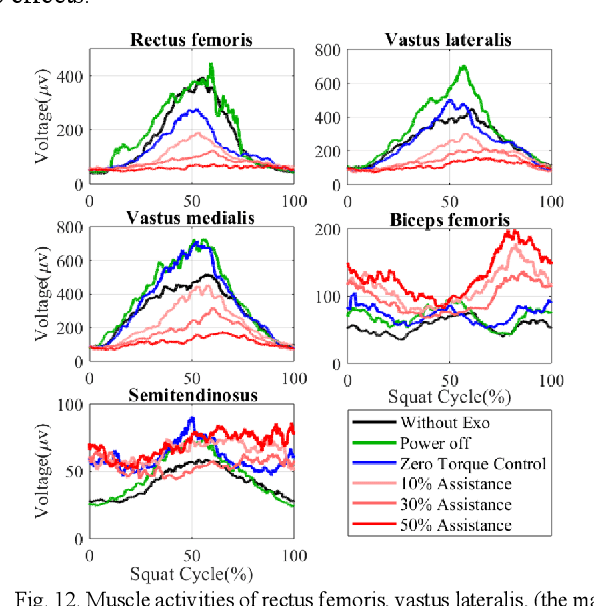

Abstract:This paper presents a new design approach for wearable robots that tackles three barriers to the mainstream practical use of exoskeletons, namely discomfort, weight of the device, and symbiotic control of the exoskeleton-human co-robot system. The hybrid exoskeleton approach, demonstrated in a soft knee industrial exoskeleton case, mitigates wearer discomfort as it aims to avoid the drawbacks of both rigid exoskeletons and textile-based soft exosuits. Quasi-direct drive actuation using high-torque-density motors minimizes the weight of the device and provides high backdrivability that does not restrict natural movement. We derive a biomechanics model that is generic to both squat and stoop lifting motions. The control algorithm symbiotically detects posture using compact inertial measurement unit (IMU) sensors to generate an assistive profile that is proportional to the biological torque generated from our model. Experimental results demonstrate that the robot exhibits 1.5 Nm torque when it is unpowered and 0.5 Nm torque with zero-torque tracking control. The efficacy of injury prevention is demonstrated with one healthy subject. The root mean square (RMS) error of torque tracking is less than 0.29 Nm (1.21% of 24 Nm peak torque) for 50% assistance of biological torque. Compared with squatting without the exoskeleton, the maximum amplitude of the knee extensor muscle activity (rectus femoris) measured by electromyography (EMG) sensors is reduced by 30% with 50% assistance of biological torque.
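The tracking figures quoted in the abstract can be reproduced with a few lines of arithmetic. The sketch below is only an illustration of the quantities involved, not the authors' controller: the helper names (`assistive_torque`, `rms_error`, `percent_of_peak`) are hypothetical, and the proportional rule is simply the "assistance proportional to biological torque" idea stated above.

```python
import math


def assistive_torque(biological_torque_nm, assistance_ratio):
    """Assistive torque as a fixed fraction of the modeled biological torque."""
    return assistance_ratio * biological_torque_nm


def rms_error(reference, measured):
    """Root mean square error between two equal-length torque traces (Nm)."""
    if len(reference) != len(measured) or not reference:
        raise ValueError("traces must be non-empty and of equal length")
    squared = [(r - m) ** 2 for r, m in zip(reference, measured)]
    return math.sqrt(sum(squared) / len(squared))


def percent_of_peak(value, peak):
    """Express a value as a percentage of a peak value."""
    return 100.0 * value / peak


if __name__ == "__main__":
    # Figures taken from the abstract: 0.29 Nm RMS error, 24 Nm peak torque.
    print(round(percent_of_peak(0.29, 24.0), 2))
```

With the abstract's numbers, 0.29 Nm relative to the 24 Nm peak works out to about 1.21%, matching the reported value.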
