David Mary

LAGRANGE, OCA

Machine learning meets false discovery rate

Aug 13, 2022

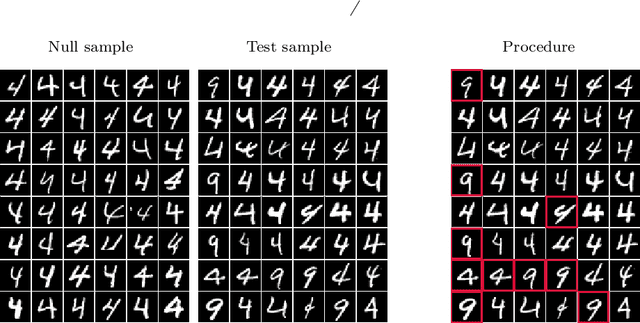

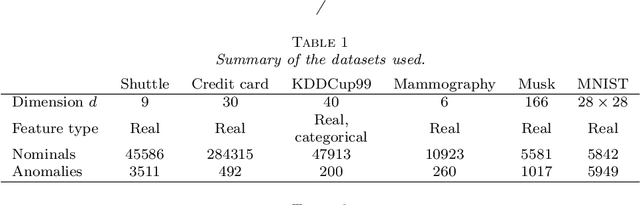

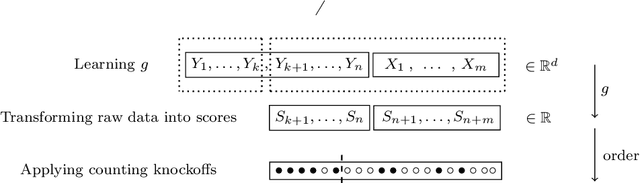

Abstract: Classical false discovery rate (FDR) controlling procedures offer strong and interpretable guarantees, but they often lack flexibility. On the other hand, recent machine learning classification algorithms, such as those based on random forests (RF) or neural networks (NN), perform very well in practice but lack interpretability and theoretical guarantees. In this paper, we bring the two together by introducing a new adaptive novelty detection procedure with FDR control, called AdaDetect. It extends the scope of recent work in the multiple testing literature, notably that of Yang et al. (2021), to the high-dimensional setting. AdaDetect is shown both to strongly control the FDR and to have power that mimics that of the oracle in a specific sense. The interest and validity of our approach are demonstrated with theoretical results, numerical experiments on several benchmark datasets, and an application to astrophysical data. In particular, while AdaDetect can be used in combination with any classifier, it is particularly efficient with RF on real-world datasets and with NN on images.
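
The abstract does not spell out the procedure. In the standard conformal novelty-detection setup (assumed here, not necessarily AdaDetect's exact construction), each test point receives a novelty score, that score is compared with the scores of null calibration points to form an empirical p-value, and the Benjamini-Hochberg (BH) step-up procedure is applied to the p-values. The minimal sketch below covers only that final stage. The function names are hypothetical and the scores are supplied by hand, whereas AdaDetect learns its score function with a classifier such as RF or NN.

```python
def conformal_pvalues(calib_scores, test_scores):
    """Empirical p-value of each test score against null calibration scores.

    Higher scores are assumed to indicate more "novel" points, so
    p_i = (1 + #{j : calib_j >= test_i}) / (n + 1).
    """
    n = len(calib_scores)
    return [(1 + sum(1 for s in calib_scores if s >= t)) / (n + 1)
            for t in test_scores]


def benjamini_hochberg(pvalues, alpha):
    """Indices rejected by the Benjamini-Hochberg step-up procedure at level alpha."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    k = 0  # largest rank whose sorted p-value lies on or below the line alpha * rank / m
    for rank, i in enumerate(order, start=1):
        if pvalues[i] <= alpha * rank / m:
            k = rank
    return sorted(order[:k])


# Hypothetical scores: in practice they would come from a trained classifier.
calib_scores = list(range(1, 20))  # 19 null calibration scores: 1, 2, ..., 19
test_scores = [50, 60, 5, 0]
pvals = conformal_pvalues(calib_scores, test_scores)
rejected = benjamini_hochberg(pvals, alpha=0.15)
print(pvals)     # [0.05, 0.05, 0.8, 1.0]
print(rejected)  # [0, 1]
```

Only the two clearly extreme test points are declared novelties. BH is a step-up rule, so a p-value that fails its own threshold can still be rejected if a larger rank passes.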

Multi-frequency image reconstruction for radio-interferometry with self-tuned regularization parameters

Mar 10, 2017

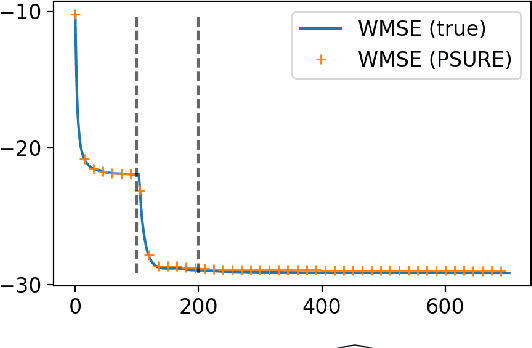

Abstract: As the world's largest radio telescope, the Square Kilometre Array (SKA) will provide radio interferometric data with unprecedented detail. Image reconstruction algorithms for radio interferometry must therefore scale to terabyte-sized images never handled before. In this work, we investigate one such 3D image reconstruction algorithm, known as MUFFIN (MUlti-Frequency image reconstruction For radio INterferometry). In particular, we focus on the challenging task of automatically finding the optimal values of the regularization parameters. In practice, finding the regularization parameters by classical grid search is computationally intensive, and it is nontrivial because no ground truth is available. We adopt a greedy strategy in which, at each iteration, the optimal parameters are found by minimizing the predicted Stein unbiased risk estimate (PSURE). The proposed self-tuned version of MUFFIN involves parallel and computationally efficient steps, and scales well with large-scale data. Finally, numerical results on a 3D image are presented to showcase the performance of the proposed approach.
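
PSURE is an unbiased estimate of the prediction error ||H(x̂_λ - x)||² computed from the data alone, so a regularization parameter λ can be tuned without ground truth. As a toy illustration of that principle (not MUFFIN's greedy, per-iteration scheme), the sketch below takes H = I, where the predicted SURE reduces to the ordinary SURE, and uses a soft-thresholding denoiser, whose divergence has a closed form. It assumes Gaussian noise with known σ. The function names, the sparse test signal and the small threshold grid are all illustrative choices.

```python
import math
import random


def soft_threshold(y, lam):
    """Soft-thresholding denoiser: shrink each entry toward 0 by lam."""
    return [math.copysign(max(abs(v) - lam, 0.0), v) for v in y]


def sure_soft_threshold(y, lam, sigma):
    """Stein unbiased risk estimate of ||soft_threshold(y, lam) - x||^2 for y = x + N(0, sigma^2 I).

    SURE = ||y - x_hat||^2 - n*sigma^2 + 2*sigma^2 * div(x_hat),
    where the divergence of soft thresholding is #{i : |y_i| > lam}.
    """
    n = len(y)
    residual = sum(min(v * v, lam * lam) for v in y)  # ||y - x_hat||^2
    divergence = sum(1 for v in y if abs(v) > lam)
    return residual - n * sigma**2 + 2 * sigma**2 * divergence


def select_lambda(y, sigma, grid):
    """Pick the threshold on `grid` with the smallest SURE (no ground truth needed)."""
    return min(grid, key=lambda lam: sure_soft_threshold(y, lam, sigma))


def squared_error(a, b):
    return sum((u - v) ** 2 for u, v in zip(a, b))


random.seed(0)
n, sigma = 1000, 1.0
x = [5.0 if i % 20 == 0 else 0.0 for i in range(n)]  # sparse toy signal
y = [xi + random.gauss(0.0, sigma) for xi in x]
grid = [0.25 * k for k in range(17)]  # candidate thresholds 0.0, 0.25, ..., 4.0
lam = select_lambda(y, sigma, grid)
print(lam, squared_error(soft_threshold(y, lam), x), squared_error(y, x))
```

The true signal x appears only in the final print, for comparison. The selection itself uses y and σ alone, which is what makes SURE-type criteria attractive when no ground truth exists.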

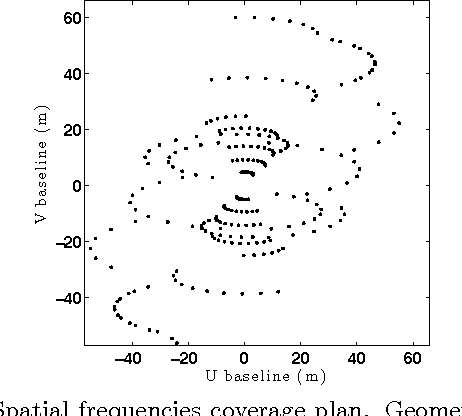

Distributed image reconstruction for very large arrays in radio astronomy

Jul 02, 2015

Abstract: Current and future radio interferometric arrays such as LOFAR and SKA are characterized by a paradox. Their large number of receptors (up to millions) theoretically allows unprecedentedly high imaging resolution. At the same time, the massive number of samples makes the data transfer and computational loads (correlation and calibration) orders of magnitude too high for any currently existing image reconstruction algorithm to achieve, or even approach, the theoretical resolution. Here we investigate decentralized and distributed image reconstruction strategies that select, transfer and process only a fraction of the total data. The loss in mean squared error (MSE) incurred by the proposed approach is evaluated theoretically and numerically on simple test cases.
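
The abstract does not detail the selection strategy. As a generic illustration of why discarding data costs MSE, the sketch below (an assumption, not the paper's method) models a 1D sky whose discrete Fourier samples play the role of visibilities, keeps only a subset of them, and forms a zero-filled inverse transform, similar in spirit to a dirty image. By Parseval's theorem, the MSE of this reconstruction equals the energy of the discarded Fourier samples divided by n². The noise-free setting, the low-frequency selection rule and all function names are hypothetical.

```python
import cmath
import math


def dft(x):
    """Forward DFT: X_k = sum_t x_t * exp(-2j*pi*k*t/n)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]


def zero_filled_image(vis, keep):
    """Inverse DFT using only the samples indexed by `keep`; the others are treated as 0."""
    n = len(vis)
    return [(sum(vis[k] * cmath.exp(2j * math.pi * k * t / n) for k in keep) / n).real
            for t in range(n)]


def low_freq_indices(n, cutoff):
    """Indices {0..cutoff} and {n-cutoff..n-1}: a Hermitian-symmetric set, so the image stays real."""
    return sorted(set(range(cutoff + 1)) | set(range(n - cutoff, n)))


def mse(a, b):
    return sum((u - v) ** 2 for u, v in zip(a, b)) / len(a)


n = 32
sky = [0.0] * n
sky[5], sky[20] = 1.0, 0.5  # two point sources
vis = dft(sky)

for cutoff in (4, 8, 12, 16):
    keep = low_freq_indices(n, cutoff)
    err = mse(sky, zero_filled_image(vis, keep))
    print(f"kept {len(keep):2d}/{n} samples  MSE = {err:.4f}")
```

With cutoff = 16 every sample is kept and the sky is recovered up to rounding. Each smaller cutoff drops more samples and the MSE grows accordingly.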

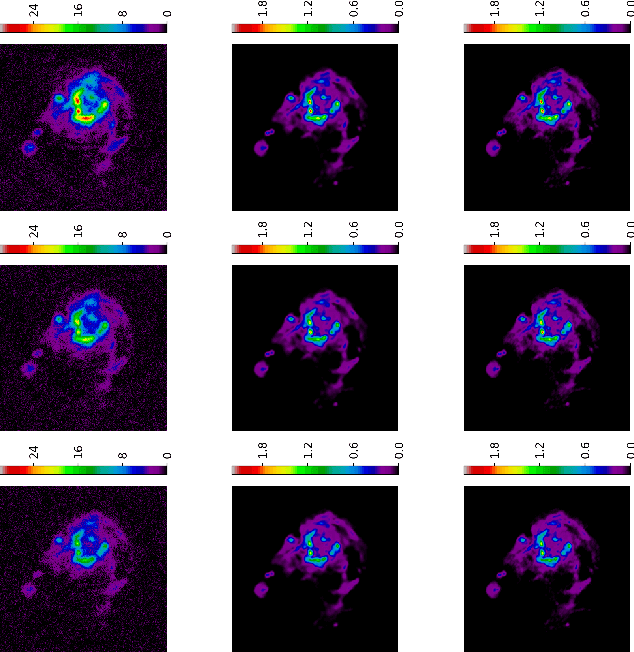

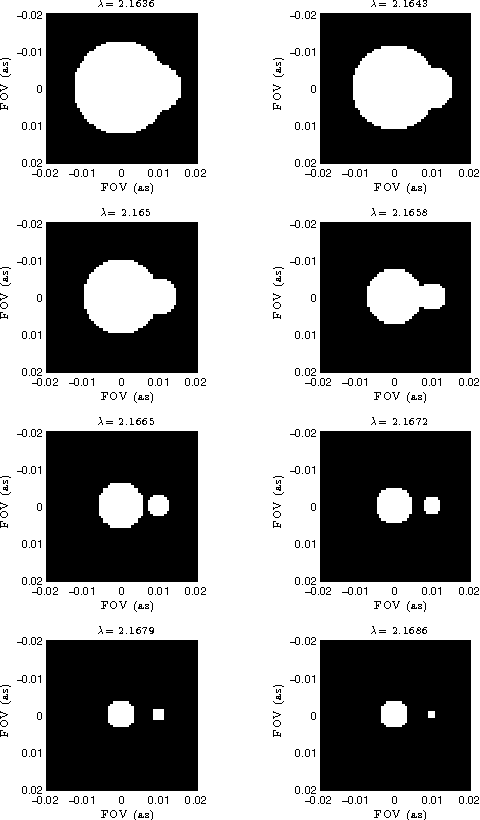

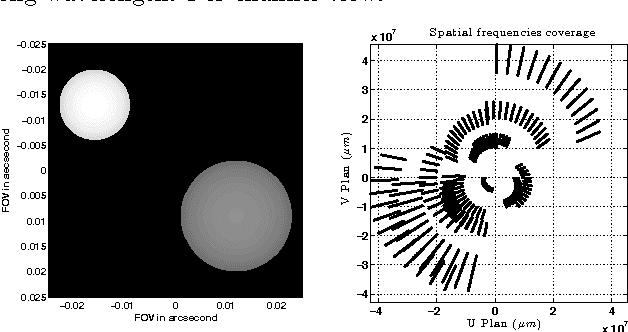

PAINTER: a spatio-spectral image reconstruction algorithm for optical interferometry

Sep 27, 2014

Abstract: Astronomical optical interferometers sample the Fourier transform of the intensity distribution of a source at the observation wavelength. Because of rapid perturbations caused by atmospheric turbulence, the phases of the complex Fourier samples (visibilities) cannot be exploited directly. Consequently, specific image reconstruction methods have been devised over the last few decades. Modern polychromatic optical interferometric instruments are now paving the way to multiwavelength imaging. This paper is devoted to the derivation of a spatio-spectral (3D) image reconstruction algorithm, coined PAINTER (Polychromatic opticAl INTErferometric Reconstruction software). The algorithm relies on an iterative process that alternates between estimating the polychromatic images and estimating the complex visibilities. The complex visibilities are estimated not only from squared moduli and closure phases, but also from differential phases, which help to better constrain the polychromatic reconstruction. Simulations on synthetic data illustrate the efficiency of the algorithm, and in particular the relevance of injecting a differential-phase model into the reconstruction.
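
Closure phases are what survives the turbulence. If each telescope i adds an unknown phase θ_i, the measured visibility on baseline (i, j) becomes V_ij exp(i(θ_i - θ_j)), and these errors cancel around any closed triangle of baselines, while the squared moduli are unaffected. The sketch below checks this numerically for three telescopes. The visibility values and function names are illustrative, and it does not reproduce PAINTER's iterative reconstruction or its differential-phase model.

```python
import cmath
import random


def corrupt(v, theta_i, theta_j):
    """Telescope-based phase errors: V_ij -> V_ij * exp(1j * (theta_i - theta_j))."""
    return v * cmath.exp(1j * (theta_i - theta_j))


def closure_phase(v12, v23, v13):
    """Closure phase of triangle (1, 2, 3): arg(V12 * V23 * V31), with V31 = conj(V13)."""
    return cmath.phase(v12 * v23 * v13.conjugate())


def same_angle(a, b, tol=1e-9):
    """Compare two angles modulo 2*pi."""
    return abs(cmath.exp(1j * a) - cmath.exp(1j * b)) < tol


random.seed(1)
# Hypothetical "true" visibilities on the three baselines of a telescope triangle.
v12, v23, v13 = cmath.rect(0.8, 0.3), cmath.rect(0.6, -1.1), cmath.rect(0.5, 2.0)
# Random atmospheric phase per telescope, redrawn at every short exposure.
theta = [random.uniform(-cmath.pi, cmath.pi) for _ in range(3)]
o12 = corrupt(v12, theta[0], theta[1])
o23 = corrupt(v23, theta[1], theta[2])
o13 = corrupt(v13, theta[0], theta[2])

print(same_angle(closure_phase(o12, o23, o13), closure_phase(v12, v23, v13)))  # True
print(abs(abs(o12) ** 2 - abs(v12) ** 2) < 1e-12)  # True: squared modulus is unaffected
```

The individual baseline phases are scrambled, but the closure phase and squared moduli are preserved. This is why such observables feed the reconstruction instead of the raw phases.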

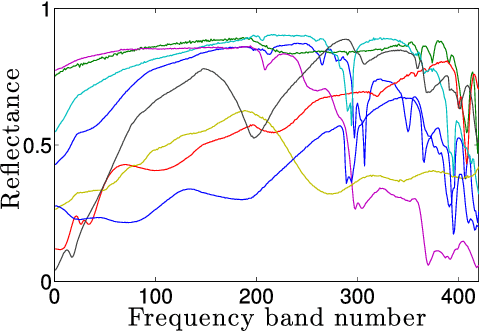

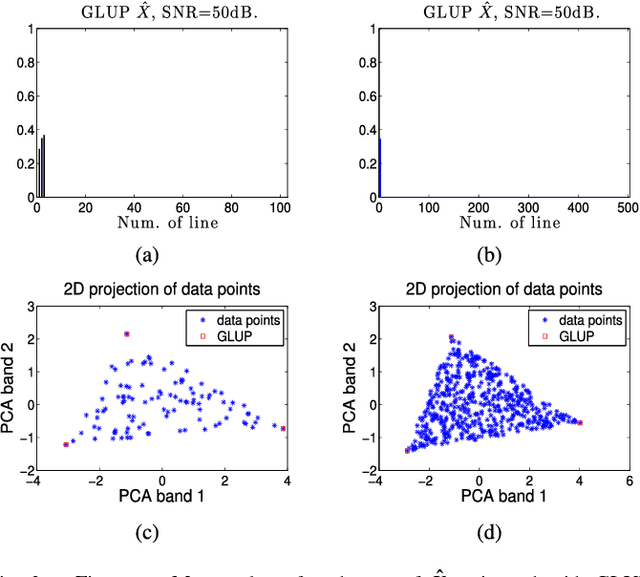

Blind and fully constrained unmixing of hyperspectral images

Mar 03, 2014

Abstract: This paper addresses the problem of blind and fully constrained unmixing of hyperspectral images. Unmixing is performed without the use of any dictionary, and assumes that the number of constituent materials in the scene and their spectral signatures are unknown. The estimated abundances satisfy the desired sum-to-one and nonnegativity constraints. Two models of increasing complexity are developed for this challenging task, depending on how noise interacts with the hyperspectral data. The first leads to a convex optimization problem and is solved with the Alternating Direction Method of Multipliers (ADMM). The second accounts for signal-dependent noise and is addressed with a Reweighted Least Squares algorithm. Experiments on synthetic and real data demonstrate the effectiveness of our approach.
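
Under the linear mixing model commonly used for hyperspectral data (an assumption here), a pixel spectrum satisfies y ≈ M a. The columns of M are endmember signatures and a holds the abundances. The sketch below solves only the abundance subproblem with known endmembers. It enforces the sum-to-one and nonnegativity constraints by projected gradient descent with a Euclidean projection onto the probability simplex. This solver is swapped in for illustration only. The paper's method is blind (endmembers unknown) and relies on ADMM and reweighted least squares, and the endmember matrix below is made up.

```python
def project_simplex(v):
    """Euclidean projection onto {a : a_i >= 0, sum(a) = 1} (sort-based algorithm)."""
    u = sorted(v, reverse=True)
    cumsum, theta = 0.0, 0.0
    for j, uj in enumerate(u, start=1):
        cumsum += uj
        t = (cumsum - 1.0) / j
        if uj - t > 0:
            theta = t  # the last j passing this test defines the threshold
    return [max(vi - theta, 0.0) for vi in v]


def matvec(M, a):
    return [sum(mij * aj for mij, aj in zip(row, a)) for row in M]


def fcls_projected_gradient(M, y, iters=2000):
    """Minimize 0.5 * ||y - M a||^2 subject to a >= 0, sum(a) = 1, by projected gradient."""
    p = len(M[0])
    step = 1.0 / sum(mij * mij for row in M for mij in row)  # 1/||M||_F^2 <= 1/L, a safe step
    a = [1.0 / p] * p  # start at the centre of the simplex
    for _ in range(iters):
        r = [mi - yi for mi, yi in zip(matvec(M, a), y)]  # residual M a - y
        grad = [sum(M[i][k] * r[i] for i in range(len(M))) for k in range(p)]  # M^T r
        a = project_simplex([ak - step * gk for ak, gk in zip(a, grad)])
    return a


# Hypothetical endmember signatures (rows: 5 spectral bands, columns: 3 materials).
M = [[1.0, 0.2, 0.1],
     [0.8, 0.5, 0.2],
     [0.3, 0.9, 0.4],
     [0.1, 0.6, 0.9],
     [0.2, 0.2, 1.0]]
a_true = [0.5, 0.3, 0.2]
y = matvec(M, a_true)  # noise-free mixed pixel
a_hat = fcls_projected_gradient(M, y)
print([round(v, 4) for v in a_hat])  # [0.5, 0.3, 0.2]
```

Every iterate lies on the simplex, so the constraints hold at every step rather than only at convergence. With noise-free data and a full-column-rank M, the true abundances are recovered.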
