Daniel Köglmayr

Controlling dynamical systems into unseen target states using machine learning

Dec 13, 2024

Abstract: We present a novel, model-free, and data-driven methodology for controlling complex dynamical systems into previously unseen target states, including those with significantly different and complex dynamics. Leveraging a parameter-aware realization of next-generation reservoir computing, our approach accurately predicts system behavior in unobserved parameter regimes, enabling control over transitions to arbitrary target states. Crucially, this includes states with dynamics that differ fundamentally from known regimes, such as shifts from periodic to intermittent or chaotic behavior. The method's parameter-awareness facilitates non-stationary control, ensuring smooth transitions between states. By extending the applicability of machine learning-based control mechanisms to previously inaccessible target dynamics, this methodology opens the door to transformative new applications while maintaining exceptional efficiency. Our results highlight reservoir computing as a powerful alternative to traditional methods for dynamic system control.

Extrapolating tipping points and simulating non-stationary dynamics of complex systems using efficient machine learning

Dec 11, 2023

Abstract: Model-free and data-driven prediction of tipping point transitions in nonlinear dynamical systems is a challenging and outstanding task in complex systems science. We propose a novel, fully data-driven machine learning algorithm based on next-generation reservoir computing to extrapolate the bifurcation behavior of nonlinear dynamical systems using stationary training data samples. We show that this method can extrapolate tipping point transitions. Furthermore, we demonstrate that the trained next-generation reservoir computing architecture can be used to predict non-stationary dynamics with time-varying bifurcation parameters. In doing so, post-tipping point dynamics of unseen parameter regions can be simulated.
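
The idea of extrapolating into unseen parameter regions from stationary training samples can be illustrated with a toy sketch. This is not the authors' setup: the logistic map, the training values of `r`, the rescaled parameter `p`, and the cubic feature library are all assumptions chosen for illustration, and the helper names are hypothetical. As in next-generation reservoir computing, the model is a linear readout on polynomial features, here with the bifurcation parameter supplied as an extra input. Plain least squares is used because the toy data are noise-free; with noisy data a ridge penalty is commonly added.

```python
import numpy as np
from itertools import combinations_with_replacement

def logistic_trajectory(r, n_steps, x0=0.4, discard=100):
    """Iterate x -> r*x*(1-x); return n_steps states after dropping a transient."""
    x, out = x0, []
    for i in range(n_steps + discard):
        if i >= discard:
            out.append(x)
        x = r * x * (1.0 - x)
    return np.array(out)

def poly_features(x, p, degree=3):
    """Constant plus all monomials in (x, p) up to the given total degree."""
    base = [x, p]
    cols = [np.ones_like(x)]
    for d in range(1, degree + 1):
        for combo in combinations_with_replacement(range(2), d):
            col = np.ones_like(x)
            for idx in combo:
                col = col * base[idx]
            cols.append(col)
    return np.stack(cols, axis=1)

# Assumed training set: stationary trajectories at four chaotic parameter values.
r_train = [3.6, 3.7, 3.8, 3.9]
r_center, r_scale = 3.75, 0.15  # rescale r so the features stay well conditioned

xs, ps, ys = [], [], []
for r in r_train:
    traj = logistic_trajectory(r, 301)
    xs.append(traj[:-1])                              # current state
    ys.append(traj[1:])                               # next state (target)
    ps.append(np.full(300, (r - r_center) / r_scale))  # parameter input

F = poly_features(np.concatenate(xs), np.concatenate(ps))
weights, *_ = np.linalg.lstsq(F, np.concatenate(ys), rcond=None)

def rollout(weights, r, x0, n_steps):
    """Free-running prediction at parameter r, feeding outputs back as inputs."""
    p = np.array([(r - r_center) / r_scale])
    x, out = x0, []
    for _ in range(n_steps):
        x = (poly_features(np.array([x]), p) @ weights)[0]
        out.append(x)
    return np.array(out)

# Unseen regime: r = 3.2 is periodic, while all training data were chaotic.
r_unseen = 3.2
pred = rollout(weights, r_unseen, x0=0.4, n_steps=200)
true = logistic_trajectory(r_unseen, 201, x0=0.4, discard=0)[1:]
max_error = np.max(np.abs(pred - true))
```

The extrapolation works here because the true map is itself a cubic polynomial in `(x, p)`, so the feature library contains it exactly. For systems where that does not hold, the quality of extrapolation depends on the chosen features and cannot be inferred from this toy.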

Controlling dynamical systems to complex target states using machine learning: next-generation vs. classical reservoir computing

Jul 14, 2023

Abstract: Controlling nonlinear dynamical systems using machine learning makes it possible to drive systems not only into simple behavior like periodicity but also into more complex arbitrary dynamics. For this, it is crucial that a machine learning system can be trained to reproduce the target dynamics sufficiently well. Using the example of forcing a chaotic parametrization of the Lorenz system into intermittent dynamics, we first show that classical reservoir computing excels at this task. In a next step, we compare those results, based on different amounts of training data, to an alternative setup in which next-generation reservoir computing is used instead. It turns out that while delivering comparable performance for usual amounts of training data, next-generation RC significantly outperforms classical RC in situations where only very limited data is available. This opens up further practical control applications in real-world problems where data is restricted.

Exploring the limits of multifunctionality across different reservoir computers

May 23, 2022

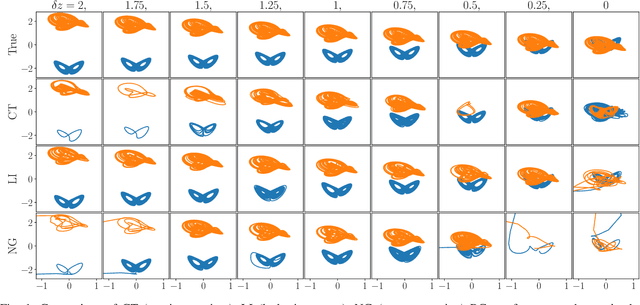

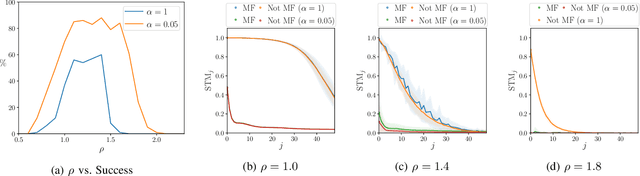

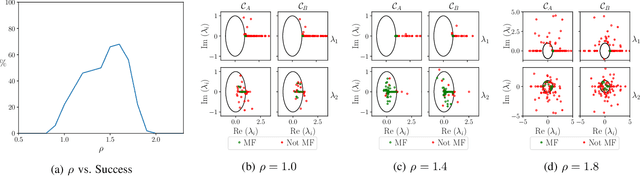

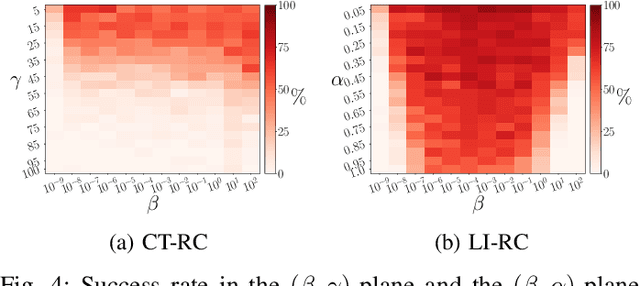

Abstract: Multifunctional neural networks are capable of performing more than one task without changing any network connections. In this paper we explore the performance of a continuous-time, leaky-integrator, and next-generation 'reservoir computer' (RC), when trained on tasks which test the limits of multifunctionality. In the first task we train each RC to reconstruct a coexistence of chaotic attractors from different dynamical systems. By moving the data describing these attractors closer together, we find that the extent to which each RC can reconstruct both attractors diminishes as they begin to overlap in state space. To provide a greater understanding of this inhibiting effect, in the second task we train each RC to reconstruct a coexistence of two circular orbits which differ only in the direction of rotation. We examine the critical effects that certain parameters can have in each RC to achieve multifunctionality in this extreme case of completely overlapping training data.
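
A minimal sketch of what "completely overlapping training data" means in the second task, assuming unit-radius circles sampled at a fixed step (the radius, step size `dt`, and function names are illustrative choices, not taken from the paper). Both orbits occupy the same points in state space, yet the same point has a different successor on each orbit, so the current state alone does not determine the next one.

```python
import numpy as np

def circular_orbit(n_points, direction, radius=1.0, dt=0.05):
    """Sample (x, y) on a circle; direction=+1 is counter-clockwise, -1 clockwise."""
    angle = direction * dt * np.arange(n_points)
    return radius * np.stack([np.cos(angle), np.sin(angle)], axis=1)

def sweep_sign(orbit):
    """Sign of the mean z-component of r x dr: +1 counter-clockwise, -1 clockwise."""
    step = np.diff(orbit, axis=0)
    cross = orbit[:-1, 0] * step[:, 1] - orbit[:-1, 1] * step[:, 0]
    return np.sign(cross.mean())

ccw = circular_orbit(400, +1)
cw = circular_orbit(400, -1)

# Both data sets lie on the same circle in state space.
same_circle = np.allclose(np.linalg.norm(ccw, axis=1), np.linalg.norm(cw, axis=1))

# They share the starting point (1, 0) but leave it in opposite directions.
same_start = np.allclose(ccw[0], cw[0])
different_successor = not np.allclose(ccw[1], cw[1])
```

Here `sweep_sign(ccw)` and `sweep_sign(cw)` have opposite signs, which is the only feature separating the two training sets.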
