Daiki Nishiyama

Explainable Classifier for Malignant Lymphoma Subtyping via Cell Graph and Image Fusion

Mar 02, 2025

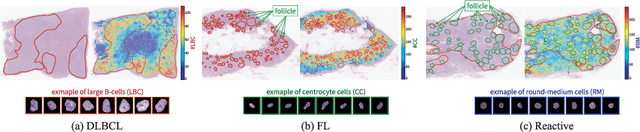

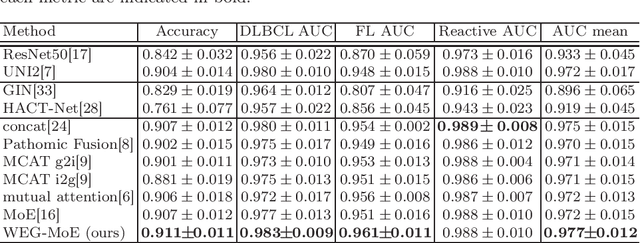

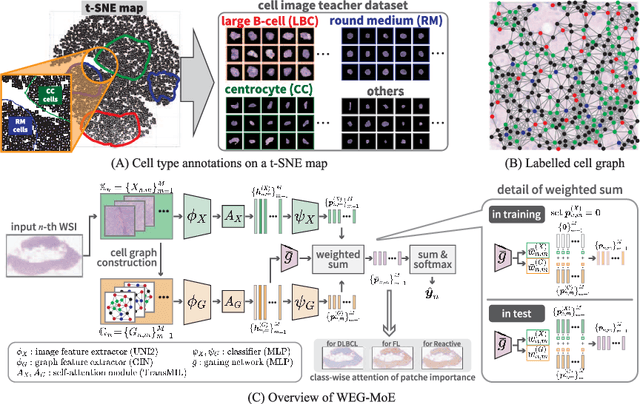

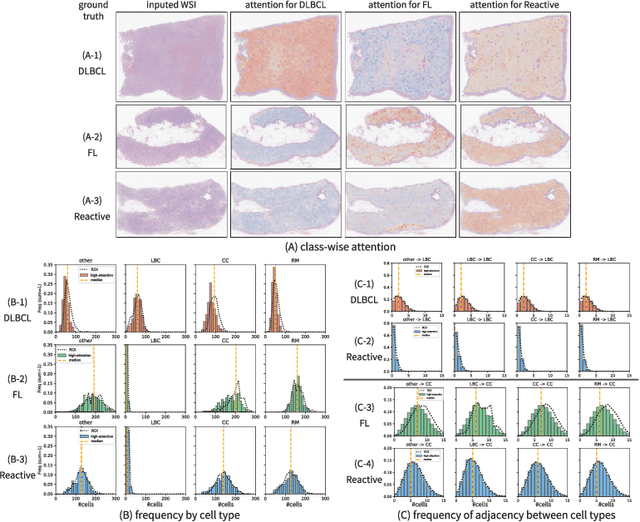

Abstract:Malignant lymphoma subtype classification directly impacts treatment strategies and patient outcomes, necessitating classification models that achieve both high accuracy and sufficient explainability. This study proposes a novel explainable Multi-Instance Learning (MIL) framework that identifies subtype-specific Regions of Interest (ROIs) from Whole Slide Images (WSIs) while integrating cell distribution characteristics and image information. Our framework simultaneously addresses three objectives: (1) indicating appropriate ROIs for each subtype, (2) explaining the frequency and spatial distribution of characteristic cell types, and (3) achieving high-accuracy subtyping by leveraging both image and cell-distribution modalities. The proposed method fuses cell graph and image features extracted from each patch in the WSI using a Mixture-of-Experts (MoE) approach and classifies subtypes within an MIL framework. Experiments on a dataset of 1,233 WSIs demonstrate that our approach achieves state-of-the-art accuracy among ten comparative methods and provides region-level and cell-level explanations that align with a pathologist's perspectives.

Adversarial Attacks on Hidden Tasks in Multi-Task Learning

May 28, 2024Abstract:Deep learning models are susceptible to adversarial attacks, where slight perturbations to input data lead to misclassification. Adversarial attacks become increasingly effective with access to information about the targeted classifier. In the context of multi-task learning, where a single model learns multiple tasks simultaneously, attackers may aim to exploit vulnerabilities in specific tasks with limited information. This paper investigates the feasibility of attacking hidden tasks within multi-task classifiers, where model access regarding the hidden target task and labeled data for the hidden target task are not available, but model access regarding the non-target tasks is available. We propose a novel adversarial attack method that leverages knowledge from non-target tasks and the shared backbone network of the multi-task model to force the model to forget knowledge related to the target task. Experimental results on CelebA and DeepFashion datasets demonstrate the effectiveness of our method in degrading the accuracy of hidden tasks while preserving the performance of visible tasks, contributing to the understanding of adversarial vulnerabilities in multi-task classifiers.

CAMRI Loss: Improving Recall of a Specific Class without Sacrificing Accuracy

Sep 22, 2022

Abstract:In real-world applications of multi-class classification models, misclassification in an important class (e.g., stop sign) can be significantly more harmful than in other classes (e.g., speed limit). In this paper, we propose a loss function that can improve the recall of an important class while maintaining the same level of accuracy as the case using cross-entropy loss. For our purpose, we need to make the separation of the important class better than the other classes. However, existing methods that give a class-sensitive penalty for cross-entropy loss do not improve the separation. On the other hand, the method that gives a margin to the angle between the feature vectors and the weight vectors of the last fully connected layer corresponding to each feature can improve the separation. Therefore, we propose a loss function that can improve the separation of the important class by setting the margin only for the important class, called Class-sensitive Additive Angular Margin Loss (CAMRI Loss). CAMRI loss is expected to reduce the variance of angles between features and weights of the important class relative to other classes due to the margin around the important class in the feature space by adding a penalty to the angle. In addition, concentrating the penalty only on the important classes hardly sacrifices the separation of the other classes. Experiments on CIFAR-10, GTSRB, and AwA2 showed that the proposed method could improve up to 9% recall improvement on cross-entropy loss without sacrificing accuracy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge