Cristian Jimenez-Romero

Multi-Agent Systems Powered by Large Language Models: Applications in Swarm Intelligence

Mar 05, 2025

Abstract: This work examines the integration of large language models (LLMs) into multi-agent simulations by replacing the hard-coded programs of agents with LLM-driven prompts. The proposed approach is showcased in the context of two examples of complex systems from the field of swarm intelligence: ant colony foraging and bird flocking. Central to this study is a toolchain that integrates LLMs with the NetLogo simulation platform, leveraging its Python extension to enable communication with GPT-4o via the OpenAI API. This toolchain facilitates prompt-driven behavior generation, allowing agents to respond adaptively to environmental data. For both example applications mentioned above, we employ both structured, rule-based prompts and autonomous, knowledge-driven prompts. Our work demonstrates how this toolchain enables LLMs to study self-organizing processes and induce emergent behaviors within multi-agent environments, paving the way for new approaches to exploring intelligent systems and modeling swarm intelligence inspired by natural phenomena. We provide the code, including simulation files and data at https://github.com/crjimene/swarm_gpt.
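As a rough illustration of what a prompt-driven agent step could look like, the sketch below turns an agent's observation into a prompt and parses the reply into an action. It is not the authors' toolchain: the prompt wording, the action names, the observation keys, and the `fake_llm` stand-in (used here instead of a real GPT-4o call so the code runs offline) are all assumptions made for this example.

```python
import json

def build_prompt(observation):
    """Turn an agent's sensor readings into a text prompt (format is hypothetical)."""
    return (
        "You control one ant in a foraging simulation.\n"
        f"Observation: {json.dumps(observation, sort_keys=True)}\n"
        'Reply with JSON like {"action": "forward"}; '
        "allowed actions: forward, left, right."
    )

def parse_action(reply, allowed=("forward", "left", "right"), default="forward"):
    """Extract a valid action from the model reply, falling back to a default."""
    try:
        action = json.loads(reply).get("action")
    except (json.JSONDecodeError, AttributeError):
        # Reply was not JSON, or was JSON but not an object.
        return default
    return action if action in allowed else default

def agent_step(observation, llm):
    """One decision step; `llm` is any callable mapping prompt text to reply text."""
    return parse_action(llm(build_prompt(observation)))

def fake_llm(prompt):
    # Hypothetical stand-in for a real model call, so the sketch runs offline.
    return '{"action": "left"}' if '"pheromone_left": 0.8' in prompt else "not json"
```

Passing the model as a callable keeps the simulation loop independent of any particular API client, and the fallback in `parse_action` keeps the agent moving when a reply cannot be parsed.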

Exploring hyper-parameter spaces of neuroscience models on high performance computers with Learning to Learn

Feb 28, 2022

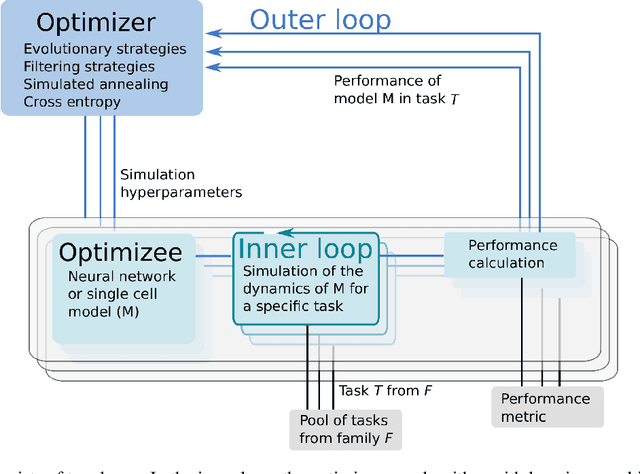

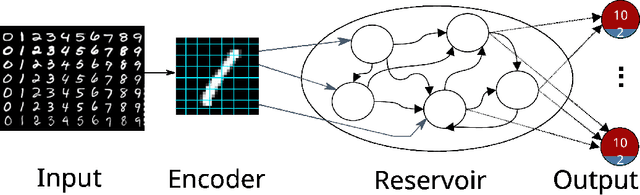

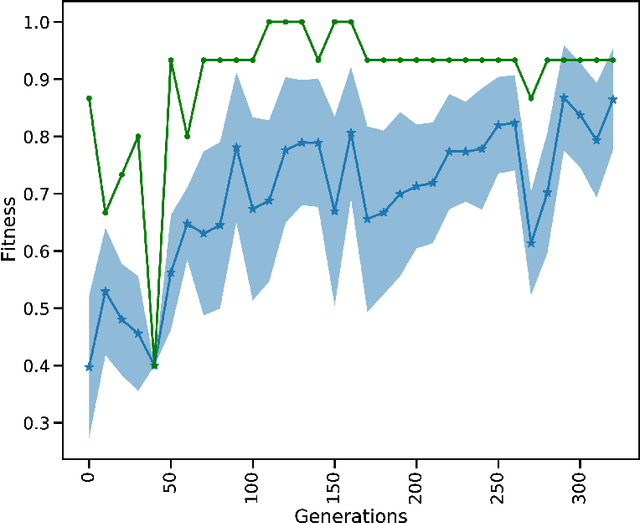

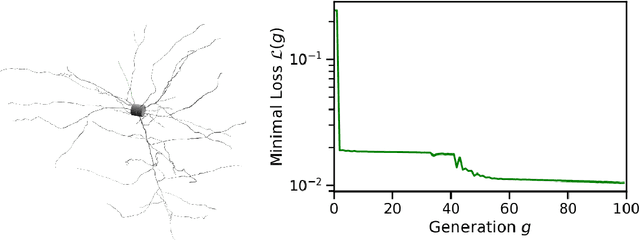

Abstract: Neuroscience models commonly have a high number of degrees of freedom, and only specific regions within the parameter space are able to produce dynamics of interest. This makes the development of tools and strategies to efficiently find these regions highly important for advancing brain research. Exploring the high-dimensional parameter space using numerical simulations has been a frequently used technique in recent years in many areas of computational neuroscience. Today, high performance computing (HPC) can provide a powerful infrastructure to speed up explorations and increase our general understanding of the model's behavior in reasonable times.

Designing Behaviour in Bio-inspired Robots Using Associative Topologies of Spiking-Neural-Networks

Sep 24, 2015

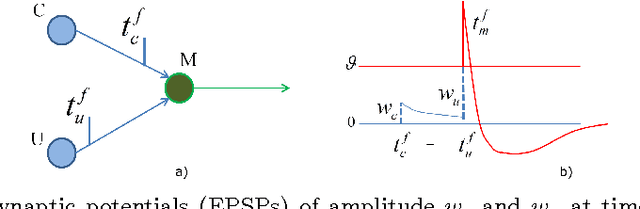

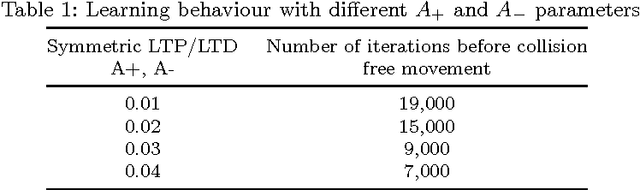

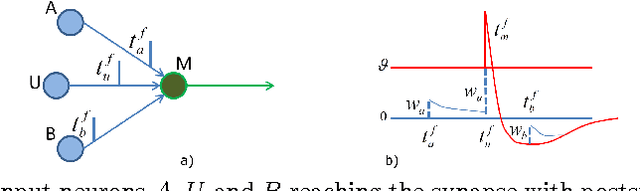

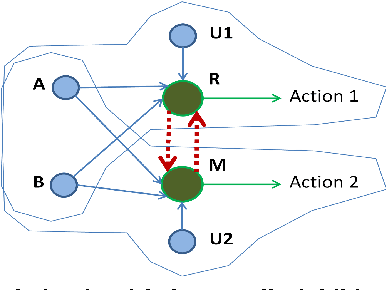

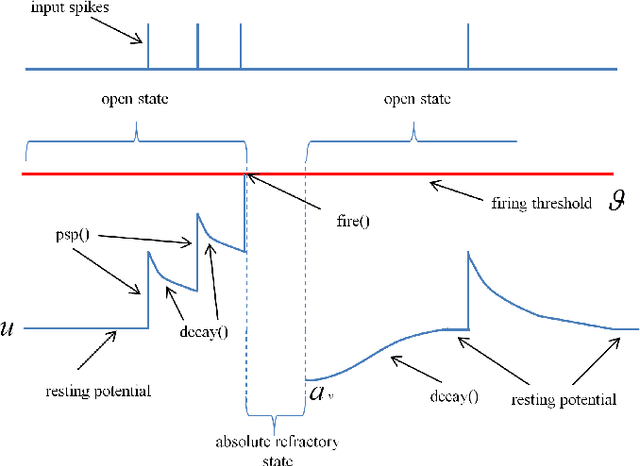

Abstract: This study explores the design and control of the behaviour of agents and robots using simple circuits of spiking neurons and Spike Timing Dependent Plasticity (STDP) as a mechanism of associative and unsupervised learning. Based on "reward and punishment" classical conditioning, it is demonstrated that these robots learnt to identify and avoid obstacles as well as to identify and look for rewarding stimuli. Using the simulation and programming environment NetLogo, a software engine for the Integrate and Fire model was developed, which allowed us to monitor in discrete time steps the dynamics of each single neuron, synapse and spike in the proposed neural networks. These spiking neural networks (SNN) served as simple brains for the experimental robots. The Lego Mindstorms robot kit was used for the embodiment of the simulated agents. In this paper the topological building blocks are presented, as well as the neural parameters required to reproduce the experiments. This paper summarizes the resulting behaviour as well as the observed dynamics of the neural circuits. The link to the NetLogo code is included in the annex.
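For readers unfamiliar with the two mechanisms named in this abstract, the following is a minimal Python sketch of a discrete-time leaky integrate-and-fire neuron and a pair-based STDP rule. It is not the paper's NetLogo engine: the function names and all parameter values (threshold, decay, learning amplitudes, time constant) are assumptions chosen only for illustration.

```python
import math

def simulate_lif(input_current, threshold=1.0, decay=0.9, reset=0.0):
    """Discrete-time leaky integrate-and-fire neuron.

    Returns the list of step indices at which the neuron spiked.
    """
    v = reset
    spikes = []
    for t, i_in in enumerate(input_current):
        v = decay * v + i_in          # leak the membrane potential, then integrate input
        if v >= threshold:            # fire and reset once the threshold is reached
            spikes.append(t)
            v = reset
    return spikes

def stdp_delta(dt, a_plus=0.1, a_minus=0.12, tau=5.0):
    """Pair-based STDP weight change for dt = t_post - t_pre (in steps)."""
    if dt > 0:
        return a_plus * math.exp(-dt / tau)    # pre fires before post: strengthen
    if dt < 0:
        return -a_minus * math.exp(dt / tau)   # post fires before pre: weaken
    return 0.0
```

With a constant input of 0.6 per step, `simulate_lif([0.6] * 5)` spikes at steps 1 and 3, since the potential reaches 1.14 on every second step before being reset.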

A Model for Foraging Ants, Controlled by Spiking Neural Networks and Double Pheromones

Sep 18, 2015

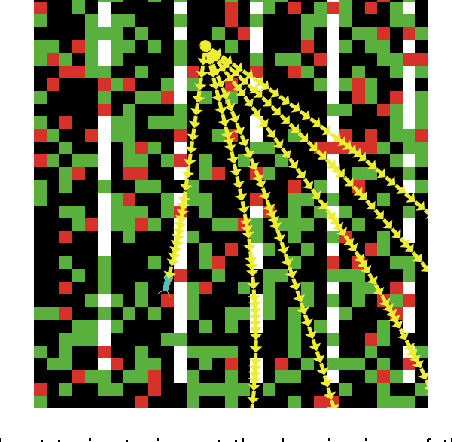

Abstract: A model of an Ant System where ants are controlled by a spiking neural circuit and a second order pheromone mechanism in a foraging task is presented. A neural circuit is trained for individual ants and subsequently the ants are exposed to a virtual environment where a swarm of ants performs a resource foraging task. The model comprises an associative and unsupervised learning strategy for the neural circuit of the ant. The neural circuit adapts to the environment by means of classical conditioning. The initially unknown environment includes different types of stimuli representing food and obstacles which, when they come in direct contact with the ant, elicit a reflex response in the motor neural system of the ant: moving towards or away from the source of the stimulus. The ants are released on a landscape with multiple food sources where one ant alone would have difficulty harvesting the landscape to maximum efficiency. The introduction of a double pheromone mechanism yields better results than traditional ant colony optimization strategies. Traditional ant systems include mainly a positive reinforcement pheromone. This approach uses a second pheromone that acts as a marker for forbidden paths (negative feedback). This blockade is not permanent and is controlled by the evaporation rate of the pheromones. The combined action of both pheromones acts as a collective stigmergic memory of the swarm, which reduces the search space of the problem. This paper explores how the adaptation and learning abilities observed in biologically inspired cognitive architectures are synergistically enhanced by swarm optimization strategies. The model portrays two forms of artificially intelligent behaviour: at the individual level the spiking neural network is the main controller, and at the collective level the pheromone distribution is a map towards the solution that emerges from the colony.
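The double pheromone idea can be sketched in a few lines of Python. This is only an illustration under assumed details, not the paper's model: the grid representation, the 4-neighbour movement, the `block_level` threshold, and the function names are all hypothetical. An attractive field guides the ant, a repellent field marks forbidden cells, and evaporation lifts the blockade over time.

```python
def evaporate(field, rate):
    """Return a copy of the pheromone field with every cell decayed by `rate`."""
    return [[value * (1.0 - rate) for value in row] for row in field]

def choose_next_cell(pos, attract, repel, block_level=0.5):
    """Pick the 4-neighbour with the most attractive pheromone.

    Cells whose repellent pheromone is at or above `block_level` are treated
    as forbidden. Returns None when every neighbour is blocked.
    """
    rows, cols = len(attract), len(attract[0])
    r, c = pos
    candidates = []
    for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        nr, nc = r + dr, c + dc
        inside = 0 <= nr < rows and 0 <= nc < cols
        if inside and repel[nr][nc] < block_level:
            candidates.append((attract[nr][nc], (nr, nc)))
    if not candidates:
        return None
    return max(candidates)[1]   # highest attractive pheromone wins

# Hypothetical 3x3 example: the strongest trail (0, 1) is marked as forbidden.
attract = [[0.0, 1.0, 0.0], [0.0, 0.0, 0.8], [0.0, 0.0, 0.0]]
repel = [[0.0, 0.9, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
first_choice = choose_next_cell((1, 1), attract, repel)
later_choice = choose_next_cell((1, 1), attract, evaporate(repel, 0.5))
```

In this example the ant at the centre first avoids the blocked cell and heads to `(1, 2)`; after one evaporation step the repellent level drops below the threshold and the stronger trail at `(0, 1)` becomes available again, which mirrors the non-permanent blockade described in the abstract.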
