Clément Luneau

Information theoretic limits of learning a sparse rule

Jun 19, 2020

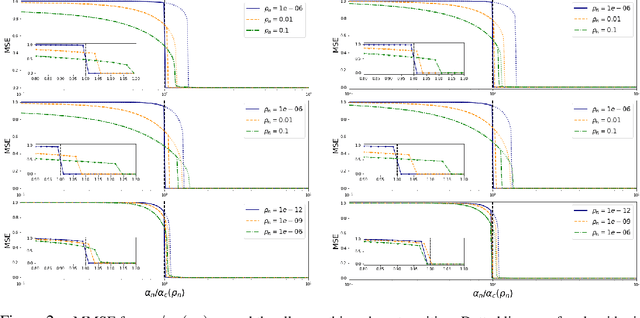

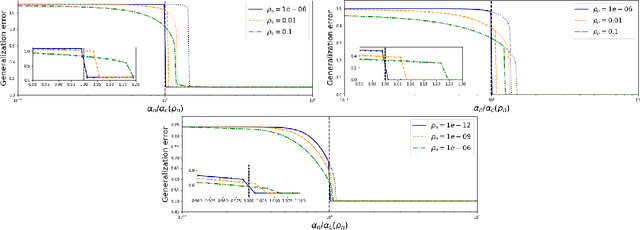

Abstract: We consider generalized linear models in regimes where the number of nonzero components of the signal and the number of accessible data points are both sublinear with respect to the size of the signal. We prove a variational formula for the asymptotic mutual information per sample when the system size grows to infinity. This result allows us to heuristically derive an expression for the minimum mean-square error (MMSE) of the Bayesian estimator. We then find that, for discrete signals and suitable vanishing scalings of the sparsity and sampling rate, the MMSE displays an all-or-nothing phenomenon: it jumps sharply from its maximum value to zero at a critical sampling rate. The all-or-nothing phenomenon has recently been proved to occur in high-dimensional linear regression. Our analysis goes beyond the linear case and applies to learning the weights of a perceptron with a general activation function in a teacher-student scenario. In particular, we discuss an all-or-nothing phenomenon for the generalization error with a sublinear set of training examples.

Entropy and mutual information in models of deep neural networks

Oct 29, 2018

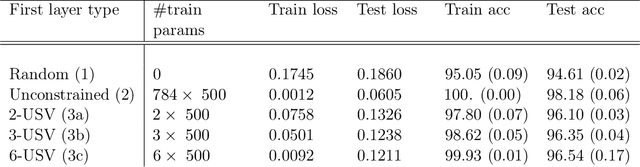

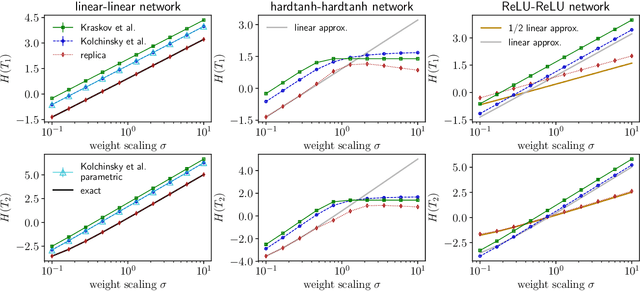

Abstract: We examine a class of deep learning models with a tractable method to compute information-theoretic quantities. Our contributions are three-fold: (i) We show how entropies and mutual informations can be derived from heuristic statistical physics methods, under the assumption that weight matrices are independent and orthogonally invariant. (ii) We extend particular cases in which this result is known to be rigorously exact by providing a proof for two-layer networks with Gaussian random weights, using the recently introduced adaptive interpolation method. (iii) We propose an experimental framework with generative models of synthetic datasets, on which we train deep neural networks with a weight constraint designed so that the assumption in (i) is verified during learning. We study the behavior of entropies and mutual informations throughout learning and conclude that, in the proposed setting, the relationship between compression and generalization remains elusive.
