Chul Min Yeum

3D Reconstruction by Looking: Instantaneous Blind Spot Detector for Indoor SLAM through Mixed Reality

Nov 19, 2024

Abstract:Indoor SLAM often suffers from issues such as scene drifting, double walls, and blind spots, particularly in confined spaces with objects close to the sensors (e.g. LiDAR and cameras) in reconstruction tasks. Real-time visualization of point cloud registration during data collection may help mitigate these issues, but a significant limitation remains in the inability to in-depth compare the scanned data with actual physical environments. These challenges obstruct the quality of reconstruction products, frequently necessitating revisit and rescan efforts. For this regard, we developed the LiMRSF (LiDAR-MR-RGB Sensor Fusion) system, allowing users to perceive the in-situ point cloud registration by looking through a Mixed-Reality (MR) headset. This tailored framework visualizes point cloud meshes as holograms, seamlessly matching with the real-time scene on see-through glasses, and automatically highlights errors detected while they overlap. Such holographic elements are transmitted via a TCP server to an MR headset, where it is calibrated to align with the world coordinate, the physical location. This allows users to view the localized reconstruction product instantaneously, enabling them to quickly identify blind spots and errors, and take prompt action on-site. Our blind spot detector achieves an error detection precision with an F1 Score of 75.76% with acceptably high fidelity of monitoring through the LiMRSF system (highest SSIM of 0.5619, PSNR of 14.1004, and lowest MSE of 0.0389 in the five different sections of the simplified mesh model which users visualize through the LiMRSF device see-through glasses). This method ensures the creation of detailed, high-quality datasets for 3D models, with potential applications in Building Information Modeling (BIM) but not limited.

Gaze-based Human-Robot Interaction System for Infrastructure Inspections

Mar 12, 2024Abstract:Routine inspections for critical infrastructures such as bridges are required in most jurisdictions worldwide. Such routine inspections are largely visual in nature, which are qualitative, subjective, and not repeatable. Although robotic infrastructure inspections address such limitations, they cannot replace the superior ability of experts to make decisions in complex situations, thus making human-robot interaction systems a promising technology. This study presents a novel gaze-based human-robot interaction system, designed to augment the visual inspection performance through mixed reality. Through holograms from a mixed reality device, gaze can be utilized effectively to estimate the properties of the defect in real-time. Additionally, inspectors can monitor the inspection progress online, which enhances the speed of the entire inspection process. Limited controlled experiments demonstrate its effectiveness across various users and defect types. To our knowledge, this is the first demonstration of the real-time application of eye gaze in civil infrastructure inspections.

Towards fully automated post-event data collection and analysis: pre-event and post-event information fusion

Jun 30, 2019

Abstract:In post-event reconnaissance missions, engineers and researchers collect perishable information about damaged buildings in the affected geographical region to learn from the consequences of the event. A typical post-event reconnaissance mission is conducted by first doing a preliminary survey, followed by a detailed survey. The preliminary survey is typically conducted by driving slowly along a pre-determined route, observing the damage, and noting where further detailed data should be collected. This involves several manual, time-consuming steps that can be accelerated by exploiting recent advances in computer vision and artificial intelligence. The objective of this work is to develop and validate an automated technique to support post-event reconnaissance teams in the rapid collection of reliable and sufficiently comprehensive data, for planning the detailed survey. The technique incorporates several methods designed to automate the process of categorizing buildings based on their key physical attributes, and rapidly assessing their post-event structural condition. It is divided into pre-event and post-event streams, each intending to first extract all possible information about the target buildings using both pre-event and post-event images. Algorithms based on convolutional neural network (CNNs) are implemented for scene (image) classification. A probabilistic approach is developed to fuse the results obtained from analyzing several images to yield a robust decision regarding the attributes and condition of a target building. We validate the technique using post-event images captured during reconnaissance missions that took place after hurricanes Harvey and Irma. The validation data were collected by a structural wind and coastal engineering reconnaissance team, the National Science Foundation (NSF) funded Structural Extreme Events Reconnaissance (StEER) Network.

Automated Building Image Extraction from 360-degree Panoramas for Post-Disaster Evaluation

May 04, 2019

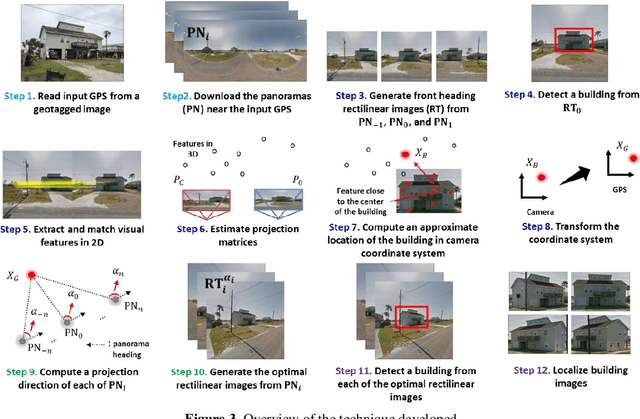

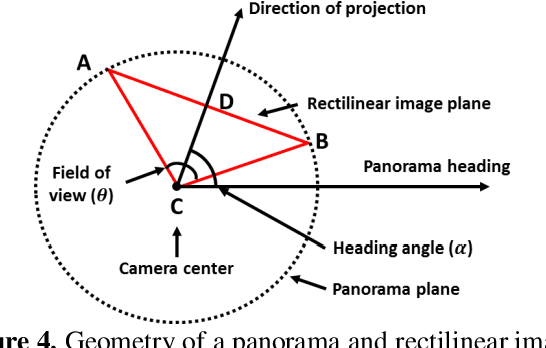

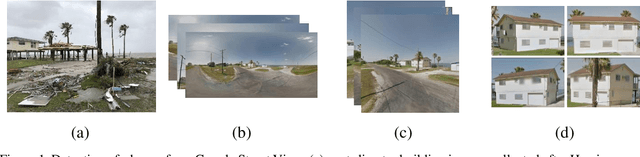

Abstract:After a disaster, teams of structural engineers collect vast amounts of images from damaged buildings to obtain new knowledge and extract lessons from the event. However, in many cases, the images collected are captured without sufficient spatial context. When damage is severe, it may be quite difficult to even recognize the building. Accessing images of the pre-disaster condition of those buildings is required to accurately identify the cause of the failure or the actual loss in the building. Here, to address this issue, we develop a method to automatically extract pre-event building images from 360o panorama images (panoramas). By providing a geotagged image collected near the target building as the input, panoramas close to the input image location are automatically downloaded through street view services (e.g., Google or Bing in the United States). By computing the geometric relationship between the panoramas and the target building, the most suitable projection direction for each panorama is identified to generate high-quality 2D images of the building. Region-based convolutional neural networks are exploited to recognize the building within those 2D images. Several panoramas are used so that the detected building images provide various viewpoints of the building. To demonstrate the capability of the technique, we consider residential buildings in Holiday Beach, Texas, the United States which experienced significant devastation in Hurricane Harvey in 2017. Using geotagged images gathered during actual post-disaster building reconnaissance missions, we verify the method by successfully extracting residential building images from Google Street View images, which were captured before the event.

Automated Detection of Pre-Disaster Building Images from Google Street View

Feb 13, 2019

Abstract:After a disaster, teams of structural engineers collect vast amounts of images from damaged buildings to obtain lessons and gain knowledge from the event. Images of damaged buildings and components provide valuable evidence to understand the consequences on our structures. However, in many cases, images of damaged buildings are often captured without sufficient spatial context. Also, they may be hard to recognize in cases with severe damage. Incorporating past images showing a pre-disaster condition of such buildings is helpful to accurately evaluate possible circumstances related to a building's failure. One of the best resources to observe the pre-disaster condition of the buildings is Google Street View. A sequence of 360 panorama images which are captured along streets enables all-around views at each location on the street. Once a user knows the GPS information near the building, all external views of the building can be made available. In this study, we develop an automated technique to extract past building images from 360 panorama images serviced by Google Street View. Users only need to provide a geo-tagged image, collected near the target building, and the rest of the process is fully automated. High-quality and undistorted building images are extracted from past panoramas. Since the panoramas are collected from various locations near the building along the street, the user can identify its pre-disaster conditions from the full set of external views.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge