Jongseong Brad Choi

SARA: Scene-Aware Reconstruction Accelerator

Jan 11, 2026Abstract:We present SARA (Scene-Aware Reconstruction Accelerator), a geometry-driven pair selection module for Structure-from-Motion (SfM). Unlike conventional pipelines that select pairs based on visual similarity alone, SARA introduces geometry-first pair selection by scoring reconstruction informativeness - the product of overlap and parallax - before expensive matching. A lightweight pre-matching stage uses mutual nearest neighbors and RANSAC to estimate these cues, then constructs an Information-Weighted Spanning Tree (IWST) augmented with targeted edges for loop closure, long-baseline anchors, and weak-view reinforcement. Compared to exhaustive matching, SARA reduces rotation errors by 46.5+-5.5% and translation errors by 12.5+-6.5% across modern learned detectors, while achieving at most 50x speedup through 98% pair reduction (from 30,848 to 580 pairs). This reduces matching complexity from quadratic to quasi-linear, maintaining within +-3% of baseline reconstruction metrics for 3D Gaussian Splatting and SVRaster.

PRISM: Color-Stratified Point Cloud Sampling

Jan 11, 2026Abstract:We present PRISM, a novel color-guided stratified sampling method for RGB-LiDAR point clouds. Our approach is motivated by the observation that unique scene features often exhibit chromatic diversity while repetitive, redundant features are homogeneous in color. Conventional downsampling methods (Random Sampling, Voxel Grid, Normal Space Sampling) enforce spatial uniformity while ignoring this photometric content. In contrast, PRISM allocates sampling density proportional to chormatic diversity. By treating RGB color space as the stratification domain and imposing a maximum capacity k per color bin, the method preserves texture-rich regions with high color variation while substantially reducing visually homogeneous surfaces. This shifts the sampling space from spatial coverage to visual complexity to produce sparser point clouds that retain essential features for 3D reconstruction tasks.

A Hybrid Surrogate for Electric Vehicle Parameter Estimation and Power Consumption via Physics-Informed Neural Operators

Aug 18, 2025Abstract:We present a hybrid surrogate model for electric vehicle parameter estimation and power consumption. We combine our novel architecture Spectral Parameter Operator built on a Fourier Neural Operator backbone for global context and a differentiable physics module in the forward pass. From speed and acceleration alone, it outputs time-varying motor and regenerative braking efficiencies, as well as aerodynamic drag, rolling resistance, effective mass, and auxiliary power. These parameters drive a physics-embedded estimate of battery power, eliminating any separate physics-residual loss. The modular design lets representations converge to physically meaningful parameters that reflect the current state and condition of the vehicle. We evaluate on real-world logs from a Tesla Model 3, Tesla Model S, and the Kia EV9. The surrogate achieves a mean absolute error of 0.2kW (about 1% of average traction power at highway speeds) for Tesla vehicles and about 0.8kW on the Kia EV9. The framework is interpretable, and it generalizes well to unseen conditions, and sampling rates, making it practical for path optimization, eco-routing, on-board diagnostics, and prognostics health management.

MATT-GS: Masked Attention-based 3DGS for Robot Perception and Object Detection

Mar 25, 2025Abstract:This paper presents a novel masked attention-based 3D Gaussian Splatting (3DGS) approach to enhance robotic perception and object detection in industrial and smart factory environments. U2-Net is employed for background removal to isolate target objects from raw images, thereby minimizing clutter and ensuring that the model processes only relevant data. Additionally, a Sobel filter-based attention mechanism is integrated into the 3DGS framework to enhance fine details - capturing critical features such as screws, wires, and intricate textures essential for high-precision tasks. We validate our approach using quantitative metrics, including L1 loss, SSIM, PSNR, comparing the performance of the background-removed and attention-incorporated 3DGS model against the ground truth images and the original 3DGS training baseline. The results demonstrate significant improves in visual fidelity and detail preservation, highlighting the effectiveness of our method in enhancing robotic vision for object recognition and manipulation in complex industrial settings.

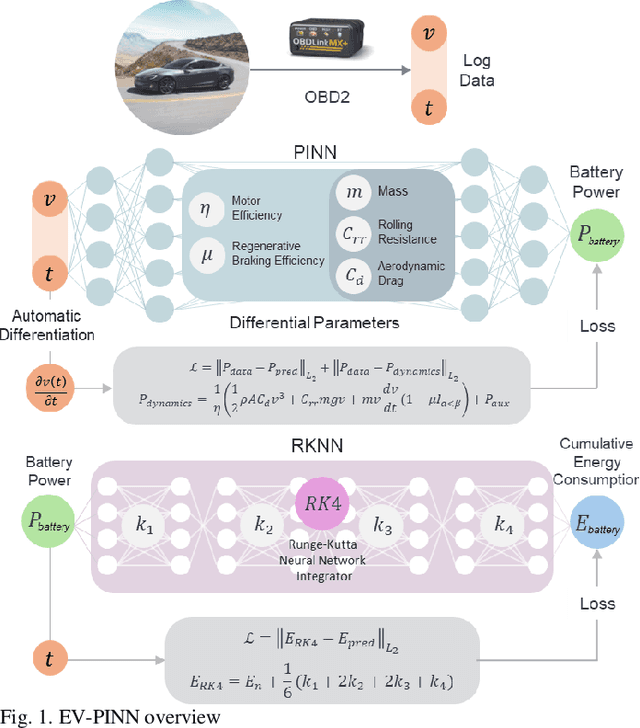

EV-PINN: A Physics-Informed Neural Network for Predicting Electric Vehicle Dynamics

Nov 22, 2024

Abstract:An onboard prediction of dynamic parameters (e.g. Aerodynamic drag, rolling resistance) enables accurate path planning for EVs. This paper presents EV-PINN, a Physics-Informed Neural Network approach in predicting instantaneous battery power and cumulative energy consumption during cruising while generalizing to the nonlinear dynamics of an EV. Our method learns real-world parameters such as motor efficiency, regenerative braking efficiency, vehicle mass, coefficient of aerodynamic drag, and coefficient of rolling resistance using automatic differentiation based on dynamics and ensures consistency with ground truth vehicle data. EV-PINN was validated using 15 and 35 minutes of in-situ battery log data from the Tesla Model 3 Long Range and Tesla Model S, respectively. With only vehicle speed and time as inputs, our model achieves high accuracy and generalization to dynamics, with validation losses of 0.002195 and 0.002292, respectively. This demonstrates EV-PINN's effectiveness in estimating parameters and predicting battery usage under actual driving conditions without the need for additional sensors.

3D Reconstruction by Looking: Instantaneous Blind Spot Detector for Indoor SLAM through Mixed Reality

Nov 19, 2024

Abstract:Indoor SLAM often suffers from issues such as scene drifting, double walls, and blind spots, particularly in confined spaces with objects close to the sensors (e.g. LiDAR and cameras) in reconstruction tasks. Real-time visualization of point cloud registration during data collection may help mitigate these issues, but a significant limitation remains in the inability to in-depth compare the scanned data with actual physical environments. These challenges obstruct the quality of reconstruction products, frequently necessitating revisit and rescan efforts. For this regard, we developed the LiMRSF (LiDAR-MR-RGB Sensor Fusion) system, allowing users to perceive the in-situ point cloud registration by looking through a Mixed-Reality (MR) headset. This tailored framework visualizes point cloud meshes as holograms, seamlessly matching with the real-time scene on see-through glasses, and automatically highlights errors detected while they overlap. Such holographic elements are transmitted via a TCP server to an MR headset, where it is calibrated to align with the world coordinate, the physical location. This allows users to view the localized reconstruction product instantaneously, enabling them to quickly identify blind spots and errors, and take prompt action on-site. Our blind spot detector achieves an error detection precision with an F1 Score of 75.76% with acceptably high fidelity of monitoring through the LiMRSF system (highest SSIM of 0.5619, PSNR of 14.1004, and lowest MSE of 0.0389 in the five different sections of the simplified mesh model which users visualize through the LiMRSF device see-through glasses). This method ensures the creation of detailed, high-quality datasets for 3D models, with potential applications in Building Information Modeling (BIM) but not limited.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge