Christoph Hauger

Carl Zeiss Meditec AG

Intra-operative Brain Tumor Detection with Deep Learning-Optimized Hyperspectral Imaging

Feb 06, 2023

Abstract: Surgery for gliomas (intrinsic brain tumors), especially when low-grade, is challenging due to the infiltrative nature of the lesion. Currently, no real-time, intra-operative, label-free and wide-field tool is available to assist and guide the surgeon to find the relevant demarcations for these tumors. While marker-based methods exist for the high-grade glioma case, there is no convenient solution available for the low-grade case; thus, marker-free optical techniques represent an attractive option. Although RGB imaging is a standard tool in surgical microscopes, it does not contain sufficient information for tissue differentiation. We leverage the richer information from hyperspectral imaging (HSI), acquired with a snapscan camera in the 468-787 nm range, coupled to a surgical microscope, to build a deep-learning-based diagnostic tool for cancer resection with potential for intra-operative guidance. However, the main limitation of the HSI snapscan camera is the image acquisition time, which limits its widespread deployment in the operating theater. Here, we investigate the effect of HSI channel reduction and pre-selection to scope the design space for the development of cheaper and faster sensors. Neural networks are used to identify the most important spectral channels for tumor tissue differentiation, optimizing the trade-off between the number of channels and precision to enable real-time intra-surgical application. We evaluate the performance of our method on a clinical dataset that was acquired during surgery on five patients. By demonstrating the possibility to efficiently detect low-grade glioma, these results can lead to better cancer resection demarcations, potentially improving treatment effectiveness and patient outcome.
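
The channel pre-selection idea can be illustrated with a much simpler stand-in than the neural networks the abstract describes. The sketch below ranks spectral channels by a Fisher-style score (squared gap between class means divided by the summed class variances) and keeps the top `k`. The function names, the four-channel toy spectra, and the labels are all hypothetical and exist only to make the example runnable; none of them come from the paper or its dataset.

```python
from statistics import mean, pvariance


def fisher_scores(spectra, labels):
    """Score each channel by class separation relative to within-class spread.

    spectra: list of per-pixel spectra (one float per channel).
    labels:  list of 0/1 class labels (1 = tumor, 0 = healthy), assumed here.
    """
    n_channels = len(spectra[0])
    scores = []
    for c in range(n_channels):
        tumor = [s[c] for s, y in zip(spectra, labels) if y == 1]
        healthy = [s[c] for s, y in zip(spectra, labels) if y == 0]
        gap = (mean(tumor) - mean(healthy)) ** 2
        spread = pvariance(tumor) + pvariance(healthy)
        # Small constant avoids division by zero for constant channels.
        scores.append(gap / (spread + 1e-12))
    return scores


def top_channels(spectra, labels, k):
    """Return the indices of the k highest-scoring channels, in ascending order."""
    scores = fisher_scores(spectra, labels)
    ranked = sorted(range(len(scores)), key=lambda c: scores[c], reverse=True)
    return sorted(ranked[:k])


# Hypothetical toy data: only channel 2 differs clearly between the classes.
spectra = [
    [0.50, 0.31, 0.90, 0.42],
    [0.52, 0.29, 0.88, 0.40],
    [0.51, 0.30, 0.20, 0.41],
    [0.49, 0.32, 0.22, 0.43],
]
labels = [1, 1, 0, 0]

selected = top_channels(spectra, labels, k=1)
print(selected)
```

A per-channel score like this ignores interactions between channels, which is one reason a learned selection, as in the abstract, may find a better subset for the same channel budget.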

Domain-specific loss design for unsupervised physical training: A new approach to modeling medical ML solutions

May 09, 2020

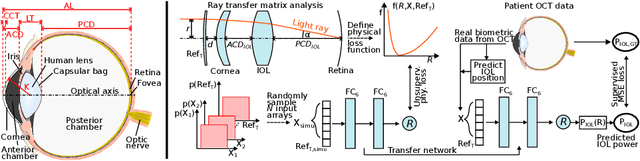

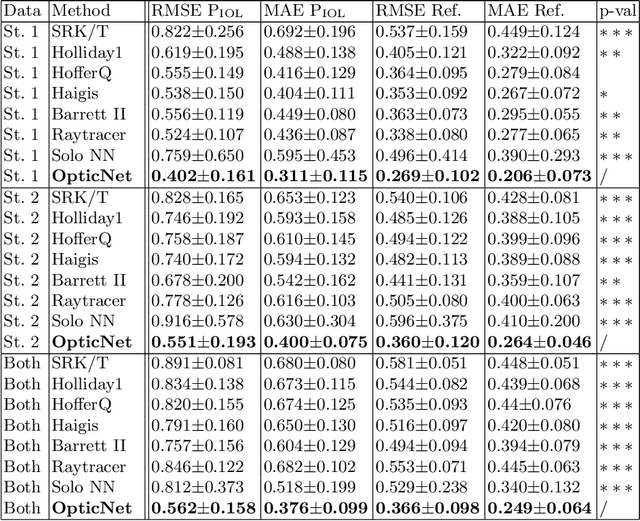

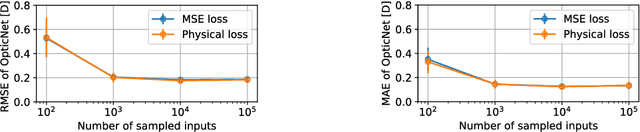

Abstract: Today, cataract surgery is the most frequently performed ophthalmic surgery in the world. The cataract, a developing opacity of the human eye lens, constitutes the world's most frequent cause of blindness. During surgery, the lens is removed and replaced by an artificial intraocular lens (IOL). To prevent patients from needing strong visual aids after surgery, a precise prediction of the optical properties of the inserted IOL is crucial. Considerable effort has gone into developing methods to predict these properties from biometric eye data obtained by OCT devices, recently also by employing machine learning. Existing approaches consider either only biometric data or physical models, but rarely both, and often neglect the IOL geometry. In this work, we propose OpticNet, a novel optical refraction network, loss function, and training scheme which is unsupervised, domain-specific, and physically motivated. We derive a precise light propagation eye model using single-ray raytracing and formulate a differentiable loss function that back-propagates physical gradients into the network. Further, we propose a new transfer learning procedure, which allows unsupervised training on the physical model and fine-tuning of the network on a cohort of real IOL patient cases. We show that our network is not only superior to systems trained with standard procedures but also that our method outperforms the current state of the art in IOL calculation when compared on two biometric data sets.
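
To give a rough sense of what a physically motivated loss built from single-ray tracing can look like, here is a minimal sketch that is not the OpticNet eye model. It assumes a strongly simplified paraxial eye: the cornea as a single refracting element of power `corneal_power`, the IOL as a thin lens, and one uniform medium of refractive index 1.336 behind the cornea. All function names and numeric values are illustrative assumptions.

```python
N_AQUEOUS = 1.336  # refractive index commonly used for aqueous and vitreous


def back_focal_distance(corneal_power, iol_power, iol_depth, n=N_AQUEOUS):
    """Trace one paraxial ray, initially parallel to the axis, through the eye.

    Powers are in dioptres and distances in metres. Returns the distance
    behind the IOL plane at which the ray crosses the optical axis.
    """
    y = 1.0  # ray height at the cornea (arbitrary units)
    a = 0.0  # reduced angle n * u; zero for a ray parallel to the axis

    a = a - y * corneal_power    # refraction at the cornea
    y = y + (iol_depth / n) * a  # transfer to the IOL plane
    a = a - y * iol_power        # refraction at the thin IOL

    return -n * y / a            # axis crossing: y + (z / n) * a = 0


def focus_loss(corneal_power, iol_power, iol_depth, axial_length):
    """Squared distance between the traced focus and the retina."""
    retina_distance = axial_length - iol_depth
    z = back_focal_distance(corneal_power, iol_power, iol_depth)
    return (z - retina_distance) ** 2


# Illustrative, hypothetical biometry (not taken from the paper).
K, DEPTH, AXIAL = 43.0, 0.005, 0.0235
for power in (19.0, 21.0, 23.0):
    print(power, focus_loss(K, power, DEPTH, AXIAL))
```

Because the loss is composed only of smooth arithmetic operations, an automatic differentiation framework could propagate gradients through it to whatever model predicts `iol_power`, which is the general idea the abstract attributes to its differentiable loss.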
