Christian Mandery

A Framework for Evaluating Motion Segmentation Algorithms

Sep 30, 2018

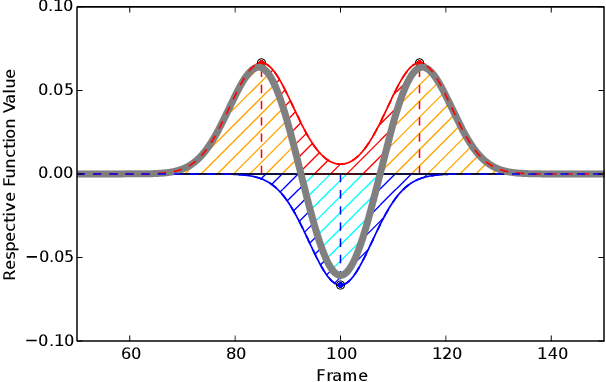

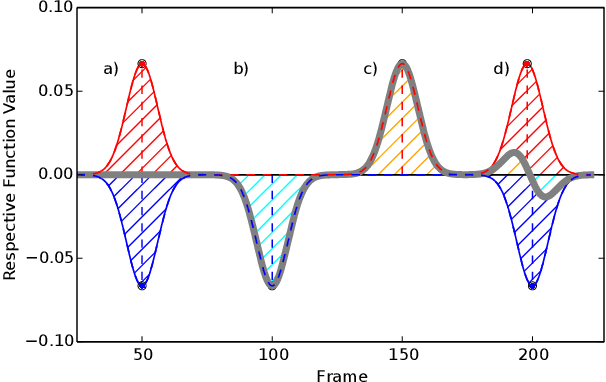

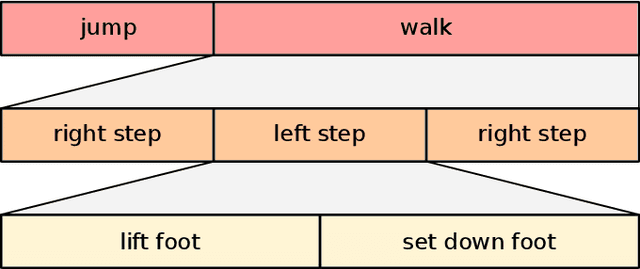

Abstract: There have been many proposals for algorithms segmenting human whole-body motion in the literature. However, the wide range of use cases, datasets, and quality measures that were used for the evaluation renders the comparison of algorithms challenging. In this paper, we introduce a framework that puts motion segmentation algorithms on a unified testing ground and allows them to be compared. The testing ground features both a set of quality measures known from the literature and a novel approach tailored to the evaluation of motion segmentation algorithms, termed the Integrated Kernel approach. Datasets of motion recordings, provided with a ground truth, are included as well. They are labelled in a new way, which hierarchically organises the ground truth, to cover the different use cases that segmentation algorithms can have. The framework and datasets are publicly available and are intended as a service for the community regarding the comparison and evaluation of existing and new motion segmentation algorithms.
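The abstract does not list the individual quality measures. As a minimal sketch of one measure commonly used for segmentation, the following computes precision, recall, and F1 for predicted segment boundaries against ground-truth boundaries within a tolerance window. The function name `boundary_scores`, the `tolerance` parameter, and the greedy one-to-one matching are illustrative assumptions, not the framework's API, and this is not the Integrated Kernel approach.

```python
from typing import Sequence, Tuple


def boundary_scores(
    predicted: Sequence[float],
    ground_truth: Sequence[float],
    tolerance: float,
) -> Tuple[float, float, float]:
    """Return (precision, recall, f1) for segmentation boundaries.

    Hypothetical helper: a predicted boundary counts as correct if it lies
    within `tolerance` (same unit as the boundaries, e.g. seconds) of a
    ground-truth boundary that has not been matched yet.
    """
    pred = sorted(predicted)
    truth = sorted(ground_truth)

    matched = 0
    i = j = 0
    # Walk both sorted lists and match boundaries one-to-one.
    while i < len(pred) and j < len(truth):
        if abs(pred[i] - truth[j]) <= tolerance:
            matched += 1
            i += 1
            j += 1
        elif pred[i] < truth[j]:
            i += 1  # spurious predicted boundary
        else:
            j += 1  # missed ground-truth boundary

    precision = matched / len(pred) if pred else 0.0
    recall = matched / len(truth) if truth else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom > 0 else 0.0
    return precision, recall, f1
```

For example, `boundary_scores([1.0, 5.2, 9.0], [1.1, 5.0, 7.0], 0.3)` matches two of the three boundaries on each side.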

The KIT Motion-Language Dataset

Aug 09, 2018

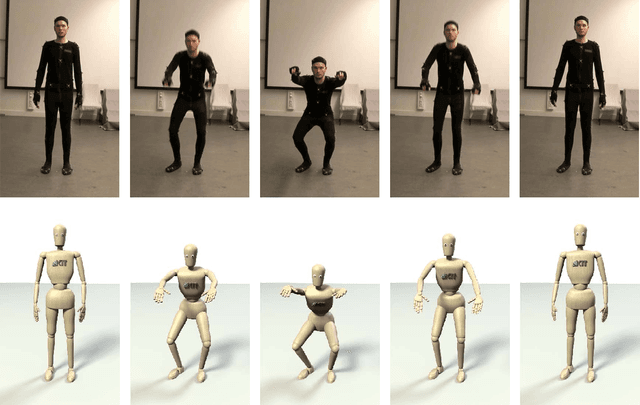

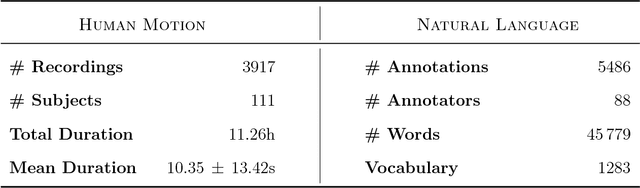

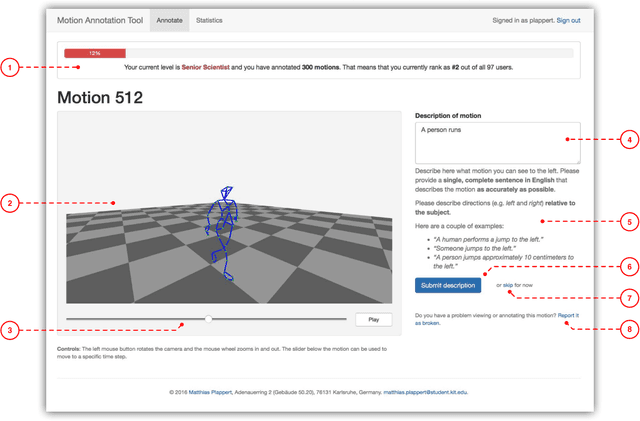

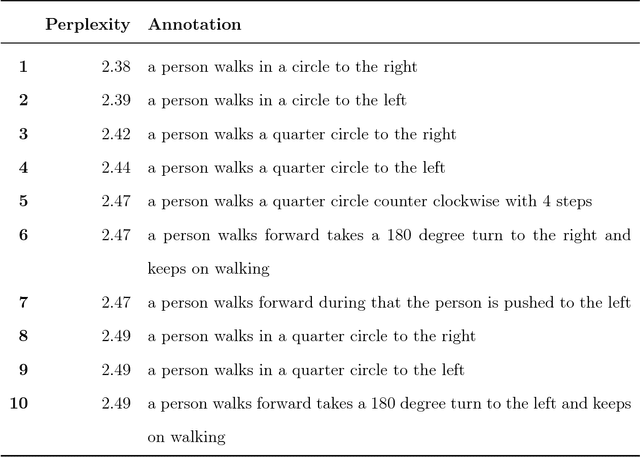

Abstract: Linking human motion and natural language is of great interest for the generation of semantic representations of human activities as well as for the generation of robot activities based on natural language input. However, while there have been years of research in this area, no standardized and openly available dataset exists to support the development and evaluation of such systems. We therefore propose the KIT Motion-Language Dataset, which is large, open, and extensible. We aggregate data from multiple motion capture databases and include them in our dataset using a unified representation that is independent of the capture system or marker set, making it easy to work with the data regardless of its origin. To obtain motion annotations in natural language, we apply a crowd-sourcing approach and a web-based tool that was specifically built for this purpose, the Motion Annotation Tool. We thoroughly document the annotation process itself and discuss gamification methods that we used to keep annotators motivated. We further propose a novel method, perplexity-based selection, which systematically selects motions for further annotation that are either under-represented in our dataset or that have erroneous annotations. We show that our method mitigates the two aforementioned problems and ensures a systematic annotation process. We provide an in-depth analysis of the structure and contents of our resulting dataset, which, as of October 10, 2016, contains 3911 motions with a total duration of 11.23 hours and 6278 annotations in natural language that contain 52,903 words. We believe this makes our dataset an excellent choice that enables more transparent and comparable research in this important area.
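The abstract names perplexity-based selection without describing the underlying language model. The sketch below illustrates the general idea only: it assumes a unigram model with add-one smoothing fitted on all existing annotations, and ranks motions so that those with unusual (high-perplexity) or missing annotations come first. The names `unigram_perplexity` and `rank_by_perplexity`, the whitespace tokenization, and the choice of model are hypothetical and should not be read as the authors' method.

```python
import math
from collections import Counter
from typing import Dict, List


def unigram_perplexity(
    sentence: List[str], counts: Counter, total: int, vocab_size: int
) -> float:
    """Perplexity of a tokenized sentence under an add-one-smoothed unigram model."""
    log_prob = 0.0
    for word in sentence:
        # One extra vocabulary slot is reserved for unseen words (assumption).
        p = (counts[word] + 1) / (total + vocab_size + 1)
        log_prob += math.log(p)
    return math.exp(-log_prob / max(len(sentence), 1))


def rank_by_perplexity(annotations: Dict[str, List[str]]) -> List[str]:
    """Return motion IDs ordered from most to least in need of annotation.

    `annotations` maps a hypothetical motion ID to its list of descriptions.
    Motions without any description get infinite perplexity and rank first.
    """
    tokenized = {
        motion_id: [text.lower().split() for text in texts]
        for motion_id, texts in annotations.items()
    }
    counts = Counter(
        word
        for sentences in tokenized.values()
        for sentence in sentences
        for word in sentence
    )
    total = sum(counts.values())
    vocab_size = len(counts)

    scores = {}
    for motion_id, sentences in tokenized.items():
        values = [
            unigram_perplexity(s, counts, total, vocab_size) for s in sentences
        ]
        # Average over a motion's descriptions; no description means top priority.
        scores[motion_id] = sum(values) / len(values) if values else float("inf")

    return sorted(scores, key=lambda motion_id: scores[motion_id], reverse=True)
```

A description made of rare words yields a higher perplexity than one made of frequent words, so its motion is proposed for further annotation earlier. A stronger language model could be swapped in without changing the ranking step.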

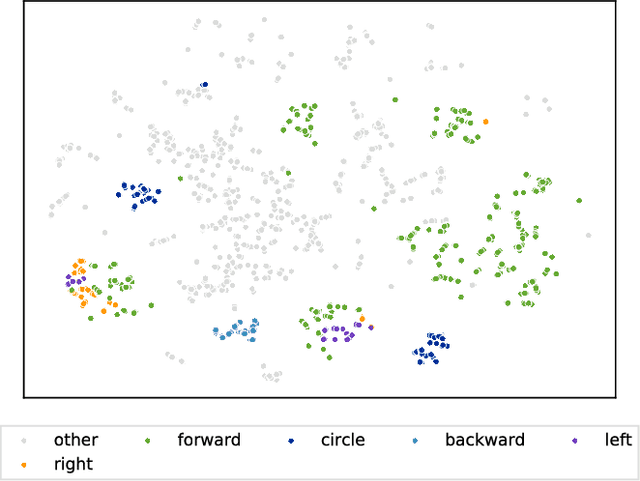

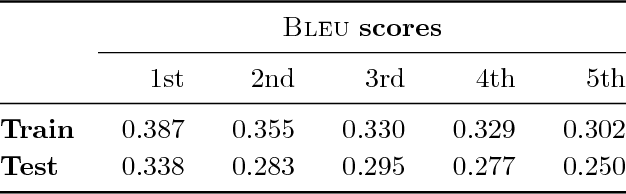

Learning a bidirectional mapping between human whole-body motion and natural language using deep recurrent neural networks

Aug 02, 2018

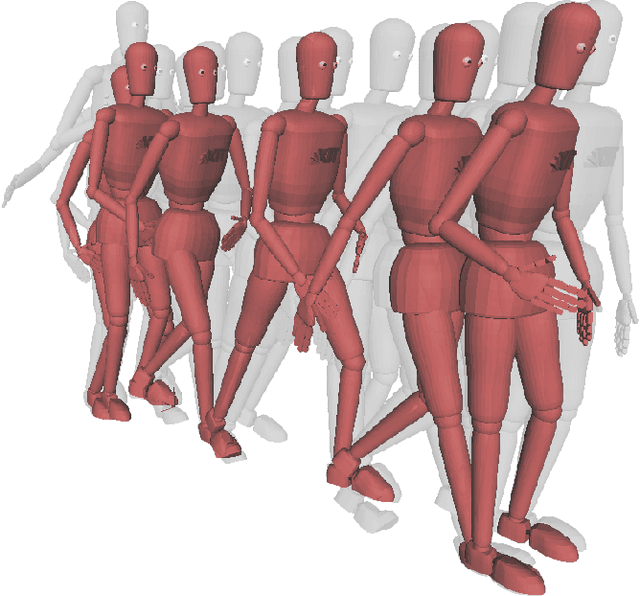

Abstract: Linking human whole-body motion and natural language is of great interest for the generation of semantic representations of observed human behaviors as well as for the generation of robot behaviors based on natural language input. While there has been a large body of research in this area, most approaches that exist today require a symbolic representation of motions (e.g. in the form of motion primitives), which have to be defined a priori or require complex segmentation algorithms. In contrast, recent advances in the field of neural networks and especially deep learning have demonstrated that sub-symbolic representations that can be learned end-to-end usually outperform more traditional approaches, for applications such as machine translation. In this paper we propose a generative model that learns a bidirectional mapping between human whole-body motion and natural language using deep recurrent neural networks (RNNs) and sequence-to-sequence learning. Our approach does not require any segmentation or manual feature engineering and learns a distributed representation, which is shared for all motions and descriptions. We evaluate our approach on 2,846 human whole-body motions and 6,187 natural language descriptions thereof from the KIT Motion-Language Dataset. Our results clearly demonstrate the effectiveness of the proposed model: we show that our model generates a wide variety of realistic motions only from descriptions thereof in the form of a single sentence. Conversely, our model is also capable of generating correct and detailed natural language descriptions from human motions.

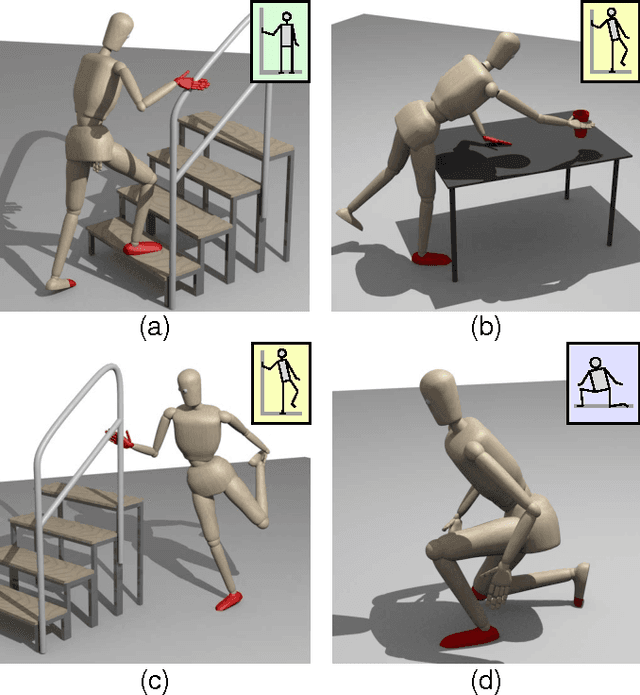

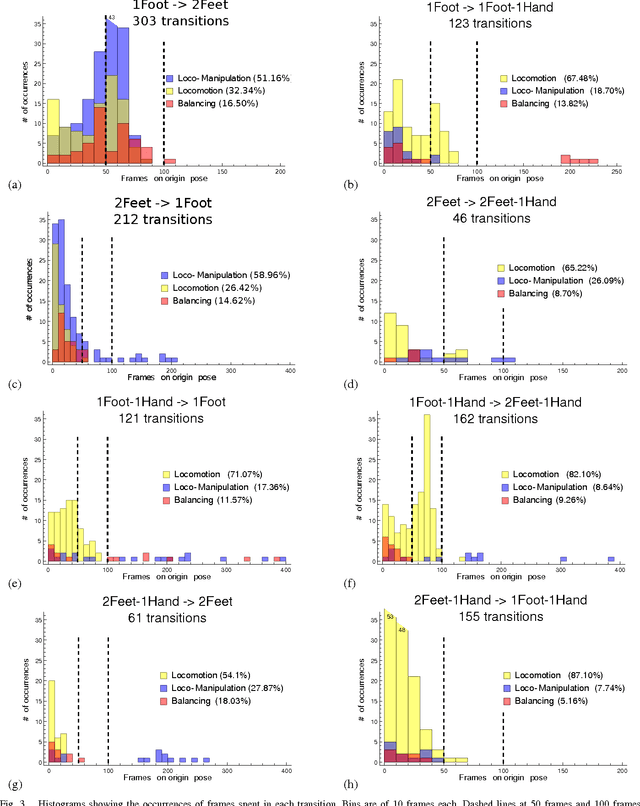

Analyzing Whole-Body Pose Transitions in Multi-Contact Motions

Sep 30, 2015

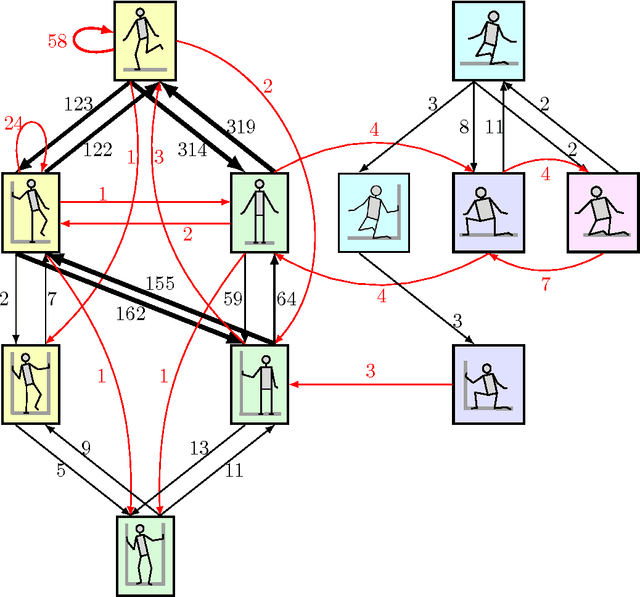

Abstract: When executing whole-body motions, humans are able to use a large variety of support poses which not only utilize the feet, but also hands, knees and elbows to enhance stability. While there are many works analyzing the transitions involved in walking, very few works analyze human motion where more complex supports occur. In this work, we analyze complex support pose transitions in human motion involving locomotion and manipulation tasks (loco-manipulation). We have applied a method for the detection of human support contacts from motion capture data to a large-scale dataset of loco-manipulation motions involving multi-contact supports, providing a semantic representation of them. Our results provide a statistical analysis of the used support poses, their transitions and the time spent in each of them. In addition, our data partially validates our taxonomy of whole-body support poses presented in our previous work. We believe that this work extends our understanding of human motion for humanoids, with a long-term objective of developing methods for autonomous multi-contact motion planning.
