Cheryl Flynn

Towards Algorithmic Fairness in Space-Time: Filling in Black Holes

Nov 08, 2022

Abstract: New technologies and the availability of geospatial data have drawn attention to spatio-temporal biases present in society. For example: the COVID-19 pandemic highlighted disparities in the availability of broadband service and its role in the digital divide; the environmental justice movement in the United States has raised awareness of the health implications for minority populations stemming from historical redlining practices; and studies have found varying quality and coverage in the collection and sharing of open-source geospatial data. Despite the extensive literature on machine learning (ML) fairness, few algorithmic strategies have been proposed to mitigate such biases. In this paper we highlight the unique challenges in quantifying and addressing spatio-temporal biases, through the lens of use cases presented in the scientific literature and media. We envision a roadmap of ML strategies that need to be developed or adapted to quantify and overcome these challenges -- including transfer learning, active learning, and reinforcement learning techniques. Further, we discuss the potential role of ML in providing guidance to policy makers on issues related to spatial fairness.

System to Integrate Fairness Transparently: An Industry Approach

Jun 10, 2020

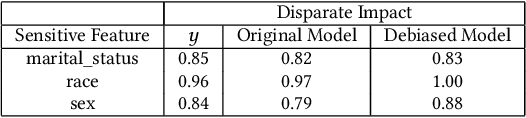

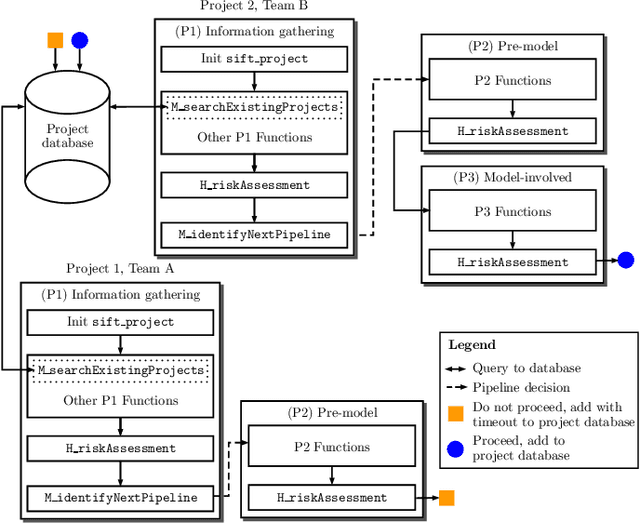

Abstract: There have been significant research efforts to address the issue of unintentional bias in Machine Learning (ML). Many well-known companies have dealt with the fallout after deploying products affected by this issue. In an industrial context, enterprises have large-scale ML solutions for a broad class of use cases deployed for different swaths of customers. A key consideration is trading off the cost of detecting and mitigating bias across this landscape, over the lifetime of each use case, against the risk of impact to the brand image. We propose a framework for industrial uses that addresses their methodological and mechanization needs. Our approach benefits from prior experience handling security and privacy concerns as well as past internal ML projects. By reusing bias-handling capabilities at every stage of the ML development lifecycle to guide users, we can lower the overall cost of reducing bias.
