Chenwei Wang

Recover Cell Tensor: Diffusion-Equivalent Tensor Completion for Fluorescence Microscopy Imaging

Jan 27, 2026

Abstract:Fluorescence microscopy (FM) imaging is a fundamental technique for observing live cell division, one of the most essential processes in the cycle of life and death. Observing 3D live cells requires scanning through the cell volume while minimizing lethal phototoxicity. This limits acquisition time and results in sparsely sampled volumes with anisotropic resolution and high noise. Existing image restoration methods, primarily based on inverse problem modeling, assume known and stable degradation processes and struggle under such conditions, especially in the absence of high-quality reference volumes. In this paper, from a new perspective, we propose a novel tensor completion framework tailored to the nature of FM imaging, which inherently involves nonlinear signal degradation and incomplete observations. Specifically, FM imaging with equidistant Z-axis sampling is essentially a tensor completion task under a uniformly random sampling condition. On one hand, we derive the theoretical lower bound for exact cell tensor completion, validating the feasibility of accurately recovering the 3D cell tensor. On the other hand, we reformulate the tensor completion problem as a mathematically equivalent score-based generative model. By incorporating structural consistency priors, the generative trajectory is effectively guided toward denoised and geometrically coherent reconstructions. Our method demonstrates state-of-the-art performance on SR-CACO-2 and three real in vivo cellular datasets, showing substantial improvements in both signal-to-noise ratio and structural fidelity.
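The observation model behind the tensor completion view can be illustrated with a minimal sketch. It only shows how equidistant Z-axis sampling leaves most entries of a 3D volume unobserved; it is not the paper's method. The function name `z_sampling_mask`, the `(Z, Y, X)` axis order, the volume size, and the sampling step are all assumptions made for illustration.

```python
import numpy as np

def z_sampling_mask(shape, step):
    """Boolean mask keeping every `step`-th Z slice of a (Z, Y, X) volume."""
    mask = np.zeros(shape, dtype=bool)
    mask[::step] = True  # slices 0, step, 2*step, ... are observed
    return mask

# Hypothetical dense volume standing in for the full cell tensor.
rng = np.random.default_rng(0)
volume = rng.random((8, 4, 4))

mask = z_sampling_mask(volume.shape, step=4)
observed = np.where(mask, volume, 0.0)  # unobserved entries are zero-filled
observed_fraction = mask.mean()         # share of entries actually measured
```

A completion method would then estimate the entries where `mask` is false from the observed slices.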

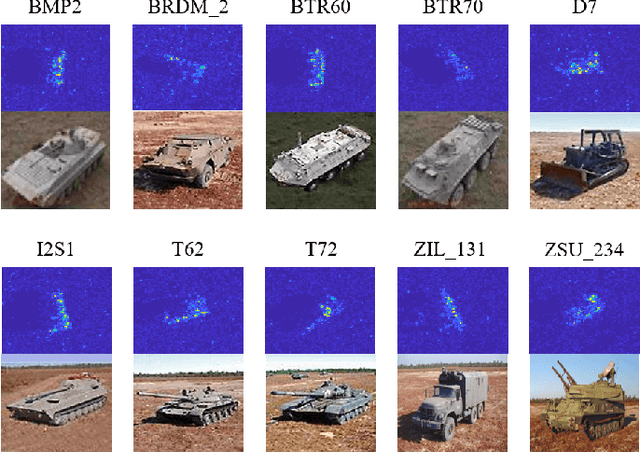

An Interpretable Two-Stage Feature Decomposition Method for Deep Learning-based SAR ATR

Jun 11, 2025

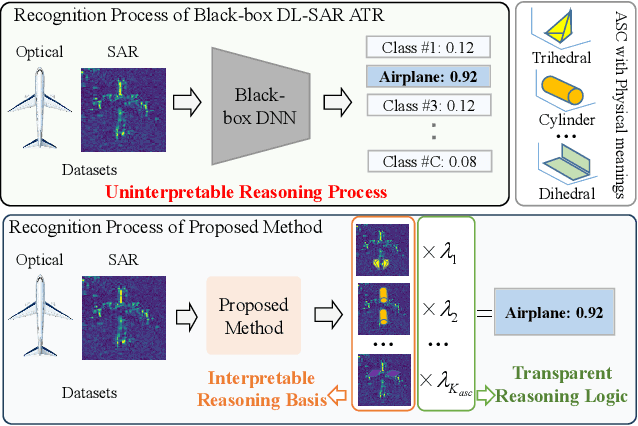

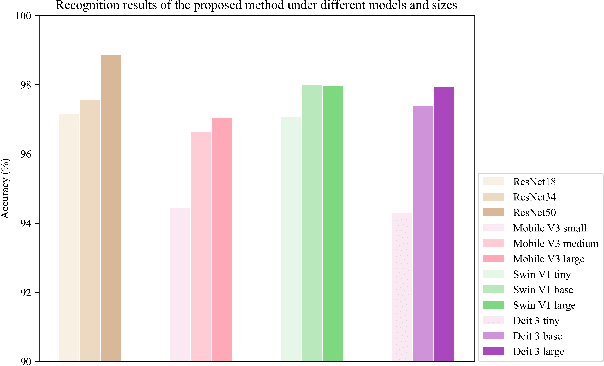

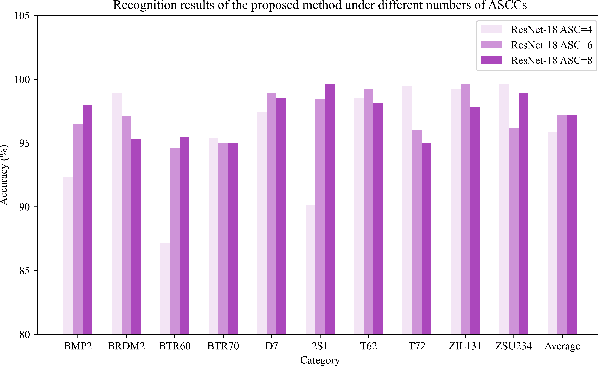

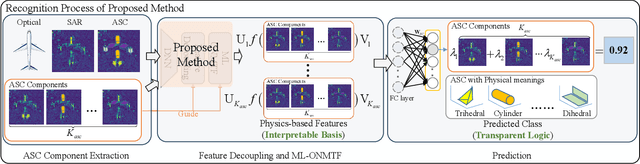

Abstract:Synthetic aperture radar automatic target recognition (SAR ATR) has seen significant performance improvements with deep learning. However, the black-box nature of deep SAR ATR introduces low confidence and high risks in decision-critical SAR applications, hindering practical deployment. To address this issue, deep SAR ATR should provide an interpretable reasoning basis $r_b$ and logic $\lambda_w$, forming the reasoning logic $\sum_{i} {{r_b^i} \times {\lambda_w^i}} =pred$ behind the decisions. Therefore, this paper proposes a physics-based two-stage feature decomposition method for interpretable deep SAR ATR, which transforms uninterpretable deep features into attribute scattering center components (ASCC) with clear physical meanings. First, ASCCs are obtained through a clustering algorithm. To extract independent physical components from deep features, we propose a two-stage decomposition method. In the first stage, a feature decoupling and discrimination module separates deep features into approximate ASCCs with global discriminability. In the second stage, a multilayer orthogonal non-negative matrix tri-factorization (MLO-NMTF) further decomposes the ASCCs into independent components with distinct physical meanings. The MLO-NMTF elegantly aligns with the clustering algorithms to obtain ASCCs. Finally, this method ensures both an interpretable reasoning process and accurate recognition results. Extensive experiments on four benchmark datasets confirm its effectiveness, showcasing the method's interpretability, robust recognition performance, and strong generalization capability.
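The reasoning logic $\sum_{i} r_b^i \times \lambda_w^i = pred$ can be read as a weighted sum over interpretable components. The sketch below is one possible reading, assuming each $r_b^i$ is a scalar component activation and each $\lambda_w^i$ is a vector of per-class weights; the abstract does not specify these shapes, and all values are hypothetical.

```python
import numpy as np

# Hypothetical example: 3 interpretable components, 2 target classes.
r_b = np.array([0.5, 2.0, 1.0])      # assumed activation of each component
lambda_w = np.array([[1.0, 0.0],
                     [0.0, 1.0],
                     [0.5, 0.5]])    # assumed per-class weight of each component

# Each row is one component's contribution to every class score.
contributions = r_b[:, None] * lambda_w

# Summing over components gives the prediction scores.
pred = contributions.sum(axis=0)
predicted_class = int(pred.argmax())
```

Under this reading, each row of `contributions` shows how much a single component pushed the decision toward each class, which is what makes the prediction traceable.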

CiUAV: A Multi-Task 3D Indoor Localization System for UAVs based on Channel State Information

May 27, 2025

Abstract:Accurate indoor positioning for unmanned aerial vehicles (UAVs) is critical for logistics, surveillance, and emergency response applications, particularly in GPS-denied environments. Existing indoor localization methods, including optical tracking, ultra-wideband, and Bluetooth-based systems, face cost, accuracy, and robustness trade-offs, limiting their practicality for UAV navigation. This paper proposes CiUAV, a novel 3D indoor localization system designed for UAVs, leveraging channel state information (CSI) obtained from low-cost ESP32 IoT-based sensors. The system incorporates a dynamic automatic gain control (AGC) compensation algorithm to mitigate noise and stabilize CSI signals, significantly enhancing the robustness of the measurement. Additionally, a multi-task 3D localization model, Sensor-in-Sample (SiS), is introduced to enhance system robustness by addressing challenges related to incomplete sensor data and limited training samples. SiS achieves this by joint training with varying sensor configurations and sample sizes, ensuring reliable performance even in resource-constrained scenarios. Experimental results demonstrate that CiUAV achieves an LMSE localization error of 0.2629 m in 3D space, indicating good accuracy and robustness. The proposed system provides a cost-effective and scalable solution, demonstrating its usefulness for UAV applications in resource-constrained indoor environments.

Volume Tells: Dual Cycle-Consistent Diffusion for 3D Fluorescence Microscopy De-noising and Super-Resolution

Mar 04, 2025

Abstract:3D fluorescence microscopy is essential for understanding fundamental life processes through long-term live-cell imaging. However, due to inherent issues in imaging principles, it faces significant challenges including spatially varying noise and anisotropic resolution, where the axial resolution lags behind the lateral resolution by up to 4.5 times. Meanwhile, laser power is kept low to maintain cell viability, which makes low-noise, high-resolution paired ground truth (GT) inaccessible. To tackle these limitations, a dual Cycle-consistent Diffusion is proposed to effectively mine intra-volume imaging priors within 3D cell volumes in an unsupervised manner, i.e., Volume Tells (VTCD), achieving de-noising and super-resolution (SR) simultaneously. Specifically, a spatially iso-distributed denoiser is designed to exploit the noise distribution consistency between adjacent low-noise and high-noise regions within the 3D cell volume, suppressing the spatially varying noise. Then, in light of the structural consistency of the cell volume, a cross-plane global-propagation SR module propagates high-resolution details from the XY plane into adjacent regions in the XZ and YZ planes, progressively enhancing resolution across the entire 3D cell volume. Experimental results on 10 in vivo cellular datasets demonstrate substantial improvements in both denoising and super-resolution, with axial resolution enhanced from ~430 nm to ~90 nm.

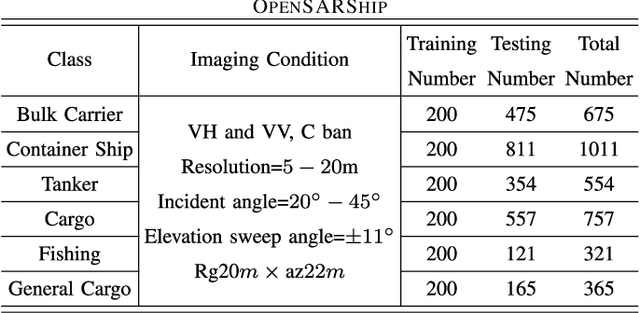

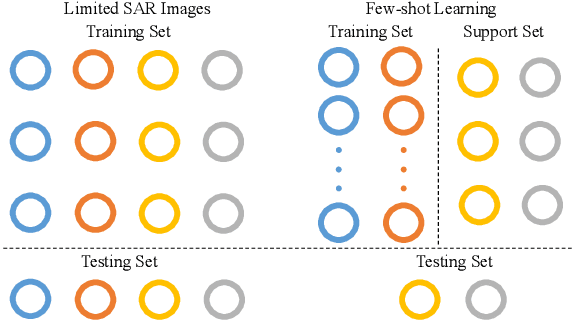

SAR ATR Method with Limited Training Data via an Embedded Feature Augmenter and Dynamic Hierarchical-Feature Refiner

Sep 01, 2023

Abstract:Without sufficient data, the quantity of information available for supervised training is constrained, as obtaining sufficient synthetic aperture radar (SAR) training data in practice is frequently challenging. Therefore, current SAR automatic target recognition (ATR) algorithms perform poorly with limited training data availability, resulting in a critical need to increase SAR ATR performance. In this study, a new method to improve SAR ATR when training data are limited is proposed. First, an embedded feature augmenter is designed to enhance the extracted virtual features located far away from the class center. Based on the relative distribution of the features, the algorithm pulls the corresponding virtual features with different strengths toward the corresponding class center. The designed augmenter increases the amount of information available for supervised training and improves the separability of the extracted features. Second, a dynamic hierarchical-feature refiner is proposed to capture the discriminative local features of the samples. Through dynamically generated kernels, the proposed refiner integrates the discriminative local features of different dimensions into the global features, further enhancing the inner-class compactness and inter-class separability of the extracted features. The proposed method not only increases the amount of information available for supervised training but also extracts the discriminative features from the samples, resulting in superior ATR performance in problems with limited SAR training data. Experimental results on the moving and stationary target acquisition and recognition (MSTAR), OpenSARShip, and FUSAR-Ship benchmark datasets demonstrate the robustness and outstanding ATR performance of the proposed method in response to limited SAR training data.

Semi-Supervised SAR ATR Framework with Transductive Auxiliary Segmentation

Aug 31, 2023

Abstract:Convolutional neural networks (CNNs) have achieved high performance in synthetic aperture radar (SAR) automatic target recognition (ATR). However, the performance of CNNs depends heavily on a large amount of training data. The insufficiency of labeled training SAR images limits the recognition performance and even invalidates some ATR methods. Furthermore, with few labeled training data, many existing CNNs are even ineffective. To address these challenges, we propose a Semi-supervised SAR ATR Framework with transductive Auxiliary Segmentation (SFAS). The proposed framework focuses on exploiting the transductive generalization on available unlabeled samples with an auxiliary loss serving as a regularizer. Through auxiliary segmentation of unlabeled SAR samples and information residue loss (IRL) in training, the framework can employ the proposed training loop process and gradually exploit the information compilation of recognition and segmentation to construct a helpful inductive bias and achieve high performance. Experiments conducted on the MSTAR dataset have shown the effectiveness of our proposed SFAS for few-shot learning. A recognition performance of 94.18% can be achieved with 20 training samples in each class, with simultaneous accurate segmentation results. Facing variances of EOCs, the recognition ratios are higher than 88.00% with 10 training samples in each class.

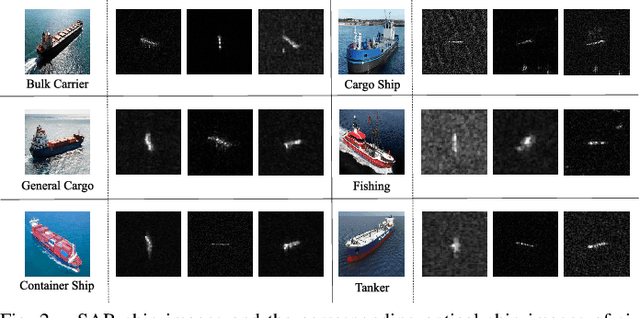

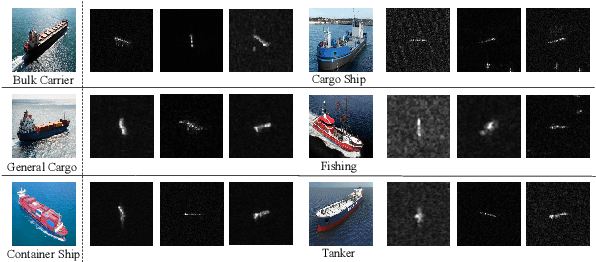

SAR Ship Target Recognition via Selective Feature Discrimination and Multifeature Center Classifier

Aug 20, 2023

Abstract:Maritime surveillance is not only necessary for every country, such as in maritime safeguarding and fishing controls, but also plays an essential role in international fields, such as in rescue support and illegal immigration control. Most of the existing automatic target recognition (ATR) methods directly send the extracted whole features of SAR ships into one classifier. The classifiers of most methods only assign one feature center to each class. However, the characteristics of SAR ship images, namely large inner-class variance and small interclass difference, lead to the whole features containing useless partial features and to a single feature center for each class in the classifier failing under large inner-class variance. We propose a SAR ship target recognition method via selective feature discrimination and multifeature center classifier. The selective feature discrimination automatically finds the similar partial features from the most similar interclass image pairs and the dissimilar partial features from the most dissimilar inner-class image pairs. It then provides a loss to enhance these partial features with more interclass separability. Motivated by divide and conquer, the multifeature center classifier assigns multiple learnable feature centers for each ship class. In this way, the multifeature centers divide the large inner-class variance into several smaller variances, which are then conquered by combining all feature centers of one ship class. Finally, the probability distribution over all feature centers is considered comprehensively to achieve an accurate recognition of SAR ship images. The ablation experiments and experimental results on OpenSARShip and FUSAR-Ship datasets show that our method has achieved superior recognition performance under decreasing training SAR ship samples.
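The multifeature center idea can be sketched as follows. This is only an illustration under stated assumptions, not the paper's classifier: it assumes logits are negative squared Euclidean distances to each center, that a softmax is taken over all centers jointly, and that a class probability is the sum over that class's centers. The function name `multi_center_probs` and the example centers are hypothetical.

```python
import numpy as np

def multi_center_probs(feature, centers):
    """Class probabilities from several feature centers per class.

    feature: (dim,) feature vector.
    centers: (num_classes, centers_per_class, dim) feature centers.
    """
    # Squared distance from the feature to every center: (classes, centers).
    d2 = ((centers - feature) ** 2).sum(axis=-1)
    logits = -d2
    logits = logits - logits.max()  # stabilize the exponentials
    p = np.exp(logits)
    p = p / p.sum()                 # softmax over all centers jointly
    return p.sum(axis=1)            # combine the centers of each class

# Hypothetical centers: class 0 has two well-separated modes.
centers = np.array([[[0.0, 0.0], [4.0, 4.0]],
                    [[10.0, 10.0], [-10.0, 10.0]]])
probs = multi_center_probs(np.array([3.9, 4.1]), centers)
```

Here a sample near the second mode of class 0 is still assigned to class 0, which a single averaged center at (2, 2) would represent less faithfully.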

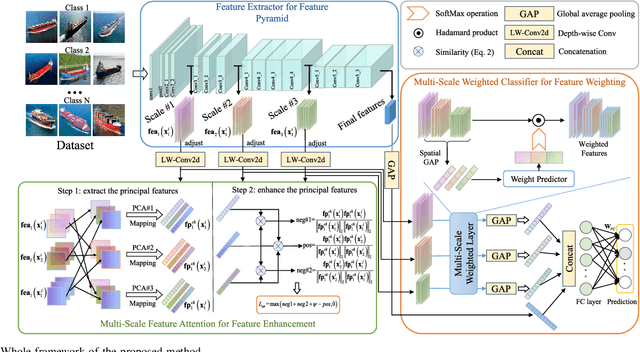

SAR Ship Target Recognition Via Multi-Scale Feature Attention and Adaptive-Weighed Classifier

Aug 20, 2023

Abstract:Maritime surveillance is indispensable for civilian fields, including national maritime safeguarding, channel monitoring, and so on, in which synthetic aperture radar (SAR) ship target recognition is a crucial research field. The core problem in realizing accurate SAR ship target recognition is the large inner-class variance and inter-class overlap of SAR ship features, which limits the recognition performance. Most existing methods plainly extract multi-scale features of the network and utilize each feature scale equally in the classification stage. However, the shallow multi-scale features are not discriminative enough, and each scale feature is not equally effective for recognition. These factors limit recognition performance. Therefore, we propose a SAR ship recognition method via multi-scale feature attention and adaptive-weighted classifier to enhance features in each scale and adaptively choose the effective feature scale for accurate recognition. We first construct an in-network feature pyramid to extract multi-scale features from SAR ship images. Then, the multi-scale feature attention can extract and enhance the principal components from the multi-scale features with more inner-class compactness and inter-class separability. Finally, the adaptive-weighted classifier chooses the effective feature scales in the feature pyramid to achieve the final precise recognition. Through experiments and comparisons on the OpenSARShip dataset, the proposed method is validated to achieve state-of-the-art performance for SAR ship recognition.

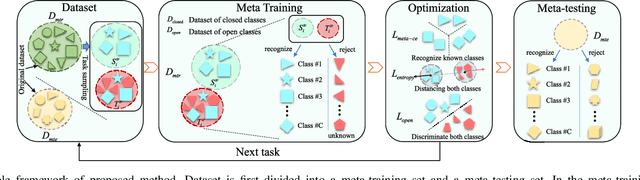

An Entropy-Awareness Meta-Learning Method for SAR Open-Set ATR

Aug 20, 2023

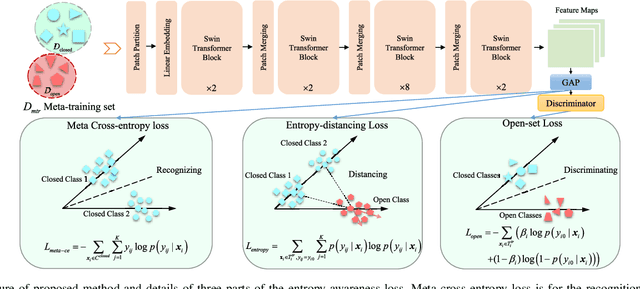

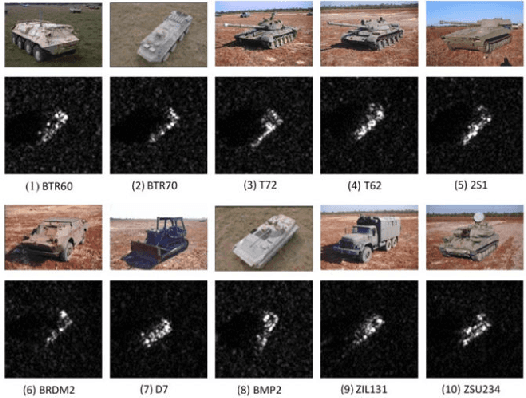

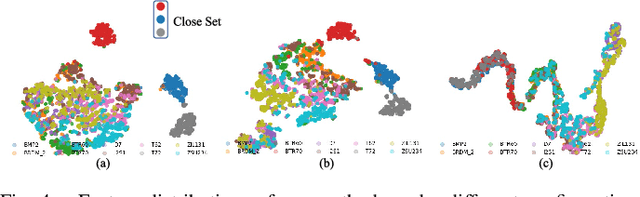

Abstract:Existing synthetic aperture radar automatic target recognition (SAR ATR) methods have been effective for the classification of seen target classes. However, it is more meaningful and challenging to distinguish the unseen target classes, i.e., the open set recognition (OSR) problem, which is an urgent problem for practical SAR ATR. The key to solving OSR is to effectively establish the exclusiveness of the feature distribution of known classes. In this letter, we propose an entropy-awareness meta-learning method that improves the exclusiveness of the feature distribution of known classes, which makes our method effective not only for classifying the seen classes but also for handling unseen classes. Through meta-learning tasks, the proposed method learns to construct a feature space of the dynamically assigned known classes. This feature space is required by the tasks to reject all other classes not belonging to the known classes. At the same time, the proposed entropy-awareness loss helps the model to enhance the feature space with effective and robust discrimination between the known and unknown classes. Therefore, our method can construct a dynamic feature space with discrimination between the known and unknown classes to simultaneously classify the dynamically assigned known classes and reject the unknown classes. Experiments conducted on the moving and stationary target acquisition and recognition (MSTAR) dataset have shown the effectiveness of our method for SAR OSR.

Crucial Feature Capture and Discrimination for Limited Training Data SAR ATR

Aug 20, 2023

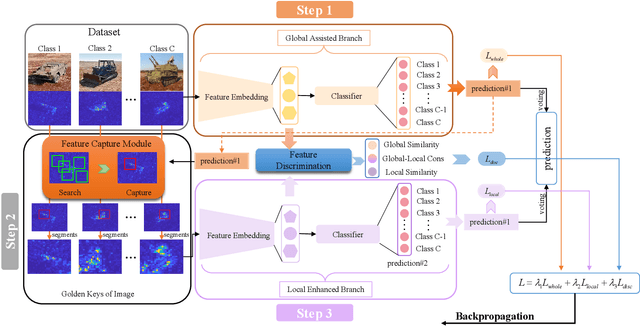

Abstract:Although deep learning-based methods have achieved excellent performance on SAR ATR, the difficulty of acquiring and labeling a large number of SAR images makes these methods, which originally performed well, perform poorly. This may be because most of them take the whole target image as input, while research finds that, under limited training data, the deep learning model cannot capture discriminative image regions in the whole image and instead focuses on image regions that are useless or even harmful for recognition. Therefore, the results are not satisfactory. In this paper, we design a SAR ATR framework for limited training samples, which mainly consists of two branches and two modules: a global assisted branch and a local enhanced branch, and a feature capture module and a feature discrimination module. In every training process, the global assisted branch first finishes the initial recognition based on the whole image. Based on the initial recognition results, the feature capture module automatically searches and locks the crucial image regions for correct recognition, which we name the golden key of the image. Then the local enhanced branch extracts the local features from the captured crucial image regions. Finally, the overall features and local features are input into the classifier and dynamically weighted using the learnable voting parameters to collaboratively complete the final recognition under limited training samples. The model soundness experiments demonstrate the effectiveness of our method through the improvement of feature distribution and recognition probability. The experimental results and comparisons on MSTAR and OPENSAR show that our method has achieved superior recognition performance.
