C. Liu

MPIA, Heidelberg

PointNetPGAP-SLC: A 3D LiDAR-based Place Recognition Approach with Segment-level Consistency Training for Mobile Robots in Horticulture

May 29, 2024

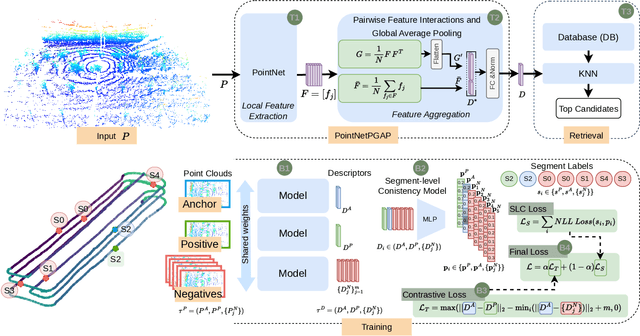

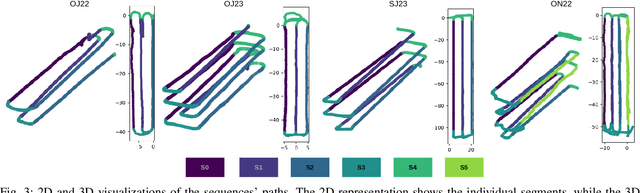

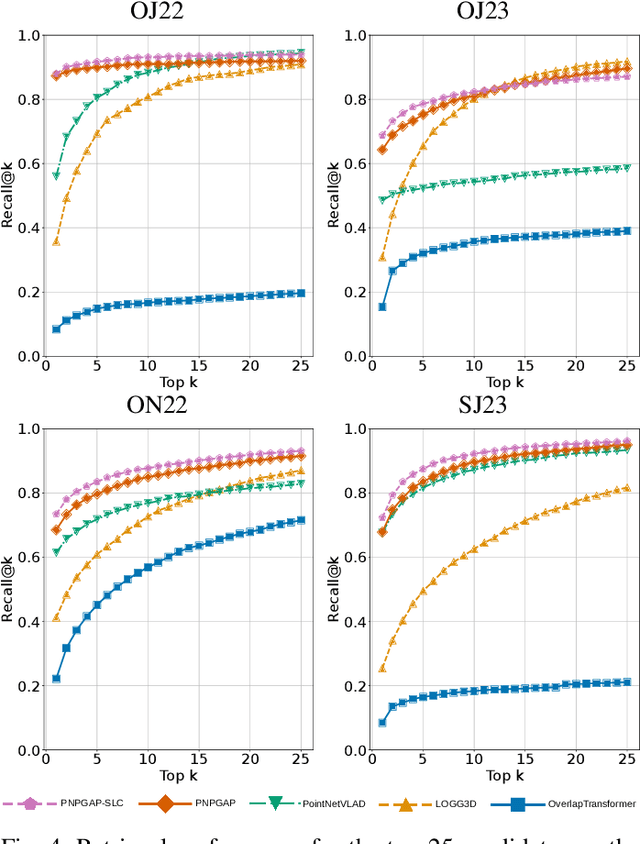

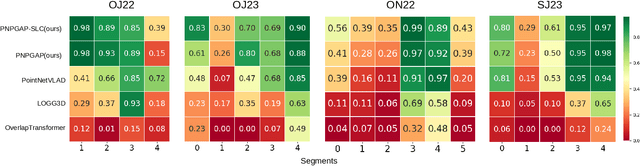

Abstract: This paper addresses robotic place recognition in horticultural environments using 3D-LiDAR technology and deep learning. Three main contributions are proposed: (i) a novel model called PointNetPGAP, which combines a global average pooling aggregator and a pairwise feature interaction aggregator; (ii) a Segment-Level Consistency (SLC) model, used only during training, with the goal of augmenting the contrastive loss with a context-specific training signal to enhance descriptors; and (iii) a novel dataset named HORTO-3DLM featuring sequences from orchards and strawberry plantations. The experimental evaluation, conducted on the new HORTO-3DLM dataset, compares PointNetPGAP at the sequence- and segment-level with state-of-the-art (SOTA) models, including OverlapTransformer, PointNetVLAD, and LOGG3D. Additionally, all models were trained and evaluated using the SLC. Empirical results obtained through a cross-validation evaluation protocol demonstrate the superiority of PointNetPGAP compared to existing SOTA models. PointNetPGAP emerges as the best model in retrieving the top-1 candidate, outperforming PointNetVLAD (the second-best model). Moreover, when comparing the impact of training with the SLC model, performance increased on four out of the five evaluated models, indicating that adding a context-specific signal to the contrastive loss leads to improved descriptors.
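
To make the retrieval setting concrete, here is a minimal sketch of descriptor-based top-1 retrieval using only a global average pooling aggregator. It is not the PointNetPGAP model: the pairwise feature interaction aggregator, the learned per-point features, and the SLC training signal are all omitted. The function names and toy feature values are illustrative assumptions.

```python
import math

def global_average_pool(point_features):
    """Average per-point feature vectors into one global descriptor."""
    n = len(point_features)
    dim = len(point_features[0])
    return [sum(f[d] for f in point_features) / n for d in range(dim)]

def l2_normalize(v):
    """Scale a descriptor to unit length (left unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)

def top1_candidate(query, database):
    """Return the index of the database descriptor closest to the query."""
    def dist(a, b):
        return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))
    return min(range(len(database)), key=lambda i: dist(query, database[i]))

# Toy per-point features (hypothetical values standing in for network outputs).
scan_a = [[1.0, 0.0], [0.8, 0.2]]
scan_b = [[0.0, 1.0], [0.2, 0.8]]
query_scan = [[0.9, 0.1], [1.0, 0.1]]

database = [l2_normalize(global_average_pool(s)) for s in (scan_a, scan_b)]
query = l2_normalize(global_average_pool(query_scan))
best = top1_candidate(query, database)
```

In this toy setup the query scan resembles `scan_a`, so the top-1 candidate is the first database entry.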

ORCHNet: A Robust Global Feature Aggregation approach for 3D LiDAR-based Place recognition in Orchards

Mar 01, 2023

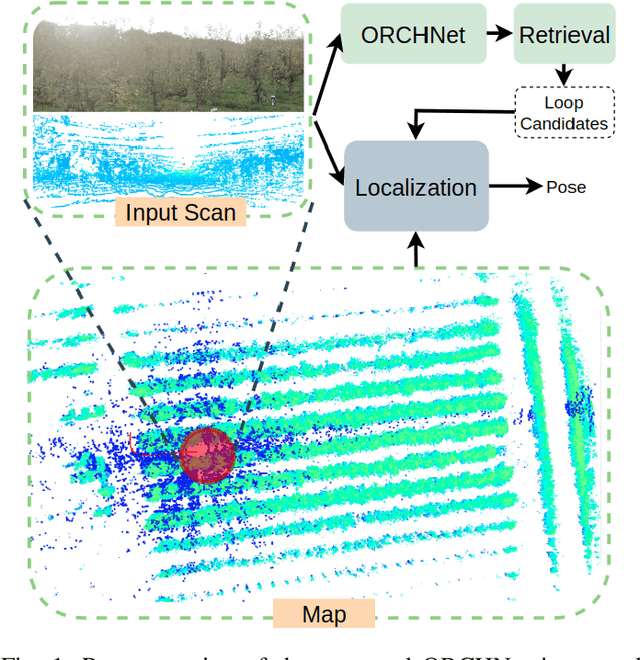

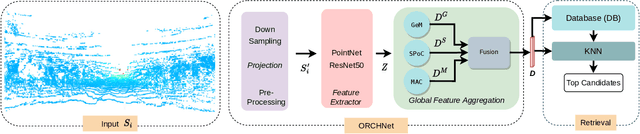

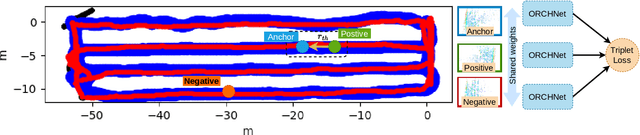

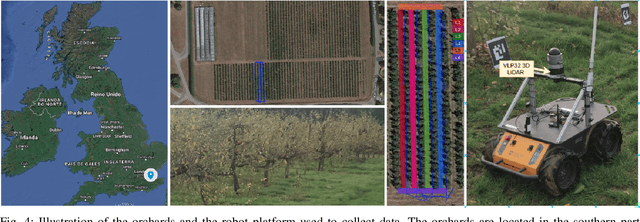

Abstract: Robust and reliable place recognition and loop closure detection in agricultural environments is still an open problem. In particular, orchards are a difficult case study due to structural similarity across the entire field. In this work, we address the place recognition problem in orchards using 3D LiDAR data, which is considered a key modality for robustness. We propose ORCHNet, a deep-learning-based approach that maps 3D-LiDAR scans to global descriptors. Specifically, this work proposes a new global feature aggregation approach, which fuses multiple aggregation methods into a robust global descriptor. ORCHNet is evaluated on real-world data collected in orchards, comprising data from the summer and autumn seasons. To assess robustness, we compare ORCHNet with state-of-the-art aggregation approaches on data from the same season and across seasons. We additionally evaluate the proposed approach as part of a localization framework, where ORCHNet is used as a loop closure detector. The empirical results indicate that, on the place recognition task, ORCHNet outperforms the remaining approaches and is also more robust across seasons. As for localization, the edge cases where the path goes through the trees are solved when integrating ORCHNet as a loop detector, showing the potential applicability of the proposed approach in this task. The code and dataset will be publicly available at: https://github.com/Cybonic/ORCHNet.git

A Memristor based Unsupervised Neuromorphic System Towards Fast and Energy-Efficient GAN

May 09, 2018

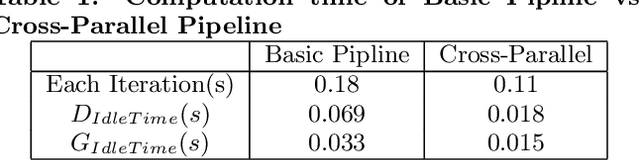

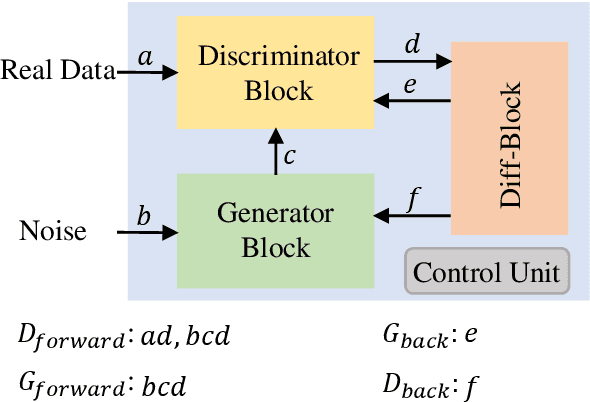

Abstract: Deep learning has gained immense success in pushing today's artificial intelligence forward. To address the challenge of limited labeled data in supervised learning, unsupervised learning was proposed years ago, but low accuracy hinders its realistic applications. The generative adversarial network (GAN) has emerged as an unsupervised learning approach with promising accuracy and is under extensive study. However, the execution of GANs is extremely memory- and computation-intensive, resulting in ultra-low speed and high power consumption. In this work, we propose a holistic solution for fast and energy-efficient GAN computation through a memristor-based neuromorphic system. First, we exploit a hardware and software co-design approach to map the computation blocks in GAN efficiently. We also propose an efficient data flow for optimal parallelism in training and testing, depending on the computation correlations between different computing blocks. To compute the unique and complex loss of GAN, we develop a diff-block with optimized accuracy and performance. The experimental results on big data show that our design achieves 2.8x speedup and 6.1x energy saving compared with the traditional GPU accelerator, as well as 5.5x speedup and 1.4x energy saving compared with the previous FPGA-based accelerator.

Towards Accurate and High-Speed Spiking Neuromorphic Systems with Data Quantization-Aware Deep Networks

May 08, 2018

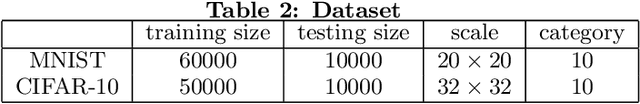

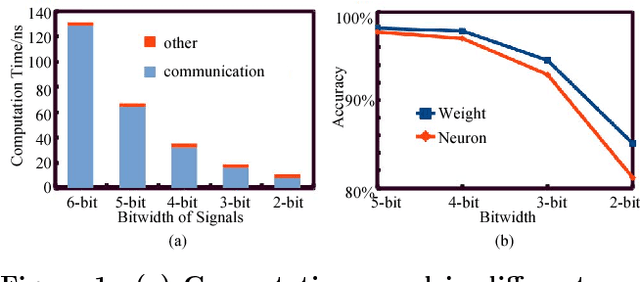

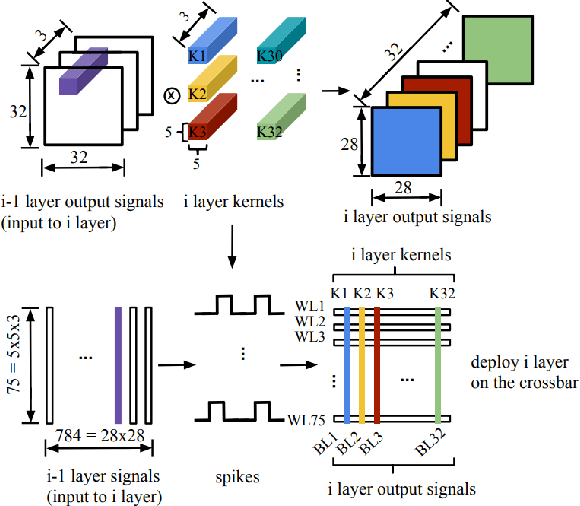

Abstract: Deep Neural Networks (DNNs) have gained immense success in cognitive applications and greatly pushed today's artificial intelligence forward. The biggest challenge in executing DNNs is their extremely data-intensive computations. Computing efficiency in speed and energy is constrained when traditional computing platforms are employed for such computationally hungry executions. Spiking neuromorphic computing (SNC) has been widely investigated for deep network implementation owing to its high efficiency in computation and communication. However, the weights and signals of DNNs must be quantized when deploying DNNs on SNC, which results in unacceptable accuracy loss. Previous works mainly focus on weight discretization, while inter-layer signals are largely neglected. In this work, we propose to represent DNNs with fixed integer inter-layer signals and fixed-point weights while holding good accuracy. We implement the proposed DNNs on a memristor-based SNC system as a deployment example. With 4-bit data representation, our results show that the accuracy loss can be controlled within 0.02% (2.3%) on MNIST (CIFAR-10). Compared with 8-bit dynamic fixed-point DNNs, our system can achieve more than 9.8x speedup, 89.1% energy saving, and 30% area saving.
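
As a rough illustration of what a 4-bit representation of weights and inter-layer signals can look like, the sketch below applies generic uniform quantization with clipping. The abstract does not describe the paper's actual quantization scheme, so the function names, the choice of 3 fractional bits, and the unsigned signal range are assumptions made for the example only.

```python
def quantize_fixed_point(x, bits=4, frac_bits=3):
    """Round x to a signed fixed-point value with `bits` total bits.

    Assumed format: two's-complement integer code scaled by 2**-frac_bits.
    """
    scale = 1 << frac_bits
    qmin = -(1 << (bits - 1))       # most negative integer code, e.g. -8
    qmax = (1 << (bits - 1)) - 1    # most positive integer code, e.g. 7
    code = round(x * scale)
    code = max(qmin, min(qmax, code))  # clip to the representable range
    return code / scale

def quantize_signal(x, bits=4):
    """Round an inter-layer signal to an unsigned integer in [0, 2**bits - 1]."""
    qmax = (1 << bits) - 1
    return max(0, min(qmax, round(x)))

weights = [0.3, -0.6, 2.0]
signals = [3.4, 20.7, -3.2]
q_weights = [quantize_fixed_point(w) for w in weights]
q_signals = [quantize_signal(s) for s in signals]
```

Values outside the representable range saturate at the nearest limit, which is one common source of the accuracy loss that quantization-aware training aims to reduce.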

The expected performance of stellar parametrization with Gaia spectrophotometry

Aug 29, 2012

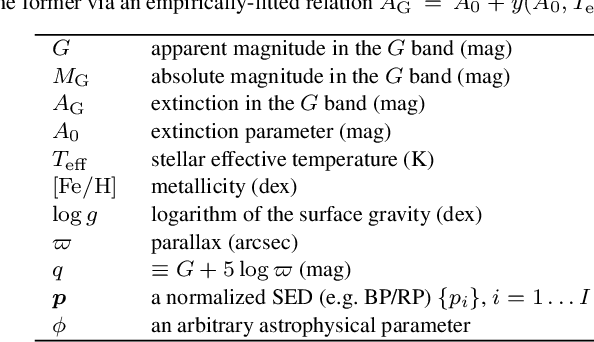

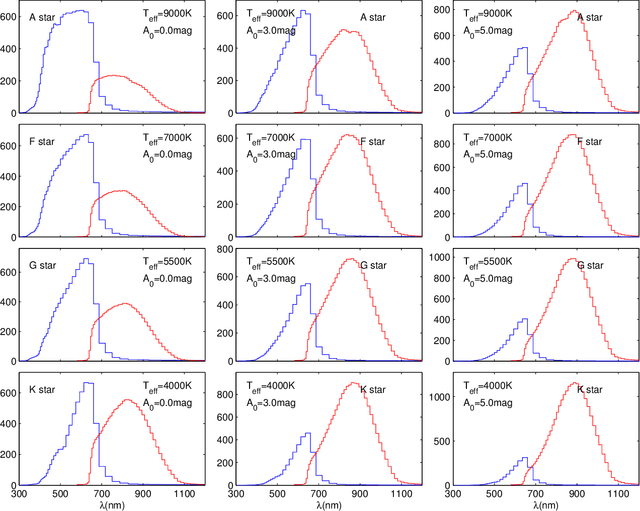

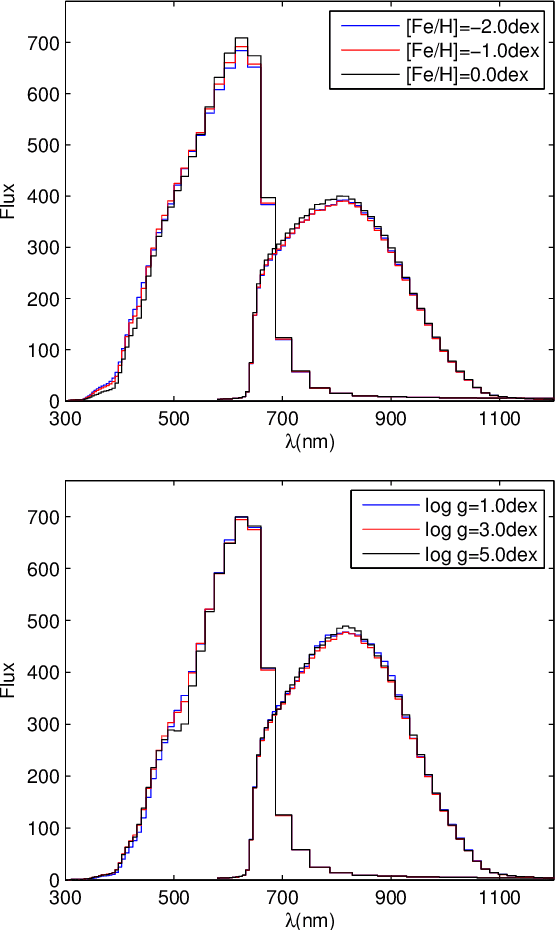

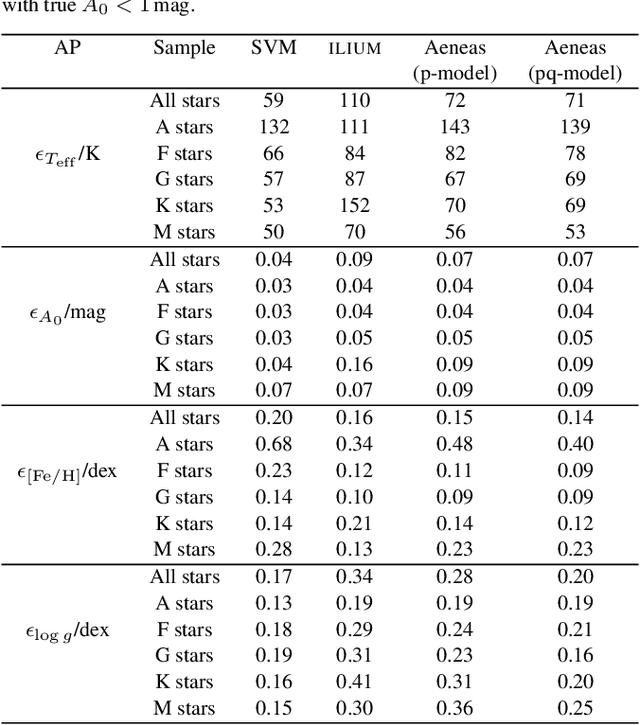

Abstract: Gaia will obtain astrometry and spectrophotometry for essentially all sources in the sky down to a broad-band magnitude limit of G=20, an expected yield of 10^9 stars. Its main scientific objective is to reveal the formation and evolution of our Galaxy through chemo-dynamical analysis. In addition to inferring positions, parallaxes and proper motions from the astrometry, we must also infer the astrophysical parameters of the stars from the spectrophotometry, the BP/RP spectrum. Here we investigate the performance of three different algorithms (SVM, ILIUM, Aeneas) for estimating the effective temperature, line-of-sight interstellar extinction, metallicity and surface gravity of A-M stars over a wide range of these parameters and over the full magnitude range Gaia will observe (G=6-20 mag). One of the algorithms, Aeneas, infers the posterior probability density function over all parameters, and can optionally take into account the parallax and the Hertzsprung-Russell diagram to improve the estimates. For all algorithms the accuracy of estimation depends on G and on the value of the parameters themselves, so a broad summary of performance is only approximate. For stars at G=15 with less than two magnitudes extinction, we expect to be able to estimate Teff to within 1%, logg to 0.1-0.2 dex, and [Fe/H] (for FGKM stars) to 0.1-0.2 dex, just using the BP/RP spectrum (mean absolute error statistics are quoted). Performance degrades at larger extinctions, but not always by a large amount. Extinction can be estimated to an accuracy of 0.05-0.2 mag for stars across the full parameter range with a priori unknown extinction between 0 and 10 mag. Performance degrades at fainter magnitudes, but even at G=19 we can estimate logg to better than 0.2 dex for all spectral types, and [Fe/H] to within 0.35 dex for FGKM stars, for extinctions below 1 mag.
