C. Chen

Observation of high-energy neutrinos from the Galactic plane

Jul 10, 2023Abstract:The origin of high-energy cosmic rays, atomic nuclei that continuously impact Earth's atmosphere, has been a mystery for over a century. Due to deflection in interstellar magnetic fields, cosmic rays from the Milky Way arrive at Earth from random directions. However, near their sources and during propagation, cosmic rays interact with matter and produce high-energy neutrinos. We search for neutrino emission using machine learning techniques applied to ten years of data from the IceCube Neutrino Observatory. We identify neutrino emission from the Galactic plane at the 4.5$\sigma$ level of significance, by comparing diffuse emission models to a background-only hypothesis. The signal is consistent with modeled diffuse emission from the Galactic plane, but could also arise from a population of unresolved point sources.

* Submitted on May 12th, 2022; Accepted on May 4th, 2023

Data efficient reinforcement learning and adaptive optimal perimeter control of network traffic dynamics

Sep 13, 2022

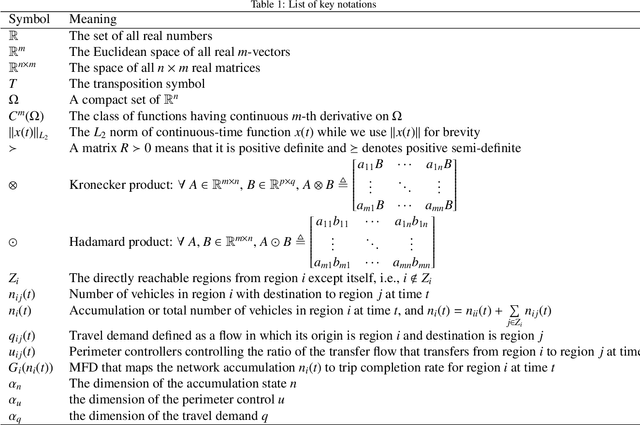

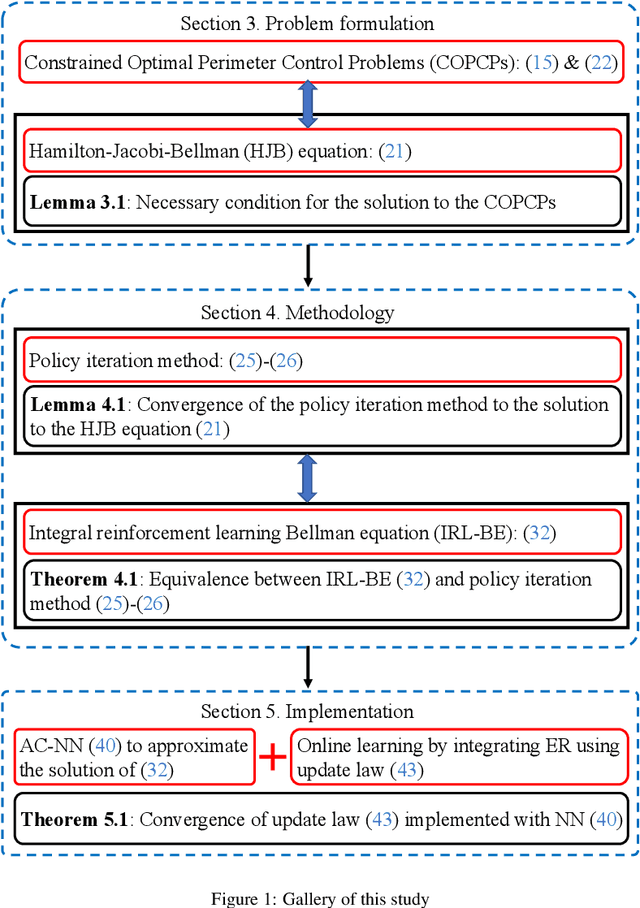

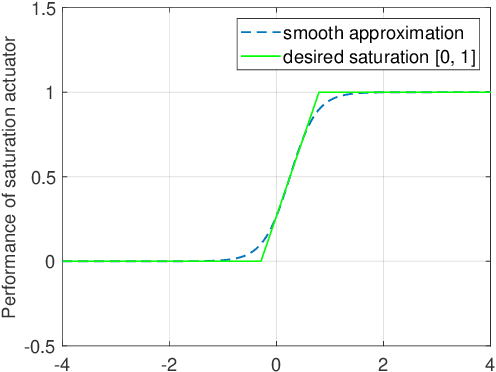

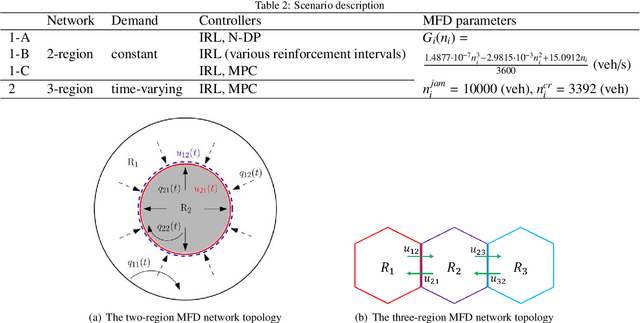

Abstract:Existing data-driven and feedback traffic control strategies do not consider the heterogeneity of real-time data measurements. Besides, traditional reinforcement learning (RL) methods for traffic control usually converge slowly for lacking data efficiency. Moreover, conventional optimal perimeter control schemes require exact knowledge of the system dynamics and thus would be fragile to endogenous uncertainties. To handle these challenges, this work proposes an integral reinforcement learning (IRL) based approach to learning the macroscopic traffic dynamics for adaptive optimal perimeter control. This work makes the following primary contributions to the transportation literature: (a) A continuous-time control is developed with discrete gain updates to adapt to the discrete-time sensor data. (b) To reduce the sampling complexity and use the available data more efficiently, the experience replay (ER) technique is introduced to the IRL algorithm. (c) The proposed method relaxes the requirement on model calibration in a "model-free" manner that enables robustness against modeling uncertainty and enhances the real-time performance via a data-driven RL algorithm. (d) The convergence of the IRL-based algorithms and the stability of the controlled traffic dynamics are proven via the Lyapunov theory. The optimal control law is parameterized and then approximated by neural networks (NN), which moderates the computational complexity. Both state and input constraints are considered while no model linearization is required. Numerical examples and simulation experiments are presented to verify the effectiveness and efficiency of the proposed method.

Graph Neural Networks for Low-Energy Event Classification & Reconstruction in IceCube

Sep 07, 2022Abstract:IceCube, a cubic-kilometer array of optical sensors built to detect atmospheric and astrophysical neutrinos between 1 GeV and 1 PeV, is deployed 1.45 km to 2.45 km below the surface of the ice sheet at the South Pole. The classification and reconstruction of events from the in-ice detectors play a central role in the analysis of data from IceCube. Reconstructing and classifying events is a challenge due to the irregular detector geometry, inhomogeneous scattering and absorption of light in the ice and, below 100 GeV, the relatively low number of signal photons produced per event. To address this challenge, it is possible to represent IceCube events as point cloud graphs and use a Graph Neural Network (GNN) as the classification and reconstruction method. The GNN is capable of distinguishing neutrino events from cosmic-ray backgrounds, classifying different neutrino event types, and reconstructing the deposited energy, direction and interaction vertex. Based on simulation, we provide a comparison in the 1-100 GeV energy range to the current state-of-the-art maximum likelihood techniques used in current IceCube analyses, including the effects of known systematic uncertainties. For neutrino event classification, the GNN increases the signal efficiency by 18% at a fixed false positive rate (FPR), compared to current IceCube methods. Alternatively, the GNN offers a reduction of the FPR by over a factor 8 (to below half a percent) at a fixed signal efficiency. For the reconstruction of energy, direction, and interaction vertex, the resolution improves by an average of 13%-20% compared to current maximum likelihood techniques in the energy range of 1-30 GeV. The GNN, when run on a GPU, is capable of processing IceCube events at a rate nearly double of the median IceCube trigger rate of 2.7 kHz, which opens the possibility of using low energy neutrinos in online searches for transient events.

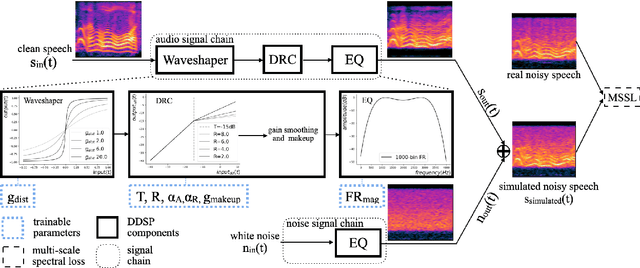

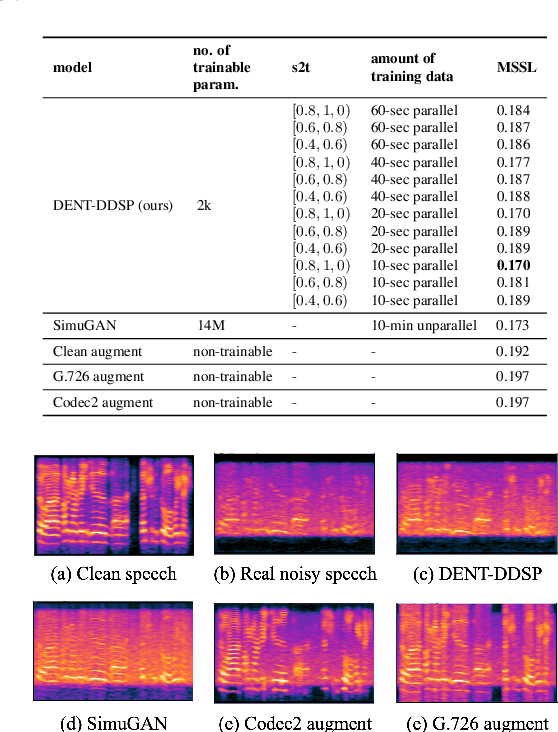

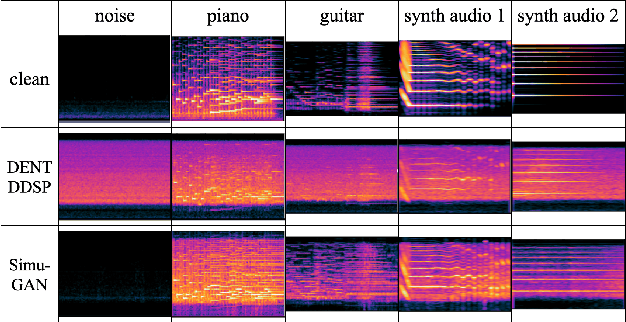

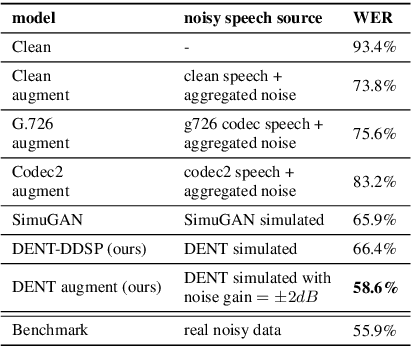

DENT-DDSP: Data-efficient noisy speech generator using differentiable digital signal processors for explicit distortion modelling and noise-robust speech recognition

Aug 01, 2022

Abstract:The performances of automatic speech recognition (ASR) systems degrade drastically under noisy conditions. Explicit distortion modelling (EDM), as a feature compensation step, is able to enhance ASR systems under such conditions by simulating the in-domain noisy speeches from the clean counterparts. Yet, existing distortion models are either non-trainable or unexplainable and often lack controllability and generalization ability. In this paper, we propose a fully explainable and controllable model: DENT-DDSP to achieve EDM. DENT-DDSP utilizes novel differentiable digital signal processing (DDSP) components and requires only 10 seconds of training data to achieve high fidelity. The experiment shows that the simulated noisy data from DENT-DDSP achieves the highest simulation fidelity compared to other baseline models in terms of multi-scale spectral loss (MSSL). Moreover, to validate whether the data simulated by DENT-DDSP are able to replace the scarce in-domain noisy data in the noise-robust ASR tasks, several downstream ASR models with the same architecture are trained using the simulated data and the real data. The experiment shows that the model trained with the simulated noisy data from DENT-DDSP achieves similar performances to the benchmark with a 2.7\% difference in terms of word error rate (WER). The code of the model is released online.

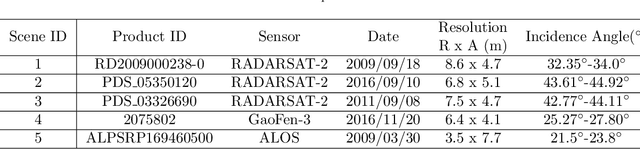

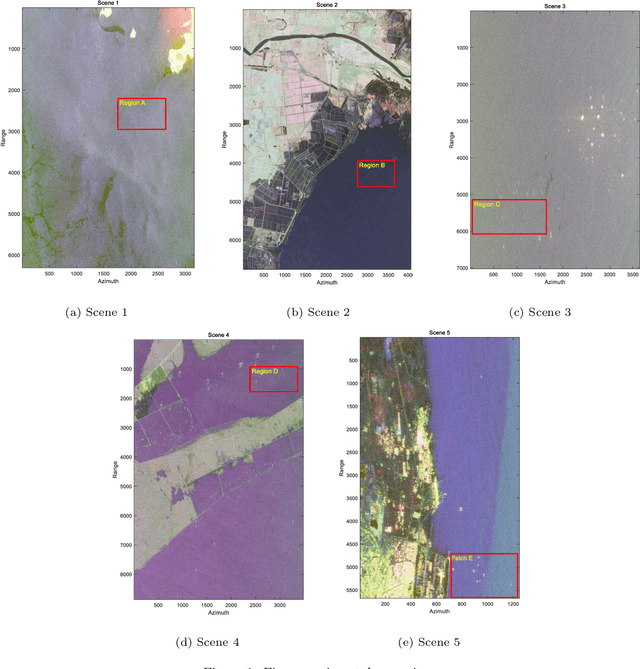

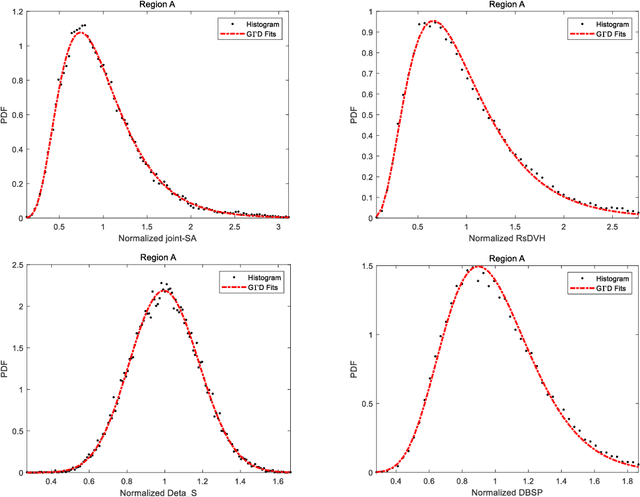

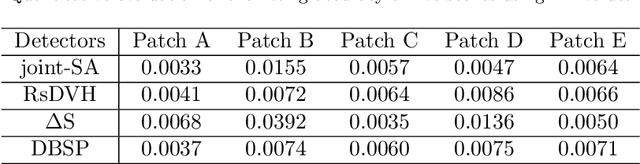

A Novel Full-Polarization SAR Images Ship Detector Based on the Scattering Mechanisms and the Wave Polarization Anisotropy

Dec 07, 2021

Abstract:Synthetic aperture radar (SAR) is considered being a good option for earth observation with its unique advantages. In this paper, we proposed an adaptive ship detector using full-polarization SAR images. First, by thoroughly investigating the scattering characteristics between ships and their background, and the wave polarization anisotropy, a novel ship detector is proposed by jointing the two characteristics, named Scattering-Anisotropy joint (joint-SA). Based on the theoretical analysis, we showed that the joint-SA is an effective physical quantity to show the difference between the ship and its background, and thus joint-SA can be used for ship detection of full-polarization image data. Second, the generalized Gamma distribution was used to characterize the joint-SA statistics of sea clutter with a large range of homogeneity. As a result, an adaptive constant false alarm rate (CFAR) method was implemented based on the joint-SA. Finally, RADARSAT-2 and GF-3 data in C-band and ALOS data in L-band are used for verification. We tested on five datasets, and the experimental results verify the correctness and superiority of the constant false alarm rate (CFAR) method based on the joint-SA. In addition, the experimental results also showed that the signal-clutter ratio (SCR) of the proposed ship detector joint-SA (33.17 dB, 35.98 dB, 57.25 dB) is better than that of DBSP (8.92 dB, 3.43 dB, 25.40 dB) and RsDVH (17.28 dB, 11.17 dB, 54.55 dB). More importantly, the proposed detector joint-SA has higher detection accuracy and a lower false alarm rate.

A Convolutional Neural Network based Cascade Reconstruction for the IceCube Neutrino Observatory

Jan 27, 2021Abstract:Continued improvements on existing reconstruction methods are vital to the success of high-energy physics experiments, such as the IceCube Neutrino Observatory. In IceCube, further challenges arise as the detector is situated at the geographic South Pole where computational resources are limited. However, to perform real-time analyses and to issue alerts to telescopes around the world, powerful and fast reconstruction methods are desired. Deep neural networks can be extremely powerful, and their usage is computationally inexpensive once the networks are trained. These characteristics make a deep learning-based approach an excellent candidate for the application in IceCube. A reconstruction method based on convolutional architectures and hexagonally shaped kernels is presented. The presented method is robust towards systematic uncertainties in the simulation and has been tested on experimental data. In comparison to standard reconstruction methods in IceCube, it can improve upon the reconstruction accuracy, while reducing the time necessary to run the reconstruction by two to three orders of magnitude.

Discriminative Feature Representation with Spatio-temporal Cues for Vehicle Re-identification

Nov 13, 2020

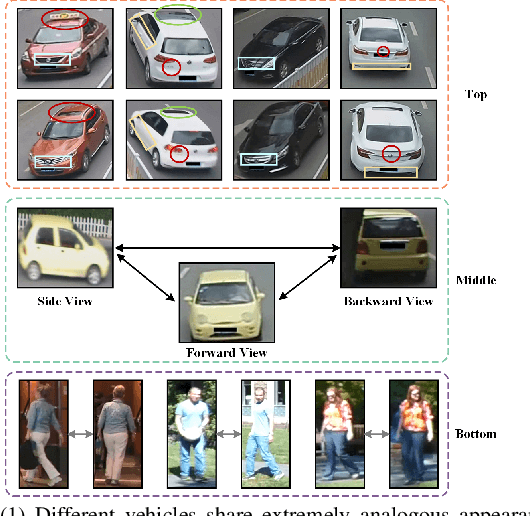

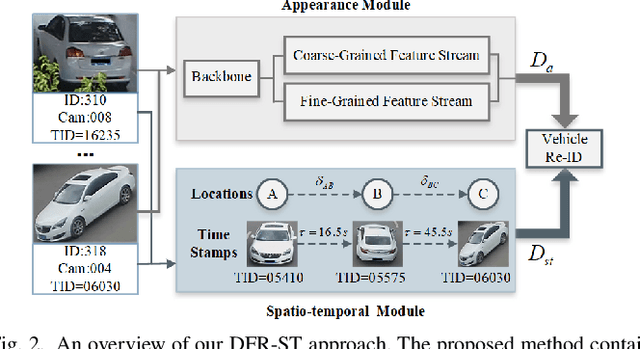

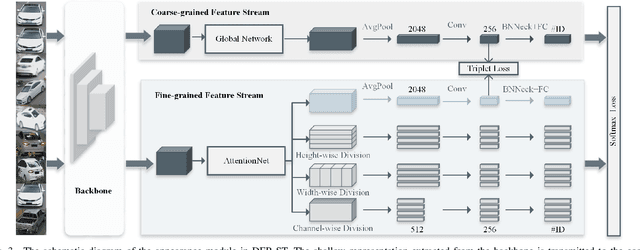

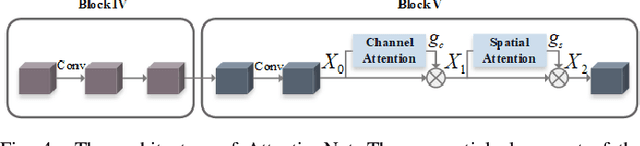

Abstract:Vehicle re-identification (re-ID) aims to discover and match the target vehicles from a gallery image set taken by different cameras on a wide range of road networks. It is crucial for lots of applications such as security surveillance and traffic management. The remarkably similar appearances of distinct vehicles and the significant changes of viewpoints and illumination conditions take grand challenges to vehicle re-ID. Conventional solutions focus on designing global visual appearances without sufficient consideration of vehicles' spatiotamporal relationships in different images. In this paper, we propose a novel discriminative feature representation with spatiotemporal clues (DFR-ST) for vehicle re-ID. It is capable of building robust features in the embedding space by involving appearance and spatio-temporal information. Based on this multi-modal information, the proposed DFR-ST constructs an appearance model for a multi-grained visual representation by a two-stream architecture and a spatio-temporal metric to provide complementary information. Experimental results on two public datasets demonstrate DFR-ST outperforms the state-of-the-art methods, which validate the effectiveness of the proposed method.

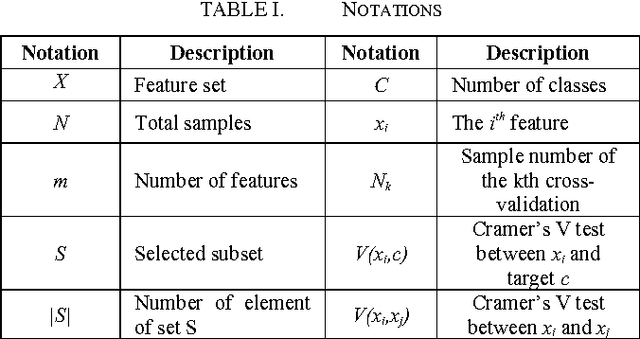

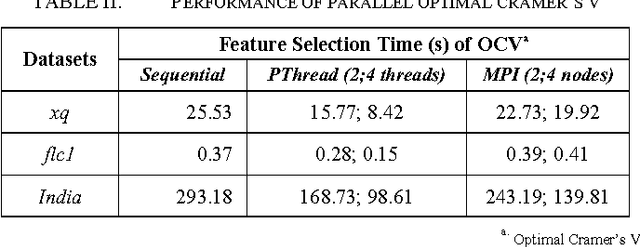

Feature Selection Parallel Technique for Remotely Sensed Imagery Classification

Apr 11, 2017

Abstract:Remote sensing research focusing on feature selection has long attracted the attention of the remote sensing community because feature selection is a prerequisite for image processing and various applications. Different feature selection methods have been proposed to improve the classification accuracy. They vary from basic search techniques to clonal selections, and various optimal criteria have been investigated. Recently, methods using dependence-based measures have attracted much attention due to their ability to deal with very high dimensional datasets. However, these methods are based on Cramers V test, which has performance issues with large datasets. In this paper, we propose a parallel approach to improve their performance. We evaluate our approach on hyper-spectral and high spatial resolution images and compare it to the proposed methods with a centralized version as preliminary results. The results are very promising.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge