Branislav Bošanský

How to Train your Antivirus: RL-based Hardening through the Problem-Space

Feb 29, 2024

Abstract:ML-based malware detection on dynamic analysis reports is vulnerable to both evasion and spurious correlations. In this work, we investigate a specific ML architecture employed in the pipeline of a widely-known commercial antivirus company, with the goal to harden it against adversarial malware. Adversarial training, the sole defensive technique that can confer empirical robustness, is not applicable out of the box in this domain, for the principal reason that gradient-based perturbations rarely map back to feasible problem-space programs. We introduce a novel Reinforcement Learning approach for constructing adversarial examples, a constituent part of adversarially training a model against evasion. Our approach comes with multiple advantages. It performs modifications that are feasible in the problem-space, and only those; thus it circumvents the inverse mapping problem. It also makes possible to provide theoretical guarantees on the robustness of the model against a particular set of adversarial capabilities. Our empirical exploration validates our theoretical insights, where we can consistently reach 0\% Attack Success Rate after a few adversarial retraining iterations.

Explaining Classifiers Trained on Raw Hierarchical Multiple-Instance Data

Aug 04, 2022

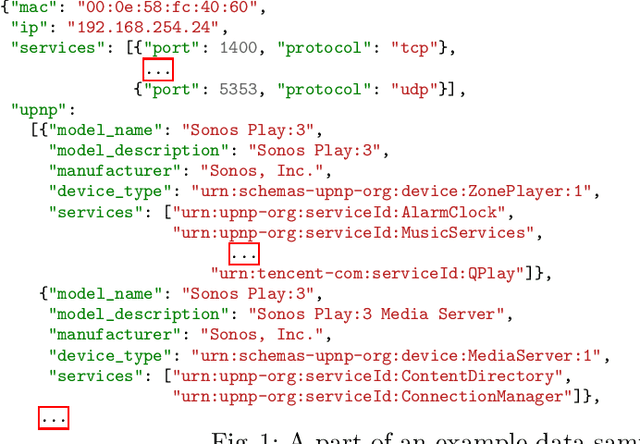

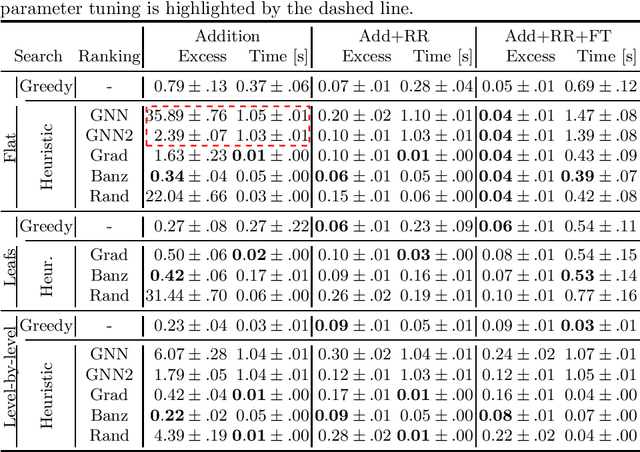

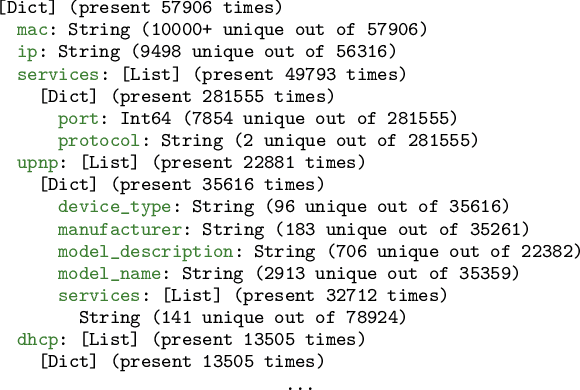

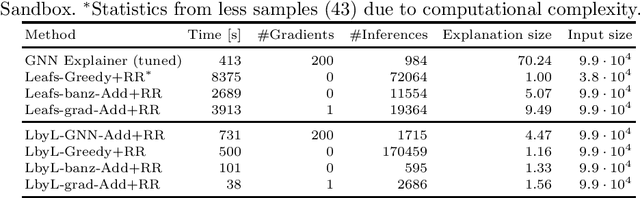

Abstract:Learning from raw data input, thus limiting the need for feature engineering, is a component of many successful applications of machine learning methods in various domains. While many problems naturally translate into a vector representation directly usable in standard classifiers, a number of data sources have the natural form of structured data interchange formats (e.g., security logs in JSON/XML format). Existing methods, such as in Hierarchical Multiple Instance Learning (HMIL), allow learning from such data in their raw form. However, the explanation of the classifiers trained on raw structured data remains largely unexplored. By treating these models as sub-set selections problems, we demonstrate how interpretable explanations, with favourable properties, can be generated using computationally efficient algorithms. We compare to an explanation technique adopted from graph neural networks showing an order of magnitude speed-up and higher-quality explanations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge