Binghui Peng

High dimensional online calibration in polynomial time

Apr 12, 2025Abstract:In online (sequential) calibration, a forecaster predicts probability distributions over a finite outcome space $[d]$ over a sequence of $T$ days, with the goal of being calibrated. While asymptotically calibrated strategies are known to exist, they suffer from the curse of dimensionality: the best known algorithms require $\exp(d)$ days to achieve non-trivial calibration. In this work, we present the first asymptotically calibrated strategy that guarantees non-trivial calibration after a polynomial number of rounds. Specifically, for any desired accuracy $\epsilon > 0$, our forecaster becomes $\epsilon$-calibrated after $T = d^{O(1/\epsilon^2)}$ days. We complement this result with a lower bound, proving that at least $T = d^{\Omega(\log(1/\epsilon))}$ rounds are necessary to achieve $\epsilon$-calibration. Our results resolve the open questions posed by [Abernethy-Mannor'11, Hazan-Kakade'12]. Our algorithm is inspired by recent breakthroughs in swap regret minimization [Peng-Rubinstein'24, Dagan et al.'24]. Despite its strong theoretical guarantees, the approach is remarkably simple and intuitive: it randomly selects among a set of sub-forecasters, each of which predicts the empirical outcome frequency over recent time windows.

Theoretical limitations of multi-layer Transformer

Dec 04, 2024Abstract:Transformers, especially the decoder-only variants, are the backbone of most modern large language models; yet we do not have much understanding of their expressive power except for the simple $1$-layer case. Due to the difficulty of analyzing multi-layer models, all previous work relies on unproven complexity conjectures to show limitations for multi-layer Transformers. In this work, we prove the first $\textit{unconditional}$ lower bound against multi-layer decoder-only transformers. For any constant $L$, we prove that any $L$-layer decoder-only transformer needs a polynomial model dimension ($n^{\Omega(1)}$) to perform sequential composition of $L$ functions over an input of $n$ tokens. As a consequence, our results give: (1) the first depth-width trade-off for multi-layer transformers, exhibiting that the $L$-step composition task is exponentially harder for $L$-layer models compared to $(L+1)$-layer ones; (2) an unconditional separation between encoder and decoder, exhibiting a hard task for decoders that can be solved by an exponentially shallower and smaller encoder; (3) a provable advantage of chain-of-thought, exhibiting a task that becomes exponentially easier with chain-of-thought. On the technical side, we propose the multi-party $\textit{autoregressive}$ $\textit{communication}$ $\textit{model}$ that captures the computation of a decoder-only Transformer. We also introduce a new proof technique that finds a certain $\textit{indistinguishable}$ $\textit{decomposition}$ of all possible inputs iteratively for proving lower bounds in this model. We believe our new communication model and proof technique will be helpful to further understand the computational power of transformers.

The complexity of approximate (coarse) correlated equilibrium for incomplete information games

Jun 04, 2024Abstract:We study the iteration complexity of decentralized learning of approximate correlated equilibria in incomplete information games. On the negative side, we prove that in $\mathit{extensive}$-$\mathit{form}$ $\mathit{games}$, assuming $\mathsf{PPAD} \not\subset \mathsf{TIME}(n^{\mathsf{polylog}(n)})$, any polynomial-time learning algorithms must take at least $2^{\log_2^{1-o(1)}(|\mathcal{I}|)}$ iterations to converge to the set of $\epsilon$-approximate correlated equilibrium, where $|\mathcal{I}|$ is the number of nodes in the game and $\epsilon > 0$ is an absolute constant. This nearly matches, up to the $o(1)$ term, the algorithms of [PR'24, DDFG'24] for learning $\epsilon$-approximate correlated equilibrium, and resolves an open question of Anagnostides, Kalavasis, Sandholm, and Zampetakis [AKSZ'24]. Our lower bound holds even for the easier solution concept of $\epsilon$-approximate $\mathit{coarse}$ correlated equilibrium On the positive side, we give uncoupled dynamics that reach $\epsilon$-approximate correlated equilibria of a $\mathit{Bayesian}$ $\mathit{game}$ in polylogarithmic iterations, without any dependence of the number of types. This demonstrates a separation between Bayesian games and extensive-form games.

On Limitations of the Transformer Architecture

Feb 26, 2024

Abstract:What are the root causes of hallucinations in large language models (LLMs)? We use Communication Complexity to prove that the Transformer layer is incapable of composing functions (e.g., identify a grandparent of a person in a genealogy) if the domains of the functions are large enough; we show through examples that this inability is already empirically present when the domains are quite small. We also point out that several mathematical tasks that are at the core of the so-called compositional tasks thought to be hard for LLMs are unlikely to be solvable by Transformers, for large enough instances and assuming that certain well accepted conjectures in the field of Computational Complexity are true.

The sample complexity of multi-distribution learning

Dec 07, 2023Abstract:Multi-distribution learning generalizes the classic PAC learning to handle data coming from multiple distributions. Given a set of $k$ data distributions and a hypothesis class of VC dimension $d$, the goal is to learn a hypothesis that minimizes the maximum population loss over $k$ distributions, up to $\epsilon$ additive error. In this paper, we settle the sample complexity of multi-distribution learning by giving an algorithm of sample complexity $\widetilde{O}((d+k)\epsilon^{-2}) \cdot (k/\epsilon)^{o(1)}$. This matches the lower bound up to sub-polynomial factor and resolves the COLT 2023 open problem of Awasthi, Haghtalab and Zhao [AHZ23].

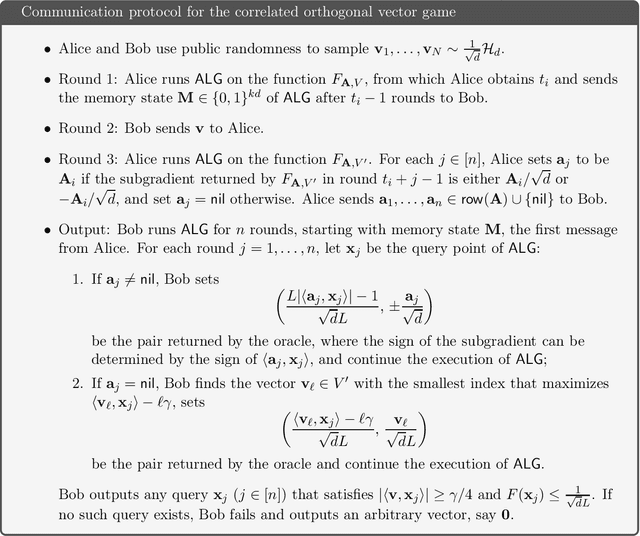

Fast swap regret minimization and applications to approximate correlated equilibria

Oct 30, 2023Abstract:We give a simple and computationally efficient algorithm that, for any constant $\varepsilon>0$, obtains $\varepsilon T$-swap regret within only $T = \mathsf{polylog}(n)$ rounds; this is an exponential improvement compared to the super-linear number of rounds required by the state-of-the-art algorithm, and resolves the main open problem of [Blum and Mansour 2007]. Our algorithm has an exponential dependence on $\varepsilon$, but we prove a new, matching lower bound. Our algorithm for swap regret implies faster convergence to $\varepsilon$-Correlated Equilibrium ($\varepsilon$-CE) in several regimes: For normal form two-player games with $n$ actions, it implies the first uncoupled dynamics that converges to the set of $\varepsilon$-CE in polylogarithmic rounds; a $\mathsf{polylog}(n)$-bit communication protocol for $\varepsilon$-CE in two-player games (resolving an open problem mentioned by [Babichenko-Rubinstein'2017, Goos-Rubinstein'2018, Ganor-CS'2018]; and an $\tilde{O}(n)$-query algorithm for $\varepsilon$-CE (resolving an open problem of [Babichenko'2020] and obtaining the first separation between $\varepsilon$-CE and $\varepsilon$-Nash equilibrium in the query complexity model). For extensive-form games, our algorithm implies a PTAS for $\mathit{normal}$ $\mathit{form}$ $\mathit{correlated}$ $\mathit{equilibria}$, a solution concept often conjectured to be computationally intractable (e.g. [Stengel-Forges'08, Fujii'23]).

The complexity of non-stationary reinforcement learning

Jul 13, 2023

Abstract:The problem of continual learning in the domain of reinforcement learning, often called non-stationary reinforcement learning, has been identified as an important challenge to the application of reinforcement learning. We prove a worst-case complexity result, which we believe captures this challenge: Modifying the probabilities or the reward of a single state-action pair in a reinforcement learning problem requires an amount of time almost as large as the number of states in order to keep the value function up to date, unless the strong exponential time hypothesis (SETH) is false; SETH is a widely accepted strengthening of the P $\neq$ NP conjecture. Recall that the number of states in current applications of reinforcement learning is typically astronomical. In contrast, we show that just $\textit{adding}$ a new state-action pair is considerably easier to implement.

Memory-Query Tradeoffs for Randomized Convex Optimization

Jun 21, 2023

Abstract:We show that any randomized first-order algorithm which minimizes a $d$-dimensional, $1$-Lipschitz convex function over the unit ball must either use $\Omega(d^{2-\delta})$ bits of memory or make $\Omega(d^{1+\delta/6-o(1)})$ queries, for any constant $\delta\in (0,1)$ and when the precision $\epsilon$ is quasipolynomially small in $d$. Our result implies that cutting plane methods, which use $\tilde{O}(d^2)$ bits of memory and $\tilde{O}(d)$ queries, are Pareto-optimal among randomized first-order algorithms, and quadratic memory is required to achieve optimal query complexity for convex optimization.

Near Optimal Memory-Regret Tradeoff for Online Learning

Mar 09, 2023Abstract:In the experts problem, on each of $T$ days, an agent needs to follow the advice of one of $n$ ``experts''. After each day, the loss associated with each expert's advice is revealed. A fundamental result in learning theory says that the agent can achieve vanishing regret, i.e. their cumulative loss is within $o(T)$ of the cumulative loss of the best-in-hindsight expert. Can the agent perform well without sufficient space to remember all the experts? We extend a nascent line of research on this question in two directions: $\bullet$ We give a new algorithm against the oblivious adversary, improving over the memory-regret tradeoff obtained by [PZ23], and nearly matching the lower bound of [SWXZ22]. $\bullet$ We also consider an adaptive adversary who can observe past experts chosen by the agent. In this setting we give both a new algorithm and a novel lower bound, proving that roughly $\sqrt{n}$ memory is both necessary and sufficient for obtaining $o(T)$ regret.

Online Prediction in Sub-linear Space

Jul 16, 2022

Abstract:We provide the first sub-linear space and sub-linear regret algorithm for online learning with expert advice (against an oblivious adversary), addressing an open question raised recently by Srinivas, Woodruff, Xu and Zhou (STOC 2022). We also demonstrate a separation between oblivious and (strong) adaptive adversaries by proving a linear memory lower bound of any sub-linear regret algorithm against an adaptive adversary. Our algorithm is based on a novel pool selection procedure that bypasses the traditional wisdom of leader selection for online learning, and a generic reduction that transforms any weakly sub-linear regret $o(T)$ algorithm to $T^{1-\alpha}$ regret algorithm, which may be of independent interest. Our lower bound utilizes the connection of no-regret learning and equilibrium computation in zero-sum games, leading to a proof of a strong lower bound against an adaptive adversary.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge