Bernd Meyer

Self-supervised Learning for Acoustic Few-Shot Classification

Sep 15, 2024

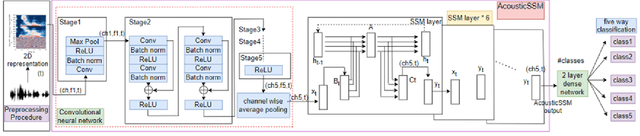

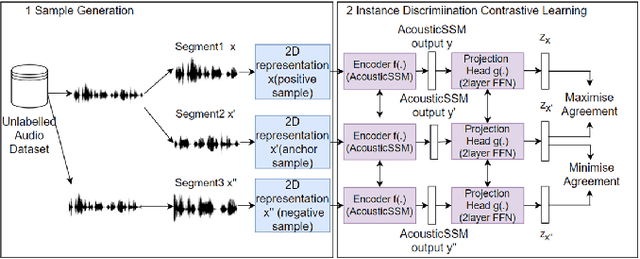

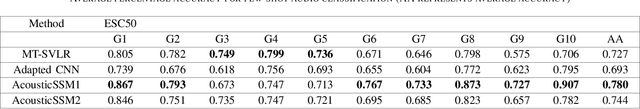

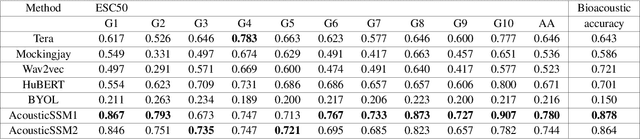

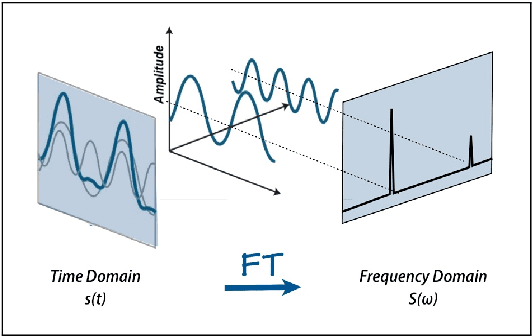

Abstract:Labelled data are limited and self-supervised learning is one of the most important approaches for reducing labelling requirements. While it has been extensively explored in the image domain, it has so far not received the same amount of attention in the acoustic domain. Yet, reducing labelling is a key requirement for many acoustic applications. Specifically in bioacoustic, there are rarely sufficient labels for fully supervised learning available. This has led to the widespread use of acoustic recognisers that have been pre-trained on unrelated data for bioacoustic tasks. We posit that training on the actual task data and combining self-supervised pre-training with few-shot classification is a superior approach that has the ability to deliver high accuracy even when only a few labels are available. To this end, we introduce and evaluate a new architecture that combines CNN-based preprocessing with feature extraction based on state space models (SSMs). This combination is motivated by the fact that CNN-based networks alone struggle to capture temporal information effectively, which is crucial for classifying acoustic signals. SSMs, specifically S4 and Mamba, on the other hand, have been shown to have an excellent ability to capture long-range dependencies in sequence data. We pre-train this architecture using contrastive learning on the actual task data and subsequent fine-tuning with an extremely small amount of labelled data. We evaluate the performance of this proposed architecture for ($n$-shot, $n$-class) classification on standard benchmarks as well as real-world data. Our evaluation shows that it outperforms state-of-the-art architectures on the few-shot classification problem.

Deep Active Audio Feature Learning in Resource-Constrained Environments

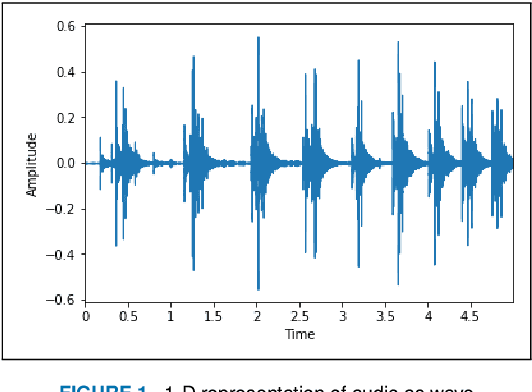

Aug 25, 2023Abstract:The scarcity of labelled data makes training Deep Neural Network (DNN) models in bioacoustic applications challenging. In typical bioacoustics applications, manually labelling the required amount of data can be prohibitively expensive. To effectively identify both new and current classes, DNN models must continue to learn new features from a modest amount of fresh data. Active Learning (AL) is an approach that can help with this learning while requiring little labelling effort. Nevertheless, the use of fixed feature extraction approaches limits feature quality, resulting in underutilization of the benefits of AL. We describe an AL framework that addresses this issue by incorporating feature extraction into the AL loop and refining the feature extractor after each round of manual annotation. In addition, we use raw audio processing rather than spectrograms, which is a novel approach. Experiments reveal that the proposed AL framework requires 14.3%, 66.7%, and 47.4% less labelling effort on benchmark audio datasets ESC-50, UrbanSound8k, and InsectWingBeat, respectively, for a large DNN model and similar savings on a microcontroller-based counterpart. Furthermore, we showcase the practical relevance of our study by incorporating data from conservation biology projects.

Tracking Different Ant Species: An Unsupervised Domain Adaptation Framework and a Dataset for Multi-object Tracking

Jan 25, 2023

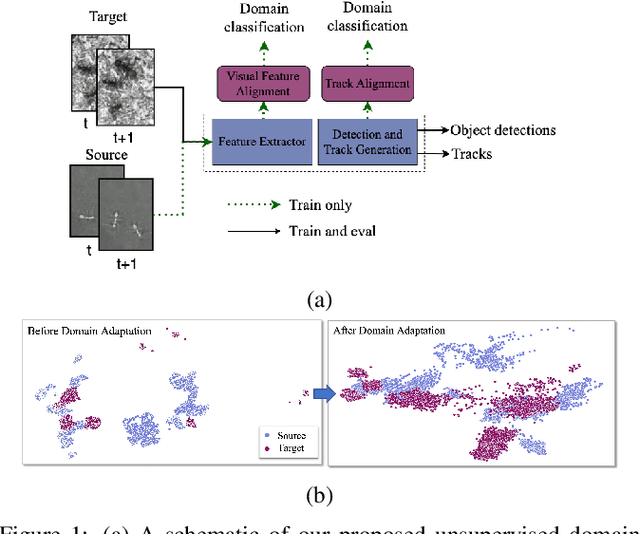

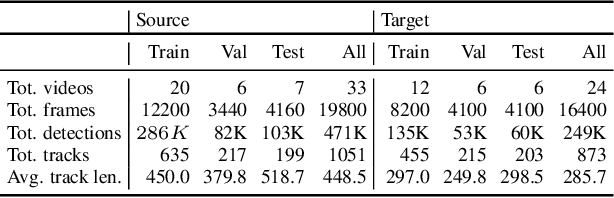

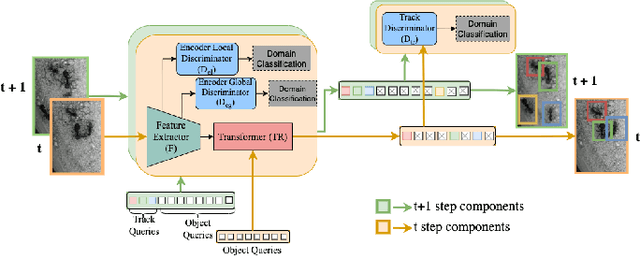

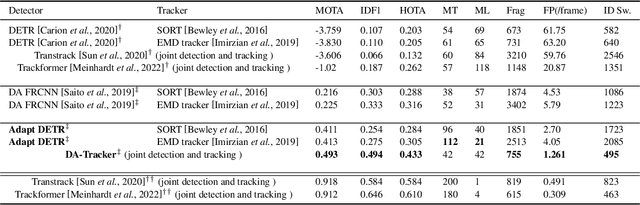

Abstract:Tracking individuals is a vital part of many experiments conducted to understand collective behaviour. Ants are the paradigmatic model system for such experiments but their lack of individually distinguishing visual features and their high colony densities make it extremely difficult to perform reliable tracking automatically. Additionally, the wide diversity of their species' appearances makes a generalized approach even harder. In this paper, we propose a data-driven multi-object tracker that, for the first time, employs domain adaptation to achieve the required generalisation. This approach is built upon a joint-detection-and-tracking framework that is extended by a set of domain discriminator modules integrating an adversarial training strategy in addition to the tracking loss. In addition to this novel domain-adaptive tracking framework, we present a new dataset and a benchmark for the ant tracking problem. The dataset contains 57 video sequences with full trajectory annotation, including 30k frames captured from two different ant species moving on different background patterns. It comprises 33 and 24 sequences for source and target domains, respectively. We compare our proposed framework against other domain-adaptive and non-domain-adaptive multi-object tracking baselines using this dataset and show that incorporating domain adaptation at multiple levels of the tracking pipeline yields significant improvements. The code and the dataset are available at https://github.com/chamathabeysinghe/da-tracker.

Pruning vs XNOR-Net: A Comprehensive Study of Deep Learning for Audio Classification on Edge-devices

Aug 29, 2021

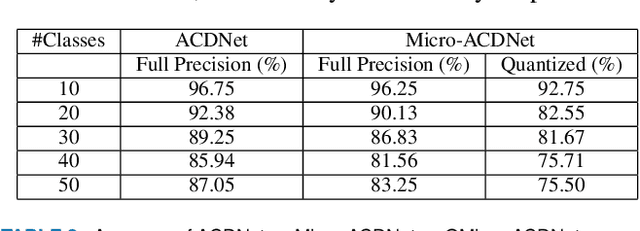

Abstract:Deep Learning has celebrated resounding successes in many application areas of relevance to the Internet-of-Things, for example, computer vision and machine listening. To fully harness the power of deep leaning for the IoT, these technologies must ultimately be brought directly to the edge. The obvious challenge is that deep learning techniques can only be implemented on strictly resource-constrained edge devices if the models are radically downsized. This task relies on different model compression techniques, such as network pruning, quantization, and the recent advancement of XNOR-Net. This paper examines the suitability of these techniques for audio classification on microcontrollers. We present an XNOR-Net for end-to-end raw audio classification and a comprehensive empirical study comparing this approach with pruning-and-quantization methods. We show that raw audio classification with XNOR yields comparable performance to regular full precision networks for small numbers of classes while reducing memory requirements 32-fold and computation requirements 58-fold. However, as the number of classes increases significantly, performance degrades, and pruning-and-quantization based compression techniques take over as the preferred technique being able to satisfy the same space constraints but requiring about 8x more computation. We show that these insights are consistent between raw audio classification and image classification using standard benchmark sets. To the best of our knowledge, this is the first study applying XNOR to end-to-end audio classification and evaluating it in the context of alternative techniques. All code is publicly available on GitHub.

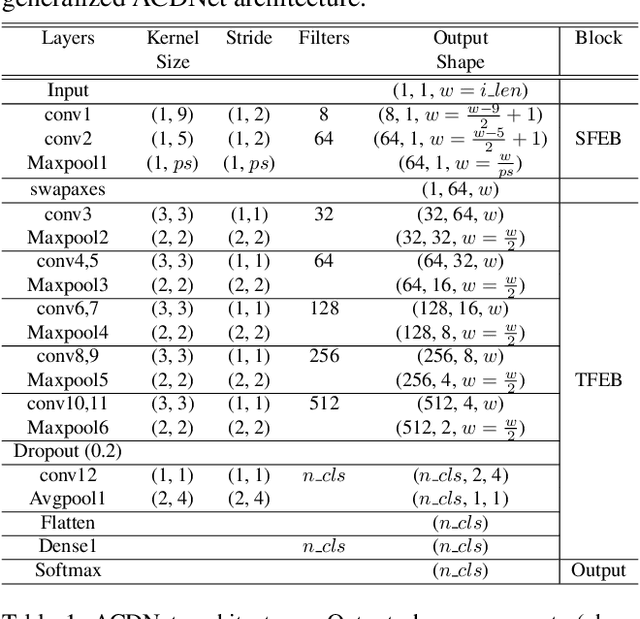

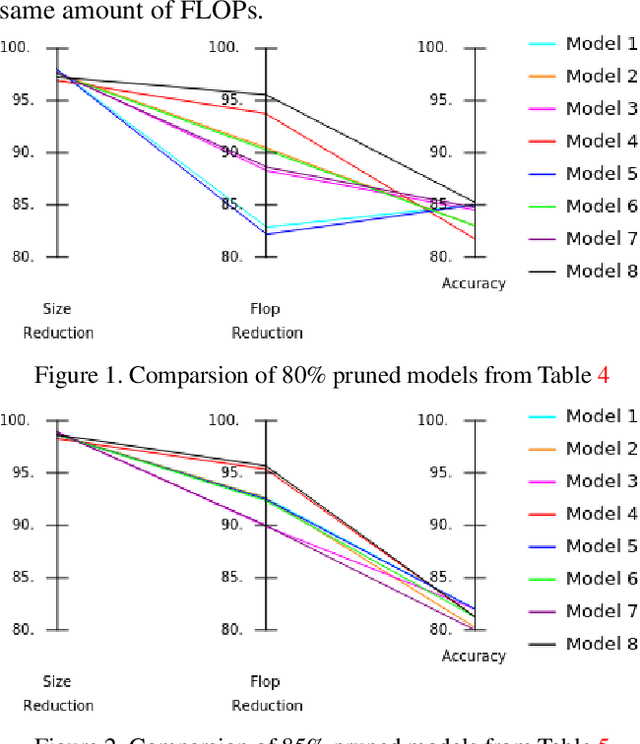

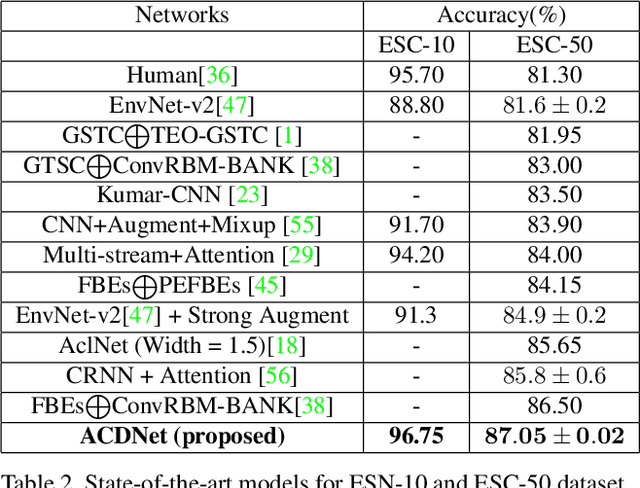

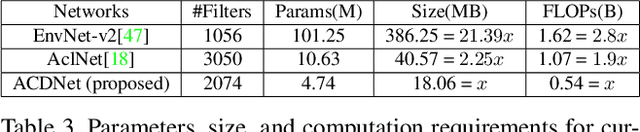

Environmental Sound Classification on the Edge: A Pipeline for Deep Acoustic Networks on Extremely Resource-Constrained Devices

Apr 06, 2021

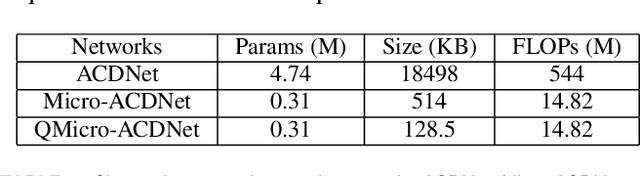

Abstract:Significant efforts are being invested to bring state-of-the-art classification and recognition to edge devices with extreme resource constraints (memory, speed and lack of GPU support). Here, we demonstrate the first deep network for acoustic recognition that is small enough for an off-the-shelf microcrocontroller, yet achieves state-of-the-art performance on standard benchmarks. Rather than handcrafting a once-off solution, we present a universal pipeline that converts a large deep convolutional network automatically via compression and quantization into a network for resource-impoverished edge devices. After introducing ACDNet, which produces above state-of-the-art accuracy on ESC-10 (96.65%) and ESC-50 (87.1%), we describe the compression pipeline and show that it allows us to achieve 97.22% size reduction and 97.28% FLOP reduction while maintaining close to state-of-the-art accuracy (83.65% on ESC-50). We describe a successful implementation on a standard off-the-shelf microcontroller and, beyond laboratory benchmarks, report successful tests on real-world data sets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge