Avleen Bijral

NonSTOP: A NonSTationary Online Prediction Method for Time Series

Aug 26, 2018

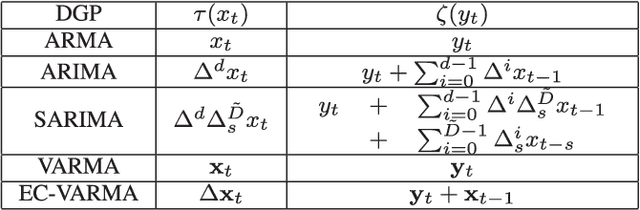

Abstract:We present online prediction methods for time series that let us explicitly handle nonstationary artifacts (e.g. trend and seasonality) present in most real time series. Specifically, we show that applying appropriate transformations to such time series before prediction can lead to improved theoretical and empirical prediction performance. Moreover, since these transformations are usually unknown, we employ the learning with experts setting to develop a fully online method (NonSTOP-NonSTationary Online Prediction) for predicting nonstationary time series. This framework allows for seasonality and/or other trends in univariate time series and cointegration in multivariate time series. Our algorithms and regret analysis subsume recent related work while significantly expanding the applicability of such methods. For all the methods, we provide sub-linear regret bounds using relaxed assumptions. The theoretical guarantees do not fully capture the benefits of the transformations, thus we provide a data-dependent analysis of the follow-the-leader algorithm that provides insight into the success of using such transformations. We support all of our results with experiments on simulated and real data.

Mini-Batch Primal and Dual Methods for SVMs

Mar 10, 2013

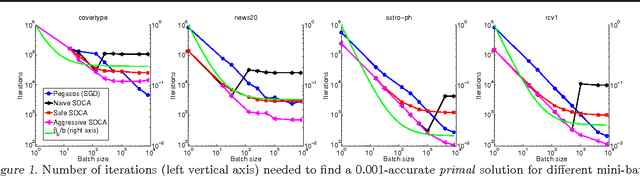

Abstract:We address the issue of using mini-batches in stochastic optimization of SVMs. We show that the same quantity, the spectral norm of the data, controls the parallelization speedup obtained for both primal stochastic subgradient descent (SGD) and stochastic dual coordinate ascent (SCDA) methods and use it to derive novel variants of mini-batched SDCA. Our guarantees for both methods are expressed in terms of the original nonsmooth primal problem based on the hinge-loss.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge