Andy Li

Enhancing Strawberry Yield Forecasting with Backcasted IoT Sensor Data and Machine Learning

Apr 25, 2025

Abstract: Due to rapid global population growth, digitally enabled agricultural sectors are crucial for sustainable food production and for informed decision-making about resource management by farmers and other stakeholders. The deployment of Internet of Things (IoT) technologies that collect real-time observations of the environmental (e.g., temperature, humidity) and operational (e.g., irrigation) factors influencing production is often seen as a critical step toward enabling novel downstream tasks, such as AI-based yield forecasting. However, AI models require large amounts of data, which creates practical challenges in a dynamic, real-world farm setting where IoT observations would need to be collected over several seasons. In this study, we deployed IoT sensors in strawberry production polytunnels for two growing seasons to collect environmental data, including water usage, external and internal temperature, external and internal humidity, soil moisture, soil temperature, and photosynthetically active radiation. The sensor observations were combined with manually recorded yield data spanning four seasons. To fill the gap left by missing IoT observations for the two additional seasons, we propose an AI-based backcasting approach that generates synthetic sensor observations from historical weather data recorded at a nearby weather station and the existing polytunnel observations. To evaluate this approach, we built an AI-based yield forecasting model using a combination of real and synthetic observations. Our results demonstrate that incorporating synthetic data improved yield forecasting accuracy: models incorporating synthetic data outperformed those trained only on historical yield, weather records, and real sensor data.
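
To make the backcasting idea concrete, here is a minimal sketch. It assumes a plain linear least-squares model in place of the study's AI model, whose architecture the abstract does not specify: the model is fit on seasons where both weather-station and polytunnel readings exist, then applied to older weather records to produce synthetic sensor values. The function names (`fit_backcaster`, `backcast`) and the toy data are hypothetical and do not come from the study.

```python
import numpy as np

def fit_backcaster(weather: np.ndarray, sensor: np.ndarray) -> np.ndarray:
    """Fit least-squares coefficients (one per weather feature, plus a bias) for one sensor channel."""
    design = np.column_stack([weather, np.ones(len(weather))])
    coef, *_ = np.linalg.lstsq(design, sensor, rcond=None)
    return coef

def backcast(weather: np.ndarray, coef: np.ndarray) -> np.ndarray:
    """Predict synthetic sensor readings for periods that only have weather-station data."""
    design = np.column_stack([weather, np.ones(len(weather))])
    return design @ coef

rng = np.random.default_rng(0)
# Hypothetical seasons with both sources: columns are external temperature (°C) and humidity (%).
weather_recent = rng.uniform([5.0, 40.0], [30.0, 95.0], size=(500, 2))
# Hypothetical polytunnel temperature with a known relationship plus sensor noise.
tunnel_recent = 1.1 * weather_recent[:, 0] - 0.02 * weather_recent[:, 1] + 3.0
tunnel_recent = tunnel_recent + rng.normal(0.0, 0.1, size=500)

coef = fit_backcaster(weather_recent, tunnel_recent)

# Hypothetical earlier seasons: weather records exist, polytunnel sensors did not.
weather_past = rng.uniform([5.0, 40.0], [30.0, 95.0], size=(200, 2))
synthetic_tunnel = backcast(weather_past, coef)
```

In practice one model would likely be fit per sensor channel, and a nonlinear regressor could replace the linear fit; the structure (fit on overlapping seasons, predict on weather-only seasons) stays the same.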

Comparing Large Language Models and Traditional Machine Translation Tools for Translating Medical Consultation Summaries: A Pilot Study

Apr 23, 2025

Abstract: This study evaluates how well large language models (LLMs) and traditional machine translation (MT) tools translate medical consultation summaries from English into Arabic, Chinese, and Vietnamese. It assesses both patient-friendly and clinician-focused texts using standard automated metrics. Results showed that traditional MT tools generally performed better, especially for complex texts, while LLMs showed promise, particularly in Vietnamese and Chinese, when translating simpler summaries. Arabic translations improved with complexity due to the language's morphology. Overall, while LLMs offer contextual flexibility, they remain inconsistent, and current evaluation metrics fail to capture clinical relevance. The study highlights the need for domain-specific training, improved evaluation methods, and human oversight in medical translation.
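
The abstract does not name the automated metrics used. As an illustration only, the sketch below computes a simplified character n-gram F-score in the spirit of chrF, a metric that operates on characters and therefore does not depend on word segmentation (relevant for Chinese, which is written without spaces between words). It is a simplification under stated assumptions: precision and recall are averaged over n-gram orders 1 to 6 and combined with β = 2, and scores will not exactly match established implementations such as sacrebleu. The function names are hypothetical.

```python
from collections import Counter

def char_ngrams(text: str, n: int) -> Counter:
    """Count character n-grams after removing all whitespace."""
    chars = "".join(text.split())
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))

def chrf(hypothesis: str, reference: str, max_n: int = 6, beta: float = 2.0) -> float:
    """Simplified chrF: average n-gram precision and recall, combined as an F-beta score (0-100)."""
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        hyp, ref = char_ngrams(hypothesis, n), char_ngrams(reference, n)
        overlap = sum((hyp & ref).values())  # clipped n-gram matches
        if hyp:
            precisions.append(overlap / sum(hyp.values()))
        if ref:
            recalls.append(overlap / sum(ref.values()))
    if not precisions or not recalls:
        return 0.0
    p = sum(precisions) / len(precisions)
    r = sum(recalls) / len(recalls)
    if p + r == 0:
        return 0.0
    # beta > 1 weights recall more heavily than precision.
    return 100 * (1 + beta**2) * p * r / (beta**2 * p + r)

print(round(chrf("take two tablets daily", "take one tablet daily"), 1))
```

Because every comparison is at the character level, the same function can score Arabic, Chinese, or Vietnamese output without a language-specific tokenizer.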

Pushing the Limits of Sparsity: A Bag of Tricks for Extreme Pruning

Nov 21, 2024

Abstract: Pruning deep neural networks has been an effective technique for reducing model size while preserving most of the performance of dense networks, which is crucial for deploying models on memory- and power-constrained devices. While recent sparse learning methods have shown promising performance at moderate sparsity levels such as 95% and 98%, accuracy deteriorates quickly when sparsity is pushed to extreme levels. Obtaining sparse networks at such extreme sparsity levels presents unique challenges, such as fragile gradient flow and a heightened risk of layer collapse. In this work, we explore network performance beyond the commonly studied sparsities and propose a collection of techniques that enable continuous learning without accuracy collapse even at extreme sparsities, including 99.90%, 99.95%, and 99.99% on ResNet architectures. Our approach combines 1) Dynamic ReLU phasing, where DyReLU initially allows richer parameter exploration before being gradually replaced by standard ReLU; 2) weight sharing, which reuses parameters within a residual layer while maintaining the same number of learnable parameters; and 3) cyclic sparsity, where both sparsity levels and sparsity patterns evolve dynamically throughout training to encourage parameter exploration. We evaluate our method, which we term Extreme Adaptive Sparse Training (EAST), at extreme sparsities using ResNet-34 and ResNet-50 on CIFAR-10, CIFAR-100, and ImageNet, achieving significant performance improvements over the state-of-the-art methods we compared against.
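
A minimal sketch of the cyclic sparsity idea, assuming a cosine schedule that oscillates between two sparsity levels and magnitude pruning to choose which weights survive. The abstract specifies neither choice, so both, along with the function names, are hypothetical and not the EAST implementation. Recomputing the mask from the current weights at each step is what allows the sparsity pattern, not just the level, to change during training. At 99.9% sparsity, a 512-by-512 weight matrix (262,144 weights) keeps only 262 of them.

```python
import numpy as np

def cyclic_sparsity(step: int, period: int, low: float, high: float) -> float:
    """Hypothetical schedule: sparsity rises from `low` to `high` and back once per `period` steps."""
    phase = (step % period) / period
    return low + (high - low) * 0.5 * (1 - np.cos(2 * np.pi * phase))

def magnitude_mask(weights: np.ndarray, sparsity: float) -> np.ndarray:
    """Keep the largest-magnitude weights so that roughly a `sparsity` fraction is zeroed out."""
    k = max(1, int(round(weights.size * (1 - sparsity))))  # number of weights kept
    # k-th largest magnitude; exact ties at the threshold could keep slightly more than k.
    threshold = np.partition(np.abs(weights).ravel(), -k)[-k]
    return np.abs(weights) >= threshold

rng = np.random.default_rng(0)
weights = rng.normal(size=(512, 512))  # 262,144 hypothetical weights
for step in (0, 25, 50):
    level = cyclic_sparsity(step, period=100, low=0.99, high=0.999)
    mask = magnitude_mask(weights, level)
    print(step, round(level, 4), int(mask.sum()))
```

In a real training loop the mask would be applied to the weights (and typically their gradients) between schedule updates, so that pruned connections can later regrow when the sparsity level drops.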

Mini-Giants: "Small" Language Models and Open Source Win-Win

Jul 17, 2023

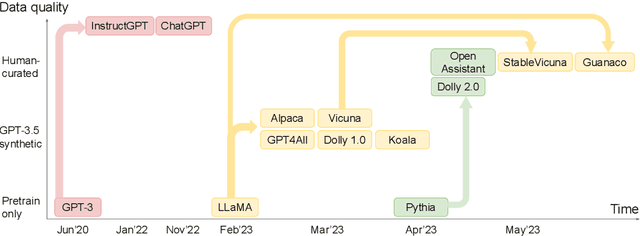

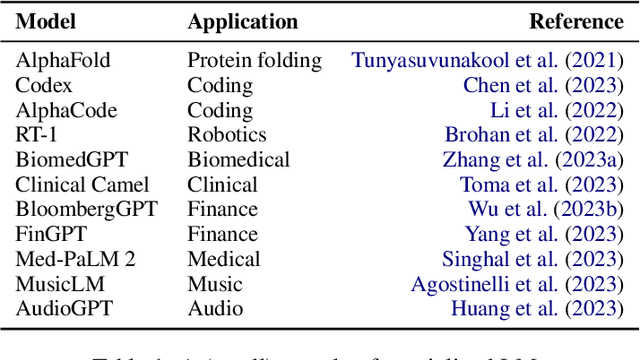

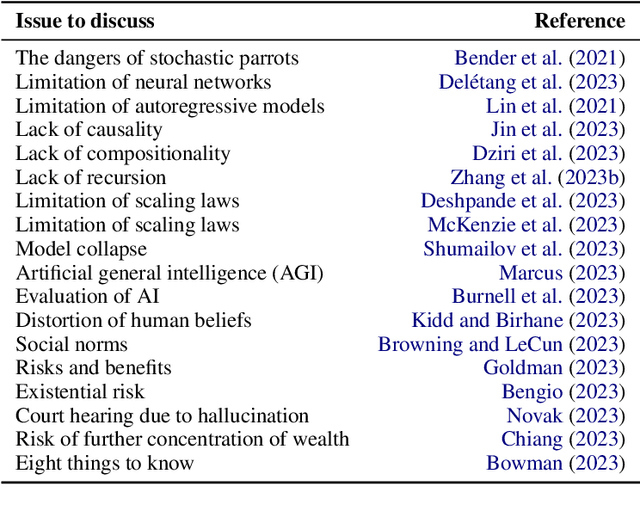

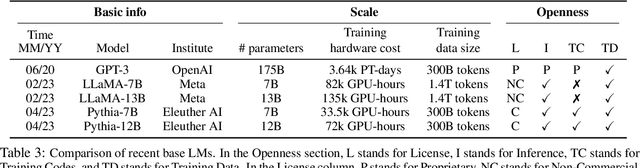

Abstract: ChatGPT is phenomenal. However, it is prohibitively expensive to train and refine such giant models. Fortunately, small language models are flourishing and becoming increasingly competent. We call them "mini-giants". We argue that the open-source community (e.g., Kaggle) and mini-giants can achieve a win-win in many ways: technically, ethically, and socially. In this article, we present a brief yet rich background, discuss how to obtain small language models, present a comparative study of small language models along with a brief discussion of evaluation methods, discuss the real-world application scenarios where small language models are most needed, and conclude with a discussion and outlook.

Model Pruning Enables Localized and Efficient Federated Learning for Yield Forecasting and Data Sharing

Apr 19, 2023

Abstract: Federated Learning (FL) offers a decentralized approach to model training in the agri-food sector and the potential for improved machine learning performance, while ensuring the safety and privacy of individual farms or data silos. However, the conventional FL approach has two major limitations. First, heterogeneous data across individual silos can cause the global model to perform well for some clients but not others, because the update directions of some clients may hinder others once they are aggregated. Second, it is inefficient in terms of both communication costs during FL and model size. This paper proposes a new technical solution that applies network pruning to client models and aggregates the pruned models. This method allows local models to be tailored to their respective data distributions and mitigates the data heterogeneity present in agri-food data. Moreover, it yields more compact models that consume less data during transmission. We experiment with a soybean yield forecasting dataset and find that this approach can improve inference performance by 15.5% to 20% compared to FedAvg, while reducing local model sizes by up to 84% and the data volume communicated between the clients and the server by 57.1% to 64.7%.
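
The abstract does not spell out how the pruned client models are combined. The sketch below assumes one plausible mask-aware rule: each parameter is averaged only over the clients that kept it, and each client then re-applies its own mask to obtain a personalized model. Plain FedAvg, with equal client weighting, is included for comparison. The function names and the toy numbers are hypothetical.

```python
import numpy as np

def fedavg(client_weights: list[np.ndarray]) -> np.ndarray:
    """Plain FedAvg with equal client weighting (assumes equal-sized local datasets)."""
    return np.mean(np.stack(client_weights), axis=0)

def aggregate_pruned(client_weights: list[np.ndarray], client_masks: list[np.ndarray]) -> np.ndarray:
    """Hypothetical mask-aware aggregation: average each parameter only over clients that kept it."""
    summed = np.sum([w * m for w, m in zip(client_weights, client_masks)], axis=0)
    counts = np.sum(client_masks, axis=0)
    # Parameters pruned by every client stay at zero instead of dividing by zero.
    return np.divide(summed, counts, out=np.zeros_like(summed), where=counts > 0)

# Toy example: two clients, four parameters; False in a mask means "pruned on this client".
w_a, m_a = np.array([1.0, 2.0, 0.0, 4.0]), np.array([True, True, False, True])
w_b, m_b = np.array([3.0, 0.0, 5.0, 6.0]), np.array([True, False, True, True])

global_w = aggregate_pruned([w_a, w_b], [m_a, m_b])  # -> [2, 2, 5, 5]
baseline = fedavg([w_a, w_b])                        # -> [2, 1, 2.5, 5]
local_a = global_w * m_a  # client A's personalized model -> [2, 2, 0, 5]
```

Here FedAvg pulls the second parameter toward zero because client B pruned it, while the mask-aware average keeps client A's value. A client could also transmit only its unpruned values plus the mask, which is one way pruning can reduce the data communicated.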