Andreas Gruebl

Neuromorphic Hardware In The Loop: Training a Deep Spiking Network on the BrainScaleS Wafer-Scale System

Mar 06, 2017

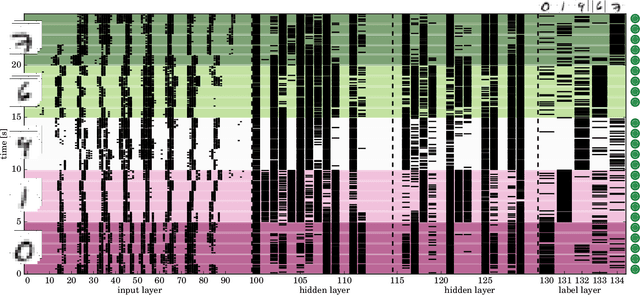

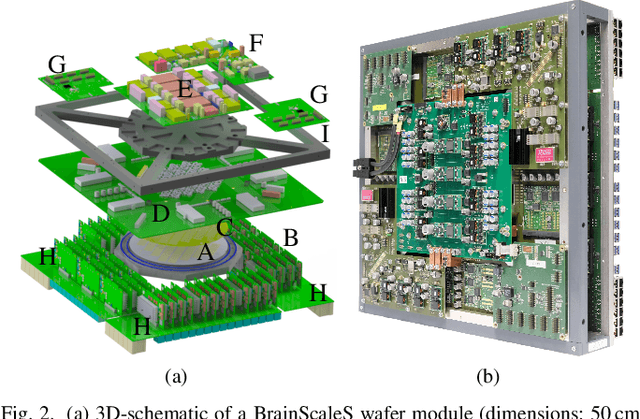

Abstract:Emulating spiking neural networks on analog neuromorphic hardware offers several advantages over simulating them on conventional computers, particularly in terms of speed and energy consumption. However, this usually comes at the cost of reduced control over the dynamics of the emulated networks. In this paper, we demonstrate how iterative training of a hardware-emulated network can compensate for anomalies induced by the analog substrate. We first convert a deep neural network trained in software to a spiking network on the BrainScaleS wafer-scale neuromorphic system, thereby enabling an acceleration factor of 10 000 compared to the biological time domain. This mapping is followed by the in-the-loop training, where in each training step, the network activity is first recorded in hardware and then used to compute the parameter updates in software via backpropagation. An essential finding is that the parameter updates do not have to be precise, but only need to approximately follow the correct gradient, which simplifies the computation of updates. Using this approach, after only several tens of iterations, the spiking network shows an accuracy close to the ideal software-emulated prototype. The presented techniques show that deep spiking networks emulated on analog neuromorphic devices can attain good computational performance despite the inherent variations of the analog substrate.

Demonstrating Hybrid Learning in a Flexible Neuromorphic Hardware System

Oct 13, 2016

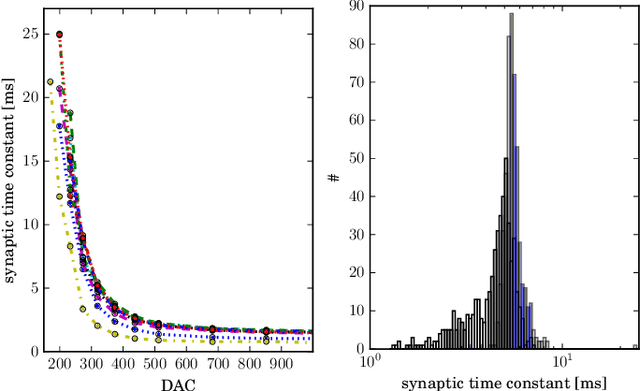

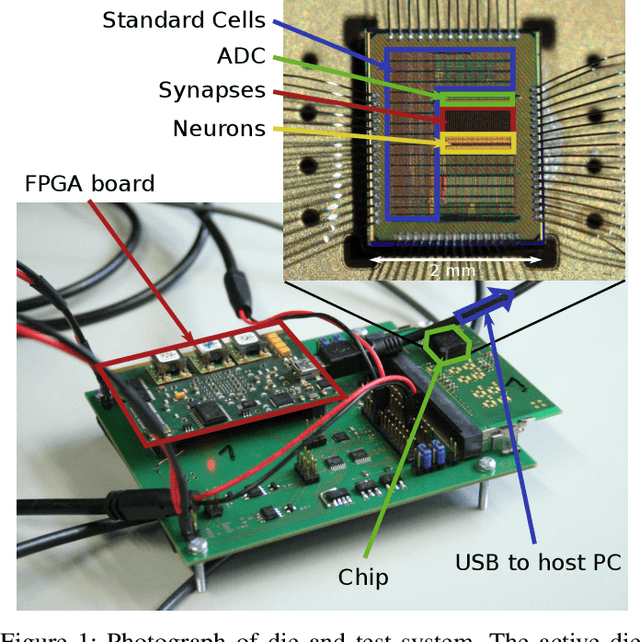

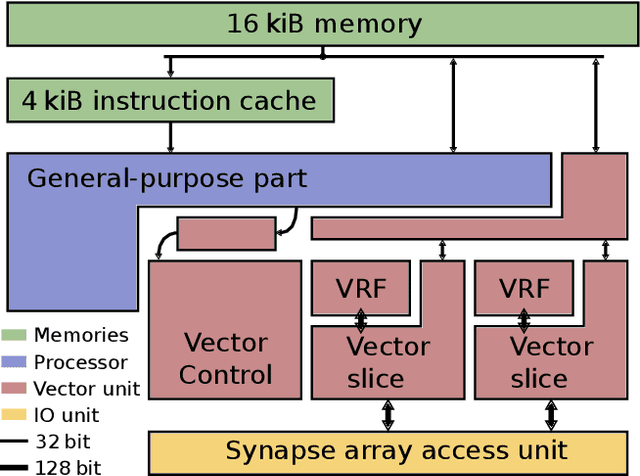

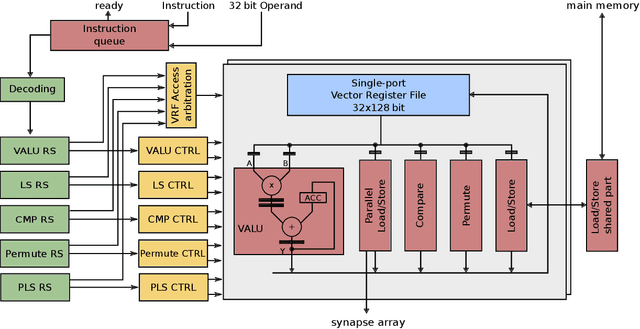

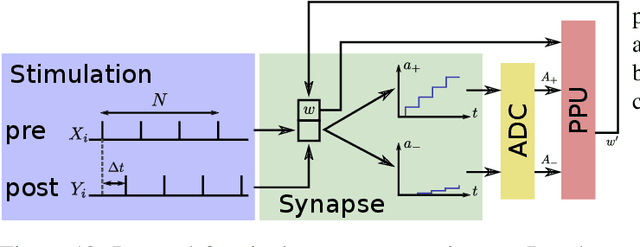

Abstract:We present results from a new approach to learning and plasticity in neuromorphic hardware systems: to enable flexibility in implementable learning mechanisms while keeping high efficiency associated with neuromorphic implementations, we combine a general-purpose processor with full-custom analog elements. This processor is operating in parallel with a fully parallel neuromorphic system consisting of an array of synapses connected to analog, continuous time neuron circuits. Novel analog correlation sensor circuits process spike events for each synapse in parallel and in real-time. The processor uses this pre-processing to compute new weights possibly using additional information following its program. Therefore, learning rules can be defined in software giving a large degree of flexibility. Synapses realize correlation detection geared towards Spike-Timing Dependent Plasticity (STDP) as central computational primitive in the analog domain. Operating at a speed-up factor of 1000 compared to biological time-scale, we measure time-constants from tens to hundreds of micro-seconds. We analyze variability across multiple chips and demonstrate learning using a multiplicative STDP rule. We conclude, that the presented approach will enable flexible and efficient learning as a platform for neuroscientific research and technological applications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge