Andrea Valenti

Graph-based Polyphonic Multitrack Music Generation

Jul 27, 2023

Abstract: Graphs can be leveraged to model polyphonic multitrack symbolic music, where notes, chords and entire sections may be linked at different levels of the musical hierarchy by tonal and rhythmic relationships. Nonetheless, few works consider graph representations in the context of deep learning systems for music generation. This paper bridges this gap by introducing a novel graph representation for music and a deep Variational Autoencoder that generates the structure and the content of musical graphs separately, one after the other, with a hierarchical architecture that matches the structural priors of music. By separating the structure and content of musical graphs, it is possible to condition generation by specifying which instruments are played at certain times. This opens the door to a new form of human-computer interaction in the context of music co-creation. After training the model on existing MIDI datasets, the experiments show that the model is able to generate appealing short and long musical sequences and to realistically interpolate between them, producing music that is tonally and rhythmically consistent. Finally, the visualization of the embeddings shows that the model is able to organize its latent space in accordance with known musical concepts.

ChemAlgebra: Algebraic Reasoning on Chemical Reactions

Oct 05, 2022

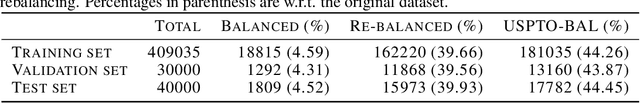

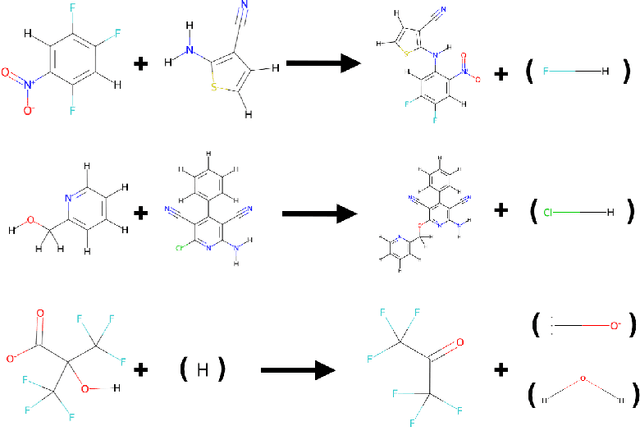

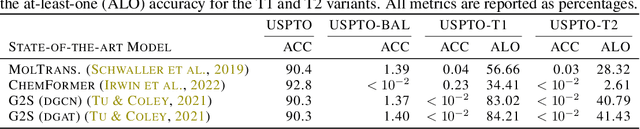

Abstract: While deep learning models show impressive performance on various kinds of learning tasks, it remains unclear whether they can robustly tackle reasoning tasks. Measuring the robustness of reasoning in machine learning models is challenging, as one needs to provide a task that cannot be easily shortcut by exploiting spurious statistical correlations in the data, while operating on complex objects and constraints. To address this issue, we propose ChemAlgebra, a benchmark for measuring the reasoning capabilities of deep learning models through the prediction of stoichiometrically-balanced chemical reactions. ChemAlgebra requires manipulating sets of complex discrete objects -- molecules represented as formulas or graphs -- under algebraic constraints such as the mass preservation principle. We believe that ChemAlgebra can serve as a useful test bed for the next generation of machine reasoning models and as a promoter of their development.
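As a small illustration of the mass preservation constraint mentioned in the abstract, the sketch below checks whether a reaction is stoichiometrically balanced by comparing atom counts on both sides. It is not taken from the ChemAlgebra benchmark; the helper names (`parse_formula`, `side_total`, `is_balanced`) are hypothetical, and the parser assumes flat formulas without parentheses or charges.

```python
import re
from collections import Counter


def parse_formula(formula: str) -> Counter:
    """Count atoms in a flat formula such as 'H2O' (assumption: no parentheses or charges)."""
    counts = Counter()
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return counts


def side_total(side) -> Counter:
    """Sum atom counts over one side of a reaction, given (coefficient, formula) pairs."""
    total = Counter()
    for coeff, formula in side:
        for element, n in parse_formula(formula).items():
            total[element] += coeff * n
    return total


def is_balanced(reactants, products) -> bool:
    """A reaction is balanced when every element has the same count on both sides."""
    return side_total(reactants) == side_total(products)


# 2 H2 + O2 -> 2 H2O conserves every atom; H2 + O2 -> H2O does not.
balanced = is_balanced([(2, "H2"), (1, "O2")], [(2, "H2O")])
unbalanced = is_balanced([(1, "H2"), (1, "O2")], [(1, "H2O")])
```

A model evaluated on such a task has to produce outputs that satisfy this equality exactly, which is what makes the constraint hard to shortcut statistically.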

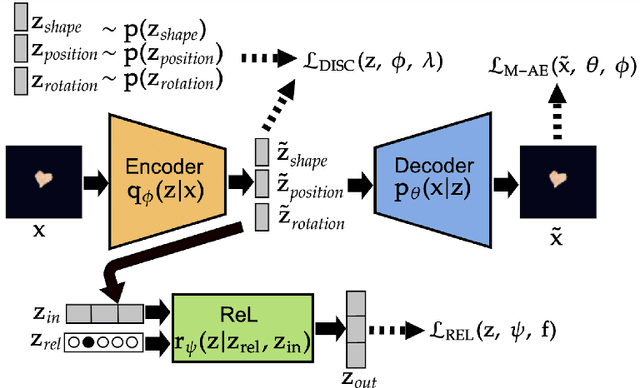

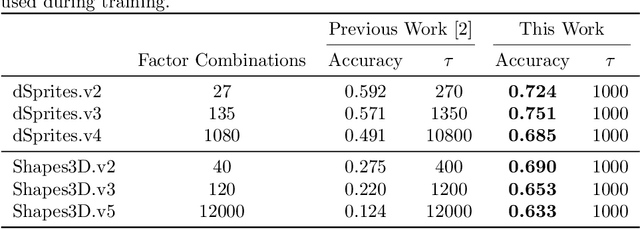

Modular Representations for Weak Disentanglement

Sep 12, 2022

Abstract: The recently introduced weakly disentangled representations relax some constraints of previous definitions of disentanglement in exchange for more flexibility. However, at the moment, weak disentanglement can only be achieved by increasing the amount of supervision as the number of factors of variation in the data increases. In this paper, we introduce modular representations for weak disentanglement, a novel method that keeps the amount of supervised information constant with respect to the number of generative factors. The experiments show that models using modular representations can increase their performance with respect to previous work without the need for additional supervision.

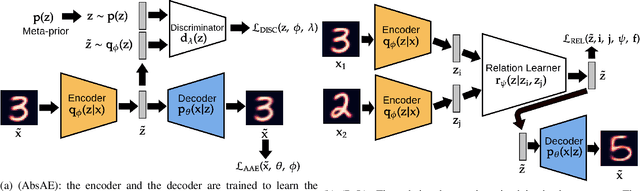

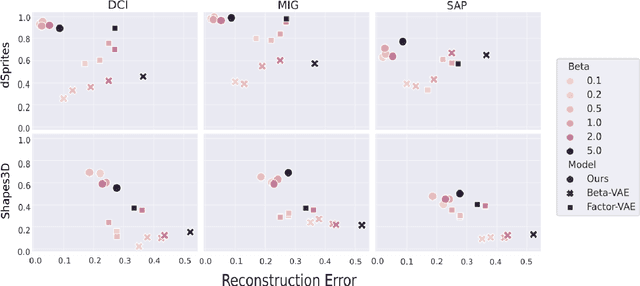

Leveraging Relational Information for Learning Weakly Disentangled Representations

May 20, 2022

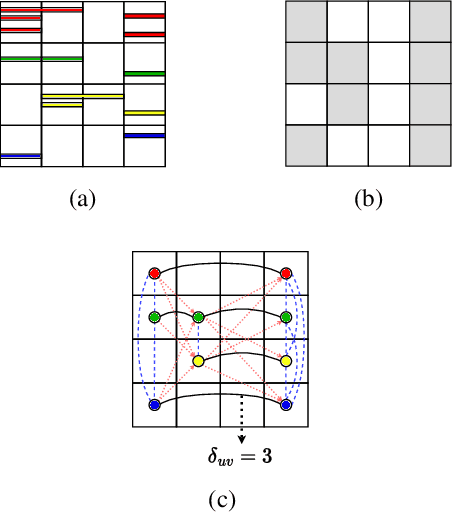

Abstract: Disentanglement is a difficult property to enforce in neural representations. This might be due, in part, to a formalization of the disentanglement problem that focuses too heavily on separating relevant factors of variation of the data in single isolated dimensions of the neural representation. We argue that such a definition might be too restrictive and not necessarily beneficial in terms of downstream tasks. In this work, we present an alternative view on learning (weakly) disentangled representations, which leverages concepts from relational learning. We identify the regions of the latent space that correspond to specific instances of generative factors, and we learn the relationships among these regions in order to perform controlled changes to the latent codes. We also introduce a compound generative model that implements such a weak disentanglement approach. Our experiments show that the learned representations can separate the relevant factors of variation in the data, while preserving the information needed for effectively generating high-quality data samples.

Calliope -- A Polyphonic Music Transformer

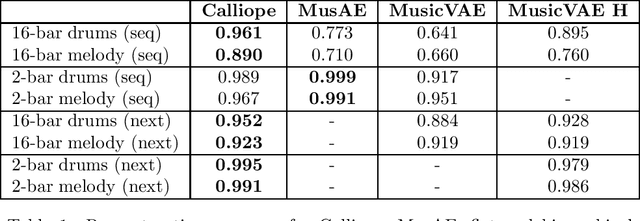

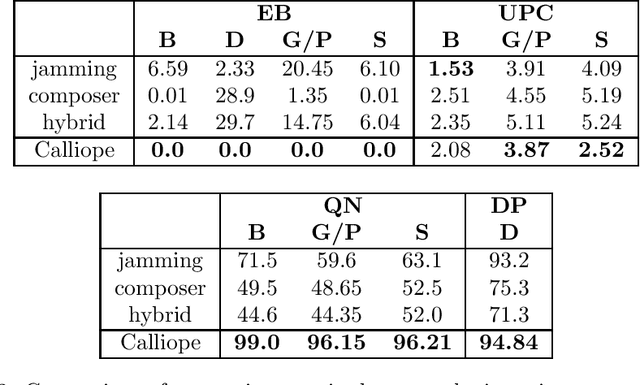

Jul 08, 2021

Abstract: The polyphonic nature of music makes the application of deep learning to music modelling a challenging task. On the other hand, the Transformer architecture seems to be a good fit for this kind of data. In this work, we present Calliope, a novel autoencoder model based on Transformers for the efficient modelling of multi-track sequences of polyphonic music. The experiments show that our model is able to improve the state of the art on musical sequence reconstruction and generation, with remarkably good results especially on long sequences.

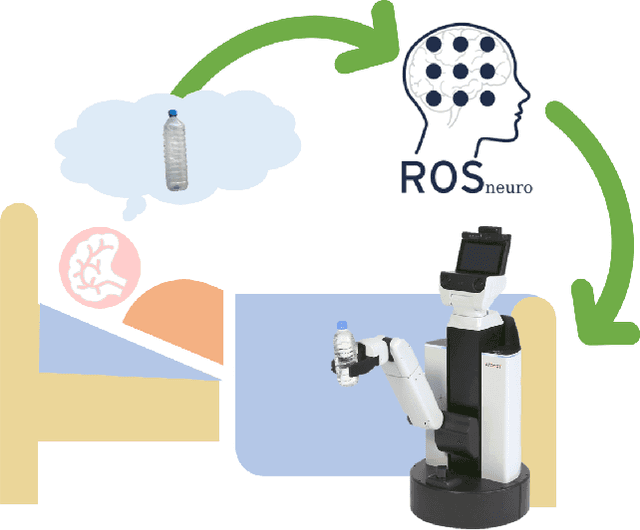

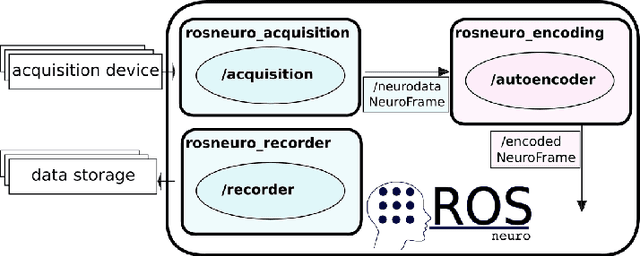

ROS-Neuro Integration of Deep Convolutional Autoencoders for EEG Signal Compression in Real-time BCIs

Aug 31, 2020

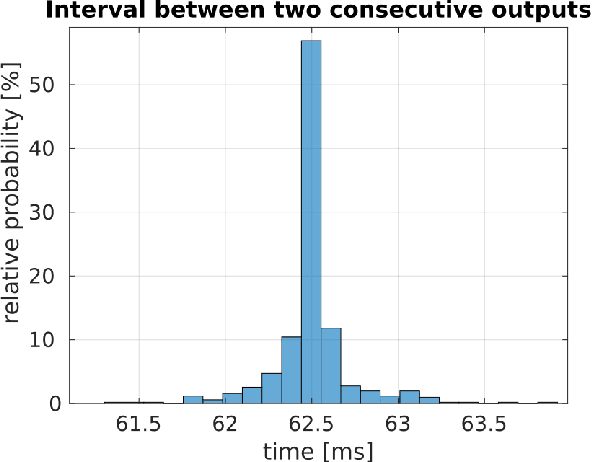

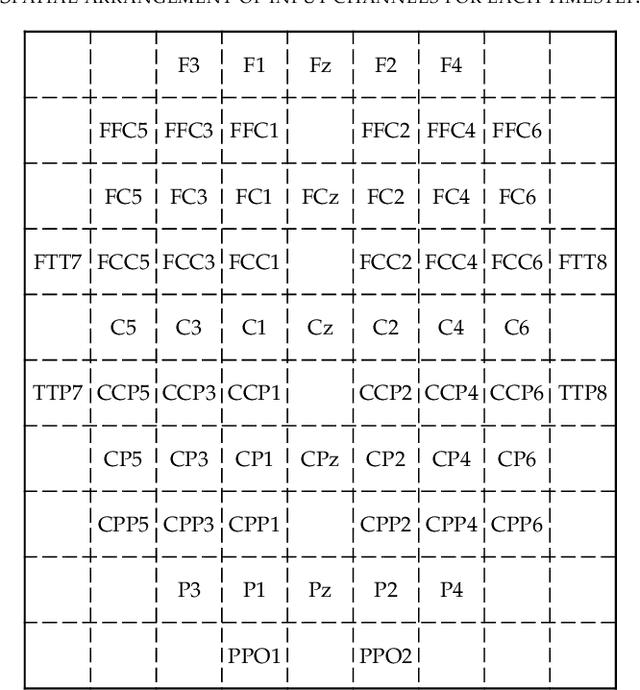

Abstract: Typical EEG-based BCI applications require the computation of complex functions over the noisy EEG channels to be carried out in an efficient way. Deep learning algorithms are capable of learning flexible nonlinear functions directly from data, and their constant processing latency makes them well suited to deployment in online BCI systems. However, it is crucial for the jitter of the processing system to be as low as possible, in order to avoid unpredictable behaviour that can ruin the system's overall usability. In this paper, we present a novel encoding method, based on deep convolutional autoencoders, that is able to perform efficient compression of the raw EEG inputs. We deploy our model in a ROS-Neuro node, thus making it suitable for integration in ROS-based BCI and robotic systems in real-world scenarios. The experimental results show that our system is capable of generating meaningful compressed encodings that preserve the original information contained in the raw input. They also show that the ROS-Neuro node is able to produce such encodings at a steady rate, with minimal jitter. We believe that our system can represent an important step towards the development of an effective BCI processing pipeline fully standardized in the ROS-Neuro framework.
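To make the notion of a "steady rate, with minimal jitter" concrete, the sketch below estimates jitter from a list of output timestamps. It assumes jitter is measured as the standard deviation of the intervals between consecutive outputs; the paper may define it differently. The function name `jitter_stats` and the timestamps are hypothetical and do not come from the ROS-Neuro experiments.

```python
import statistics


def jitter_stats(timestamps):
    """Return (mean interval, jitter) for a sequence of output timestamps in seconds.

    Assumption: jitter is the population standard deviation of the intervals
    between consecutive outputs.
    """
    intervals = [b - a for a, b in zip(timestamps, timestamps[1:])]
    return statistics.mean(intervals), statistics.pstdev(intervals)


# Hypothetical timestamps for a node that publishes roughly every 10 ms.
mean_period, jitter = jitter_stats([0.000, 0.010, 0.021, 0.030, 0.040])
```

A low jitter value relative to the mean period indicates that encodings arrive at a nearly constant rate.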

Learning Style-Aware Symbolic Music Representations by Adversarial Autoencoders

Feb 20, 2020

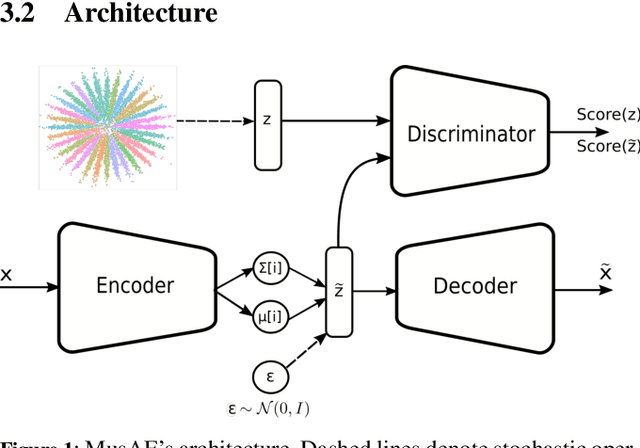

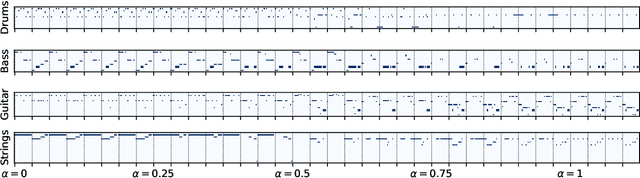

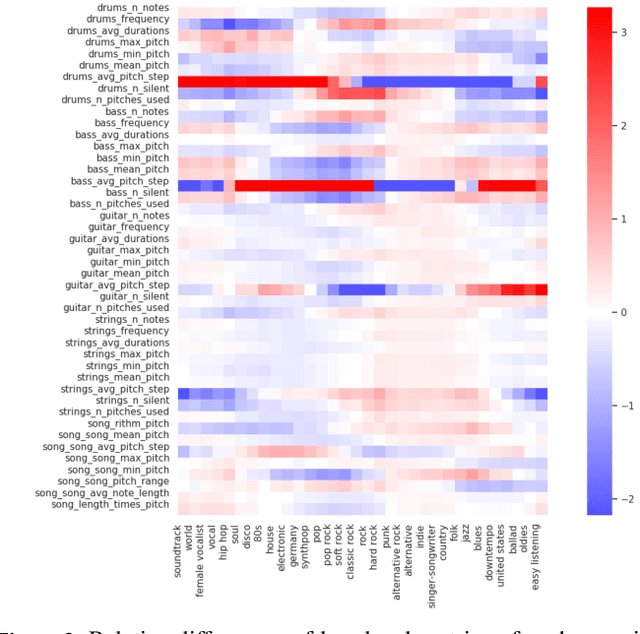

Abstract: We address the challenging open problem of learning an effective latent space for symbolic music data in generative music modeling. We focus on leveraging adversarial regularization as a flexible and natural means to imbue variational autoencoders with context information concerning music genre and style. Throughout the paper, we show how Gaussian mixtures taking into account music metadata information can be used as an effective prior for the autoencoder latent space, introducing the first Music Adversarial Autoencoder (MusAE). The empirical analysis on a large-scale benchmark shows that our model has a higher reconstruction accuracy than state-of-the-art models based on standard variational autoencoders. It is also able to create realistic interpolations between two musical sequences, smoothly changing the dynamics of the different tracks. Experiments show that the model can organise its latent space according to low-level properties of the musical pieces, and can embed into the latent variables the high-level genre information injected from the prior distribution to increase its overall performance. This allows us to perform changes to the generated pieces in a principled way.
