Andrea Nascetti

NeighborMAE: Exploiting Spatial Dependencies between Neighboring Earth Observation Images in Masked Autoencoders Pretraining

Mar 03, 2026Abstract:Masked Image Modeling has been one of the most popular self-supervised learning paradigms to learn representations from large-scale, unlabeled Earth Observation images. While incorporating multi-modal and multi-temporal Earth Observation data into Masked Image Modeling has been widely explored, the spatial dependencies between images captured from neighboring areas remains largely overlooked. Since the Earth's surface is continuous, neighboring images are highly related and offer rich contextual information for self-supervised learning. To close this gap, we propose NeighborMAE, which learns spatial dependencies by joint reconstruction of neighboring Earth Observation images. To ensure that the reconstruction remains challenging, we leverage a heuristic strategy to dynamically adjust the mask ratio and the pixel-level loss weight. Experimental results across various pretraining datasets and downstream tasks show that NeighborMAE significantly outperforms existing baselines, underscoring the value of neighboring images in Masked Image Modeling for Earth Observation and the efficacy of our designs.

Data-Centric Benchmark for Label Noise Estimation and Ranking in Remote Sensing Image Segmentation

Feb 28, 2026Abstract:High-quality pixel-level annotations are essential for the semantic segmentation of remote sensing imagery. However, such labels are expensive to obtain and often affected by noise due to the labor-intensive and time-consuming nature of pixel-wise annotation, which makes it challenging for human annotators to label every pixel accurately. Annotation errors can significantly degrade the performance and robustness of modern segmentation models, motivating the need for reliable mechanisms to identify and quantify noisy training samples. This paper introduces a novel Data-Centric benchmark, together with a novel, publicly available dataset and two techniques for identifying, quantifying, and ranking training samples according to their level of label noise in remote sensing semantic segmentation. Such proposed methods leverage complementary strategies based on model uncertainty, prediction consistency, and representation analysis, and consistently outperform established baselines across a range of experimental settings. The outcomes of this work are publicly available at https://github.com/keillernogueira/label_noise_segmentation.

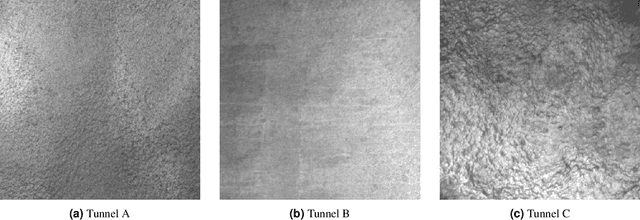

TACK Tunnel Data (TTD): A Benchmark Dataset for Deep Learning-Based Defect Detection in Tunnels

Dec 16, 2025

Abstract:Tunnels are essential elements of transportation infrastructure, but are increasingly affected by ageing and deterioration mechanisms such as cracking. Regular inspections are required to ensure their safety, yet traditional manual procedures are time-consuming, subjective, and costly. Recent advances in mobile mapping systems and Deep Learning (DL) enable automated visual inspections. However, their effectiveness is limited by the scarcity of tunnel datasets. This paper introduces a new publicly available dataset containing annotated images of three different tunnel linings, capturing typical defects: cracks, leaching, and water infiltration. The dataset is designed to support supervised, semi-supervised, and unsupervised DL methods for defect detection and segmentation. Its diversity in texture and construction techniques also enables investigation of model generalization and transferability across tunnel types. By addressing the critical lack of domain-specific data, this dataset contributes to advancing automated tunnel inspection and promoting safer, more efficient infrastructure maintenance strategies.

Can Generative Geospatial Diffusion Models Excel as Discriminative Geospatial Foundation Models?

Mar 10, 2025Abstract:Self-supervised learning (SSL) has revolutionized representation learning in Remote Sensing (RS), advancing Geospatial Foundation Models (GFMs) to leverage vast unlabeled satellite imagery for diverse downstream tasks. Currently, GFMs primarily focus on discriminative objectives, such as contrastive learning or masked image modeling, owing to their proven success in learning transferable representations. However, generative diffusion models--which demonstrate the potential to capture multi-grained semantics essential for RS tasks during image generation--remain underexplored for discriminative applications. This prompts the question: can generative diffusion models also excel and serve as GFMs with sufficient discriminative power? In this work, we answer this question with SatDiFuser, a framework that transforms a diffusion-based generative geospatial foundation model into a powerful pretraining tool for discriminative RS. By systematically analyzing multi-stage, noise-dependent diffusion features, we develop three fusion strategies to effectively leverage these diverse representations. Extensive experiments on remote sensing benchmarks show that SatDiFuser outperforms state-of-the-art GFMs, achieving gains of up to +5.7% mIoU in semantic segmentation and +7.9% F1-score in classification, demonstrating the capacity of diffusion-based generative foundation models to rival or exceed discriminative GFMs. Code will be released.

PANGAEA: A Global and Inclusive Benchmark for Geospatial Foundation Models

Dec 05, 2024Abstract:Geospatial Foundation Models (GFMs) have emerged as powerful tools for extracting representations from Earth observation data, but their evaluation remains inconsistent and narrow. Existing works often evaluate on suboptimal downstream datasets and tasks, that are often too easy or too narrow, limiting the usefulness of the evaluations to assess the real-world applicability of GFMs. Additionally, there is a distinct lack of diversity in current evaluation protocols, which fail to account for the multiplicity of image resolutions, sensor types, and temporalities, which further complicates the assessment of GFM performance. In particular, most existing benchmarks are geographically biased towards North America and Europe, questioning the global applicability of GFMs. To overcome these challenges, we introduce PANGAEA, a standardized evaluation protocol that covers a diverse set of datasets, tasks, resolutions, sensor modalities, and temporalities. It establishes a robust and widely applicable benchmark for GFMs. We evaluate the most popular GFMs openly available on this benchmark and analyze their performance across several domains. In particular, we compare these models to supervised baselines (e.g. UNet and vanilla ViT), and assess their effectiveness when faced with limited labeled data. Our findings highlight the limitations of GFMs, under different scenarios, showing that they do not consistently outperform supervised models. PANGAEA is designed to be highly extensible, allowing for the seamless inclusion of new datasets, models, and tasks in future research. By releasing the evaluation code and benchmark, we aim to enable other researchers to replicate our experiments and build upon our work, fostering a more principled evaluation protocol for large pre-trained geospatial models. The code is available at https://github.com/VMarsocci/pangaea-bench.

A CNN regression model to estimate buildings height maps using Sentinel-1 SAR and Sentinel-2 MSI time series

Jul 03, 2023Abstract:Accurate estimation of building heights is essential for urban planning, infrastructure management, and environmental analysis. In this study, we propose a supervised Multimodal Building Height Regression Network (MBHR-Net) for estimating building heights at 10m spatial resolution using Sentinel-1 (S1) and Sentinel-2 (S2) satellite time series. S1 provides Synthetic Aperture Radar (SAR) data that offers valuable information on building structures, while S2 provides multispectral data that is sensitive to different land cover types, vegetation phenology, and building shadows. Our MBHR-Net aims to extract meaningful features from the S1 and S2 images to learn complex spatio-temporal relationships between image patterns and building heights. The model is trained and tested in 10 cities in the Netherlands. Root Mean Squared Error (RMSE), Intersection over Union (IOU), and R-squared (R2) score metrics are used to evaluate the performance of the model. The preliminary results (3.73m RMSE, 0.95 IoU, 0.61 R2) demonstrate the effectiveness of our deep learning model in accurately estimating building heights, showcasing its potential for urban planning, environmental impact analysis, and other related applications.

Context-Aware Change Detection With Semi-Supervised Learning

Jun 15, 2023

Abstract:Change detection using earth observation data plays a vital role in quantifying the impact of disasters in affected areas. While data sources like Sentinel-2 provide rich optical information, they are often hindered by cloud cover, limiting their usage in disaster scenarios. However, leveraging pre-disaster optical data can offer valuable contextual information about the area such as landcover type, vegetation cover, soil types, enabling a better understanding of the disaster's impact. In this study, we develop a model to assess the contribution of pre-disaster Sentinel-2 data in change detection tasks, focusing on disaster-affected areas. The proposed Context-Aware Change Detection Network (CACDN) utilizes a combination of pre-disaster Sentinel-2 data, pre and post-disaster Sentinel-1 data and ancillary Digital Elevation Models (DEM) data. The model is validated on flood and landslide detection and evaluated using three metrics: Area Under the Precision-Recall Curve (AUPRC), Intersection over Union (IoU), and mean IoU. The preliminary results show significant improvement (4\%, AUPRC, 3-7\% IoU, 3-6\% mean IoU) in model's change detection capabilities when incorporated with pre-disaster optical data reflecting the effectiveness of using contextual information for accurate flood and landslide detection.

Investigating Imbalances Between SAR and Optical Utilization for Multi-Modal Urban Mapping

Apr 11, 2023

Abstract:Accurate urban maps provide essential information to support sustainable urban development. Recent urban mapping methods use multi-modal deep neural networks to fuse Synthetic Aperture Radar (SAR) and optical data. However, multi-modal networks may rely on just one modality due to the greedy nature of learning. In turn, the imbalanced utilization of modalities can negatively affect the generalization ability of a network. In this paper, we investigate the utilization of SAR and optical data for urban mapping. To that end, a dual-branch network architecture using intermediate fusion modules to share information between the uni-modal branches is utilized. A cut-off mechanism in the fusion modules enables the stopping of information flow between the branches, which is used to estimate the network's dependence on SAR and optical data. While our experiments on the SEN12 Global Urban Mapping dataset show that good performance can be achieved with conventional SAR-optical data fusion (F1 score = 0.682 $\pm$ 0.014), we also observed a clear under-utilization of optical data. Therefore, future work is required to investigate whether a more balanced utilization of SAR and optical data can lead to performance improvements.

Unsupervised Flood Detection on SAR Time Series

Dec 07, 2022

Abstract:Human civilization has an increasingly powerful influence on the earth system. Affected by climate change and land-use change, natural disasters such as flooding have been increasing in recent years. Earth observations are an invaluable source for assessing and mitigating negative impacts. Detecting changes from Earth observation data is one way to monitor the possible impact. Effective and reliable Change Detection (CD) methods can help in identifying the risk of disaster events at an early stage. In this work, we propose a novel unsupervised CD method on time series Synthetic Aperture Radar~(SAR) data. Our proposed method is a probabilistic model trained with unsupervised learning techniques, reconstruction, and contrastive learning. The change map is generated with the help of the distribution difference between pre-incident and post-incident data. Our proposed CD model is evaluated on flood detection data. We verified the efficacy of our model on 8 different flood sites, including three recent flood events from Copernicus Emergency Management Services and six from the Sen1Floods11 dataset. Our proposed model achieved an average of 64.53\% Intersection Over Union(IoU) value and 75.43\% F1 score. Our achieved IoU score is approximately 6-27\% and F1 score is approximately 7-22\% better than the compared unsupervised and supervised existing CD methods. The results and extensive discussion presented in the study show the effectiveness of the proposed unsupervised CD method.

Building Change Detection using Multi-Temporal Airborne LiDAR Data

Apr 26, 2022

Abstract:Building change detection is essential for monitoring urbanization, disaster assessment, urban planning and frequently updating the maps. 3D structure information from airborne light detection and ranging (LiDAR) is very effective for detecting urban changes. But the 3D point cloud from airborne LiDAR(ALS) holds an enormous amount of unordered and irregularly sparse information. Handling such data is tricky and consumes large memory for processing. Most of this information is not necessary when we are looking for a particular type of urban change. In this study, we propose an automatic method that reduces the 3D point clouds into a much smaller representation without losing the necessary information required for detecting Building changes. The method utilizes the Deep Learning(DL) model U-Net for segmenting the buildings from the background. Produced segmentation maps are then processed further for detecting changes and the results are refined using morphological methods. For the change detection task, we used multi-temporal airborne LiDAR data. The data is acquired over Stockholm in the years 2017 and 2019. The changes in buildings are classified into four types: 'newly built', 'demolished', 'taller' and 'shorter'. The detected changes are visualized in one map for better interpretation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge