Anastasia Podosinnikova

Overcomplete Independent Component Analysis via SDP

Jan 24, 2019

Abstract: We present a novel algorithm for overcomplete independent component analysis (ICA), where the number of latent sources k exceeds the dimension p of the observed variables. Previous algorithms either suffer from high computational complexity or make strong assumptions about the form of the mixing matrix. Our algorithm makes no sparsity assumption, yet enjoys favorable computational and theoretical properties. It consists of two main steps: (a) estimation of the Hessians of the cumulant generating function (as opposed to the fourth- and higher-order cumulants used by most algorithms) and (b) a novel semidefinite programming (SDP) relaxation for recovering a mixing component. We show that this relaxation can be solved efficiently with a projected accelerated gradient descent method, which makes the whole algorithm computationally practical. Moreover, we conjecture that the proposed program recovers a mixing component in the regime k < p^2/4, and we prove that a mixing component can be recovered with high probability when k < (2 - ε) p log p if the original components are sampled uniformly at random on the hypersphere. Experiments are provided on synthetic data and on the CIFAR-10 dataset of real images.
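Step (a) works with Hessians of the cumulant generating function K(t) = log E[exp(tᵀx)]. A standard identity (not specific to this paper) is that the Hessian of the empirical CGF at a point t equals the covariance of the samples reweighted in proportion to exp(tᵀx_i). Under the ICA model x = Ds with independent sources (D is our notation for the p × k mixing matrix), K decomposes as a sum of per-source terms, so the population Hessian has the form D diag(·) Dᵀ with one rank-one term per source. The plain-Python sketch below computes only the empirical Hessian at a single t; the name `cgf_hessian` is hypothetical, and how the evaluation points t are chosen is left open.

```python
import math


def cgf_hessian(samples, t):
    """Empirical Hessian of K(t) = log((1/n) * sum_i exp(t . x_i)).

    Uses the identity Hess K(t) = sum_i w_i (x_i - mu)(x_i - mu)^T, where
    w_i is proportional to exp(t . x_i) and mu = sum_i w_i x_i.
    """
    p = len(t)
    # Exponent t . x_i for each sample.
    logw = [sum(tj * xj for tj, xj in zip(t, x)) for x in samples]
    # Subtract the maximum before exponentiating to avoid overflow.
    m = max(logw)
    w = [math.exp(lw - m) for lw in logw]
    z = sum(w)
    w = [wi / z for wi in w]
    # Tilted (reweighted) mean.
    mu = [sum(wi * x[a] for wi, x in zip(w, samples)) for a in range(p)]
    # Tilted covariance, which equals the Hessian of K at t.
    return [
        [sum(wi * (x[a] - mu[a]) * (x[b] - mu[b]) for wi, x in zip(w, samples))
         for b in range(p)]
        for a in range(p)
    ]


# Usage: at t = 0 the result is the (1/n-normalized) sample covariance.
data = [[1.0, 0.0], [-1.0, 0.0], [0.0, 2.0], [0.0, -2.0]]
print(cgf_hessian(data, [0.0, 0.0]))  # [[0.5, 0.0], [0.0, 2.0]]
```

Evaluating this at several points t yields a family of symmetric matrices that, in the population limit, share the same factor D, which is what makes Hessians a usable substitute for higher-order cumulant tensors.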

Beyond CCA: Moment Matching for Multi-View Models

Jun 03, 2016

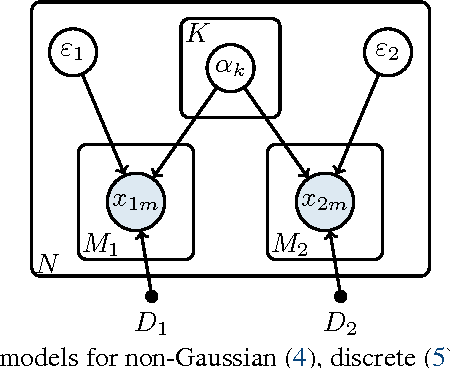

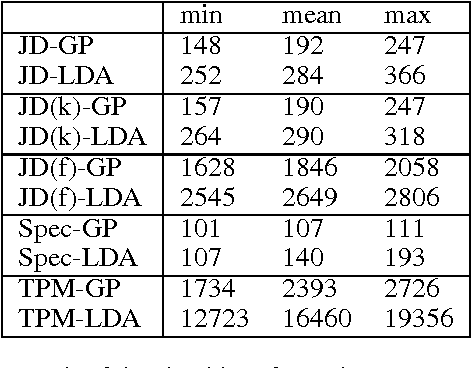

Abstract: We introduce three novel semi-parametric extensions of probabilistic canonical correlation analysis (CCA) with identifiability guarantees, and we consider moment matching techniques for estimation in these models. To this end, we first draw explicit links between the new models and a discrete version of independent component analysis (DICA), which lets us extend the DICA cumulant tensors to the new discrete version of CCA. Further exploiting a close connection with independent component analysis, we introduce generalized covariance matrices, which can replace the cumulant tensors in the moment matching framework and therefore improve sample complexity and significantly simplify derivations and algorithms. Since neither the tensor power method nor orthogonal joint diagonalization is applicable in the new setting, we use non-orthogonal joint diagonalization techniques for matching the cumulants. We demonstrate the performance of the proposed models and estimation techniques in experiments on both synthetic and real datasets.
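Non-orthogonal joint diagonalization looks for a single matrix W, not required to be orthogonal, such that W C_k Wᵀ is as close to diagonal as possible for every matrix C_k in a collection (for instance, a set of generalized covariance matrices). The sketch below only evaluates the common least-squares off-diagonal criterion for a given W; it is an illustration of the objective, not the specific algorithm used in the paper, and the helper names are hypothetical. Note that W = 0 trivially gives zero cost, so practical non-orthogonal methods add a constraint or normalization on W (for example, requiring it to stay invertible); no optimizer is shown here.

```python
def matmul(A, B):
    """Product of two matrices stored as lists of rows."""
    return [[sum(A[i][m] * B[m][j] for m in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]


def transpose(A):
    """Transpose of a matrix stored as a list of rows."""
    return [list(row) for row in zip(*A)]


def offdiag_cost(W, mats):
    """Sum over k of the squared off-diagonal entries of W C_k W^T."""
    total = 0.0
    for C in mats:
        M = matmul(matmul(W, C), transpose(W))
        n = len(M)
        total += sum(M[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
    return total


# Usage: both matrices are A diag(.) A^T for the non-orthogonal A = [[1, 1], [0, 1]].
C1 = [[3.0, 2.0], [2.0, 2.0]]  # A diag(1, 2) A^T
C2 = [[8.0, 5.0], [5.0, 5.0]]  # A diag(3, 5) A^T
print(offdiag_cost([[1.0, -1.0], [0.0, 1.0]], [C1, C2]))  # W = A^{-1}: 0.0
print(offdiag_cost([[1.0, 0.0], [0.0, 1.0]], [C1, C2]))   # W = I: 58.0
```

Because A is not orthogonal, no rotation can diagonalize C1 and C2 simultaneously here, which is the situation in which orthogonal joint diagonalization stops being applicable.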

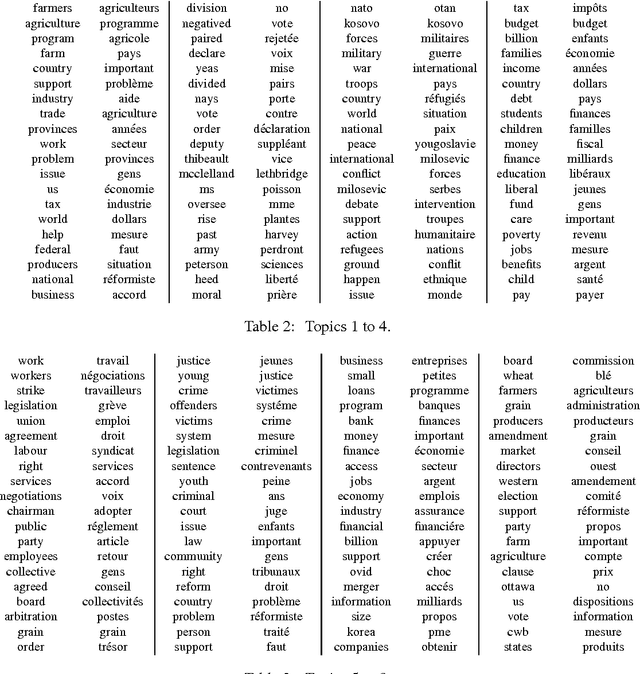

Rethinking LDA: moment matching for discrete ICA

Nov 05, 2015

Abstract: We consider moment matching techniques for estimation in Latent Dirichlet Allocation (LDA). By drawing explicit links between LDA and discrete versions of independent component analysis (ICA), we first derive a new set of cumulant-based tensors with improved sample complexity. Moreover, we reuse standard ICA techniques, such as joint diagonalization of tensors, to improve over existing methods based on the tensor power method. In an extensive set of experiments on both synthetic and real datasets, we show that our new combination of tensors and orthogonal joint diagonalization techniques outperforms existing moment matching methods.
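Jacobi-type methods for orthogonal joint diagonalization sweep over pairs of coordinates and, for each pair, apply the plane rotation that best diagonalizes all matrices at once. The sketch below implements only that 2 × 2 building block, in closed form, for real symmetric matrices: the off-diagonal entry after rotating by θ is linear in (cos 2θ, sin 2θ), so the summed squared off-diagonal cost is a quadratic form on the unit circle and is minimized by an eigenvector of a 2 × 2 matrix. It illustrates the general technique and is not the code from the paper; all function names are hypothetical.

```python
import math


def rotation(theta):
    """2x2 rotation matrix R(theta) as a list of rows."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]


def rotated(C, theta):
    """Return R^T C R for R = rotation(theta)."""
    R = rotation(theta)
    RtC = [[sum(R[m][i] * C[m][j] for m in range(2)) for j in range(2)] for i in range(2)]
    return [[sum(RtC[i][m] * R[m][j] for m in range(2)) for j in range(2)] for i in range(2)]


def joint_rotation_angle(mats):
    """Angle theta minimizing sum_k (R^T C_k R)[0][1]**2 for symmetric 2x2 C_k."""
    # For C = [[a, b], [b, d]], (R^T C R)[0][1] = b*cos(2t) + (d - a)/2 * sin(2t),
    # i.e. the dot product of u = (b, (d - a)/2) with v = (cos 2t, sin 2t).
    p = q = r = 0.0
    for C in mats:
        u0, u1 = C[0][1], (C[1][1] - C[0][0]) / 2.0
        p += u0 * u0
        q += u0 * u1
        r += u1 * u1
    # The cost is v^T M v with M = [[p, q], [q, r]]; it is minimized by the
    # eigenvector of the smallest eigenvalue, which lies at angle phi + pi/2,
    # where phi is the angle of the principal eigenvector of M.
    phi = 0.5 * math.atan2(2.0 * q, p - r)
    return 0.5 * (phi + math.pi / 2.0)


# Usage: two matrices sharing the eigenvectors of a rotation by 0.3 rad.
mats = [rotated([[1.0, 0.0], [0.0, 3.0]], -0.3), rotated([[-2.0, 0.0], [0.0, 4.0]], -0.3)]
theta = joint_rotation_angle(mats)
print([rotated(C, theta)[0][1] for C in mats])  # off-diagonal entries, numerically ~0
```

In p dimensions, a full sweep applies this step to every coordinate pair and repeats until the rotations become negligible; with tensors instead of matrices, the same idea is applied to matrix slices.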

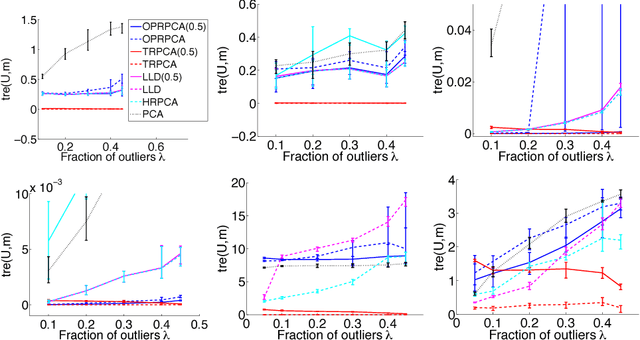

Robust PCA: Optimization of the Robust Reconstruction Error over the Stiefel Manifold

Jun 01, 2015

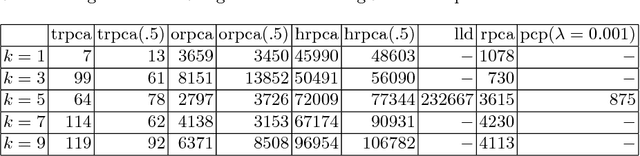

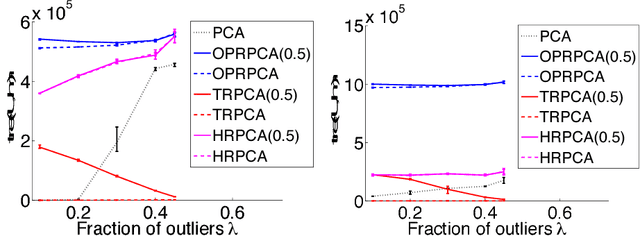

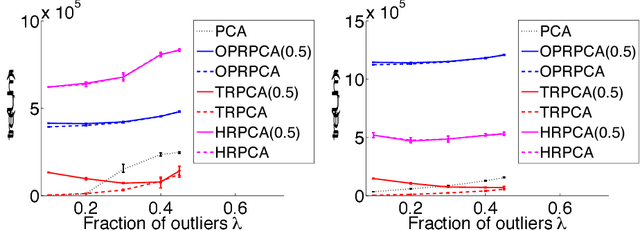

Abstract: It is well known that Principal Component Analysis (PCA) is strongly affected by outliers, and much effort has been devoted to making PCA robust. In this paper, we present a new algorithm for robust PCA that minimizes the trimmed reconstruction error. By minimizing directly over the Stiefel manifold, we avoid the deflation often used by projection pursuit methods. Unlike other methods for robust PCA, our method has no free parameter and is computationally very efficient. We illustrate its performance on various datasets, including an application to background modeling and subtraction. Our method performs better than or comparably to current state-of-the-art methods while being faster.
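The objective named above can be made concrete: for a candidate subspace spanned by orthonormal vectors U (a point on the Stiefel manifold), each data point has a squared reconstruction residual, and the trimmed error sums only the h smallest residuals, so the largest ones (likely outliers) do not influence the fit. The sketch below only evaluates this objective; it does not perform the optimization over the Stiefel manifold. It assumes the data are already centered, and the number of retained points `h` is an explicit argument chosen for illustration (the abstract states that the method itself has no free parameter, and how the paper handles this is not reproduced here). Function names are hypothetical.

```python
def reconstruction_residuals(X, U):
    """Squared distance of each point in X to the span of the orthonormal rows of U.

    Assumes X is centered and the rows of U are orthonormal.
    """
    res = []
    for x in X:
        # Coordinates of x in the basis U, then the projection U^T (U x).
        coeffs = [sum(ui * xi for ui, xi in zip(u, x)) for u in U]
        proj = [sum(c * u[j] for c, u in zip(coeffs, U)) for j in range(len(x))]
        res.append(sum((xj - pj) ** 2 for xj, pj in zip(x, proj)))
    return res


def trimmed_reconstruction_error(X, U, h):
    """Sum of the h smallest residuals; the n - h largest are ignored."""
    return sum(sorted(reconstruction_residuals(X, U))[:h])


# Usage: three points lie near the x-axis; the last one is an outlier.
X = [[1.0, 0.0], [2.0, 0.1], [-1.0, 0.0], [0.0, 5.0]]
print(trimmed_reconstruction_error(X, [[1.0, 0.0]], h=3))  # small: outlier trimmed away
print(trimmed_reconstruction_error(X, [[0.0, 1.0]], h=3))  # 2.0
```

With h = 4 (no trimming) the y-axis would score better than the x-axis because of the single outlier, whereas with h = 3 the x-axis wins, which is the robustness effect the trimmed objective is meant to provide.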
