Alireza Ahmadi

BonnBot-I Plus: A Bio-diversity Aware Precise Weed Management Robotic Platform

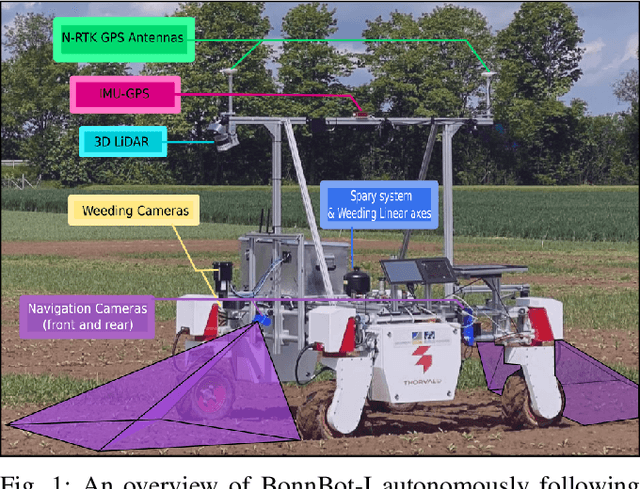

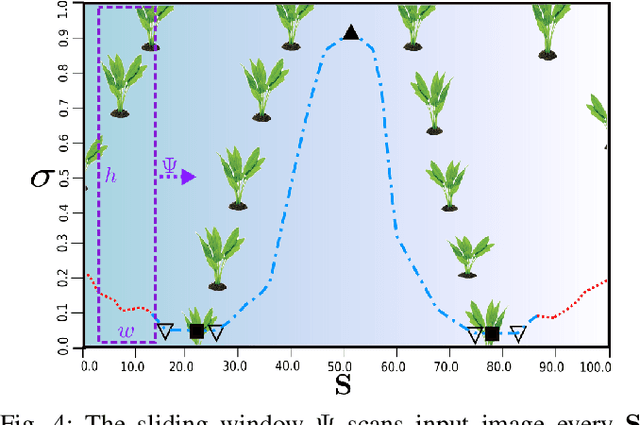

May 15, 2024

Abstract: In this article, we focus on the critical tasks of plant protection in arable farms, addressing a modern challenge in agriculture: integrating ecological considerations into the operational strategy of precision weeding robots like BonnBot-I. This article presents recent advancements in weed management algorithms and the real-world performance of BonnBot-I at the University of Bonn's Klein-Altendorf campus. We present a novel Rolling-view observation model for BonnBot-I's weed monitoring section, which leads to an average absolute weeding performance enhancement of 3.4%. Furthermore, for the first time, we show how precision weeding robots could consider bio-diversity-aware concerns in challenging weeding scenarios. We carried out comprehensive weeding experiments in sugar-beet fields, covering both weed-only and mixed crop-weed situations, and introduced a new dataset compatible with precision weeding. Our real-field experiments revealed that our weeding approach is capable of handling diverse weed distributions, with a minimal loss of only 11.66% attributable to intervention planning and 14.7% to vision system limitations, highlighting required improvements of the vision system.

BonnBot-I: A Precise Weed Management and Crop Monitoring Platform

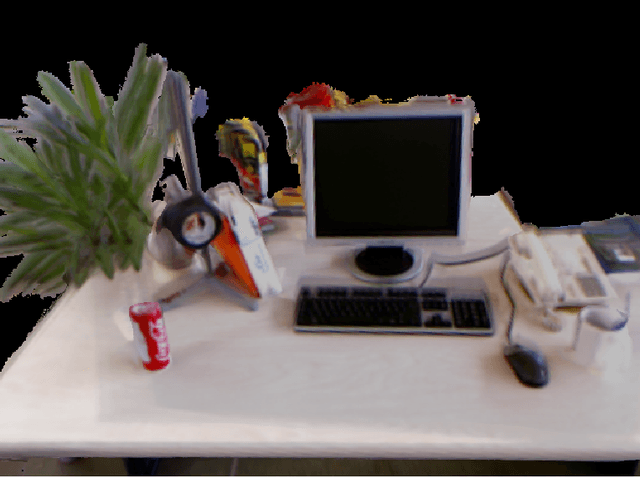

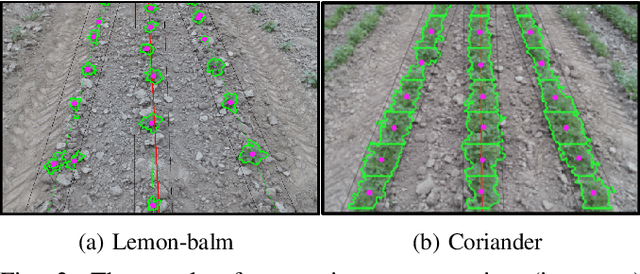

Jul 24, 2023

Abstract: Cultivation and weeding are two of the primary tasks performed by farmers today. A recent challenge for weeding is the desire to reduce herbicide and pesticide treatments while maintaining crop quality and quantity. In this paper we introduce BonnBot-I, a precise weed management platform which can also perform field monitoring. Driven by crop monitoring approaches which can accurately locate and classify plants (weed and crop), we further improve their performance by fusing the GNSS and wheel odometry available on the platform. This improves the tracking accuracy of our crop monitoring approach from a normalized average error of 8.3% to 3.5%, evaluated on a new publicly available corn dataset. We also present a novel arrangement of weeding tools mounted on linear actuators, evaluated in simulated environments. We replicate weed distributions from a real field, using the results from our monitoring approach, and show the validity of our work-space division techniques, which require significantly less movement (a 50% reduction) to achieve similar results. Overall, BonnBot-I is a significant step forward in precise weed management with a novel method of selectively spraying and controlling weeds in an arable field.
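The abstract does not say how GNSS and wheel odometry are fused, so the following is only a minimal sketch under stated assumptions: a one-dimensional Kalman-style filter along the driving direction, where wheel odometry supplies the prediction step and GNSS supplies the correction. The function name `fuse_position` and the noise values `q` and `r` are hypothetical and are not taken from the paper.

```python
def fuse_position(odom_steps, gnss_fixes, q=0.01, r=0.25):
    """Fuse 1D wheel-odometry increments with GNSS position fixes.

    odom_steps[i] is the travelled distance between fix i and fix i + 1.
    q and r are assumed variances of the odometry step and the GNSS fix.
    Returns the fused position at every GNSS fix.
    """
    x, p = gnss_fixes[0], r          # start at the first fix, with its variance
    track = [x]
    for step, fix in zip(odom_steps, gnss_fixes[1:]):
        x, p = x + step, p + q       # predict: integrate wheel odometry
        k = p / (p + r)              # gain: how much to trust the GNSS fix
        x, p = x + k * (fix - x), (1.0 - k) * p   # correct with the fix
        track.append(x)
    return track


# Odometry says 1.0 m was travelled, GNSS says 2.0 m: the estimate lies between.
track = fuse_position([1.0], [0.0, 2.0])
```

With the assumed variances the fused estimate sits between the odometry prediction and the GNSS fix, weighted by their relative uncertainty.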

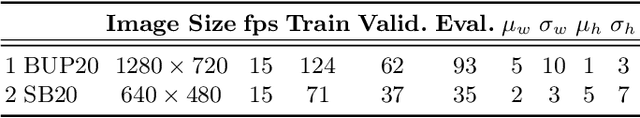

Explicitly incorporating spatial information to recurrent networks for agriculture

Jun 27, 2022

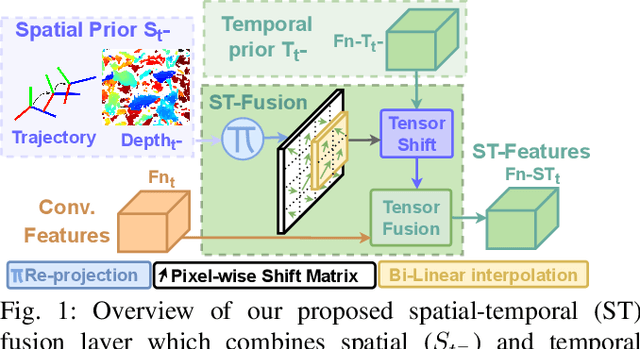

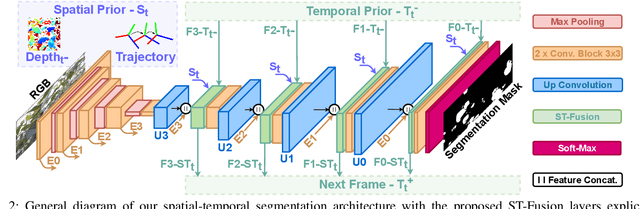

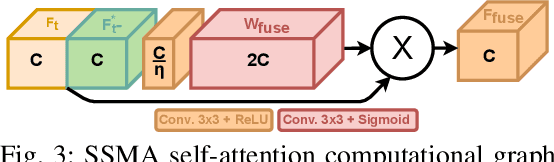

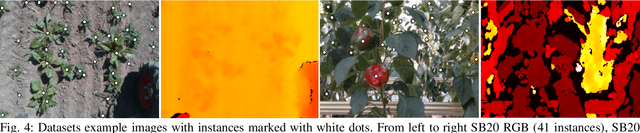

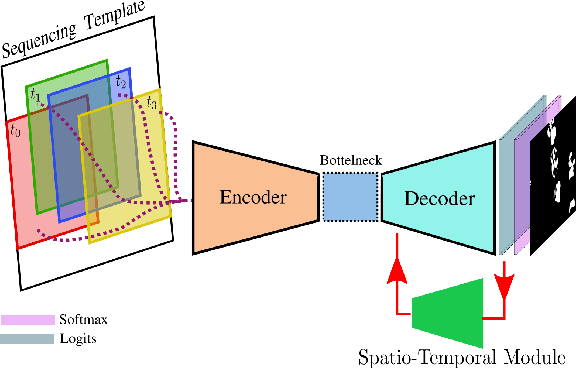

Abstract: In agriculture, the majority of vision systems perform still image classification. Yet, recent work has highlighted the potential of spatial and temporal cues as a rich source of information to improve the classification performance. In this paper, we propose novel approaches to explicitly capture both spatial and temporal information to improve the classification of deep convolutional neural networks. We leverage available RGB-D images and robot odometry to perform inter-frame feature map spatial registration. This information is then fused within recurrent deep learnt models, to improve their accuracy and robustness. We demonstrate that this can considerably improve the classification performance with our best performing spatial-temporal model (ST-Atte) achieving absolute performance improvements for intersection-over-union (IoU[%]) of 4.7 for crop-weed segmentation and 2.6 for fruit (sweet pepper) segmentation. Furthermore, we show that these approaches are robust to variable framerates and odometry errors, which are frequently observed in real-world applications.
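For readers unfamiliar with the metric quoted above, the sketch below computes intersection-over-union in percent for two binary masks. It is a generic illustration and not the authors' evaluation code; the function name `iou_percent` and the convention for two empty masks are assumptions.

```python
def iou_percent(pred, target):
    """Intersection-over-union, in percent, of two equally sized binary masks.

    Masks are lists of rows; any truthy value counts as foreground.
    """
    if len(pred) != len(target) or any(
        len(p) != len(t) for p, t in zip(pred, target)
    ):
        raise ValueError("masks must have the same shape")
    inter = 0
    union = 0
    for p_row, t_row in zip(pred, target):
        for p, t in zip(p_row, t_row):
            inter += 1 if (p and t) else 0   # pixel is foreground in both
            union += 1 if (p or t) else 0    # pixel is foreground in either
    # Assumed convention: two empty masks count as a perfect match.
    return 100.0 if union == 0 else 100.0 * inter / union


pred = [[1, 1, 0],
        [0, 1, 0]]
target = [[1, 0, 0],
          [0, 1, 1]]
print(iou_percent(pred, target))  # 50.0
```

An "absolute" improvement is a difference in these percentage points, so a gain of 4.7 means the IoU value itself rises by 4.7, not by 4.7% of the baseline.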

Registration Techniques for Deformable Objects

Nov 07, 2021

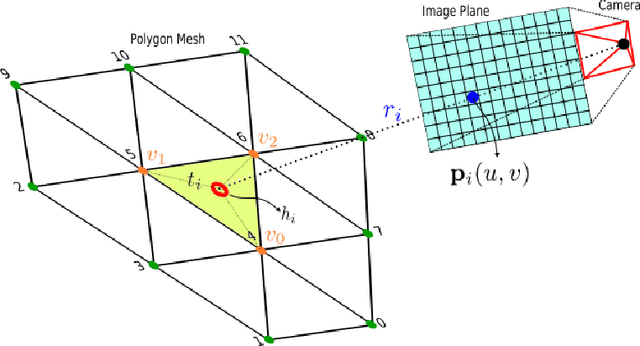

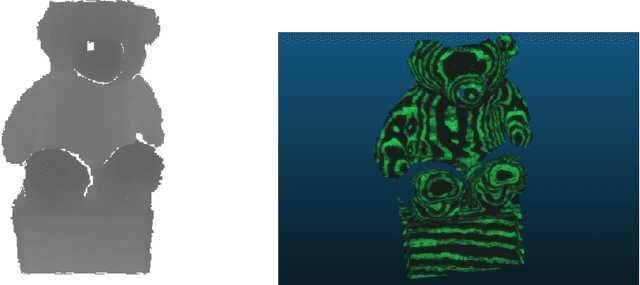

Abstract: In general, the problem of non-rigid registration is about matching two different scans of a dynamic object taken at two different points in time. These scans can undergo both rigid motions and non-rigid deformations. Since new parts of the model may come into view and other parts may become occluded between two scans, the region of overlap is a subset of both scans. In the most general setting, no prior template shape is given and no markers or explicit feature point correspondences are available. This case is therefore a partial matching problem that relies on the assumption that consecutive scans undergo small deformations while having a significant amount of overlapping area [28]. The problem which this thesis addresses is mapping deforming objects and localizing cameras in the environment at the same time.

Towards Autonomous Crop-Agnostic Visual Navigation in Arable Fields

Sep 24, 2021

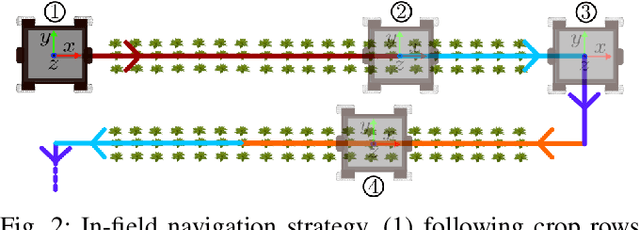

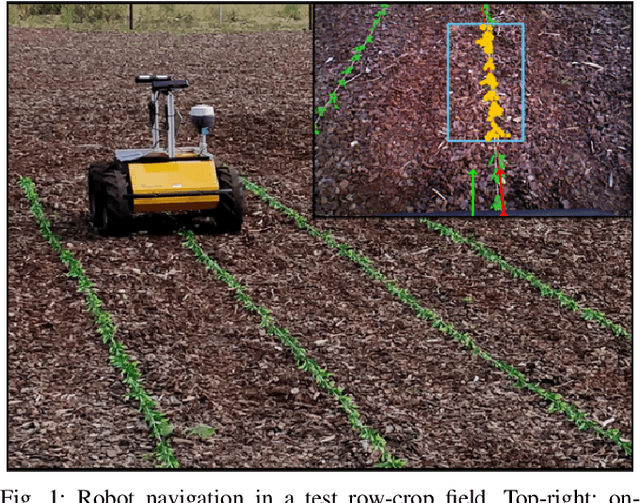

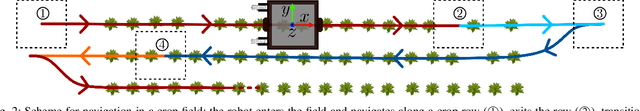

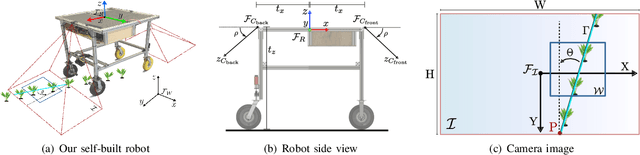

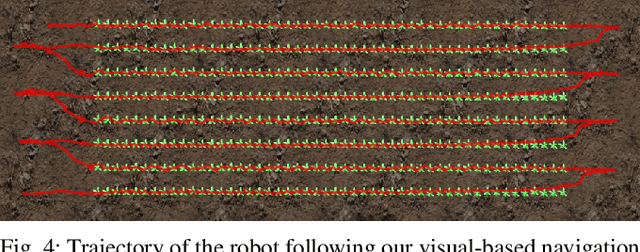

Abstract: Autonomous navigation of a robot in agricultural fields is essential for every task from crop monitoring through to weed management and fertilizer application. Many current approaches rely on accurate GPS; however, such technology is expensive and also prone to failure (e.g. through lack of coverage). As such, navigation through sensors that can interpret their environment (such as cameras) is important to achieve the goal of autonomy in agriculture. In this paper, we introduce a purely vision-based navigation scheme which is able to reliably guide the robot through row-crop fields. Independent of any global localization or mapping, this approach is able to accurately follow the crop-rows and switch between the rows, using only on-board cameras. With the help of a novel crop-row detection and a novel crop-row switching technique, our navigation scheme can be deployed in a wide range of fields with different canopy types in various growth stages. We have extensively tested our approach in five different fields under various illumination conditions using our agricultural robotic platform (BonnBot-I). Our evaluations show that we have achieved a navigation accuracy of 3.82 cm over five different crop fields.

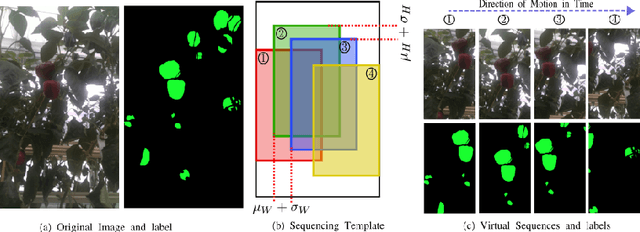

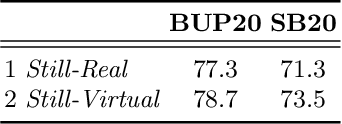

Virtual Temporal Samples for Recurrent Neural Networks: applied to semantic segmentation in agriculture

Jun 18, 2021

Abstract: This paper explores the potential for performing temporal semantic segmentation in the context of agricultural robotics without temporally labelled data. We achieve this by proposing to generate virtual temporal samples from labelled still images. This allows us, with no extra annotation effort, to generate virtually labelled temporal sequences. Normally, to train a recurrent neural network (RNN), labelled samples from a video (temporal) sequence are required, which is laborious and has stymied work in this direction. By generating virtual temporal samples, we demonstrate that it is possible to train a lightweight RNN to perform semantic segmentation on two challenging agricultural datasets. Our results show that by training a temporal semantic segmenter using virtual samples we can increase the performance by an absolute amount of 4.6 and 4.9 on sweet pepper and sugar beet datasets, respectively. This indicates that our virtual data augmentation technique is able to accurately classify agricultural images temporally without complicated synthetic data generation techniques and without the overhead of labelling large amounts of temporal sequences.
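The abstract does not spell out how the virtual temporal samples are produced. One simple way to obtain a labelled sequence from a single labelled still image, assumed here purely for illustration, is to slide a crop window across the image and its label mask so that successive crops mimic a camera moving over the scene. The function name `virtual_sequence` and its parameters are hypothetical.

```python
def virtual_sequence(image, label, crop_w, step, n_frames):
    """Build a virtual labelled sequence from one labelled still image.

    image and label are lists of rows with identical shape. A window of
    width crop_w is moved step pixels to the right per frame, and the same
    window is cut from the label so every frame keeps a matching annotation.
    """
    width = len(image[0])
    if crop_w + step * (n_frames - 1) > width:
        raise ValueError("window would leave the image")
    frames = []
    for k in range(n_frames):
        x0 = k * step                                   # left edge of frame k
        frame = [row[x0:x0 + crop_w] for row in image]
        frame_label = [row[x0:x0 + crop_w] for row in label]
        frames.append((frame, frame_label))
    return frames


# Toy 2 x 6 image with a label mask of the same shape.
image = [[0, 1, 2, 3, 4, 5],
         [6, 7, 8, 9, 10, 11]]
label = [[0, 0, 1, 1, 0, 0],
         [0, 0, 1, 1, 0, 0]]
sequence = virtual_sequence(image, label, crop_w=4, step=1, n_frames=3)
```

Because every frame is cut from an already annotated image, the sequence needs no additional labelling, which is the property the abstract emphasises.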

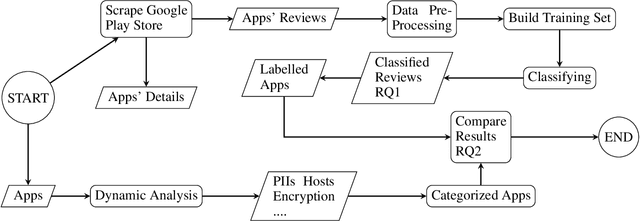

An Empirical Study on User Reviews Targeting Mobile Apps' Security & Privacy

Oct 11, 2020

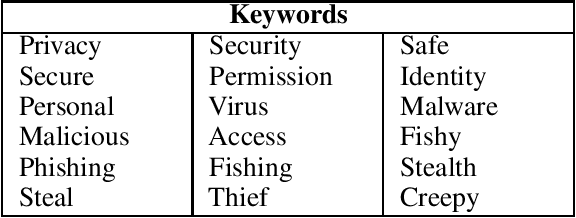

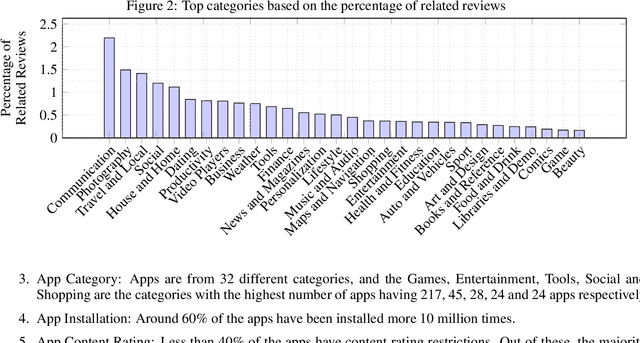

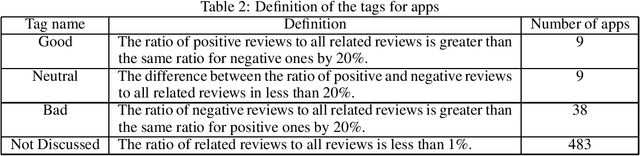

Abstract: Application markets provide a communication channel between app developers and their end-users in the form of app reviews, which allow users to provide feedback about the apps. Although security and privacy in mobile apps are among the biggest issues, it is unclear how much people are aware of these or discuss them in reviews. In this study, we explore the privacy and security concerns of users using reviews in the Google Play Store. For this, we conducted a study by analyzing around 2.2M reviews from the top 539 apps of this Android market. We found that 0.5% of these reviews are related to the security and privacy concerns of the users. We further investigated these apps by performing dynamic analysis, which provided us with valuable insights into their actual behaviors. Based on the different perspectives, we categorized the apps and evaluated how the different factors influence the users' perception of the apps. It was evident from the results that the number of permissions that the apps request plays a dominant role in this matter. We also found that sending out the location can affect the users' thoughts about the app. The other factors do not directly affect the privacy and security concerns of the users.

Visual Servoing-based Navigation for Monitoring Row-Crop Fields

Sep 27, 2019

Abstract: Autonomous navigation is a prerequisite for field robots to carry out precision agriculture tasks. Typically, a robot has to navigate through a whole crop field several times during a season for monitoring the plants, for applying agrochemicals, or for performing targeted intervention actions. In this paper, we propose a framework tailored for navigation in row-crop fields by exploiting the regular crop-row structure present in the fields. Our approach uses only the images from on-board cameras without the need for performing explicit localization or maintaining a map of the field and thus can operate without expensive RTK-GPS solutions often used in agriculture automation systems. Our navigation approach allows the robot to follow the crop-rows accurately and handles the switch to the next row seamlessly within the same framework. We implemented our approach using C++ and ROS and thoroughly tested it in several simulated environments with different shapes and sizes of field. We also demonstrated the system running at frame-rate on an actual robot operating on a test row-crop field. The code and data have been published.
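To give a feel for how image measurements alone can steer a robot along a crop row, here is a minimal sketch of a proportional steering law. It is not the visual servoing controller from the paper (which is implemented in C++ and ROS); the function name `row_following_command`, the gains, and the sign conventions are all assumptions made for illustration. The inputs are the lateral offset of the detected row line from the image centre and its angle relative to the image's vertical axis.

```python
def row_following_command(offset_px, angle_rad, image_width,
                          v=0.5, k_offset=1.0, k_angle=1.5, w_max=1.0):
    """Return (linear, angular) velocity that steers toward the detected row.

    Assumed conventions: offset_px > 0 means the row is right of the image
    centre, angle_rad > 0 means the row leans to the right, and a positive
    angular velocity turns the robot to the left.
    """
    norm_offset = offset_px / (image_width / 2.0)    # scale to [-1, 1]
    w = 0.0 - (k_offset * norm_offset + k_angle * angle_rad)
    w = max(-w_max, min(w_max, w))                   # saturate the turn rate
    return v, w


# Row detected 160 px right of centre in a 640 px wide image, no tilt:
v, w = row_following_command(160, 0.0, 640)          # w is negative: turn right
```

Both error terms are measured directly in the image, so no map or global position estimate is needed, which matches the motivation given in the abstract.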
