Alexandre Marcireau

LAND: A Longitudinal Analysis of Neuromorphic Datasets

Feb 17, 2026Abstract:Neuromorphic engineering has a data problem. Despite the meteoric rise in the number of neuromorphic datasets published over the past ten years, the conclusion of a significant portion of neuromorphic research papers still states that there is a need for yet more data and even larger datasets. Whilst this need is driven in part by the sheer volume of data required by modern deep learning approaches, it is also fuelled by the current state of the available neuromorphic datasets and the difficulties in finding them, understanding their purpose, and determining the nature of their underlying task. This is further compounded by practical difficulties in downloading and using these datasets. This review starts by capturing a snapshot of the existing neuromorphic datasets, covering over 423 datasets, and then explores the nature of their tasks and the underlying structure of the presented data. Analysing these datasets shows the difficulties arising from their size, the lack of standardisation, and difficulties in accessing the actual data. This paper also highlights the growth in the size of individual datasets and the complexities involved in working with the data. However, a more important concern is the rise of synthetic datasets, created by either simulation or video-to-events methods. This review explores the benefits of simulated data for testing existing algorithms and applications, highlighting the potential pitfalls for exploring new applications of neuromorphic technologies. This review also introduces the concepts of meta-datasets, created from existing datasets, as a way of both reducing the need for more data, and to remove potential bias arising from defining both the dataset and the task.

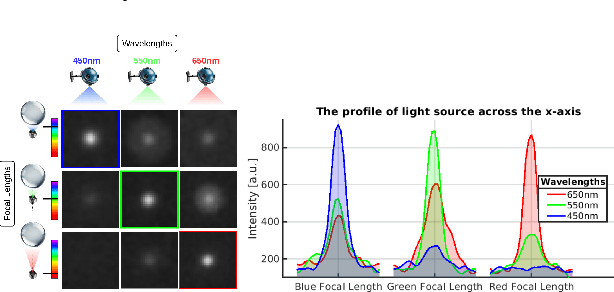

Seeing like a Cephalopod: Colour Vision with a Monochrome Event Camera

Apr 15, 2025

Abstract:Cephalopods exhibit unique colour discrimination capabilities despite having one type of photoreceptor, relying instead on chromatic aberration induced by their ocular optics and pupil shapes to perceive spectral information. We took inspiration from this biological mechanism to design a spectral imaging system that combines a ball lens with an event-based camera. Our approach relies on a motorised system that shifts the focal position, mirroring the adaptive lens motion in cephalopods. This approach has enabled us to achieve wavelength-dependent focusing across the visible light and near-infrared spectrum, making the event a spectral sensor. We characterise chromatic aberration effects, using both event-based and conventional frame-based sensors, validating the effectiveness of bio-inspired spectral discrimination both in simulation and in a real setup as well as assessing the spectral discrimination performance. Our proposed approach provides a robust spectral sensing capability without conventional colour filters or computational demosaicing. This approach opens new pathways toward new spectral sensing systems inspired by nature's evolutionary solutions. Code and analysis are available at: https://samiarja.github.io/neuromorphic_octopus_eye/

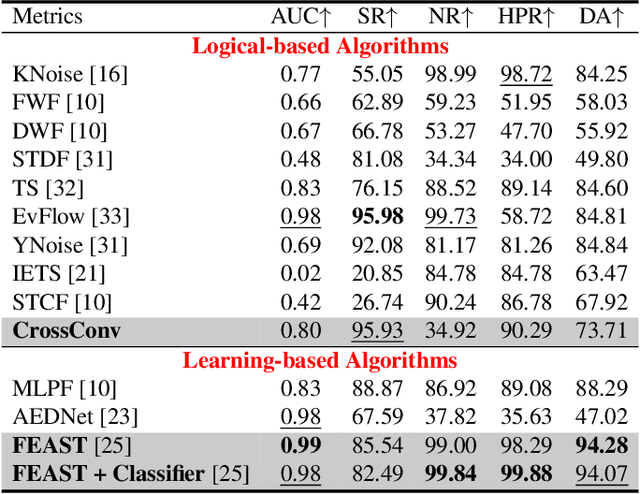

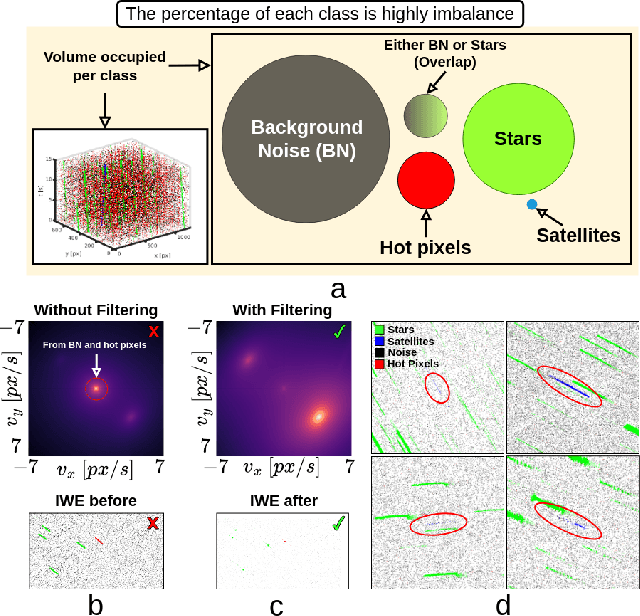

Noise Filtering Benchmark for Neuromorphic Satellites Observations

Nov 18, 2024

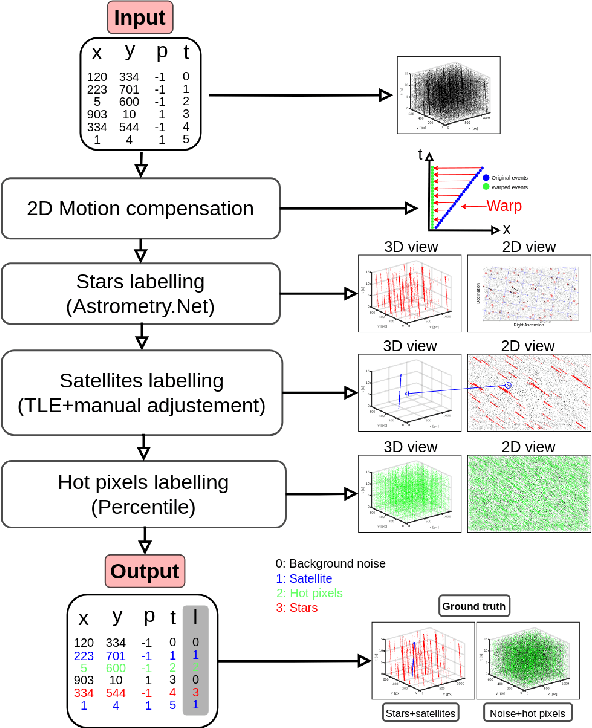

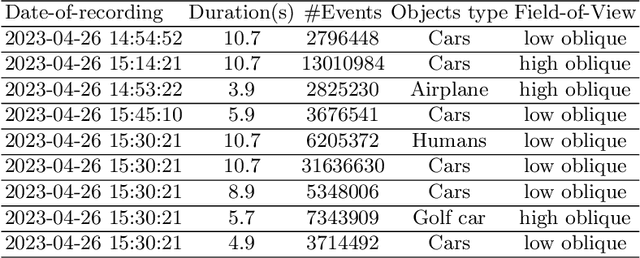

Abstract:Event cameras capture sparse, asynchronous brightness changes which offer high temporal resolution, high dynamic range, low power consumption, and sparse data output. These advantages make them ideal for Space Situational Awareness, particularly in detecting resident space objects moving within a telescope's field of view. However, the output from event cameras often includes substantial background activity noise, which is known to be more prevalent in low-light conditions. This noise can overwhelm the sparse events generated by satellite signals, making detection and tracking more challenging. Existing noise-filtering algorithms struggle in these scenarios because they are typically designed for denser scenes, where losing some signal is acceptable. This limitation hinders the application of event cameras in complex, real-world environments where signals are extremely sparse. In this paper, we propose new event-driven noise-filtering algorithms specifically designed for very sparse scenes. We categorise the algorithms into logical-based and learning-based approaches and benchmark their performance against 11 state-of-the-art noise-filtering algorithms, evaluating how effectively they remove noise and hot pixels while preserving the signal. Their performance was quantified by measuring signal retention and noise removal accuracy, with results reported using ROC curves across the parameter space. Additionally, we introduce a new high-resolution satellite dataset with ground truth from a real-world platform under various noise conditions, which we have made publicly available. Code, dataset, and trained weights are available at \url{https://github.com/samiarja/dvs_sparse_filter}.

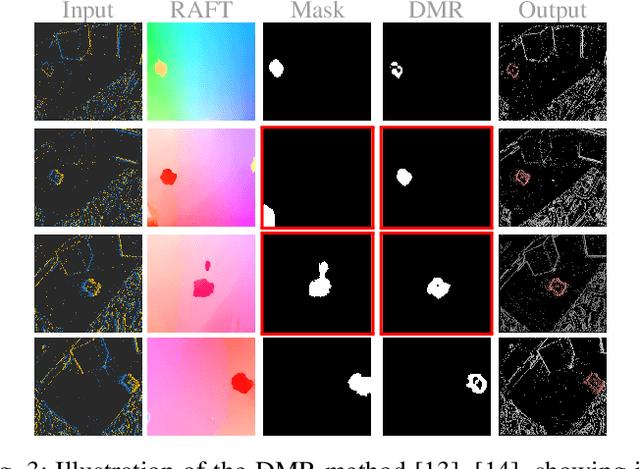

Unsupervised Motion Segmentation for Neuromorphic Aerial Surveillance

May 24, 2024

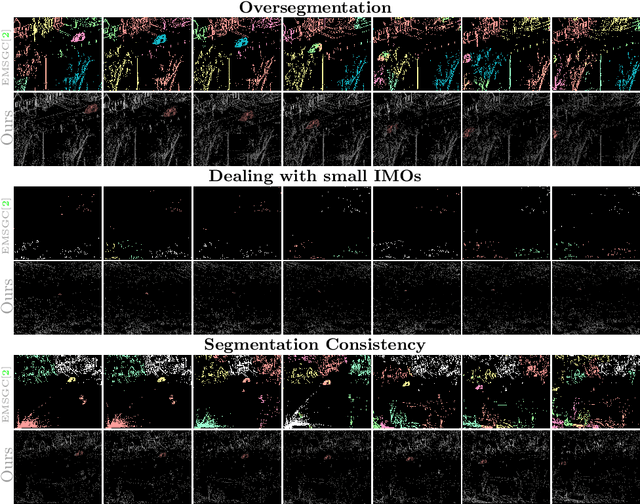

Abstract:Achieving optimal performance with frame-based vision sensors on aerial platforms poses a significant challenge due to the fundamental tradeoffs between bandwidth and latency. Event cameras, which draw inspiration from biological vision systems, present a promising alternative due to their exceptional temporal resolution, superior dynamic range, and minimal power requirements. Due to these properties, they are well-suited for processing and segmenting fast motions that require rapid reactions. However, previous methods for event-based motion segmentation encountered limitations, such as the need for per-scene parameter tuning or manual labelling to achieve satisfactory results. To overcome these issues, our proposed method leverages features from self-supervised transformers on both event data and optical flow information, eliminating the need for human annotations and reducing the parameter tuning problem. In this paper, we use an event camera with HD resolution onboard a highly dynamic aerial platform in an urban setting. We conduct extensive evaluations of our framework across multiple datasets, demonstrating state-of-the-art performance compared to existing works. Our method can effectively handle various types of motion and an arbitrary number of moving objects. Code and dataset are available at: \url{https://samiarja.github.io/evairborne/}

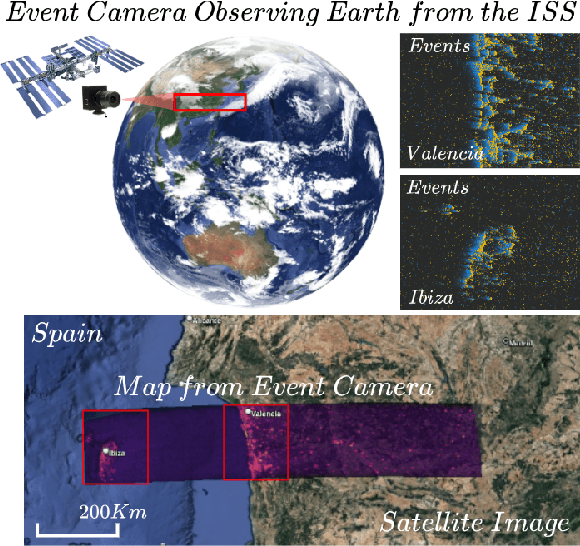

Density Invariant Contrast Maximization for Neuromorphic Earth Observations

May 03, 2023

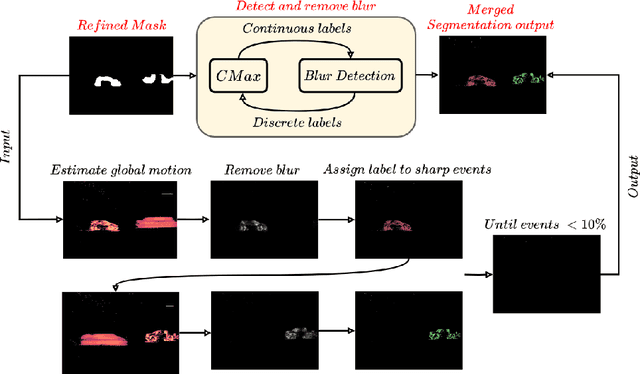

Abstract:Contrast maximization (CMax) techniques are widely used in event-based vision systems to estimate the motion parameters of the camera and generate high-contrast images. However, these techniques are noise-intolerance and suffer from the multiple extrema problem which arises when the scene contains more noisy events than structure, causing the contrast to be higher at multiple locations. This makes the task of estimating the camera motion extremely challenging, which is a problem for neuromorphic earth observation, because, without a proper estimation of the motion parameters, it is not possible to generate a map with high contrast, causing important details to be lost. Similar methods that use CMax addressed this problem by changing or augmenting the objective function to enable it to converge to the correct motion parameters. Our proposed solution overcomes the multiple extrema and noise-intolerance problems by correcting the warped event before calculating the contrast and offers the following advantages: it does not depend on the event data, it does not require a prior about the camera motion, and keeps the rest of the CMax pipeline unchanged. This is to ensure that the contrast is only high around the correct motion parameters. Our approach enables the creation of better motion-compensated maps through an analytical compensation technique using a novel dataset from the International Space Station (ISS). Code is available at \url{https://github.com/neuromorphicsystems/event_warping}

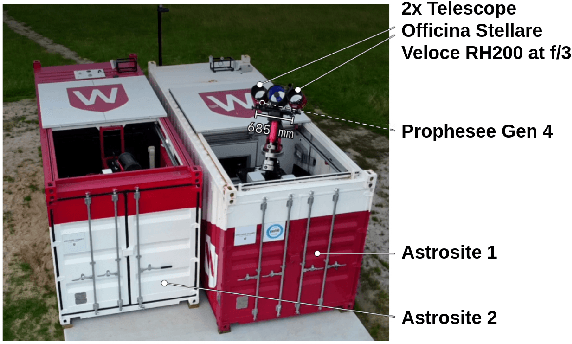

Astrometric Calibration and Source Characterisation of the Latest Generation Neuromorphic Event-based Cameras for Space Imaging

Nov 17, 2022Abstract:As an emerging approach to space situational awareness and space imaging, the practical use of an event-based camera in space imaging for precise source analysis is still in its infancy. The nature of event-based space imaging and data collection needs to be further explored to develop more effective event-based space image systems and advance the capabilities of event-based tracking systems with improved target measurement models. Moreover, for event measurements to be meaningful, a framework must be investigated for event-based camera calibration to project events from pixel array coordinates in the image plane to coordinates in a target resident space object's reference frame. In this paper, the traditional techniques of conventional astronomy are reconsidered to properly utilise the event-based camera for space imaging and space situational awareness. This paper presents the techniques and systems used for calibrating an event-based camera for reliable and accurate measurement acquisition. These techniques are vital in building event-based space imaging systems capable of real-world space situational awareness tasks. By calibrating sources detected using the event-based camera, the spatio-temporal characteristics of detected sources or `event sources' can be related to the photometric characteristics of the underlying astrophysical objects. Finally, these characteristics are analysed to establish a foundation for principled processing and observing techniques which appropriately exploit the capabilities of the event-based camera.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge