Abdullah Tokmak

Safe Bayesian optimization across noise models via scenario programming

Dec 12, 2025Abstract:Safe Bayesian optimization (BO) with Gaussian processes is an effective tool for tuning control policies in safety-critical real-world systems, specifically due to its sample efficiency and safety guarantees. However, most safe BO algorithms assume homoscedastic sub-Gaussian measurement noise, an assumption that does not hold in many relevant applications. In this article, we propose a straightforward yet rigorous approach for safe BO across noise models, including homoscedastic sub-Gaussian and heteroscedastic heavy-tailed distributions. We provide a high-probability bound on the measurement noise via the scenario approach, integrate these bounds into high probability confidence intervals, and prove safety and optimality for our proposed safe BO algorithm. We deploy our algorithm in synthetic examples and in tuning a controller for the Franka Emika manipulator in simulation.

Safe exploration in reproducing kernel Hilbert spaces

Mar 13, 2025

Abstract:Popular safe Bayesian optimization (BO) algorithms learn control policies for safety-critical systems in unknown environments. However, most algorithms make a smoothness assumption, which is encoded by a known bounded norm in a reproducing kernel Hilbert space (RKHS). The RKHS is a potentially infinite-dimensional space, and it remains unclear how to reliably obtain the RKHS norm of an unknown function. In this work, we propose a safe BO algorithm capable of estimating the RKHS norm from data. We provide statistical guarantees on the RKHS norm estimation, integrate the estimated RKHS norm into existing confidence intervals and show that we retain theoretical guarantees, and prove safety of the resulting safe BO algorithm. We apply our algorithm to safely optimize reinforcement learning policies on physics simulators and on a real inverted pendulum, demonstrating improved performance, safety, and scalability compared to the state-of-the-art.

PACSBO: Probably approximately correct safe Bayesian optimization

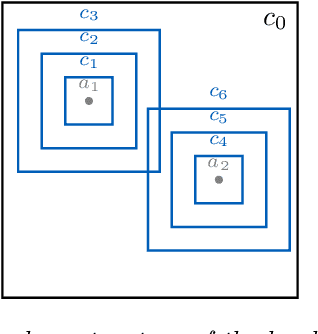

Sep 02, 2024Abstract:Safe Bayesian optimization (BO) algorithms promise to find optimal control policies without knowing the system dynamics while at the same time guaranteeing safety with high probability. In exchange for those guarantees, popular algorithms require a smoothness assumption: a known upper bound on a norm in a reproducing kernel Hilbert space (RKHS). The RKHS is a potentially infinite-dimensional space, and it is unclear how to, in practice, obtain an upper bound of an unknown function in its corresponding RKHS. In response, we propose an algorithm that estimates an upper bound on the RKHS norm of an unknown function from data and investigate its theoretical properties. Moreover, akin to Lipschitz-based methods, we treat the RKHS norm as a local rather than a global object, and thus reduce conservatism. Integrating the RKHS norm estimation and the local interpretation of the RKHS norm into a safe BO algorithm yields PACSBO, an algorithm for probably approximately correct safe Bayesian optimization, for which we provide numerical and hardware experiments that demonstrate its applicability and benefits over popular safe BO algorithms.

Automatic nonlinear MPC approximation with closed-loop guarantees

Dec 15, 2023Abstract:In this paper, we address the problem of automatically approximating nonlinear model predictive control (MPC) schemes with closed-loop guarantees. First, we discuss how this problem can be reduced to a function approximation problem, which we then tackle by proposing ALKIA-X, the Adaptive and Localized Kernel Interpolation Algorithm with eXtrapolated reproducing kernel Hilbert space norm. ALKIA-X is a non-iterative algorithm that ensures numerically well-conditioned computations, a fast-to-evaluate approximating function, and the guaranteed satisfaction of any desired bound on the approximation error. Hence, ALKIA-X automatically computes an explicit function that approximates the MPC, yielding a controller suitable for safety-critical systems and high sampling rates. In a numerical experiment, we apply ALKIA-X to a nonlinear MPC scheme, demonstrating reduced offline computation and online evaluation time compared to a state-of-the-art method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge