Towards Adversarial Patch Analysis and Certified Defense against Crowd Counting

Paper and Code

Apr 22, 2021

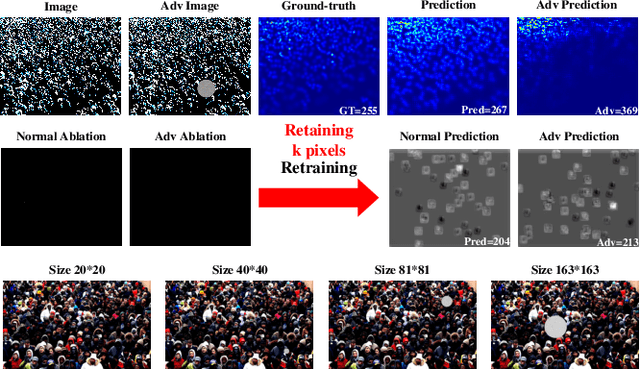

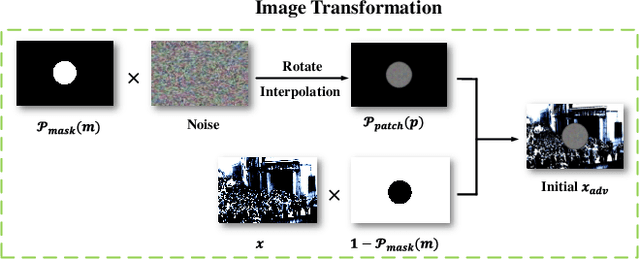

Crowd counting has drawn much attention due to its importance in safety-critical surveillance systems. Especially, deep neural network (DNN) methods have significantly reduced estimation errors for crowd counting missions. Recent studies have demonstrated that DNNs are vulnerable to adversarial attacks, i.e., normal images with human-imperceptible perturbations could mislead DNNs to make false predictions. In this work, we propose a robust attack strategy called Adversarial Patch Attack with Momentum (APAM) to systematically evaluate the robustness of crowd counting models, where the attacker's goal is to create an adversarial perturbation that severely degrades their performances, thus leading to public safety accidents (e.g., stampede accidents). Especially, the proposed attack leverages the extreme-density background information of input images to generate robust adversarial patches via a series of transformations (e.g., interpolation, rotation, etc.). We observe that by perturbing less than 6\% of image pixels, our attacks severely degrade the performance of crowd counting systems, both digitally and physically. To better enhance the adversarial robustness of crowd counting models, we propose the first regression model-based Randomized Ablation (RA), which is more sufficient than Adversarial Training (ADT) (Mean Absolute Error of RA is 5 lower than ADT on clean samples and 30 lower than ADT on adversarial examples). Extensive experiments on five crowd counting models demonstrate the effectiveness and generality of the proposed method. Code is available at \url{https://github.com/harrywuhust2022/Adv-Crowd-analysis}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge